Organizations working Apache Spark workloads, whether or not on Amazon EMR, AWS Glue, Amazon Elastic Kubernetes Service (Amazon EKS), or self-managed clusters, make investments numerous engineering hours in efficiency troubleshooting and optimization. When a vital extract, rework, and cargo (ETL) pipeline fails or runs slower than anticipated, engineers find yourself spending hours navigating via a number of interfaces comparable to logs or Spark UI, correlating metrics throughout completely different programs and manually analyzing execution patterns to determine root causes. Though Spark Historical past Server supplies detailed telemetry information, together with job execution timelines, stage-level metrics, and useful resource consumption patterns, accessing and deciphering this wealth of data requires deep experience in Spark internals and navigating via a number of interconnected internet interface tabs.

Immediately, we’re saying the open supply launch of Spark Historical past Server MCP, a specialised Mannequin Context Protocol (MCP) server that transforms this workflow by enabling AI assistants to entry and analyze your current Spark Historical past Server information via pure language interactions. This mission, developed collaboratively by AWS open supply and Amazon SageMaker Knowledge Processing, turns complicated debugging periods into conversational interactions that ship sooner, extra correct insights with out requiring adjustments to your present Spark infrastructure. You should use this MCP server along with your self-managed or AWS managed Spark Historical past Servers to research Spark functions working within the cloud or on-premises deployments.

Understanding Spark observability problem

Apache Spark has develop into the usual for large-scale information processing, powering vital ETL pipelines, real-time analytics, and machine studying (ML) workloads throughout 1000’s of organizations. Constructing and sustaining Spark functions is, nonetheless, nonetheless an iterative course of, the place builders spend important time testing, optimizing, and troubleshooting their code. Spark software builders centered on information engineering and information integration use circumstances typically encounter important operational challenges due to a couple completely different causes:

- Complicated connectivity and configuration choices to quite a lot of sources with Spark – Though this makes it a well-liked information processing platform, it typically makes it difficult to search out the basis explanation for inefficiencies or failures when Spark configurations aren’t optimally or appropriately configured.

- Spark’s in-memory processing mannequin and distributed partitioning of datasets throughout its staff – Though good for parallelism, this typically makes it tough for customers to determine inefficiencies. This leads to gradual software execution or root explanation for failures brought on by useful resource exhaustion points comparable to out of reminiscence and disk exceptions.

- Lazy analysis of Spark transformations – Though lazy analysis optimizes efficiency, it makes it difficult to precisely and shortly determine the appliance code and logic that prompted the failure from the distributed logs and metrics emitted from completely different executors.

Spark Historical past Server

Spark Historical past Server supplies a centralized internet interface for monitoring accomplished Spark functions, serving complete telemetry information together with job execution timelines, stage-level metrics, job distribution, executor useful resource consumption, and SQL question execution plans. Though Spark Historical past Server assists builders for efficiency debugging, code optimization, and capability planning, it nonetheless has challenges:

- Time-intensive handbook workflows – Engineers spend hours navigating via the Spark Historical past Server UI, switching between a number of tabs to correlate metrics throughout jobs, levels, and executors. Engineers should continually swap between the Spark UI, cluster monitoring instruments, code repositories, and documentation to piece collectively an entire image of software efficiency, which frequently takes days.

- Experience bottlenecks – Efficient Spark debugging requires deep understanding of execution plans, reminiscence administration, and shuffle operations. This specialised data creates dependencies on senior engineers and limits staff productiveness.

- Reactive problem-solving – Groups sometimes uncover efficiency points solely after they impression manufacturing programs. Handbook monitoring approaches don’t scale to proactively determine degradation patterns throughout lots of of day by day Spark jobs.

How MCP transforms Spark observability

The Mannequin Context Protocol supplies a standardized interface for AI brokers to entry domain-specific information sources. Not like general-purpose AI assistants working with restricted context, MCP-enabled brokers can entry technical details about particular programs and supply insights primarily based on precise operational information relatively than generic suggestions.With the assistance of Spark Historical past Server accessible via MCP, as a substitute of manually gathering efficiency metrics from a number of sources and correlating them to grasp software habits, engineers can interact with AI brokers which have direct entry to all Spark execution information. These brokers can analyze execution patterns, determine efficiency bottlenecks, and supply optimization suggestions primarily based on precise job traits relatively than normal greatest practices.

Introduction to Spark Historical past Server MCP

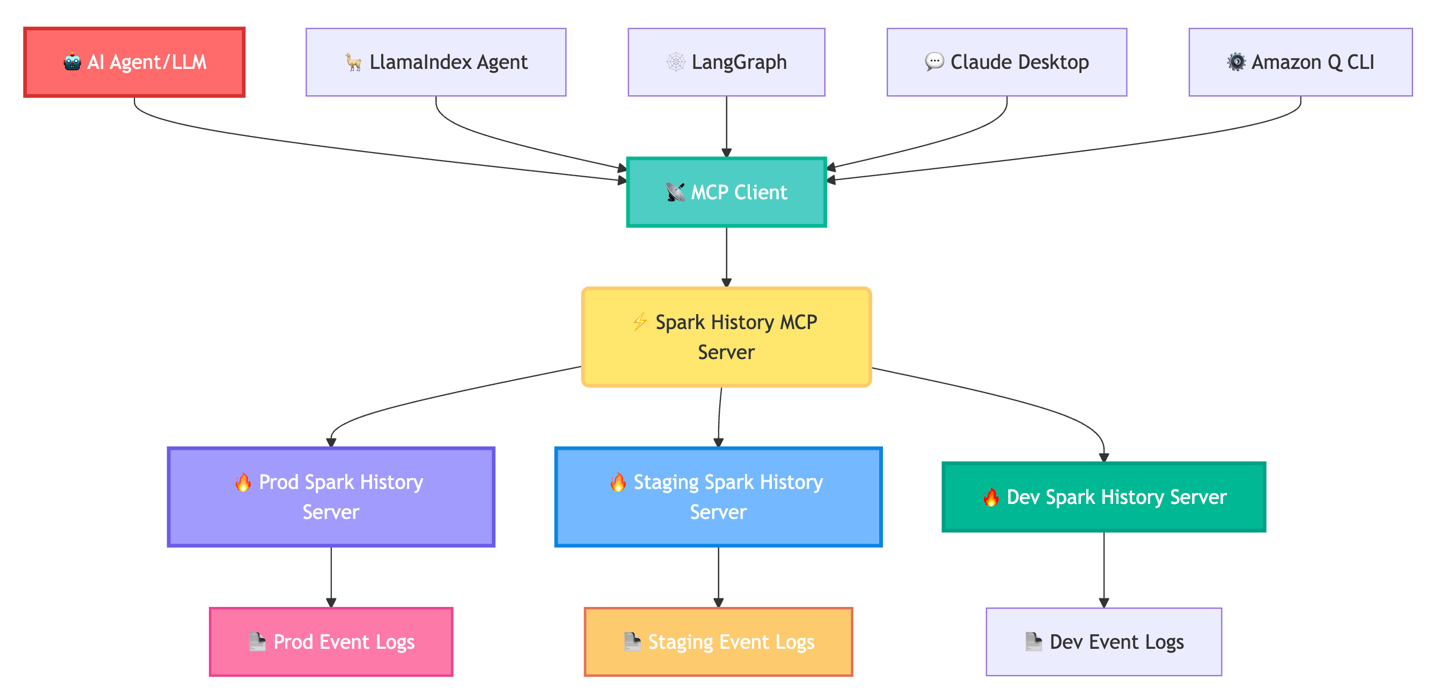

The Spark Historical past Server MCP is a specialised bridge between AI brokers and your current Spark Historical past Server infrastructure. It connects to a number of Spark Historical past Server cases and exposes their information via standardized instruments that AI brokers can use to retrieve software metrics, job execution particulars, and efficiency information.

Importantly, the MCP server features purely as a knowledge entry layer, enabling AI brokers comparable to Amazon Q Developer CLI, Claude desktop, Strands Brokers, LlamaIndex, and LangGraph to entry and motive about your Spark information. The next diagram exhibits this movement.

The Spark Historical past Server MCP instantly addresses these operational challenges by enabling AI brokers to entry Spark efficiency information programmatically. This transforms the debugging expertise from handbook UI navigation to conversational evaluation. As an alternative of hours within the UI, ask, “Why did job spark-abcd fail?” and obtain root trigger evaluation of the failure. This enables customers to make use of AI brokers for expert-level efficiency evaluation and optimization suggestions, with out requiring deep Spark experience.

The MCP server supplies complete entry to Spark telemetry throughout a number of granularity ranges. Software-level instruments retrieve execution summaries, useful resource utilization patterns, and success charges throughout job runs. Job and stage evaluation instruments present execution timelines, stage dependencies, and job distribution patterns for figuring out vital path bottlenecks. Job-level instruments expose executor useful resource consumption patterns and particular person operation timings for detailed optimization evaluation. SQL-specific instruments present question execution plans, be part of methods, and shuffle operation particulars for analytical workload optimization. You may overview the entire set of instruments obtainable within the MCP server within the mission README.

Find out how to use the MCP server

The MCP is an open customary that permits safe connections between AI functions and information sources. This MCP server implementation helps each Streamable HTTP and STDIO protocols for optimum flexibility.

The MCP server runs as an area service inside your infrastructure both on Amazon Elastic Compute Cloud (Amazon EC2) or Amazon EKS, connecting on to your Spark Historical past Server cases. You keep full management over information entry, authentication, safety, and scalability.

All of the instruments can be found with streamable HTTP and STDIO protocol:

- Streamable HTTP – Full superior instruments for LlamaIndex, LangGraph, and programmatic integrations

- STDIO mode – Core performance of Amazon Q CLI and Claude Desktop

For deployment, it helps a number of Spark Historical past Server cases and supplies deployments with AWS Glue, Amazon EMR, and Kubernetes.

Fast native setup

To arrange Spark Historical past MCP server domestically, execute the next instructions in your terminal:

For complete configuration examples and integration guides, seek advice from the mission README.

Integration with AWS managed companies

The Spark Historical past Server MCP integrates seamlessly with AWS managed companies, providing enhanced debugging capabilities for Amazon EMR and AWS Glue workloads. This integration adapts to numerous Spark Historical past Server deployments obtainable throughout these AWS managed companies whereas offering a constant, conversational debugging expertise:

- AWS Glue – Customers can use the Spark Historical past Server MCP integration with self-managed Spark Historical past Server on an EC2 occasion or launch domestically utilizing Docker container. Organising the mixing is easy. Comply with the step-by-step directions within the README to configure the MCP server along with your most well-liked Spark Historical past Server deployment. Utilizing this integration, AWS Glue customers can analyze AWS Glue ETL job efficiency no matter their Spark Historical past Server deployment strategy.

- Amazon EMR – Integration with Amazon EMR makes use of the service-managed Persistent UI function for EMR on Amazon EC2. The MCP server requires solely an EMR cluster Amazon Useful resource Title (ARN) to find the obtainable Persistent UI on the EMR cluster or robotically configure a brand new one for circumstances its lacking with token-based authentication. This eliminates the necessity for manually configuring Spark Historical past Server setup whereas offering safe entry to detailed execution information from EMR Spark functions. Utilizing this integration, information engineers can ask questions on their Spark workloads, comparable to “Are you able to get job bottle neck for spark-

? ” The MCP responds with detailed evaluation of execution patterns, useful resource utilization variations, and focused optimization suggestions, so groups can fine-tune their Spark functions for optimum efficiency throughout AWS companies.

For complete configuration examples and integration particulars, seek advice from the AWS Integration Guides.

Trying forward: The way forward for AI-assisted Spark optimization

This open-source launch establishes the muse for enhanced AI-powered Spark capabilities. This mission establishes the muse for deeper integration with AWS Glue and Amazon EMR to simplify the debugging and optimization expertise for purchasers utilizing these Spark environments. The Spark Historical past Server MCP is open supply underneath the Apache 2.0 license. We welcome contributions together with new software extensions, integrations, documentation enhancements, and deployment experiences.

Get began right this moment

Remodel your Spark monitoring and optimization workflow right this moment by offering AI brokers with clever entry to your efficiency information.

- Discover the GitHub repository

- Evaluation the great README for setup and integration directions

- Be a part of discussions and submit points for enhancements

- Contribute new options and deployment patterns

Acknowledgment: A particular because of everybody who contributed to the event and open-sourcing of the Apache Spark historical past server MCP: Vaibhav Naik, Akira Ajisaka, Wealthy Bowen, Savio Dsouza.

Concerning the authors

Manabu McCloskey is a Options Architect at Amazon Internet Providers. He focuses on contributing to open supply software supply tooling and works with AWS strategic prospects to design and implement enterprise options utilizing AWS sources and open supply applied sciences. His pursuits embrace Kubernetes, GitOps, Serverless, and Souls Collection.

Manabu McCloskey is a Options Architect at Amazon Internet Providers. He focuses on contributing to open supply software supply tooling and works with AWS strategic prospects to design and implement enterprise options utilizing AWS sources and open supply applied sciences. His pursuits embrace Kubernetes, GitOps, Serverless, and Souls Collection.

Vara Bonthu is a Principal Open Supply Specialist SA main Knowledge on EKS and AI on EKS at AWS, driving open supply initiatives and serving to AWS prospects to numerous organizations. He focuses on open supply applied sciences, information analytics, AI/ML, and Kubernetes, with intensive expertise in growth, DevOps, and structure. Vara focuses on constructing extremely scalable information and AI/ML options on Kubernetes, enabling prospects to maximise cutting-edge know-how for his or her data-driven initiatives

Vara Bonthu is a Principal Open Supply Specialist SA main Knowledge on EKS and AI on EKS at AWS, driving open supply initiatives and serving to AWS prospects to numerous organizations. He focuses on open supply applied sciences, information analytics, AI/ML, and Kubernetes, with intensive expertise in growth, DevOps, and structure. Vara focuses on constructing extremely scalable information and AI/ML options on Kubernetes, enabling prospects to maximise cutting-edge know-how for his or her data-driven initiatives

Andrew Kim is a Software program Improvement Engineer at AWS Glue, with a deep ardour for distributed programs structure and AI-driven options, specializing in clever information integration workflows and cutting-edge function growth on Apache Spark. Andrew focuses on re-inventing and simplifying options to complicated technical issues, and he enjoys creating internet apps and producing music in his free time.

Andrew Kim is a Software program Improvement Engineer at AWS Glue, with a deep ardour for distributed programs structure and AI-driven options, specializing in clever information integration workflows and cutting-edge function growth on Apache Spark. Andrew focuses on re-inventing and simplifying options to complicated technical issues, and he enjoys creating internet apps and producing music in his free time.

Shubham Mehta is a Senior Product Supervisor at AWS Analytics. He leads generative AI function growth throughout companies comparable to AWS Glue, Amazon EMR, and Amazon MWAA, utilizing AI/ML to simplify and improve the expertise of information practitioners constructing information functions on AWS.

Shubham Mehta is a Senior Product Supervisor at AWS Analytics. He leads generative AI function growth throughout companies comparable to AWS Glue, Amazon EMR, and Amazon MWAA, utilizing AI/ML to simplify and improve the expertise of information practitioners constructing information functions on AWS.

Kartik Panjabi is a Software program Improvement Supervisor on the AWS Glue staff. His staff builds generative AI options for the Knowledge Integration and distributed system for information integration.

Kartik Panjabi is a Software program Improvement Supervisor on the AWS Glue staff. His staff builds generative AI options for the Knowledge Integration and distributed system for information integration.

Mohit Saxena is a Senior Software program Improvement Supervisor on the AWS Knowledge Processing Workforce (AWS Glue and Amazon EMR). His staff focuses on constructing distributed programs to allow prospects with new AI/ML-driven capabilities to effectively rework petabytes of information throughout information lakes on Amazon S3, databases and information warehouses on the cloud.

Mohit Saxena is a Senior Software program Improvement Supervisor on the AWS Knowledge Processing Workforce (AWS Glue and Amazon EMR). His staff focuses on constructing distributed programs to allow prospects with new AI/ML-driven capabilities to effectively rework petabytes of information throughout information lakes on Amazon S3, databases and information warehouses on the cloud.