(Yurchanka-Siarhei/Shutterstock)

When Cloudflare reached the limits of what its existing ELT tool could do, the company had a decision to make. It could try to find an existing ELT tool that could handle its unique requirements, or it could build its own. After considering the options, Cloudflare chose to build its own big data pipeline framework, which it calls Jetflow.

Cloudflare is a trusted global provider of security, network, and content delivery solutions used by thousands of organizations around the world. It protects the privacy and security of millions of users every day, making the Internet a safer and more useful place.

With so many services, it’s not surprising to learn that the company piles up its share of data. Cloudflare operates a petabyte-scale data lake that’s filled with thousands of database tables every day from ClickHouse, Postgres, Apache Kafka, and other data repositories, the company said in a blog post last week.

“These tasks are often complex and tables may have hundreds of millions or billions of rows of new data each day,” the Cloudflare engineers wrote in the blog. “In total, about 141 billion rows are ingested every day.”

When the volume and complexity of data transformations exceeded the capability of its existing ELT product, Cloudflare decided to replace it with something that could handle the load. After evaluating the market for ELT solutions, Cloudflare realized that nothing commonly available was going to fit the bill.

“It became clear that we needed to build our own framework to cope with our unique requirements–and so Jetflow was born,” the Cloudflare engineers wrote.

Before laying down the first bits, the Cloudflare team set out its requirements. The company needed to move data into its data lake in a streaming fashion, because runs of the previous batch-oriented system sometimes exceeded 24 hours, preventing daily updates. The amount of compute and memory consumed also needed to come down.

Backwards compatibility and flexibility were also paramount. “Due to our usage of Spark downstream and Spark’s limitations in merging disparate Parquet schemas, the chosen solution had to offer the flexibility to generate the precise schemas needed for each case to match legacy,” the engineers wrote. Integration with its metadata system was also required.

Cloudflare also wanted the new ELT tool’s configuration files to be version controlled, and not to become a bottleneck when many changes are made concurrently. Ease of use was another consideration, as the company planned to have people with different roles and technical abilities use it.

“Users should not have to worry about availability or translation of data types between source and target systems, or writing new code for each new ingestion,” they wrote. “The configuration needed should also be minimal–for example, data schema should be inferred from the source system and not need to be supplied by the user.”

At the same time, Cloudflare wanted the new ELT tool to be customizable, with the option of tuning the system to handle specific use cases, such as allocating more resources to writing Parquet files (which is a more resource-heavy task than reading Parquet files). The engineers also wanted to be able to spin up concurrent workers in different threads, different containers, or on different machines, on an as-needed basis.

Finally, they wanted the new ELT tool to be testable. Engineers wanted users to be able to write tests for every stage of the data pipeline to ensure that all edge cases are accounted for before promoting a pipeline into production.

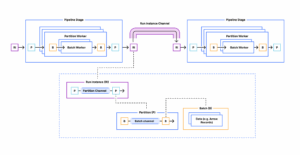

The resulting Jetflow framework is a streaming data transformation system that’s broken down into consumers, transformers, and loaders. The data pipeline is defined in a YAML file, and the three stages can be independently tested.
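The consumer, transformer, and loader split can be illustrated with a minimal Python sketch built on generators. This is not Jetflow's code: the function names, the list-of-dicts batch shape, and the in-memory sink are all assumptions made for illustration. It shows only how three stages can be chained into a stream while each stays testable on its own.

```python
from typing import Callable, Iterable, Iterator

Batch = list[dict]  # hypothetical batch shape: a list of row dicts


def consumer(source: Iterable[dict], batch_size: int) -> Iterator[Batch]:
    """Read from a source and emit fixed-size batches as they fill up."""
    batch: Batch = []
    for row in source:
        batch.append(row)
        if len(batch) == batch_size:
            yield batch
            batch = []
    if batch:  # flush the final partial batch
        yield batch


def transformer(batches: Iterable[Batch], fn: Callable[[dict], dict]) -> Iterator[Batch]:
    """Apply a per-row function to each batch without buffering the stream."""
    for batch in batches:
        yield [fn(row) for row in batch]


def loader(batches: Iterable[Batch], sink: list) -> int:
    """Write each batch to the sink and return the number of rows loaded."""
    loaded = 0
    for batch in batches:
        sink.extend(batch)
        loaded += len(batch)
    return loaded


def run_pipeline(source, fn, sink, batch_size=2):
    """Chain the three stages; batches flow through one at a time."""
    return loader(transformer(consumer(source, batch_size), fn), sink)


rows = [{"id": i} for i in range(5)]
sink: list = []
count = run_pipeline(rows, lambda r: {**r, "doubled": r["id"] * 2}, sink)
```

Because each stage only takes and returns an iterable of batches, a test can feed a stage a hand-built input and check its output without running the other two.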

The company designed Jetflow’s parallel data processing capabilities to be idempotent (meaning that repeating a run produces the same result), both on whole pipeline re-runs and on retries of updates to any particular table following an error. It also features a batch mode, which chunks large data sets into smaller pieces for more efficient parallel stream processing, the engineers write.
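One common way to get both properties is to split the work into deterministic chunks and write each chunk under a key derived from its bounds, so a retry overwrites rather than appends. The Python sketch below illustrates that idea only; the source does not describe how Jetflow implements it, and `chunk_ranges`, `load_chunk`, and the dict-backed store are hypothetical.

```python
def chunk_ranges(start: int, end: int, chunk_size: int) -> list[tuple[int, int]]:
    """Split the half-open range [start, end) into consecutive chunks."""
    return [(lo, min(lo + chunk_size, end)) for lo in range(start, end, chunk_size)]


def load_chunk(store: dict, chunk: tuple[int, int], rows: list[int]) -> None:
    """Write a chunk under a key derived from its bounds.

    Re-running the same chunk replaces its earlier output instead of
    appending to it, which is what makes a retry safe.
    """
    store[chunk] = list(rows)


def run(store: dict, start: int, end: int, chunk_size: int) -> None:
    """Process every chunk; chunks are independent and could run in parallel."""
    for lo, hi in chunk_ranges(start, end, chunk_size):
        load_chunk(store, (lo, hi), list(range(lo, hi)))


store: dict = {}
run(store, 0, 10, 4)
first = dict(store)
run(store, 0, 10, 4)  # a full re-run leaves the store unchanged
```

Had `load_chunk` appended rows to a shared list instead, the second run would have doubled the data, which is the failure idempotency is meant to rule out.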

One of the biggest questions the Cloudflare engineers faced was how to ensure compatibility between the various Jetflow stages. Initially the engineers wanted to create a custom type system that would allow stages to output data in multiple data formats. That turned into a “painful learning experience,” the engineers wrote, and led them to keep each stage extractor class working with just one data format.

The engineers selected Apache Arrow as the framework’s internal, in-memory data format. Instead of the inefficient process of reading row-based data and then converting it into the columnar format used to generate Parquet files (the primary data format for its data lake), Cloudflare makes an effort to ingest data in columnar formats in the first place.

This paid dividends for moving data from its ClickHouse data warehouse into the data lake. Instead of reading data using ClickHouse’s RowBinary format, Jetflow reads data using ClickHouse’s Blocks format. By using the low-level ch-go library, Jetflow is able to ingest millions of rows of data per second using a single ClickHouse connection.
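The difference between the two read paths can be sketched in plain Python. This is a toy model, not ClickHouse's wire format or the ch-go API: the dict-of-lists block shape and both function names are assumptions. It shows why row-based input forces a per-value pivot into columns, while column blocks can be appended in bulk.

```python
def rows_to_columns(rows: list[tuple], names: list[str]) -> dict[str, list]:
    """Row-based path: every value is visited once to pivot it into a column."""
    columns: dict[str, list] = {name: [] for name in names}
    for row in rows:
        for name, value in zip(names, row):
            columns[name].append(value)
    return columns


def append_blocks(blocks: list[dict[str, list]], names: list[str]) -> dict[str, list]:
    """Columnar path: each block already holds whole columns, so ingest is
    one bulk extend per column per block, with no per-row pivot."""
    columns: dict[str, list] = {name: [] for name in names}
    for block in blocks:
        for name in names:
            columns[name].extend(block[name])
    return columns


names = ["id", "status"]
rows = [(1, "ok"), (2, "ok"), (3, "err"), (4, "ok")]
blocks = [
    {"id": [1, 2], "status": ["ok", "ok"]},
    {"id": [3, 4], "status": ["err", "ok"]},
]
from_rows = rows_to_columns(rows, names)
from_blocks = append_blocks(blocks, names)
```

Both paths end with the same columns; the row path does work proportional to rows times columns in the inner loop, while the block path does one bulk operation per column per block.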

“A valuable lesson learned is that as with all software, tradeoffs are often made for the sake of convenience or a common use case that may not match your own,” the Cloudflare engineers wrote. “Most database drivers tend not to be optimized for reading large batches of rows, and have high per-row overhead.”

The Cloudflare team also made a strategic decision about how to use its Postgres database driver. They use the jackc/pgx driver, but bypassed the database/sql Scan interface in favor of receiving raw data for each row and using the jackc/pgx internal scan functions for each Postgres OID. The resulting speedup allows Cloudflare to ingest about 600,000 rows per second with low memory usage, the engineers wrote.

Currently, Jetflow is being used to ingest 77 billion records per day into the Cloudflare data lake. When the migration is complete, it will be handling 141 billion records per day. “The framework has allowed us to ingest tables in cases that would not otherwise have been possible, and provided significant cost savings due to ingestions running for less time and with fewer resources,” the engineers write.

The company plans to open source Jetflow at some point in the future.
