There are a number of benchmarks that probe the frontier of agent capabilities (GDPval, Humanity’s Final Examination (HLE), ARC-AGI-2), however we don’t discover them consultant of the sorts of duties which are necessary to our clients. To fill this hole, we have created and are open-sourcing OfficeQA—a benchmark that proxies for economically invaluable duties carried out by Databricks’ enterprise clients. We concentrate on a quite common but difficult enterprise process: Grounded Reasoning, which includes answering questions based mostly on complicated proprietary datasets that embrace unstructured paperwork and tabular information.

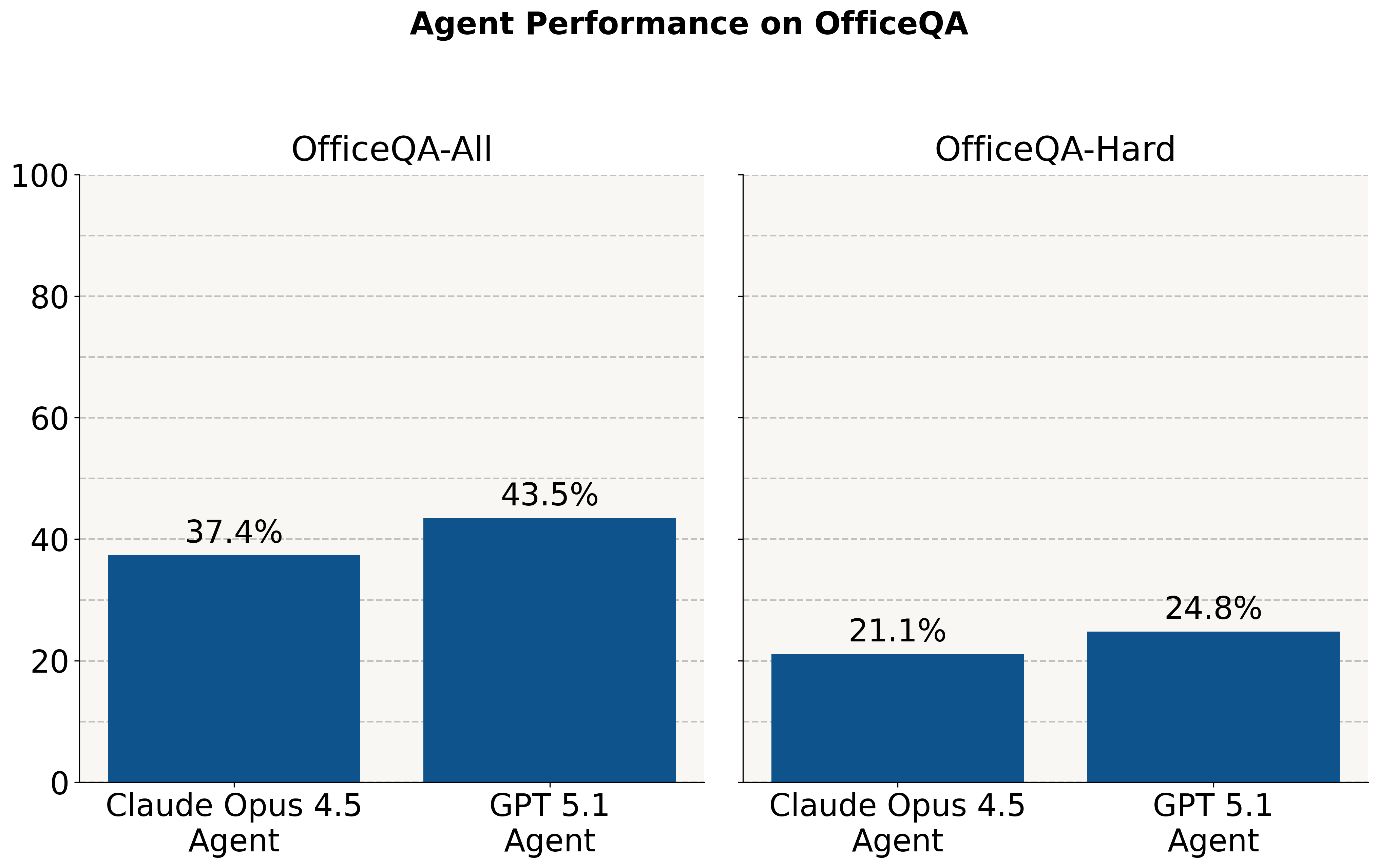

Regardless of frontier fashions performing effectively on Olympiad-style questions, we discover they nonetheless battle on these economically necessary duties. With out entry to the corpus, they reply ~2% of questions accurately. When supplied with a corpus of PDF paperwork, brokers carry out at <45% accuracy throughout all questions and <25% on a subset of the toughest questions.

On this publish, we first describe OfficeQA and our design ideas. We then consider current AI agent options — together with a GPT-5.1 Agent utilizing OpenAI’s File Search & Retrieval API and a Claude Opus 4.5 Agent utilizing Claude’s Agent SDK — on the benchmark. We experiment with utilizing Databricks’ ai_parse_document to parse OfficeQA’s corpus of PDFs, and discover that this delivers important features. Even with these enhancements, we discover that each one programs nonetheless fall in need of 70% accuracy on the complete benchmark and solely attain round 40% accuracy on the toughest cut up, indicating substantial room for enchancment on this process. Lastly, we announce the Databricks Grounded Reasoning Cup, a contest in Spring 2026 the place AI brokers will compete towards human groups to drive innovation on this area.

Dataset Desiderata

We had a number of key objectives in constructing OfficeQA. First, questions ought to be difficult as a result of they require cautious work—precision, diligence, and time—not as a result of they demand PhD-level experience. Second, every query will need to have a single, clearly appropriate reply that may be checked routinely towards floor fact, so programs could be educated and evaluated with none human or LLM judging. Lastly and most significantly, the benchmark ought to precisely mirror frequent issues that enterprise clients face.

We distilled frequent enterprise issues into three major parts:

- Doc complexity: Enterprises have massive collections of supply supplies—equivalent to scans, PDFs, or images—that usually include substantial numerical or tabular information.

- Info retrieval and aggregation: They should effectively search, extract, and mix info throughout many such paperwork.

- Analytical reasoning and query answering: They require programs able to answering questions and performing analyses grounded in these paperwork, typically involving calculations or exterior data.

We additionally be aware that many enterprises demand extraordinarily excessive precision when performing these duties. Shut just isn’t adequate. Being off by one on a product or bill quantity can have catastrophic downstream outcomes. Forecasting income and being off by 5% can result in dramatically incorrect enterprise choices.

|

Present benchmarks don’t meet our wants: |

||

|

|

|

Instance |

|

GDPVal |

Duties are clear examples of economically invaluable duties, however most don’t particularly take a look at for issues our clients care about. Professional human judging is beneficial. This benchmark additionally gives solely the set of paperwork wanted to reply every query instantly, which doesn’t enable for analysis of agent retrieval capabilities over a big corpus. |

“You’re a Music Producer in Los Angeles in 2024. You’re employed by a shopper to create an instrumental observe for a music video for a tune referred to as ‘Deja Vu’” |

|

ARC-AGI-2 |

Duties are so summary as to be divorced from the connection to actual world economically invaluable duties – they contain summary visible manipulation of coloured grids. Very small, specialised fashions are able to matching the efficiency of far bigger (1000x) common goal LLMs. |

|

|

Humanity’s Final Examination (HLE) |

Not clearly consultant of most economically invaluable work, and definitely not consultant of the workloads of Databricks’ clients. Questions require PhD-level experience and no single human is probably going in a position to reply all of the questions. |

“Compute the lowered twelfth dimensional Spin bordism of the classifying area of the Lie group G2. “Lowered” means you could ignore any bordism lessons that may be represented by manifolds with trivial principal G2 bundle.” |

Introducing the OfficeQA Benchmark

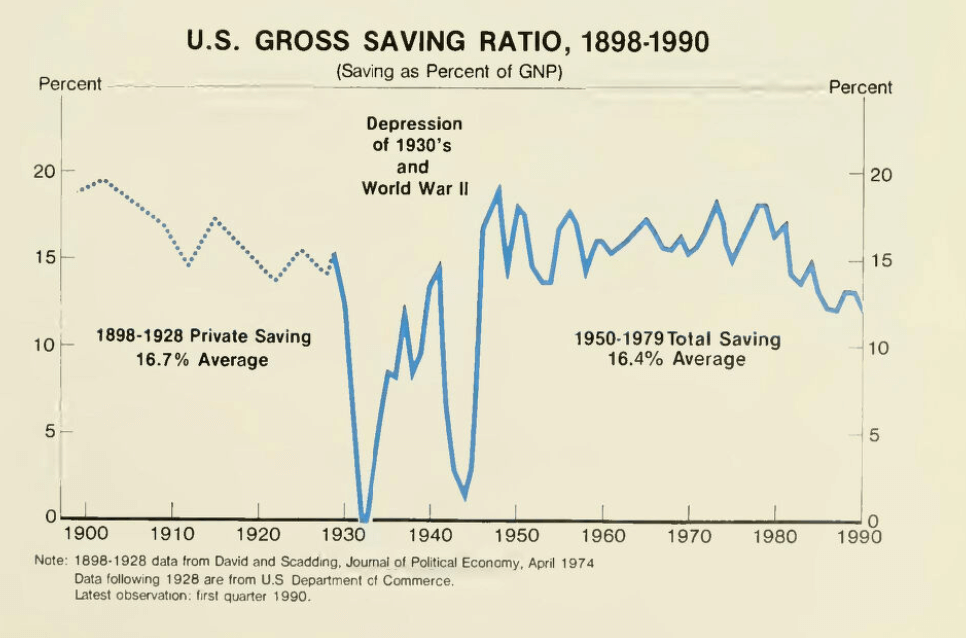

We introduce OfficeQA, a dataset approximating proprietary enterprise corpora, however freely obtainable and supporting a wide range of numerous and attention-grabbing questions. We leverage the U.S. Treasury Bulletins to create this benchmark, traditionally revealed month-to-month for 5 many years starting in 1939 and quarterly thereafter. Every bulletin is 100-200 pages lengthy and consists of prose, many complicated tables, charts and figures describing the operations of the U.S. Treasury – the place cash got here from, the place it’s, the place it went and the way it financed operations. The full dataset includes ~89,000 pages. Till 1996, the bulletins have been scans of bodily paperwork and afterwards, digitally produced PDFs.

We additionally see worth in making this historic Treasury information extra accessible to the general public, researchers, and lecturers. USAFacts is a corporation that naturally shares this imaginative and prescient, on condition that its core mission is “to make authorities information simpler to entry and perceive.” They partnered with us to develop this benchmark, figuring out the Treasury Bulletins as a perfect dataset and guaranteeing our questions mirrored reasonable use circumstances for these paperwork.

In step with our objective that the questions ought to be answerable by non-expert people, not one of the questions require greater than highschool math operations. We do count on most people would want to search for a few of the monetary or statistical phrases through the net.

Dataset Overview

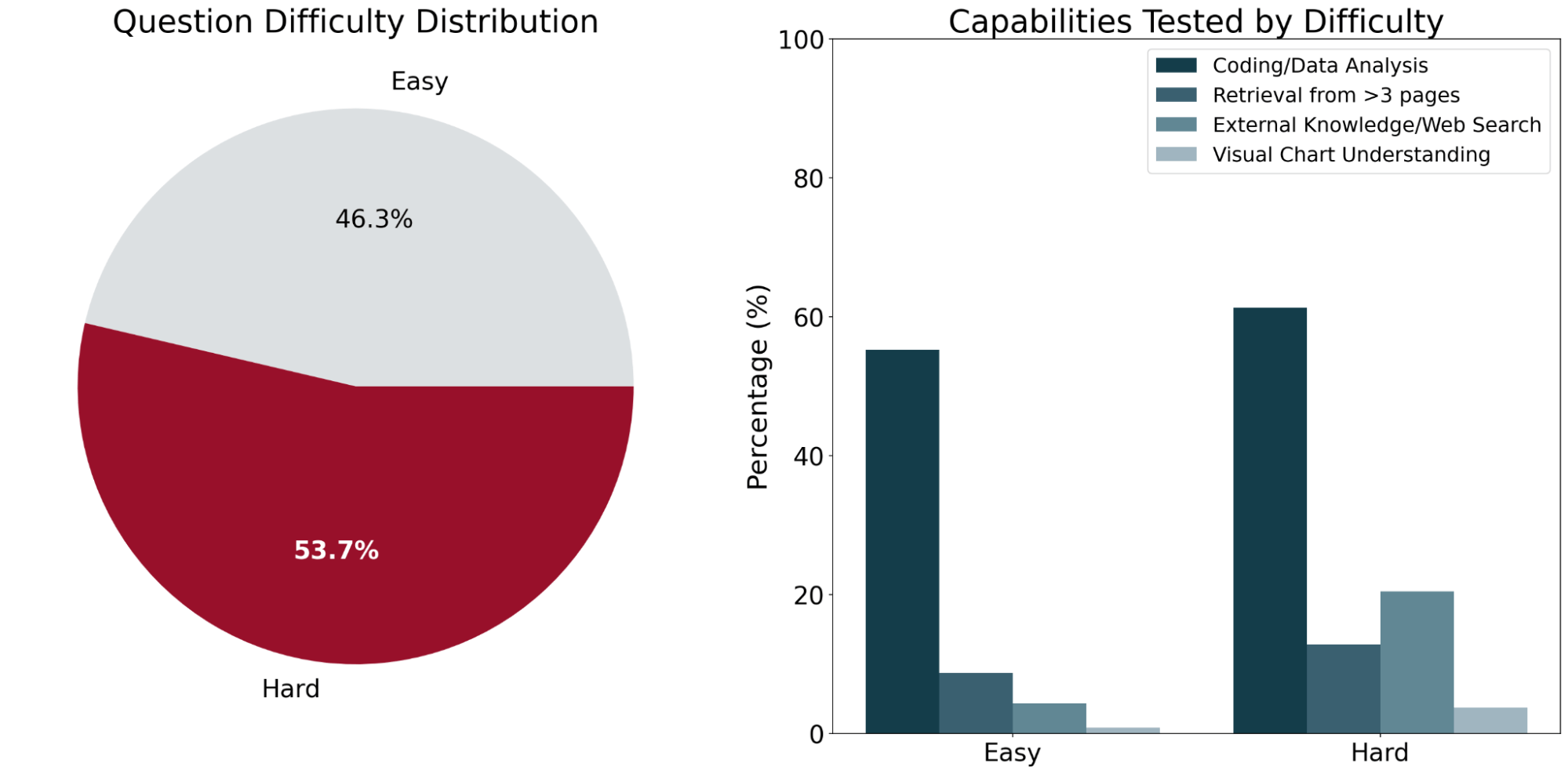

OfficeQA consists of 246 questions organized into two problem ranges – straightforward and arduous – based mostly on the efficiency of current AI programs on the questions. “Straightforward” questions are outlined as questions that each of the frontier agent programs (detailed under) bought appropriate, and “Arduous” questions are questions that not less than one of many brokers answered incorrectly.

The questions on common require info from ~2 completely different Treasury Bulletin paperwork. Throughout a consultant pattern of the benchmark, human solvers averaged a completion time of fifty minutes per query. Nearly all of this time was spent finding the knowledge required to reply the query throughout quite a few tables and figures throughout the corpus.

To make sure the questions in OfficeQA required document-grounded retrieval, we made greatest effort to filter out any questions that LLMs may reply accurately with out entry to the supply paperwork (i.e., could possibly be answered through a mannequin’s parametric data or internet search). Most of those filtered questions tended to be easier, or ask about extra common details, like “Within the fiscal yr that George H.W. Bush first turned president, which U.S federal belief fund had the most important improve in funding?”

Curiously, there have been a number of seemingly extra complicated questions that fashions have been in a position to reply with parametric data alone like “Conduct a two-sample t-test to find out whether or not the imply U.S Treasury bond rate of interest modified between 1942–1945 (earlier than the top of World Warfare II) and 1946–1949 (after the top of World Warfare II) on the 5% significance stage. What’s the calculated t-statistic, rounded to the closest hundredth?” On this case, the mannequin leverages historic monetary information that have been memorized throughout pre-training after which computes the ultimate worth accurately. Examples like these have been filtered from the ultimate benchmark.

Instance OfficeQA Questions

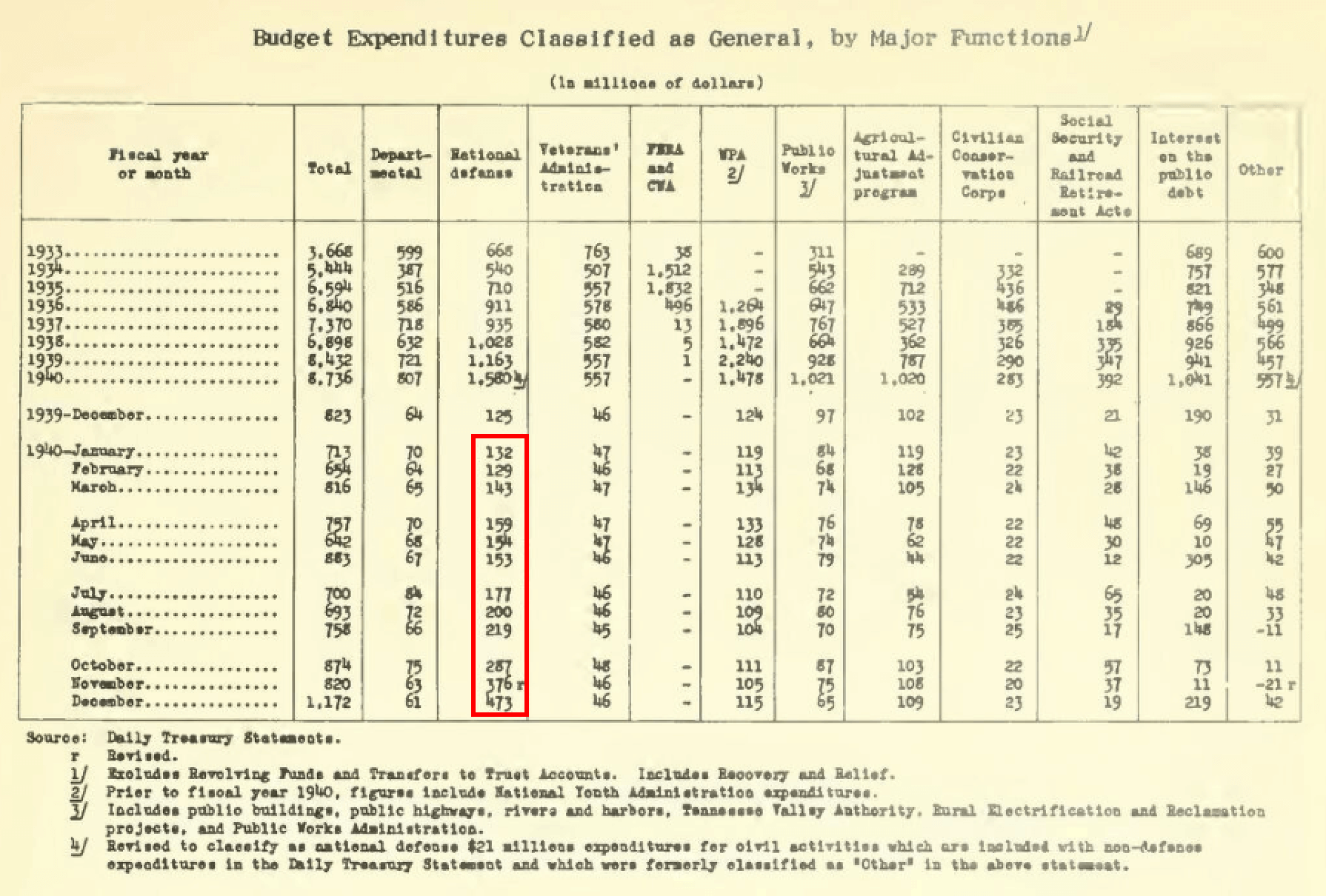

Straightforward: “What have been the entire expenditures (in hundreds of thousands of nominal {dollars}) for U.S nationwide protection within the calendar yr of 1940?”

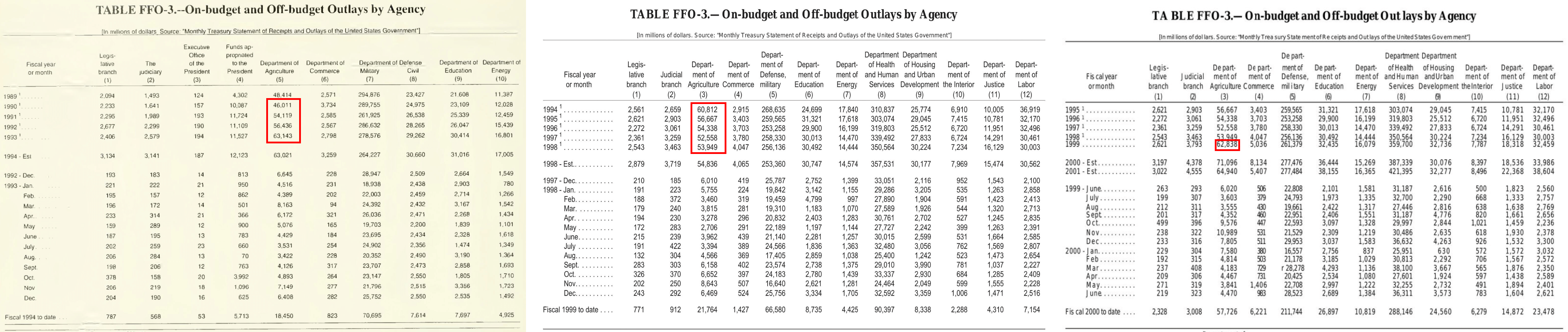

This requires a primary worth look-up, and summing of the values for the months within the specified calendar yr in a single desk (highlighted in purple). Observe that the totals for prior years are for fiscal and never calendar years.

Arduous: “Predict the entire outlays of the US Division of Agriculture in 1999 utilizing annual information from the years 1990-1998 (inclusive). Use a primary linear regression match to supply the slope and y-intercept. Deal with 1990 as yr “0” for the time variable. Carry out all calculations in nominal {dollars}. You do not want to take into consideration postyear changes. Report all values inside sq. brackets, separated by commas, with the primary worth because the slope rounded to the closest hundredth, the second worth because the y-intercept rounded to the closest complete quantity and the third worth as the expected worth rounded to the closest complete quantity.”

This requires discovering info whereas navigating throughout a number of paperwork (pictured above), and includes extra superior reasoning and statistical calculation with detailed answering pointers.

Baseline Brokers: Implementation and Efficiency

We consider the next baselines1:

- GPT-5.1 Agent with File Search: We use GPT-5.1, configured with reasoning_effort=excessive, through the OpenAI Responses API and provides it entry to instruments like file search and internet search. The PDFs are uploaded to the OpenAI Vector Retailer, the place they’re routinely parsed and listed. We additionally experiment with offering the Vector Retailer with pre-parsed paperwork utilizing ai_parse_document.

- Claude Opus 4.5 Agent: We use Claude’s Agent Python SDK with Claude Opus 4.5 as a backend (default considering=excessive) and configure this agent with the SDK-offered autonomous capabilities like context administration and a built-in device ecosystem containing instruments like file search (learn, grep, glob, and so on.), internet search, programming execution and different device functionalities. Because the Claude Agent SDK didn’t present its personal built-in parsing resolution, we experimented with (1) offering the agent with the PDFs saved in an area folder sandbox and talent to put in PDF reader packages like

pdftotextandpdfplumber, and (2) offering the agent with pre-parsed paperwork utilizing ai_parse_document. - LLM with Oracle PDF Web page(s): We consider Claude Opus 4.5 and GPT 5.1 by instantly offering the mannequin with the precise oracle PDF(s) web page(s) required for answering the query. It is a non-agentic baseline that measures how effectively LLMs can carry out with the supply materials obligatory for reasoning and deriving the proper response, representing an higher certain of efficiency assuming an oracle retrieval system.

- LLM with Oracle Parsed PDF Web page(s): We additionally take a look at offering Claude Opus 4.5 and GPT-5.1 instantly with the pre-parsed Oracle PDF web page(s) required to reply the query, which have been parsed utilizing ai_parse_document.

For all experiments, we take away any current OCR layer from the U.S. Treasury Bulletin PDFs on account of their low accuracy. This ensures truthful analysis of every agent’s capability to extract and interpret info instantly from the scanned paperwork.

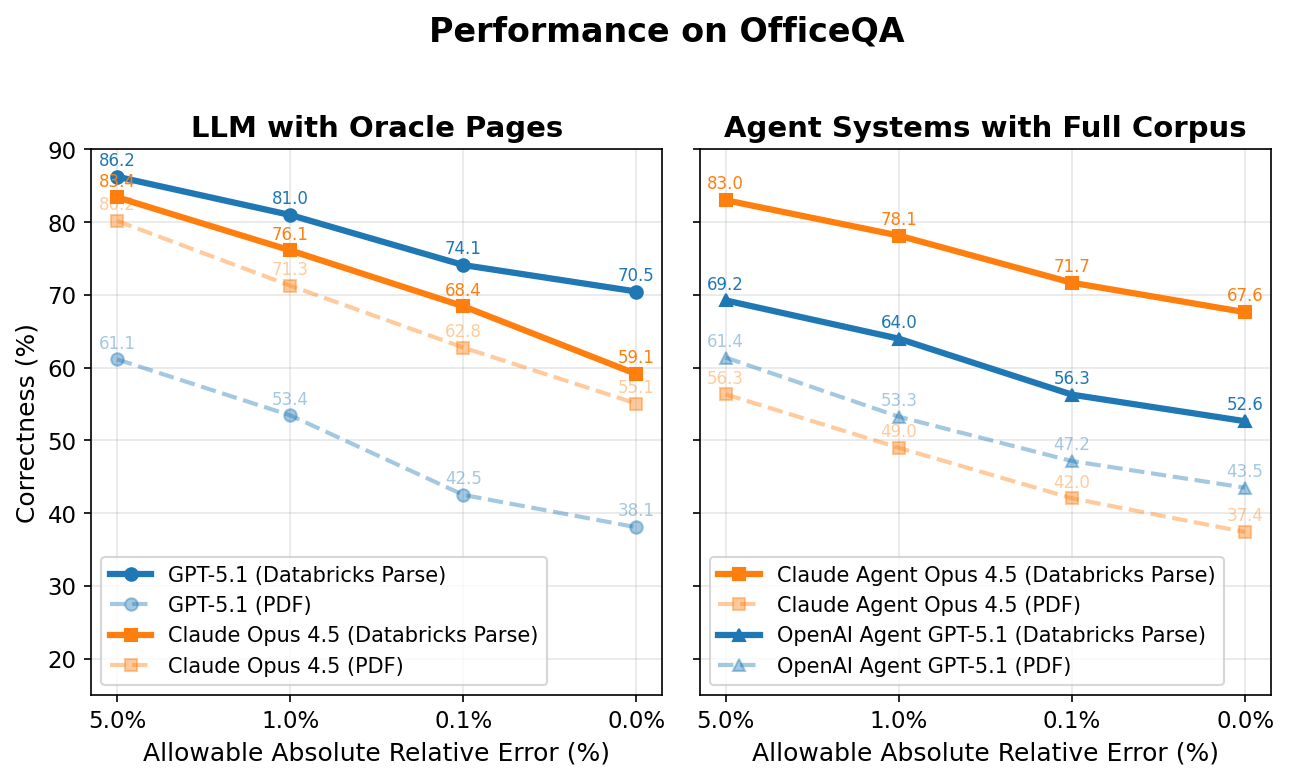

We plot the correctness of all of the brokers under on the y-axis whereas the x-axis is the allowable absolute relative error to be thought-about appropriate. For instance, if the reply to a query is ‘5.2 million’ and the agent solutions ‘5.1 million’ (1.9% off from the unique reply), the agent could be scored as appropriate at something above a 1.9% allowable absolute relative error, and incorrect at something <1.9%.

LLM with Oracle Web page(s)

Curiously, each Claude Opus 4.5 and GPT 5.1 carry out poorly even when offered instantly with the oracle PDF web page(s) wanted for every query. Nonetheless, when these identical pages are preprocessed utilizing Databricks ai_parse_document, efficiency jumps considerably—by +4.0 and +32.4 share factors for Claude Opus 4.5 and GPT 5.1 respectively (representing +7.5% and +85.0% relative will increase).

With parsing, the best-performing mannequin (GPT-5.1) reaches roughly 70% accuracy. The remaining ~30% hole stems from a number of components: (1) these non-agent baselines lack entry to instruments like internet search, which ~13% of questions require; (2) parsing and extraction errors from tables and charts happen; and (3) computational reasoning errors stay.

Agent Programs with Full Corpus

When supplied with the OfficeQA corpus instantly, each brokers reply over half of OfficeQA questions incorrectly – reaching a most efficiency of 43.5% at 0% allowable error. Offering brokers with paperwork parsed with Databricks ai_parse_document improves efficiency as soon as once more: the Claude 4.5 Opus Agent improves by +30.2 share factors and the GPT 5.1 Agent by +9.1 share factors (81.7% and 20.9% relative will increase, respectively).

Nonetheless, even the perfect agent – Claude Agent with Claude Opus 4.5 – nonetheless achieves lower than 70% p.c correctness at 0% allowable error with parsed paperwork, underscoring the issue of those duties for frontier AI programs. Attaining this larger efficiency additionally requires larger latency and related price. On common, the Claude Agent takes ~5 minutes to reply every query, whereas the lower-scoring OpenAI agent takes ~3 minutes.

As anticipated, correctness scores regularly improve when larger absolute relative errors are allowed. Such discrepancies come up from precision divergence, the place the brokers might use supply values which have slight variations that drift throughout cascading operations and produce small remaining deviations within the remaining reply. Errors embrace incorrect parsing (studying ‘508’ as ‘608’, for instance), misinterpretation of statistical values, or an agent’s lack of ability to retrieve related and correct info from the corpus. As an example, an agent produces an incorrect but shut reply to the bottom fact for this query: “What’s the sum of every yr’s complete Public debt securities excellent held by US Authorities accounts, in nominal hundreds of thousands of {dollars} recorded on the finish of the fiscal years 2005 to 2009 inclusive, returned as a single worth?” The agent finally ends up retrieving info from the June 2010 bulletin, however the related and proper values are discovered within the September 2010 publication (upon reported revisions), leading to a distinction of 21 million {dollars} (0.01% off from the bottom fact).

One other instance that ends in a bigger distinction is inside this query, “Carry out a time collection evaluation on the reported complete surplus/deficit values from calendar years 1989-2013, treating all values as nominal values in hundreds of thousands of US {dollars} after which match a cubic polynomial regression mannequin to estimate the anticipated surplus or deficit for calendar yr 2025 and report absolutely the distinction with the U.S. Treasury’s reported estimate rounded to the closest complete quantity in hundreds of thousands of {dollars}.”, an agent incorrectly retrieves the fiscal yr values as a substitute of the calendar yr values for 8 years, which modifications the enter collection used for the cubic regression and results in a unique 2025 prediction and absolute-difference end result that’s off by $286,831 million (31.6% off from the bottom fact).

Failure Modes

Whereas creating OfficeQA, we noticed a number of frequent failure modes of current AI programs:

- Parsing errors stay a elementary problem—complicated tables with nested column hierarchies, merged cells, and weird formatting usually end in misaligned or incorrectly extracted values. For instance, we noticed circumstances the place column shifts throughout automated extraction triggered numerical values to be attributed to the flawed headers totally.

- Reply ambiguity additionally poses difficulties: monetary paperwork just like the U.S. Treasury Bulletin are regularly revised and reissued, that means a number of respectable values might exist for a similar information level relying on which publication date the agent references. Brokers usually cease looking as soon as they discover a believable reply, lacking essentially the most authoritative or up-to-date supply, regardless of being prompted to seek out the most recent values.

- Visible understanding represents one other important hole. Roughly 3% of OfficeQA questions reference charts, graphs, or figures that require visible reasoning. Present brokers regularly fail on these duties, as proven within the instance under.

These remaining failure modes showcase that analysis progress remains to be wanted earlier than AI brokers can deal with the complete spectrum of enterprise in-domain reasoning duties.

Databricks Grounded Reasoning Cup

We’ll pit AI Brokers towards groups of people in Spring 2026 to see who can obtain the perfect outcomes on the OfficeQA benchmark.

- Timing: We’re focusing on San Francisco for the principle occasion, possible between late March and late April. Precise dates shall be launched shortly to those that join updates.

- In-Individual Finale: The highest groups shall be invited to San Francisco for the ultimate competitors.

We’re at present opening an curiosity record. Go to the hyperlink to get notified as quickly because the official guidelines, dates, and prize swimming pools are introduced. (Coming quickly!)

Conclusion

The OfficeQA benchmark represents a major step towards evaluating AI brokers on economically invaluable, real-world grounded reasoning duties. By grounding our benchmark within the U.S. Treasury Bulletins, a corpus of practically 89,000 pages spanning over eight many years, we’ve created a difficult testbed that requires brokers to parse complicated tables, retrieve info throughout many paperwork, and carry out analytical reasoning with excessive precision.

The OfficeQA benchmark is freely obtainable to the analysis neighborhood and could be discovered right here. We encourage groups to discover OfficeQA and current options on the benchmark as a part of the Databricks Grounded Reasoning Cup.

Authors: Arnav Singhvi, Krista Opsahl-Ong, Jasmine Collins, Ivan Zhou, Cindy Wang, Ashutosh Baheti, Jacob Portes, Sam Havens, Erich Elsen, Michael Bendersky, Matei Zaharia, Xing Chen.

We’d prefer to thank Dipendra Kumar Misra, Owen Oertell, Andrew Drozdov, Jonathan Chang, Simon Favreau-Lessard, Erik Lindgren, Pallavi Koppol, Veronica Lyu, in addition to SuperAnnotate and Turing for serving to to create the questions in OfficeQA.

Lastly, we’d additionally prefer to thank USAFacts for his or her steerage in figuring out the U.S. Treasury Bulletins and offering suggestions to make sure questions have been topical and related.

1 We tried to guage the not too long ago launched Gemini File Search Instrument API as a part of a consultant Gemini Agent baseline with Gemini 3. Nonetheless, about 30% of the PDFs and parsed PDFs within the OfficeQA corpus didn’t ingest, and the File Search Instrument is incompatible with the Google Search Instrument. Since this may restrict the agent from answering OfficeQA questions that want exterior data, we excluded this setup from our baseline analysis. We’ll revisit it as soon as ingestion works reliably so we are able to measure its efficiency precisely.