Last updated: December 17, 2025

Originally published: December 18, 2017

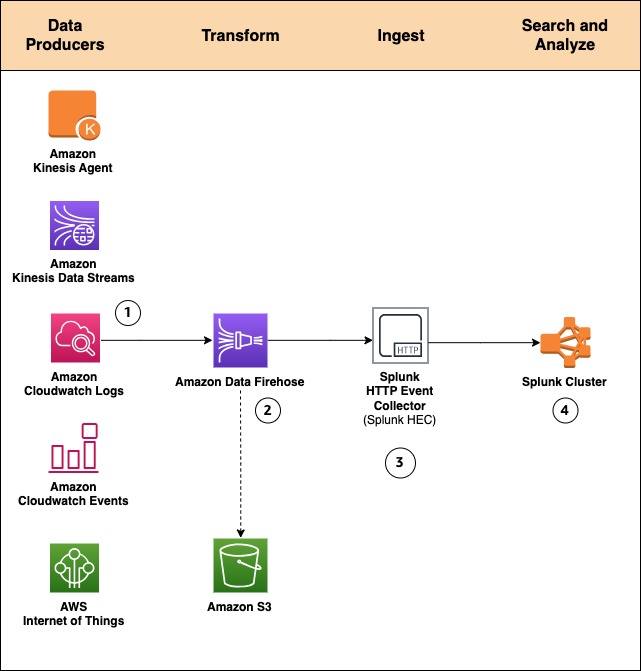

Amazon Data Firehose supports Splunk Enterprise and Splunk Cloud as a delivery destination. This native integration between Splunk Enterprise, Splunk Cloud, and Amazon Data Firehose is designed to make AWS data ingestion setup seamless, while offering a secure and fault-tolerant delivery mechanism. We want to enable customers to monitor and analyze machine data from any source and use it to deliver operational intelligence and optimize IT, security, and business performance.

With Amazon Data Firehose, customers can use a fully managed, reliable, and scalable data streaming solution to Splunk. In this post, we tell you a bit more about the Amazon Data Firehose and Splunk integration. We also show you how to ingest large amounts of data into Splunk using Amazon Data Firehose.

Push vs. Pull data ingestion

Presently, customers use a combination of two ingestion patterns, primarily based on data source and volume, in addition to existing company infrastructure and expertise:

- Pull-based approach: Using dedicated pollers running the popular Splunk Add-on for AWS to pull data from various AWS services such as Amazon CloudWatch or Amazon S3.

- Push-based approach: Streaming data directly from AWS to Splunk HTTP Event Collector (HEC) by using Amazon Data Firehose. Examples of applicable data sources include CloudWatch Logs and Amazon Kinesis Data Streams.

The pull-based approach offers data delivery guarantees such as retries and checkpointing out of the box. However, it requires more ops to manage and orchestrate the dedicated pollers, which are commonly running on Amazon EC2 instances. With this setup, you pay for the infrastructure even when it’s idle.

On the other hand, the push-based approach offers a low-latency, scalable data pipeline made up of serverless resources like Amazon Data Firehose sending directly to Splunk indexers (by using Splunk HEC). This approach translates into lower operational complexity and cost. However, if you need guaranteed data delivery, then you have to design your solution to handle issues such as a Splunk connection failure or Lambda execution failure. To do so, you might use, for example, AWS Lambda Dead Letter Queues.

How about getting the best of both worlds?

Let’s go over the new integration’s end-to-end solution and examine how Amazon Data Firehose and Splunk together expand the push-based approach into a native AWS solution for applicable data sources.

By using a managed service like Amazon Data Firehose for data ingestion into Splunk, we provide out-of-the-box reliability and scalability. One of the pain points of the old approach was the overhead of managing the data collection nodes (Splunk heavy forwarders). With the new Amazon Data Firehose to Splunk integration, there are no forwarders to manage or set up. Data producers (1) are configured through the AWS Management Console to drop data into Amazon Data Firehose.

You can also create your own data producers. For example, you can drop data into a Firehose delivery stream by using Amazon Kinesis Agent, or by using the Firehose API (PutRecord(), PutRecordBatch()), or by writing to a Kinesis Data Stream configured to be the data source of a Firehose delivery stream. For more details, refer to Sending Data to an Amazon Data Firehose Delivery Stream.
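As a rough illustration of a custom producer, the following Python sketch batches events for PutRecordBatch. It assumes a boto3 Firehose client is passed in by the caller; the function names, the stream name, and the newline-delimited JSON encoding are choices made for this example, not requirements of the integration. The batch limits (500 records and 4 MiB per call) are the documented PutRecordBatch limits.

```python
import json
from typing import Iterable, Iterator, List

# Documented PutRecordBatch limits: at most 500 records and 4 MiB per call.
MAX_RECORDS_PER_BATCH = 500
MAX_BYTES_PER_BATCH = 4 * 1024 * 1024


def to_firehose_records(events: Iterable[dict]) -> List[dict]:
    """Serialize each event as newline-terminated JSON (an encoding chosen for this sketch)."""
    return [{"Data": (json.dumps(event) + "\n").encode("utf-8")} for event in events]


def chunk_records(records: List[dict]) -> Iterator[List[dict]]:
    """Yield batches that respect both the record-count and the byte-size limit."""
    batch, batch_bytes = [], 0
    for record in records:
        size = len(record["Data"])
        too_many = len(batch) >= MAX_RECORDS_PER_BATCH
        too_big = batch_bytes + size > MAX_BYTES_PER_BATCH
        if batch and (too_many or too_big):
            yield batch
            batch, batch_bytes = [], 0
        batch.append(record)
        batch_bytes += size
    if batch:
        yield batch


def send_events(firehose_client, stream_name: str, events: Iterable[dict]) -> int:
    """Send events with PutRecordBatch and return how many records were rejected."""
    failed = 0
    for batch in chunk_records(to_firehose_records(events)):
        response = firehose_client.put_record_batch(
            DeliveryStreamName=stream_name, Records=batch
        )
        # PutRecordBatch can partially fail; the count is reported per call.
        failed += response.get("FailedPutCount", 0)
    return failed
```

You might call it as `send_events(boto3.client("firehose"), "my-stream", events)`, where `my-stream` is a placeholder. A nonzero return value means some records were rejected, so a production producer would inspect `RequestResponses` and retry those entries.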

You might need to transform the data before it goes into Splunk for analysis. For example, you might want to enrich it, or filter or anonymize sensitive data. You can do so using AWS Lambda and enabling data transformation in Amazon Data Firehose. In this scenario, Amazon Data Firehose is used to decompress the Amazon CloudWatch logs by enabling the feature.
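For cases where you do want a Lambda transformation, here is a minimal sketch of a handler that follows the Firehose data transformation contract: each input record carries a `recordId` and base64 `data`, and each output record echoes the `recordId` with a `result` of `Ok`, `Dropped`, or `ProcessingFailed`. The `client_ip` and `level` field names and the masking rule are hypothetical, chosen only to show enrichment, filtering, and anonymization in one place.

```python
import base64
import json


def mask_ip(address: str) -> str:
    """Hide the last octet of a dotted IPv4 address (illustrative anonymization)."""
    parts = address.split(".")
    if len(parts) != 4:
        return address
    return ".".join(parts[:3] + ["xxx"])


def lambda_handler(event, context):
    """Transform each Firehose record and report a per-record result."""
    output = []
    for record in event["records"]:
        record_id = record["recordId"]
        try:
            payload = json.loads(base64.b64decode(record["data"]))
            if not isinstance(payload, dict):
                raise ValueError("expected a JSON object")
        except ValueError:
            # Unparseable input: hand the record back untouched and flag it.
            output.append(
                {"recordId": record_id, "result": "ProcessingFailed", "data": record["data"]}
            )
            continue

        # Hypothetical filter: drop debug-level events before they reach Splunk.
        if payload.get("level") == "DEBUG":
            output.append({"recordId": record_id, "result": "Dropped", "data": record["data"]})
            continue

        # Hypothetical anonymization of a sensitive field.
        if "client_ip" in payload:
            payload["client_ip"] = mask_ip(payload["client_ip"])

        encoded = base64.b64encode(json.dumps(payload).encode("utf-8")).decode("utf-8")
        output.append({"recordId": record_id, "result": "Ok", "data": encoded})
    return {"records": output}
```

Every input record must appear exactly once in the response, which is why the failed and dropped branches still return the original `recordId`.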

Systems fail all the time. Let’s see how this integration handles external failures to guarantee data durability. In cases when Amazon Data Firehose can’t deliver data to the Splunk cluster, data is automatically backed up to an S3 bucket. You can configure this feature while creating the Firehose delivery stream (2). You can choose to back up all data or only the data that failed during delivery to Splunk.

In addition to using S3 for data backup, this Firehose integration with Splunk supports Splunk Indexer Acknowledgments to guarantee event delivery. This feature is configured on Splunk’s HTTP Event Collector (HEC) (3). It ensures that HEC returns an acknowledgment to Amazon Data Firehose only after data has been indexed and is available in the Splunk cluster (4).

Now let’s look at a hands-on exercise that shows how to forward VPC flow logs to Splunk.

How-to guide

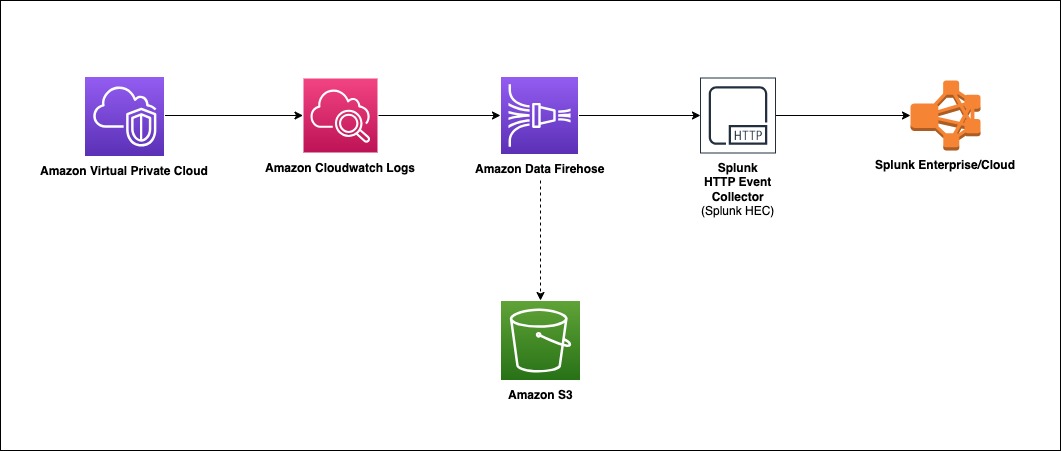

To process VPC flow logs, we implement the following architecture.

Amazon Virtual Private Cloud (Amazon VPC) delivers flow log files into an Amazon CloudWatch Logs group. Using a CloudWatch Logs subscription filter, we set up real-time delivery of CloudWatch Logs to an Amazon Data Firehose stream.

Data coming from CloudWatch Logs is compressed with gzip. To work with this compression, we enable decompression for the Firehose stream. Firehose then delivers the raw logs to the Splunk HTTP Event Collector (HEC).
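To make the compression step concrete, this short sketch shows what decompression has to undo. A CloudWatch Logs subscription delivers a gzip-compressed JSON document with a `messageType` and a list of `logEvents`, and the raw log lines live in each event's `message` field. The function name is ours, and the code only illustrates the payload shape; it is not how Firehose implements the feature.

```python
import gzip
import json


def extract_log_messages(compressed: bytes) -> list:
    """Decompress one CloudWatch Logs subscription payload and return its raw messages."""
    payload = json.loads(gzip.decompress(compressed))
    # CONTROL_MESSAGE payloads are connectivity checks and carry no log data.
    if payload.get("messageType") != "DATA_MESSAGE":
        return []
    return [event["message"] for event in payload.get("logEvents", [])]
```

With decompression enabled on the stream, you don't need to run code like this yourself; it is shown only so the payload shape is clear.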

If delivery to the Splunk HEC fails, Firehose deposits the logs into an Amazon S3 bucket. You can then ingest the events from S3 using an alternate mechanism such as a Lambda function.

When data reaches Splunk (Enterprise or Cloud), Splunk parsing configurations (packaged in the Splunk Add-on for Amazon Data Firehose) extract and parse all fields. They make data ready for querying and visualization using Splunk Enterprise and Splunk Cloud.
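To give a sense of what field extraction means for this data, the sketch below splits a flow log record in the default (version 2) format into named fields. It is written for illustration only and is not the add-on's implementation; the underscore field names are our own rendering of the documented field order.

```python
# Field order of the default VPC flow log format (version 2).
FIELDS = (
    "version", "account_id", "interface_id", "srcaddr", "dstaddr",
    "srcport", "dstport", "protocol", "packets", "bytes",
    "start", "end", "action", "log_status",
)
NUMERIC_FIELDS = {
    "version", "srcport", "dstport", "protocol", "packets", "bytes", "start", "end",
}


def parse_flow_log(line: str) -> dict:
    """Split one space-separated flow log record into a dict of named fields."""
    values = line.split()
    if len(values) != len(FIELDS):
        raise ValueError(f"expected {len(FIELDS)} fields, got {len(values)}")
    record = dict(zip(FIELDS, values))
    for name in NUMERIC_FIELDS:
        # NODATA and SKIPDATA records use "-" for fields that have no value.
        if record[name] != "-":
            record[name] = int(record[name])
    return record
```

For example, `parse_flow_log("2 123456789010 eni-1235b8ca123456789 172.31.16.139 172.31.16.21 20641 22 6 20 4249 1418530010 1418530070 ACCEPT OK")` yields a record whose `dstport` is `22` and whose `action` is `"ACCEPT"`.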

Walkthrough

Install the Splunk Add-on for Amazon Data Firehose

The Splunk Add-on for Amazon Data Firehose enables Splunk (be it Splunk Enterprise, Splunk App for AWS, or Splunk Enterprise Security) to use data ingested from Amazon Data Firehose. Install the Add-on on all the indexers with an HTTP Event Collector (HEC). The Add-on is available for download from Splunkbase. For troubleshooting help, refer to the AWS Data Firehose troubleshooting documentation and Splunk’s official troubleshooting guide.

HTTP Event Collector (HEC)

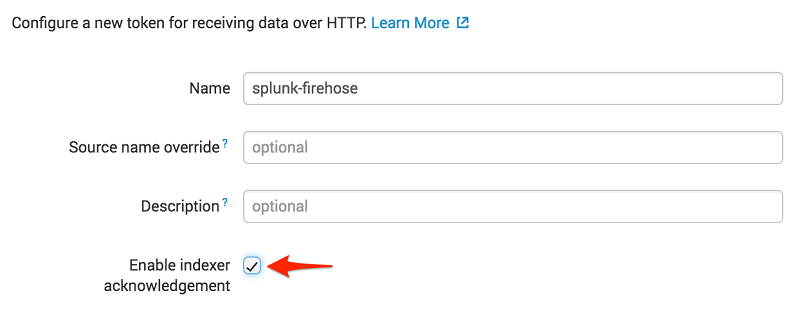

Before you can use Amazon Data Firehose to deliver data to Splunk, set up the Splunk HEC to receive the data. From Splunk Web, go to the Settings menu, choose Data Inputs, and choose HTTP Event Collector. Choose Global Settings, ensure All tokens is enabled, and then choose Save. Then choose New Token to create a new HEC endpoint and token. When you create a new token, make sure that Enable indexer acknowledgment is checked.

When prompted to select a source type, select aws:cloudwatchlogs:vpcflow.
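Before wiring up Firehose, it can help to confirm that the new token accepts events. The sketch below builds, but does not send, a test request for the HEC event endpoint. The host name and token are placeholders. Because indexer acknowledgment is enabled on the token, the request carries a channel identifier in the `X-Splunk-Request-Channel` header; the helper name `build_hec_request` is ours.

```python
import json
import urllib.request
import uuid


def build_hec_request(base_url, token, event, sourcetype, channel=None):
    """Build a POST request for the HEC event endpoint without sending it."""
    # Tokens with indexer acknowledgment enabled require a channel GUID.
    channel = channel or str(uuid.uuid4())
    body = json.dumps({"event": event, "sourcetype": sourcetype}).encode("utf-8")
    return urllib.request.Request(
        url=base_url.rstrip("/") + "/services/collector/event",
        data=body,
        method="POST",
        headers={
            "Authorization": "Splunk " + token,
            "X-Splunk-Request-Channel": channel,
            "Content-Type": "application/json",
        },
    )
```

To actually send it, pass the request to `urllib.request.urlopen`, for example with `build_hec_request("https://splunk.example.com:8088", "<your-token>", "hello", "aws:cloudwatchlogs:vpcflow")`. With acknowledgment enabled, the response should include an acknowledgment ID that you can later check against the `/services/collector/ack` endpoint on the same channel.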

Create an S3 backsplash bucket

To provide for situations in which Amazon Data Firehose can’t deliver data to the Splunk cluster, we use an S3 bucket to back up the data. You can configure this feature to back up all data or only the data that failed during delivery to Splunk.

Note: Bucket names are globally unique, so choose a name that is not already in use.

Create an Amazon Data Firehose delivery stream

On the AWS console, open the Amazon Data Firehose console, and choose Create Firehose Stream.

Select DirectPUT as the source and Splunk as the destination.

If you are using Firehose to send CloudWatch Logs and want to deliver decompressed data to your Firehose stream destination, or want to use Firehose Data Format Conversion (Parquet, ORC) or Dynamic partitioning, you must enable decompression for your Firehose stream. For details, check out Deliver decompressed Amazon CloudWatch Logs to Amazon S3 and Splunk using Amazon Data Firehose.

Enter your Splunk HTTP Event Collector (HEC) information in the destination settings.

Note: Amazon Data Firehose requires the Splunk HTTP Event Collector (HEC) endpoint to be terminated with a valid CA-signed certificate matching the DNS hostname used to connect to your HEC endpoint. You receive delivery errors if you are using a self-signed certificate.

In this example, we only back up logs that fail during delivery.

To monitor your Firehose delivery stream, enable error logging. Doing this means that you can monitor record delivery errors. Create an IAM role for the Firehose stream by choosing Create new, or Choose existing IAM role.

You now get a chance to review and adjust the Firehose stream settings. When you are satisfied, choose Create Firehose Stream.
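If you prefer to script this step, the console choices above map roughly onto the `CreateDeliveryStream` API. The sketch below only builds the request parameters. The helper name, the timeout and retry values, and the log group and log stream names are assumptions for illustration, and the decompression settings are left out, so treat it as a starting point rather than a complete configuration.

```python
def splunk_stream_config(stream_name, hec_endpoint, hec_token, backup_bucket_arn, role_arn):
    """Build CreateDeliveryStream parameters for a Direct PUT stream that targets Splunk."""
    return {
        "DeliveryStreamName": stream_name,
        "DeliveryStreamType": "DirectPut",
        "SplunkDestinationConfiguration": {
            "HECEndpoint": hec_endpoint,
            # "Raw" matches the raw log delivery used in this walkthrough; "Event" is the alternative.
            "HECEndpointType": "Raw",
            "HECToken": hec_token,
            # Illustrative values: how long to wait for an indexer acknowledgment,
            # and how long to keep retrying before the record goes to S3.
            "HECAcknowledgmentTimeoutInSeconds": 180,
            "RetryOptions": {"DurationInSeconds": 300},
            # Back up only the records that fail delivery, as chosen in this example.
            "S3BackupMode": "FailedEventsOnly",
            "S3Configuration": {"RoleARN": role_arn, "BucketARN": backup_bucket_arn},
            # Error logging; the log group and stream names are placeholders.
            "CloudWatchLoggingOptions": {
                "Enabled": True,
                "LogGroupName": "/aws/kinesisfirehose/" + stream_name,
                "LogStreamName": "SplunkDelivery",
            },
        },
    }
```

With boto3 installed and credentials configured, you could pass the result to `boto3.client("firehose").create_delivery_stream(**config)`.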

Create a VPC Flow Log

To send events from Amazon VPC, you need to set up a VPC flow log. If you already have a VPC flow log you want to use, you can skip to the “Publish CloudWatch to Amazon Data Firehose” section.

On the AWS console, open the Amazon VPC service. Then choose VPC, and choose the VPC you want to send flow logs from. Choose Flow Logs, and then choose Create Flow Log. If you don’t have an IAM role that allows your VPC to publish logs to CloudWatch, choose Create and use a new service role.
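The same flow log can be created through the EC2 API. This sketch assumes a boto3 EC2 client is passed in and that the IAM role already exists; the function name and the choice to capture all traffic are ours.

```python
def create_vpc_flow_log(ec2_client, vpc_id, log_group_name, role_arn):
    """Create a flow log that publishes a VPC's traffic records to CloudWatch Logs."""
    response = ec2_client.create_flow_logs(
        ResourceIds=[vpc_id],
        ResourceType="VPC",
        TrafficType="ALL",  # capture accepted and rejected traffic
        LogDestinationType="cloud-watch-logs",
        LogGroupName=log_group_name,
        DeliverLogsPermissionArn=role_arn,
    )
    # The API reports per-resource failures instead of raising for them.
    if response.get("Unsuccessful"):
        raise RuntimeError(f"Flow log creation failed: {response['Unsuccessful']}")
    return response["FlowLogIds"]
```

A call such as `create_vpc_flow_log(boto3.client("ec2"), "<your-vpc-id>", "<your-log-group>", "<your-role-arn>")` returns the IDs of the flow logs that were created.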

Once active, your VPC flow log should look like the following.

Publish CloudWatch to Amazon Data Firehose

When you generate traffic to or from your VPC, the log group is created in Amazon CloudWatch. We create an IAM role to allow CloudWatch to publish logs to the Amazon Data Firehose stream.

To allow CloudWatch to publish to your Firehose stream, you need to give it permissions.

Here is the content for TrustPolicyForCWLToFireHose.json.
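As a hedged sketch of what such a trust policy typically contains, the following Python builds a document that lets the CloudWatch Logs service assume the role. The function name, Region, and account ID are placeholders, and the `aws:SourceArn` condition is an optional safeguard that limits the role to log groups in your own account.

```python
import json


def trust_policy_for_cwl(region: str, account_id: str) -> dict:
    """Build a trust policy that lets CloudWatch Logs assume the role."""
    return {
        "Version": "2012-10-17",
        "Statement": {
            "Effect": "Allow",
            "Principal": {"Service": "logs.amazonaws.com"},
            "Action": "sts:AssumeRole",
            # Restrict use of the role to log resources in this account and Region.
            "Condition": {
                "StringLike": {
                    "aws:SourceArn": f"arn:aws:logs:{region}:{account_id}:*"
                }
            },
        },
    }


def to_policy_json(policy: dict) -> str:
    """Render the policy as the JSON text you would save to a .json file."""
    return json.dumps(policy, indent=2)
```

You could save the output of `to_policy_json(trust_policy_for_cwl("us-east-1", "123456789012"))` to the file and use it when creating the role.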

Attach the policy to the newly created role.

Here is the content for PermissionPolicyForCWLToFireHose.json.
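A permissions policy for this role only needs to allow writes to the target stream. The sketch below builds one such policy under that assumption; the function name and the Region, account ID, and stream name are placeholders.

```python
import json


def permission_policy_for_cwl(region: str, account_id: str, stream_name: str) -> dict:
    """Build a policy that allows writing records to a single Firehose stream."""
    stream_arn = f"arn:aws:firehose:{region}:{account_id}:deliverystream/{stream_name}"
    return {
        "Version": "2012-10-17",
        "Statement": [
            {
                "Effect": "Allow",
                # Only the write actions the subscription needs.
                "Action": ["firehose:PutRecord", "firehose:PutRecordBatch"],
                "Resource": [stream_arn],
            }
        ],
    }


def to_policy_json(policy: dict) -> str:
    """Render the policy as the JSON text you would save to a .json file."""
    return json.dumps(policy, indent=2)
```

Scoping `Resource` to one stream ARN, rather than a wildcard, keeps the role from writing to other streams in the account.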

The new log group has no subscription filter, so set one up. Setting this up establishes a real-time data feed from the log group to your Firehose delivery stream. Select the VPC flow log and choose Actions. Then choose Subscription filters followed by Create Amazon Data Firehose subscription filter.

If you create the subscription filter with the AWS CLI instead, you don’t get any acknowledgment. To validate that your CloudWatch log group is subscribed to your Firehose stream, check the CloudWatch console.
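The subscription can also be created and checked programmatically. This sketch assumes a boto3 CloudWatch Logs client is passed in; the function names are ours, and the empty filter pattern forwards every log event.

```python
def subscribe_log_group(logs_client, log_group, filter_name, stream_arn, role_arn):
    """Create a subscription filter that forwards all events to a Firehose stream."""
    logs_client.put_subscription_filter(
        logGroupName=log_group,
        filterName=filter_name,
        filterPattern="",  # an empty pattern matches every log event
        destinationArn=stream_arn,
        roleArn=role_arn,
    )


def is_subscribed(logs_client, log_group, stream_arn):
    """Return True if the log group has a filter that targets the given stream."""
    response = logs_client.describe_subscription_filters(logGroupName=log_group)
    return any(
        f.get("destinationArn") == stream_arn
        for f in response.get("subscriptionFilters", [])
    )
```

Calling `is_subscribed` after `subscribe_log_group` gives you the confirmation that the create call itself does not return.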

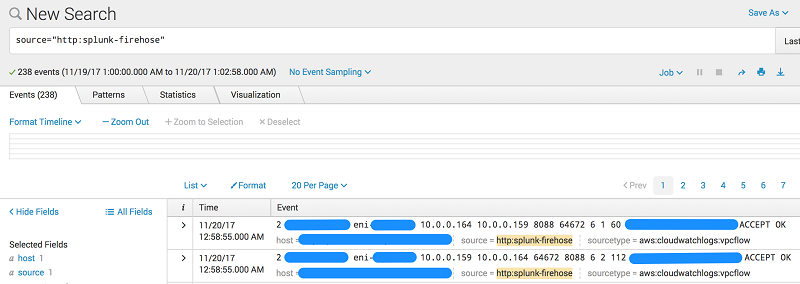

As soon as the subscription filter is created, the real-time log data from the log group goes into your Firehose delivery stream. Your stream then delivers it to your Splunk Enterprise or Splunk Cloud environment for querying and visualization. The following screenshot is from Splunk Enterprise.

In addition, you can monitor and view metrics associated with your delivery stream using the AWS console.

Conclusion

Although our walkthrough uses VPC Flow Logs, the pattern can be used in many other scenarios. These include ingesting data from AWS IoT, other CloudWatch logs and events, Kinesis Streams, or other data sources using the Kinesis Agent or Kinesis Producer Library. You might use a Lambda blueprint or disable record transformation entirely, depending on your use case. For an additional use case using Amazon Data Firehose, check out the This is My Architecture video, which discusses how to securely centralize cross-account data analytics using Kinesis and Splunk.

If you found this post useful, be sure to check out Integrating Splunk with Amazon Kinesis Streams.

About the Authors