This is a guest post by Jake J. Dalli, Data Platform Team Lead at Tipico, in partnership with AWS.

Tipico is the number one name in sports betting in Germany. Every day, we connect millions of fans to the thrill of sport, combining technology, passion, and trust to deliver fast, secure, and exciting betting, both online and in more than a thousand retail shops across Germany. We also bring this experience to Austria, where we proudly operate a strong sports betting business.

In this post, we show how Tipico built a unified data transformation platform using Amazon Managed Workflows for Apache Airflow (Amazon MWAA) and AWS Batch.

Solution overview

To support critical needs such as product monitoring, customer insights, and revenue assurance, our central data function needed to provide the tools for multiple cross-functional analytics and data science teams to run scalable batch workloads on the existing data warehouse, powered by Amazon Redshift. The workloads of Tipico’s data community included extract, load, and transform (ELT), statistical modeling, machine learning (ML) training, and reporting across diverse frameworks and languages.

In the past, analytics teams operated in isolation, separate from each other and from the central data function. Different teams maintained their own sets of tools, often performing the same function and creating data silos. Lack of visibility meant a lack of standardization. This siloed approach slowed down the delivery of insights and prevented the company from achieving a unified data strategy that ensured availability and scalability.

The need to introduce a single, unified platform that promoted visibility and collaboration became clear. However, the diversity of workloads introduced another layer of complexity. Teams needed to tackle different types of problems and brought distinct skillsets and tooling preferences. Analysts might rely heavily on SQL and business intelligence (BI) platforms, whereas data scientists preferred Python or R, and engineers leaned on containerized workflows or orchestration frameworks.

Our goal was to architect a new system that supports diversity while maintaining operational control, delivering an open orchestration platform with built-in security isolation, scheduling, retry mechanisms, fine-grained role-based access control (RBAC), and governance features such as two-person approval for production workflows. We achieved this by designing a system with the following principles:

- Bring Your Own Container (BYOC) – Teams are given the flexibility to package their workloads as containers and are free to choose dependencies, libraries, or runtime environments. For teams with highly specialized workloads, this meant that they could work in a setup tailored to their needs while still operating within a harmonized platform. Alternatively, teams that didn’t require fully customized environments could redesign their workloads to align with existing workloads.

- Centralized orchestration for full transparency – All teams can see all workflows and build interdependencies between them.

- Shared orchestration, isolated compute – Workloads run in team-specific Docker containers within a unified compute environment, providing scalability while keeping execution traceable to each team.

- Standardized interfaces, flexible execution – Common patterns (operators, hooks, logging, or monitoring) reduce complexity, and teams retain freedom to innovate within their containers.

- Cross-team approvals for critical workflows stored within version control – Changes follow a four-eye principle, requiring review and approval from another team before execution, providing accountability and reducing risk. This allowed our core data function to monitor and contribute suggestions to work across different analytics teams.

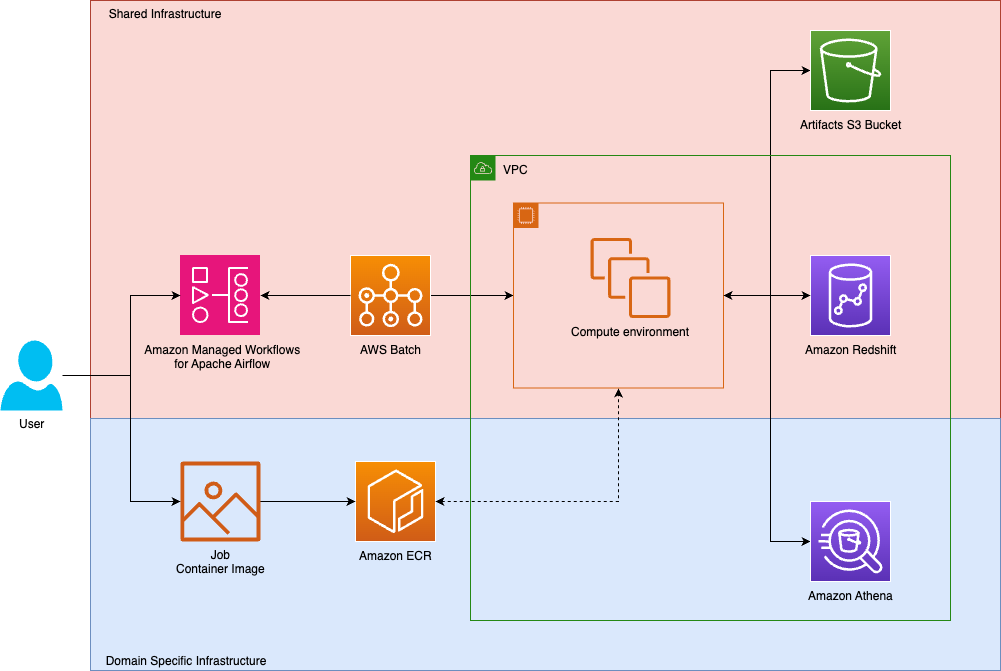

We devised a system whereby orchestration and execution of tasks operate on shared infrastructure, which teams interact with through domain-specific infrastructure. In Tipico’s case, each team pushes images to team-owned container registries. These containers provide the code for workflows, including execution of ELT pipelines or transformations on top of domain-specific data lakes.

The following diagram shows the solution architecture.

The technical challenge was to architect a flexible and high-performance orchestration layer that could scale reliably while remaining framework-agnostic and integrating seamlessly with existing infrastructure.

When designing our system, we were aware of the multiple container orchestration solutions offered by Amazon Web Services (AWS), including Amazon Elastic Kubernetes Service (Amazon EKS), Amazon Elastic Container Service (Amazon ECS), and AWS Batch, among others. In the end, the team selected AWS Batch because it abstracts away cluster management, provides elastic scaling, and inherently supports batch workloads as a design feature.

Solution details

Before adopting the current solution, Tipico experimented with running a self-managed Apache Airflow setup. Although it was functional, it became increasingly burdensome to maintain. The shift toward a managed and scalable solution was driven by the need to focus more on empowering teams to deliver rather than on maintaining the infrastructure. Tipico replatformed the central orchestration solution using Amazon MWAA and AWS Batch.

Amazon MWAA is a fully managed service that simplifies running open source Apache Airflow on AWS. Users can build and execute data processing workflows while integrating seamlessly with various AWS services, which means developers and data engineers can concentrate on building workflows rather than managing infrastructure.

AWS Batch is a fully managed service that simplifies batch computing in the cloud so users can run batch jobs without needing to provision, manage, or maintain clusters. It automates resource provisioning and workload distribution, with users only paying for the underlying AWS resources consumed.

The new design provides a unified framework where analytics workloads are containerized, orchestrated, executed on scalable compute, and integrated with persistent storage:

- Containerization – Analytics workloads are packaged into Docker containers, with dependencies bundled to provide reproducibility. These images are versioned and stored in Amazon Elastic Container Registry (Amazon ECR). This approach decouples execution from infrastructure and enables consistent behavior across environments.

- Workflow orchestration – Airflow Directed Acyclic Graphs (DAGs) are version-controlled in Git and deployed to Amazon MWAA using a continuous integration and continuous delivery (CI/CD) pipeline. Amazon MWAA schedules and orchestrates tasks, triggering AWS Batch jobs using custom operators. Logs and metrics are streamed to Amazon CloudWatch, enabling real-time observability and alerting.

- Data persistence – Workflows interact with Amazon Simple Storage Service (Amazon S3) for durable storage of inputs, outputs, and intermediate artifacts. Amazon Elastic File System (Amazon EFS) is mounted to Amazon MWAA for fast access to shared code and configuration files, synchronized continuously from the Git repository.

- Scalable compute – Amazon MWAA triggers AWS Batch jobs using standardized job definitions. These jobs run in elastic compute environments such as Amazon Elastic Compute Cloud (Amazon EC2) or AWS Fargate, with secrets securely injected using AWS Secrets Manager. AWS Batch environments auto scale based on workload demand, optimizing cost and performance.

- Security and governance – AWS Identity and Access Management (IAM) roles are scoped per team and workload, providing least-privilege access. Job executions are logged and auditable, with fine-grained access control enforced across Amazon S3, Amazon ECR, and AWS Batch.
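To make the pieces above concrete, here is a minimal sketch of how a standardized, team-scoped job definition could be assembled. The `TeamWorkload` class, the `build_job_definition` helper, the naming scheme, and the `team` tag are all hypothetical and are not taken from Tipico's platform. The returned dictionary follows the request shape of the AWS Batch RegisterJobDefinition API; the sketch only builds the request and does not call AWS.

```python
from dataclasses import dataclass, field


@dataclass
class TeamWorkload:
    """Hypothetical description of one team's containerized workload."""

    team: str
    name: str
    image_uri: str           # image in the team's Amazon ECR repository
    job_role_arn: str        # team-scoped IAM role the container runs as
    execution_role_arn: str  # role used to pull the image and resolve secrets
    vcpus: int = 1
    memory_mib: int = 2048
    # Maps an environment variable name to a Secrets Manager secret ARN.
    secrets: dict = field(default_factory=dict)


def build_job_definition(workload: TeamWorkload) -> dict:
    """Build keyword arguments shaped like a RegisterJobDefinition request."""
    return {
        # Assumed naming convention: <team>-<workload name>.
        "jobDefinitionName": f"{workload.team}-{workload.name}",
        "type": "container",
        "containerProperties": {
            "image": workload.image_uri,
            "jobRoleArn": workload.job_role_arn,
            "executionRoleArn": workload.execution_role_arn,
            "resourceRequirements": [
                {"type": "VCPU", "value": str(workload.vcpus)},
                {"type": "MEMORY", "value": str(workload.memory_mib)},
            ],
            # Secrets are referenced by ARN, never embedded as plain values.
            "secrets": [
                {"name": env_name, "valueFrom": secret_arn}
                for env_name, secret_arn in sorted(workload.secrets.items())
            ],
        },
        # Assumed tag used to trace jobs back to the owning team.
        "tags": {"team": workload.team},
    }
```

With boto3 installed and credentials configured, the result could be passed as `boto3.client("batch").register_job_definition(**build_job_definition(workload))`.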

Common operators

To streamline the execution of batch jobs across teams, we developed a shared operator that wraps the built-in Airflow AWS Batch operator. This abstraction simplifies the execution of containerized workloads by encapsulating common logic such as:

- Job definition selection

- Job queue targeting

- Environment variable injection

- Secrets resolution

- Retry policies and logging configuration

Parameterization is handled using Airflow Variables and XComs, enabling dynamic behavior across DAG runs. The operator is maintained in a shared Git repository, versioned and centrally governed, but accessible to all teams.
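The kind of logic such a shared operator centralizes can be sketched as a plain function that assembles a job submission. This is an illustration under stated assumptions, not Tipico's operator: `build_submit_job_request`, its parameters, the job naming scheme, and the `team` tag are hypothetical. The output follows the request shape of the AWS Batch SubmitJob API, and a real wrapper would pass equivalent values to the Airflow provider's Batch operator rather than build the request by hand.

```python
import re

MAX_JOB_NAME_LENGTH = 128  # AWS Batch limit for jobName


def build_submit_job_request(team, task_id, job_definition, job_queue,
                             environment=None, attempts=1):
    """Build keyword arguments shaped like an AWS Batch SubmitJob request."""
    # AWS Batch accepts between 1 and 10 attempts in a retry strategy.
    if not 1 <= attempts <= 10:
        raise ValueError("attempts must be between 1 and 10")

    # Job names may only contain letters, numbers, hyphens, and underscores,
    # so characters such as the dots in Airflow task IDs are replaced.
    raw_name = f"{team}-{task_id}"
    job_name = re.sub(r"[^A-Za-z0-9_-]", "-", raw_name)[:MAX_JOB_NAME_LENGTH]

    # Environment overrides are a list of name/value pairs with string values.
    env = [
        {"name": key, "value": str(value)}
        for key, value in sorted((environment or {}).items())
    ]

    return {
        "jobName": job_name,
        "jobQueue": job_queue,
        "jobDefinition": job_definition,
        "containerOverrides": {"environment": env},
        "retryStrategy": {"attempts": attempts},
        # Assumed tag used for traceability per team.
        "tags": {"team": team},
    }
```

Keeping name sanitization, retry validation, and tagging in one place means individual DAG authors only supply the values that differ between tasks.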

To further accelerate development, some teams use a DAG Factory pattern, which programmatically generates DAGs from configuration files. This reduces boilerplate and enforces consistency so teams can define new workflows declaratively.
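A minimal sketch of the factory idea, using only the Python standard library so it runs without Airflow: a configuration dictionary (as it might be loaded from a YAML or JSON file) is validated and turned into a typed specification, and the task dependencies are checked for cycles. The configuration keys, `TaskSpec`, `DagSpec`, `build_dag_spec`, and `execution_order` are assumptions made for illustration. A real factory would go one step further and create Airflow DAG and operator objects from the specification.

```python
from dataclasses import dataclass
from graphlib import TopologicalSorter


@dataclass(frozen=True)
class TaskSpec:
    task_id: str
    image: str
    depends_on: tuple = ()


@dataclass(frozen=True)
class DagSpec:
    dag_id: str
    schedule: str
    tasks: tuple


def build_dag_spec(config: dict) -> DagSpec:
    """Validate a declarative config and turn it into a typed specification."""
    tasks = tuple(
        TaskSpec(
            task_id=task["task_id"],
            image=task["image"],
            depends_on=tuple(task.get("depends_on", ())),
        )
        for task in config["tasks"]
    )
    known = {task.task_id for task in tasks}
    for task in tasks:
        missing = set(task.depends_on) - known
        if missing:
            raise ValueError(f"{task.task_id} depends on unknown tasks: {sorted(missing)}")
    return DagSpec(dag_id=config["dag_id"], schedule=config["schedule"], tasks=tasks)


def execution_order(spec: DagSpec) -> list:
    """Return task IDs with upstream tasks first; raises CycleError on a cycle."""
    graph = {task.task_id: set(task.depends_on) for task in spec.tasks}
    return list(TopologicalSorter(graph).static_order())


example_config = {
    "dag_id": "crm_daily_report",
    "schedule": "0 5 * * *",
    "tasks": [
        {"task_id": "extract", "image": "crm/extract:1.0"},
        {"task_id": "transform", "image": "crm/transform:1.0", "depends_on": ["extract"]},
        {"task_id": "report", "image": "crm/report:1.0", "depends_on": ["transform"]},
    ],
}
```

Validating the configuration before any DAG object is created lets a CI pipeline reject a broken workflow definition at review time rather than at scheduling time.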

By standardizing this operator and supporting patterns, Tipico reduces onboarding friction, promotes reuse, and provides consistent observability and error handling across the analytics ecosystem.

Governance

Governance is enforced through a combination of fine-grained IAM roles, AWS IAM Identity Center, and automated role mapping. Each team is assigned a dedicated IAM role, which governs access to AWS services such as Amazon S3, Amazon ECR, AWS Batch, and Secrets Manager. These roles are tightly scoped to minimize the blast radius and provide traceability.

Given that the Airflow environment runs version 2.9.2, which doesn’t support multi-tenant access, Tipico developed a custom component that maps AWS IAM roles to Airflow roles. The component, which executes periodically using Airflow itself, dynamically syncs IAM role assignments with Airflow’s internal RBAC model. Airflow tags are used to govern access to individual DAGs, determining which teams can execute a DAG or modify its settings. This keeps access permissions aligned with organizational structure and team responsibilities.
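The source does not describe how the sync component is implemented. As a hedged illustration of the general idea, the sketch below computes which Airflow role grants and revocations would bring the current state in line with IAM role assignments. The function names, the mapping table, and the data shapes are all hypothetical, and the code deliberately calls neither IAM nor Airflow; it only plans the changes.

```python
def desired_airflow_roles(iam_assignments: dict, role_map: dict) -> dict:
    """Translate each user's IAM roles into Airflow roles via a mapping table.

    IAM roles without an entry in role_map are ignored.
    """
    desired = {}
    for user, iam_roles in iam_assignments.items():
        mapped = {role_map[role] for role in iam_roles if role in role_map}
        if mapped:
            desired[user] = mapped
    return desired


def plan_sync(current: dict, desired: dict) -> list:
    """Return (action, user, airflow_role) tuples that reconcile current with desired."""
    actions = []
    for user in sorted(set(current) | set(desired)):
        have = current.get(user, set())
        want = desired.get(user, set())
        actions += [("grant", user, role) for role in sorted(want - have)]
        actions += [("revoke", user, role) for role in sorted(have - want)]
    return actions
```

Separating the planning step from the step that applies changes makes a periodic sync idempotent: when nothing has changed, the plan is empty and no permissions are touched.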

Adoption

The shift toward a managed, scalable solution was driven by the need for greater team autonomy, standardization, and scalability. The journey began with a single analytics team validating the new approach. When it was successful, the platform team generalized the solution and rolled it out incrementally to other teams, refining it with each iteration.

One of the biggest challenges was migrating legacy code, which often included outdated logic and undocumented dependencies. To support adoption, Tipico introduced a structured onboarding process with hands-on training, real use cases, and internal champions. In some cases, teams also had to adopt Git for the first time, marking a broader shift toward modern engineering practices within the analytics organization.

Key benefits

One of the most valuable outcomes of our new architecture, which is built primarily around Amazon MWAA and AWS Batch, is a shorter time to value for analytics teams. Analysts can now focus on building transformation logic and workloads without worrying about the underlying infrastructure. With this approach, analysts can rely on prebuilt integrations and analytics patterns used across different teams, supported by standard interfaces developed by the core data team.

Aside from building analytics on Amazon Redshift, the orchestration solution also interfaces with multiple other analytics services such as Amazon Athena and AWS Glue ETL, providing maximum flexibility in the types of workloads being delivered. Teams within the organization have also shared practices for using different frameworks, such as dbt, to reuse custom developments when carrying out standard processes.

Another valuable outcome is the ability to clearly segregate costs across teams. Within the architecture, Airflow delegates heavy lifting to AWS Batch, providing task isolation that extends beyond Airflow’s built-in workers. Through this, we gain granular visibility into resource usage and accurate cost attribution, promoting financial accountability across the organization.

Finally, the platform also provides embedded governance and security, with RBAC and standardized secrets management providing an operationalized model for securing and governing workflows across different teams.

Teams can now focus on building and iterating quickly, knowing that the surrounding structures provide full transparency and are consistent with the organization’s governance, architecture, and FinOps goals. At the same time, centralized orchestration fosters a collaborative environment where teams can discover, reuse, and build upon each other’s workflows, driving innovation and reducing duplication across the data landscape.

Conclusion

By reimagining our orchestration layer with Amazon MWAA and AWS Batch, Tipico has unlocked a new level of agility and transparency across its data workflows.

Previously, analytics teams faced long lead times, often stretching into weeks, to implement new reporting use cases. Much of this time was spent identifying datasets, aligning transformation logic, finding integration options, and navigating inconsistent quality assurance processes. Today, that has changed. Analysts can now develop and deploy a use case within a single business day, shifting their focus from groundwork to action.

The modern architecture empowers teams to move faster and more independently within a secure, governed, and scalable framework. The result is a collaborative data ecosystem where experimentation is encouraged, operational overhead is reduced, and insights are delivered at speed.

To start building your own orchestrated data platform, explore Get started with Amazon Managed Workflows for Apache Airflow and the AWS Batch User Guide. These services can help you achieve similar results in democratizing data transformations across your organization. For hands-on experience with these solutions, try our Amazon MWAA for Analytics Workshop or contact your AWS account team to learn more.

About the authors