This post is co-written with Srinivasa Are, Principal Cloud Architect, and Karthick Shanmugam, Head of Architecture, Verisk EES (Extreme Event Solutions).

Verisk, a catastrophe modeling SaaS provider serving insurance and reinsurance companies worldwide, cut aggregation processing time from hours to minutes while reducing storage costs by implementing a lakehouse architecture with Amazon Redshift and Apache Iceberg. If you’re managing billions of catastrophe modeling records across hurricanes, earthquakes, and wildfires, this approach eliminates the traditional compute-versus-cost trade-off by separating storage from processing power.

In this post, we examine Verisk’s lakehouse implementation, focusing on four architectural decisions that delivered measurable improvements:

- Execution performance: Sub-hour aggregations across billions of records replaced long batch processes

- Storage efficiency: Columnar Parquet compression reduced costs without sacrificing response time

- Multi-tenant security: Schema-level isolation enforced complete data separation between insurance clients

- Schema flexibility: Apache Iceberg supports column additions and historical data access without downtime

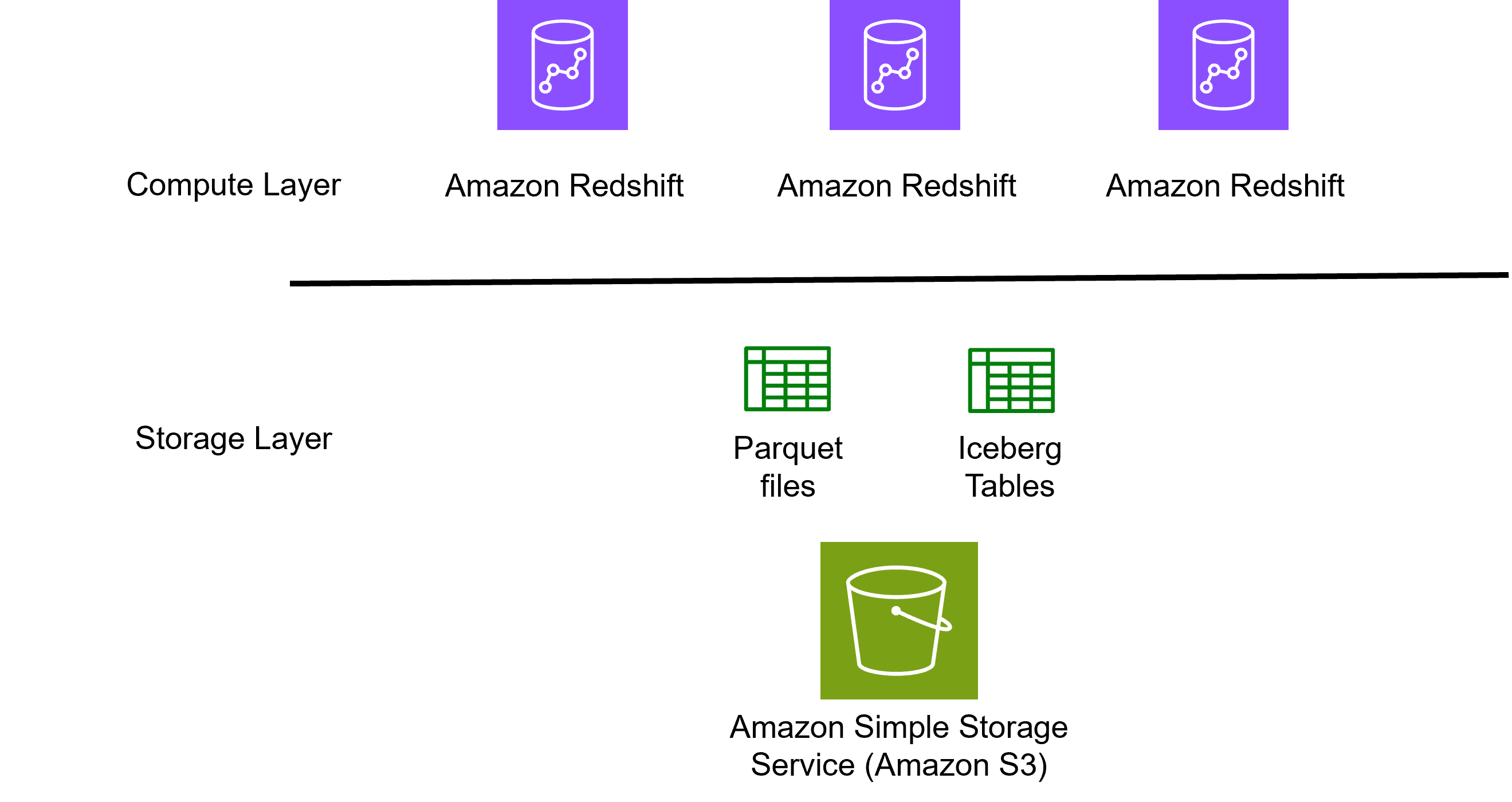

The architecture separates compute (Amazon Redshift) from storage (Amazon S3), demonstrating how to scale from billions to trillions of records without proportional cost increases.

Current state and challenges

In Verisk’s world of risk analytics, data volumes grow at exponential rates. Every day, risk modeling systems generate billions of rows of structured and semi-structured data. Each record captures a micro-slice of exposure, event probability, or loss correlation. To convert this raw information into actionable insights at scale, experts need a data engine designed for high-volume analytical workloads.

Each Verisk model run produces detailed, high-granularity outputs that include billions of simulated risk factors and event-level outcomes, multi-year loss projections across thousands of perils, and deep relational joins across exposure, policy, and claims datasets.

Running meaningful aggregations (such as loss by region, peril, or occupancy type) over such high volumes created performance challenges.

Verisk needed to build a SQL service that could aggregate at scale in the fastest time possible and integrate into their broader AWS solutions. This required a serverless, open, and performant SQL engine capable of handling billions of records efficiently.

Prior to this cloud-based launch, Verisk’s risk analytics infrastructure ran on an on-premises architecture centered on relational database clusters. Processing nodes shared access to centralized storage volumes through dedicated interconnect networks. This architecture required capital investment in server hardware, storage arrays, and networking equipment. The deployment model required manual capacity planning and provisioning cycles, limiting the organization’s ability to respond to fluctuating workload demands. Database operations depended on batch-oriented processing windows, with analytical queries competing for shared compute resources.

Amazon Redshift and lakehouse architecture

Lakehouse architecture on AWS combines data lake storage scalability with data warehouse analytical performance in a unified architecture. This architecture stores vast amounts of structured and semi-structured data in cost-effective Amazon S3 storage while maintaining Amazon Redshift’s massively parallel SQL analytics.

Amazon Redshift is a fully managed, petabyte-scale cloud data warehouse service that delivers fast query performance using massively parallel processing (MPP) and columnar storage. Amazon Redshift eliminates the complexity of provisioning hardware, installing software, and managing infrastructure, so teams can focus on deriving insights from their data rather than maintaining systems.

To meet their challenge, Verisk designed a hybrid data lakehouse architecture that combines the storage scalability of Amazon S3 with the compute power of Amazon Redshift. The following diagram shows the foundational compute and storage architecture that powers Verisk’s analytical solution.

Architecture overview

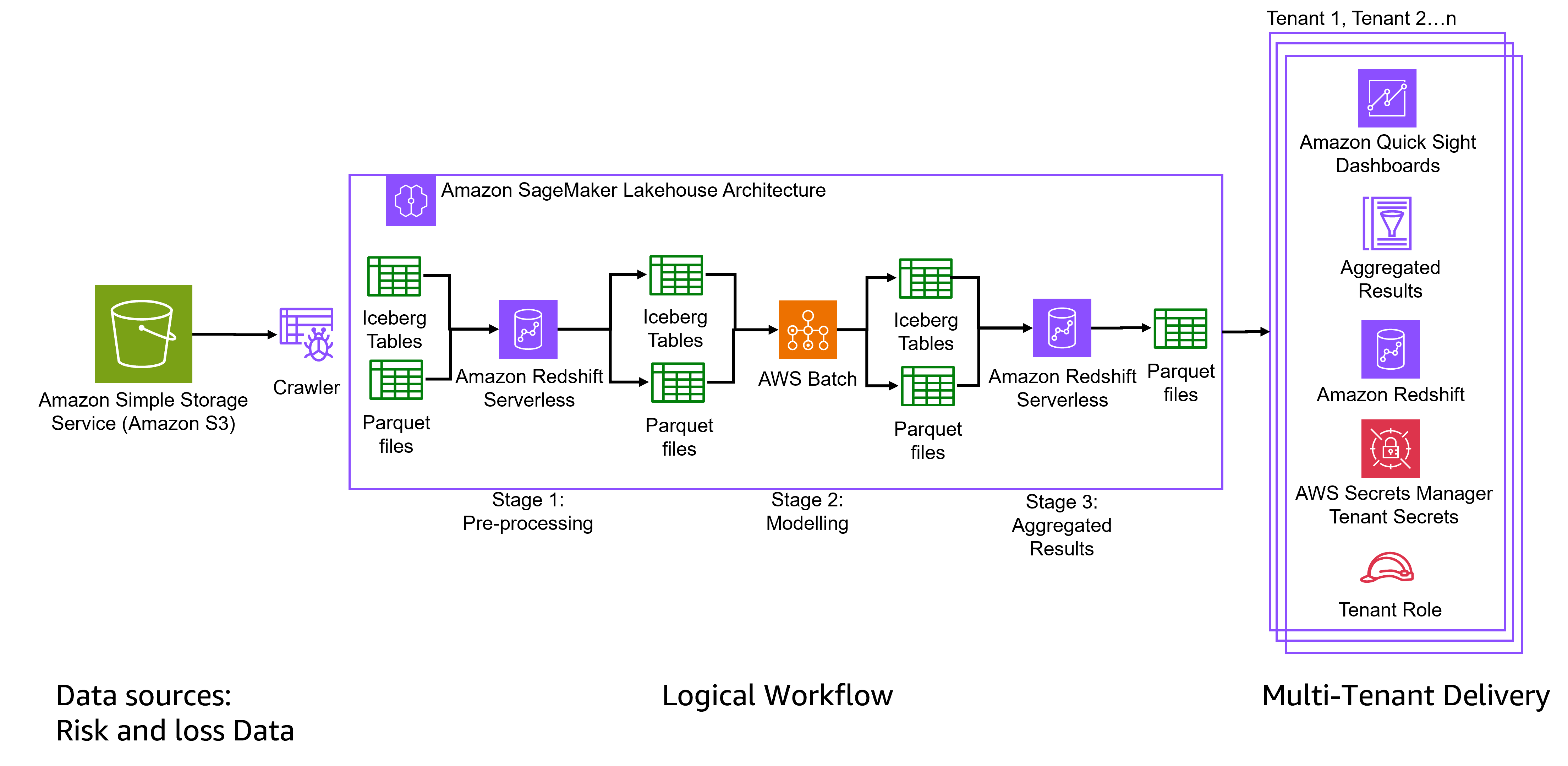

The architecture processes risk and loss data through three distinct stages within the lakehouse, with comprehensive multi-tenant delivery capabilities to maintain isolation between insurance clients.

Amazon Redshift enables retrieving data directly from S3 using standard SQL for background processing. This solution collects detailed result outputs, joins them with internal reference data, and executes aggregations over billions of rows. Concurrency scaling ensures that hundreds of background analyses using multiple serverless clusters can run simultaneous aggregation queries.

The following diagram shows the architecture designed by Verisk.

Data ingestion and storage foundation

Verisk stores risk model outputs, location-level losses, exposure tables, and model data in columnar Parquet format within Amazon S3. An AWS Glue crawler extracts metadata from S3 and feeds it into the lakehouse processing pipeline.

For versioned datasets like exposure tables, Verisk adopted Apache Iceberg, an open table format that addresses schema evolution and historical versioning requirements. Apache Iceberg provides transactional consistency through ACID-compliant (atomicity, consistency, isolation, durability) operations that maintain consistent snapshots across concurrent updates. Snapshot-based time travel enables data retrieval at earlier points in time for regulatory compliance, audit trails, and model comparison, with rollback capabilities. Schema evolution supports adding, dropping, or renaming columns without downtime or dataset rewrites. Incremental processing uses metadata tracking to process only changed data, reducing refresh times. Hidden partitioning and file-level statistics reduce I/O operations, improving aggregation performance. Engine interoperability enables accessing the same tables across Amazon Redshift, Amazon Athena, Spark, and other engines without data duplication.
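To make snapshot-based time travel and schema evolution more concrete, the following is a minimal, self-contained Python sketch. It is a toy in-memory model, not the Apache Iceberg API and not Verisk’s implementation. The `VersionedTable` and `Snapshot` names, the integer timestamps, and the example columns are all hypothetical. It only illustrates the idea that every commit produces an immutable snapshot, that a reader can select the snapshot current at a given time, and that adding a column does not rewrite existing rows.

```python
from bisect import bisect_right
from dataclasses import dataclass


@dataclass(frozen=True)
class Snapshot:
    """Immutable view of the table at one commit (hypothetical toy type)."""
    committed_at: int   # commit time, for example epoch seconds
    columns: tuple      # schema in effect at this snapshot
    rows: tuple         # rows visible at this snapshot


class VersionedTable:
    """Toy table that keeps every snapshot so earlier states stay readable."""

    def __init__(self, columns):
        # Snapshot 0 is the empty table with the initial schema.
        self._snapshots = [Snapshot(0, tuple(columns), ())]

    def commit(self, committed_at, new_rows=(), add_columns=()):
        """Append rows and/or add columns as one atomic new snapshot."""
        head = self._snapshots[-1]
        if committed_at <= head.committed_at:
            raise ValueError("commits must have increasing timestamps")
        columns = head.columns + tuple(add_columns)
        # Existing rows are carried over unchanged; nothing is rewritten.
        rows = head.rows + tuple(dict(r) for r in new_rows)
        self._snapshots.append(Snapshot(committed_at, columns, rows))

    def as_of(self, timestamp):
        """Return the latest snapshot committed at or before `timestamp`."""
        times = [s.committed_at for s in self._snapshots]
        idx = bisect_right(times, timestamp) - 1
        if idx < 0:
            raise LookupError("no snapshot exists at or before this time")
        return self._snapshots[idx]

    def read(self, timestamp=None):
        """Read rows projected onto the schema of the chosen snapshot."""
        snap = self._snapshots[-1] if timestamp is None else self.as_of(timestamp)
        # Rows written before a column existed read as None for that column.
        return [{c: row.get(c) for c in snap.columns} for row in snap.rows]
```

For example, after committing one exposure row at time 100 and then adding an `occupancy` column together with a second row at time 200, `read(150)` returns only the first row with the original two columns, while `read()` returns both rows with `occupancy` set to `None` for the older row.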

By adopting Apache Iceberg as the open table format for this solution, Verisk built a foundation that combines S3’s cost-effectiveness with data management capabilities.

Three-stage processing pipeline

This pipeline orchestrates data flow from raw inputs to analytical outputs through three sequential stages. Pre-processing prepares and cleanses data, modeling applies risk calculations and analytics, and post-processing aggregates results for delivery.

- Stage 1: Pre-processing transforms raw data into structured formats using Iceberg tables and Parquet files, then processes it through Amazon Redshift Serverless for initial data cleaning and transformation.

- Stage 2: Modeling runs as a process built on AWS Batch that takes the pre-processed data and applies advanced analytics and feature engineering. Results are stored in Iceberg tables and Parquet files.

- Stage 3: Post-processing uses Amazon Redshift Serverless to aggregate the results and produce the final analytical outputs in Parquet files, ready for consumption by end users.

Multi-tenant delivery system

The architecture delivers results to multiple insurance clients (tenants) through a secure, isolated delivery system that includes:

- Amazon Quick Sight dashboards for visualization and business intelligence

- Amazon Redshift as the data warehouse for querying aggregated results

- AWS Batch for model processing

- AWS Secrets Manager to manage tenant-specific credentials

- Tenant roles implementing role-based access control to provide data isolation between clients

Summarized results are exposed to underwriting teams through Amazon Quick Sight dashboards or downstream APIs.

Multi-tenant security architecture

A critical requirement for Verisk’s SaaS solution was supporting complete data and compute isolation between different insurance and reinsurance clients. Verisk implemented a comprehensive multi-tenant security model that provides isolation while maintaining operational efficiency.

Our solution implements an isolation strategy in two layers, combining logical and physical separation. At the logical layer, each client’s data resides in dedicated schemas with access controls that prevent cross-tenant operations. Amazon Redshift metadata security restricts tenants from discovering or accessing other clients’ schemas, tables, or database objects through system catalogs. At the physical layer, for larger deployments, dedicated Amazon Redshift clusters provide workload separation at the compute level, preventing one tenant’s analytical operations from impacting another’s performance. This dual approach meets regulatory requirements for data isolation in the insurance industry: schema-level isolation within clusters for standard deployments, and full compute separation across dedicated clusters for larger-scale implementations.

The implementation uses stored procedures to automate security configuration, maintaining consistent application of access controls across tenants. This defense-in-depth approach combines schema-level isolation, system catalog lockdown, and selective permission grants to create a layered security model.
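The schema-per-tenant pattern with selective grants can be sketched as a small generator of onboarding statements. This is an illustrative Python sketch under stated assumptions, not Verisk’s stored procedures: the `tenant_<id>` schema and `tenant_<id>_role` role naming, the function name, and the exact set of grants are hypothetical. Enabling metadata security is a separate setting and is not shown here. The point of the sketch is that generating the statements from one validated tenant identifier keeps the access controls identical across tenants.

```python
import re

# Hypothetical rule for tenant identifiers: lowercase letters, digits, and
# underscores only, so the value is safe to embed in SQL identifiers.
_TENANT_ID = re.compile(r"[a-z][a-z0-9_]{0,30}")


def tenant_isolation_statements(tenant_id: str) -> list[str]:
    """Return SQL statements that give one tenant its own schema and role.

    The naming convention and the choice of grants are assumptions made for
    illustration only.
    """
    if not _TENANT_ID.fullmatch(tenant_id):
        raise ValueError(f"invalid tenant identifier: {tenant_id!r}")

    schema = f"tenant_{tenant_id}"
    role = f"tenant_{tenant_id}_role"
    return [
        # Dedicated schema holding only this tenant's data.
        f"CREATE SCHEMA IF NOT EXISTS {schema};",
        # Dedicated role that tenant users are attached to.
        f"CREATE ROLE {role};",
        # Start from no access: remove default privileges on the schema.
        f"REVOKE ALL ON SCHEMA {schema} FROM PUBLIC;",
        # Grant back only what the tenant needs, and only on its own schema.
        f"GRANT USAGE ON SCHEMA {schema} TO ROLE {role};",
        f"GRANT SELECT ON ALL TABLES IN SCHEMA {schema} TO ROLE {role};",
    ]
```

Calling `tenant_isolation_statements("acme")` yields five statements that all reference `tenant_acme`, while an identifier containing spaces, quotes, or semicolons is rejected before any SQL is produced.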

For data architects interested in implementing similar multi-tenant architectures, review Implementing Metadata Security for Multi-Tenant Amazon Redshift Environment.

Implementation considerations

Verisk’s architecture reveals three decision points for companies building similar systems.

When to adopt open table formats

Apache Iceberg proved essential for datasets requiring schema evolution and historical versioning. Data engineers should evaluate open table formats when analytical workloads span multiple engines (Amazon Redshift, Amazon Athena, Spark) or when regulatory requirements demand point-in-time data reconstruction.

Multi-tenant isolation strategy

Schema-level separation combined with metadata security prevented cross-tenant data discovery without performance overhead. This approach scales more efficiently than database-per-tenant architectures while meeting insurance industry compliance requirements. Security experts should implement isolation controls during initial deployment rather than retrofitting them later.

Stored procedures or application logic

Redshift stored procedures standardized aggregation calculations across teams and built dynamic SQL queries. This approach works best when business logic changes frequently or when multiple teams need different aggregation dimensions on the same datasets.
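The idea of building aggregation queries dynamically from a requested set of dimensions can be sketched as follows. This is a hedged Python illustration of the technique, not the stored procedures Verisk uses: the `event_losses` table, the `loss_amount` measure, the dimension column names, and the function name are all hypothetical. The sketch builds the `GROUP BY` list only from an allow-list, which is one common way to keep dynamic SQL safe.

```python
# Hypothetical dimension columns that callers are allowed to group by.
ALLOWED_DIMENSIONS = ("region", "peril", "occupancy_type")


def build_loss_aggregation(dimensions):
    """Build a loss aggregation query grouped by the requested dimensions.

    Only names from ALLOWED_DIMENSIONS are accepted, so caller input is
    never interpolated into the SQL text unchecked.
    """
    dimensions = list(dimensions)
    if not dimensions:
        raise ValueError("at least one dimension is required")
    unknown = [d for d in dimensions if d not in ALLOWED_DIMENSIONS]
    if unknown:
        raise ValueError(f"unsupported dimensions: {unknown}")
    if len(set(dimensions)) != len(dimensions):
        raise ValueError("dimensions must not repeat")

    columns = ", ".join(dimensions)
    return (
        f"SELECT {columns}, SUM(loss_amount) AS total_loss "
        f"FROM event_losses "
        f"GROUP BY {columns} "
        f"ORDER BY {columns};"
    )
```

With this shape, one team can request `build_loss_aggregation(["region", "peril"])` and another `build_loss_aggregation(["occupancy_type"])` against the same dataset, while the aggregation logic itself stays defined in one place.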

Conclusion

Verisk’s implementation of Amazon Redshift Serverless with Apache Iceberg and lakehouse architecture shows how separating compute from storage addresses enterprise analytics challenges at billion-record scale. By combining cost-effective Amazon S3 storage with Redshift’s massively parallel SQL compute, Verisk achieved sub-hour aggregations across billions of catastrophe modeling records, reduced storage costs through efficient Parquet compression, and eliminated ingestion delays. Underwriting teams can now run ad hoc analyses during business hours rather than waiting for long-running batch jobs. The combination of open standards like Apache Iceberg, serverless compute with Amazon Redshift, and multi-tenant security provides the scalability, performance, and cost efficiency needed for modern analytics workloads.

Verisk’s journey has positioned them to scale confidently into the future, processing not just billions but potentially trillions of records as their model resolution increases.

About the authors