At re:Invent 2025, we introduced serverless storage for Amazon EMR Serverless, eliminating the necessity to provision native disk storage for Apache Spark workloads. Serverless storage of Amazon EMR Serverless reduces knowledge processing prices by as much as 20% whereas serving to forestall job failures from disk capability constraints.

On this publish, we discover the associated fee enhancements we noticed when benchmarking Apache Spark jobs with serverless storage on EMR Serverless. We take a deeper take a look at how serverless storage helps scale back prices for shuffle-heavy Spark workloads, and we define sensible steerage on figuring out the forms of queries that may profit most from enabling serverless storage in your EMR Serverless Spark jobs.

Benchmark outcomes for EMR 7.12 with serverless storage towards normal disks

We performed the efficiency and price financial savings benchmarking utilizing the TPC-DS dataset at 3TB scale, operating 100+ queries that included a mixture of excessive and low shuffle operations. The check configuration utilized Dynamic Useful resource Allocation (DRA) with no pre-initialized capability. The system was arrange with 20GB of disk house, and Spark configurations included 4 cores and 14GB reminiscence for each driver and executor, with dynamic allocation beginning at 3 preliminary executors (spark.dynamicAllocation.initialExecutors = 3). A comparative evaluation was carried out between native disk storage and serverless storage configurations. The purpose was to evaluate each whole and common price implications between these storage approaches.

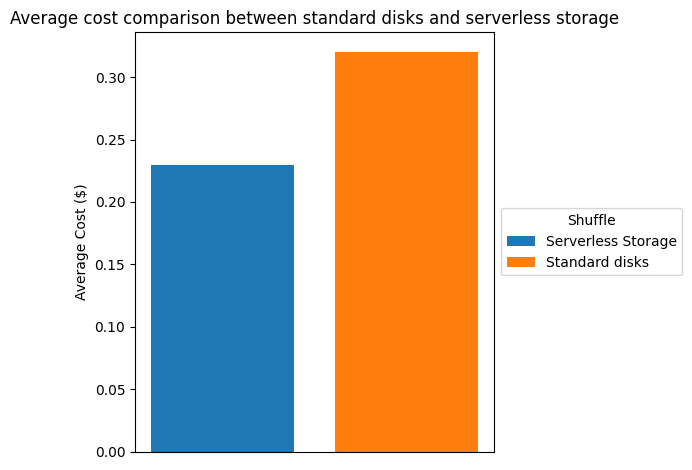

The next desk and chart examine the associated fee discount we noticed within the testing surroundings described above. Based mostly on us-east-1 pricing, we noticed a value financial savings of greater than 26% when utilizing serverless storage.

| Shuffle | |||

| serverless storage | normal Disks | financial savings | |

| Whole Value ($) | 24.28 | 33.1 | 26.65% |

| Common Value ($) | 0.233 | 0.318 | 26.73% |

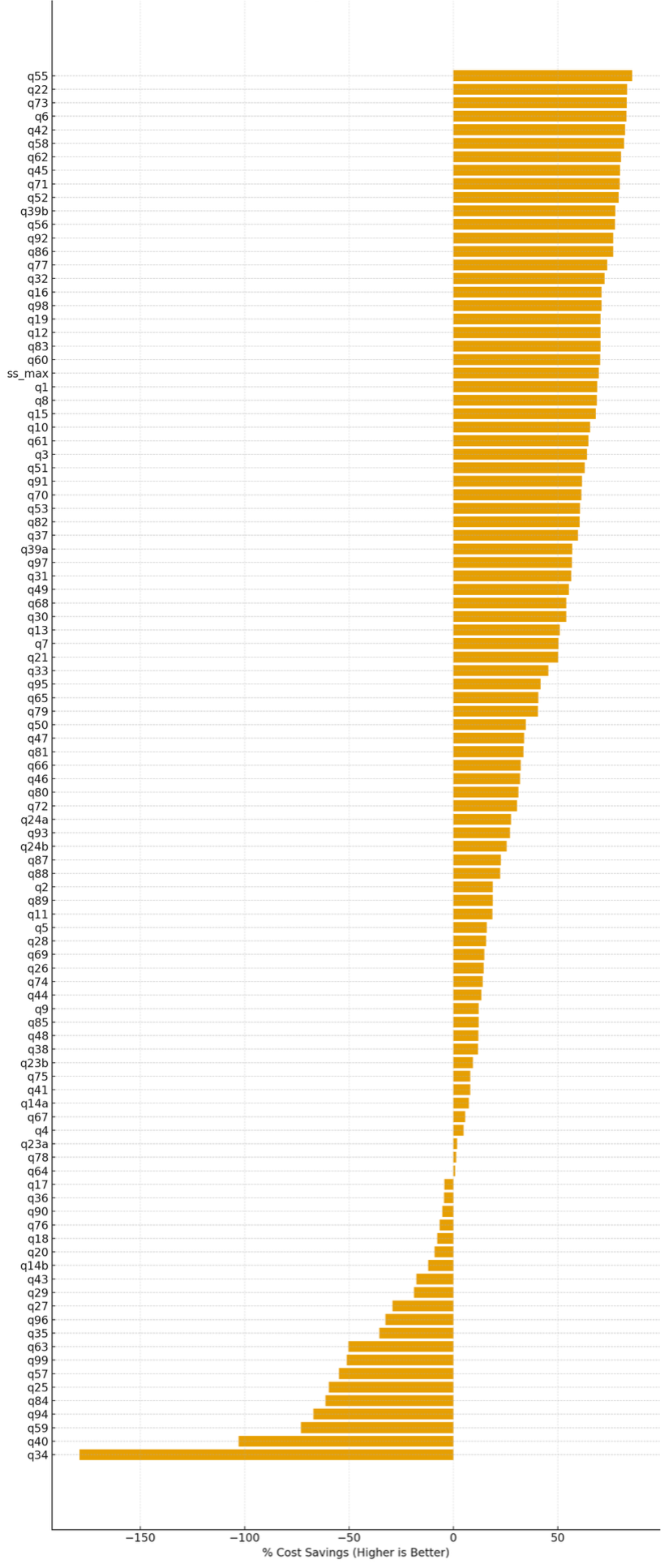

% Relative financial savings (per question) of serverless storage in comparison with normal disk shuffle

On this testing, we noticed that serverless storage in EMR Serverless reduces price for roughly 80% of TPC-DS queries. For the queries the place it supplies advantages, it delivers a mean price saving of roughly 47%, with financial savings of as much as 85%. Queries that regress sometimes have low shuffle depth, preserve excessive parallelism all through execution, or full rapidly sufficient that executor scale-down alternatives are minimal. The next determine exhibits the proportion price distinction for every of the TPC-DS queries when serverless storage was enabled, in comparison with the baseline configuration with out serverless storage. Constructive values point out price financial savings (increased is healthier), whereas damaging values point out price regressions.

Share price financial savings per TPC-DS question with serverless storage enabled

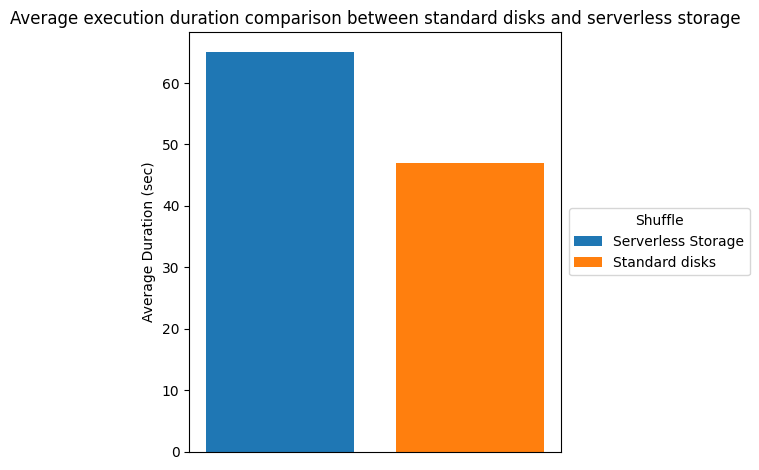

Runtime comparability

There may be important price financial savings as a result of elevated elasticity from terminating executors earlier. Nonetheless, job completion time could improve as a result of the shuffle knowledge is saved in serverless storage somewhat than regionally on the executors. The extra learn and write latency for shuffle knowledge contributes to the longer runtime. The next desk and chart present the runtime comparability, we noticed in our testing surroundings.

| Shuffle | |||

| serverless storage | normal disks | runtime | |

| Whole Period (sec) | 6770.63 | 4908.52 | -37.94% |

| Common Period (sec) | 65.1 | 47.2 | -37.92% |

Storing shuffle externally and decoupling from the compute allowed the pliability for EMR Serverless to show off unused assets dynamically because the state data has been offloaded from the compute. Nonetheless, these price financial savings might be realized solely when DRA is on. If DRA is turned off, Spark would maintain these unused assets alive including to the entire price.

Question patterns that profit from serverless storage

The fee financial savings from serverless storage rely closely on how executor demand modifications throughout phases of a job. On this part, we look at frequent execution patterns and clarify which question shapes are almost certainly to learn from serverless storage of EMR Serverless and which question patterns could not profit from shuffle externalization.

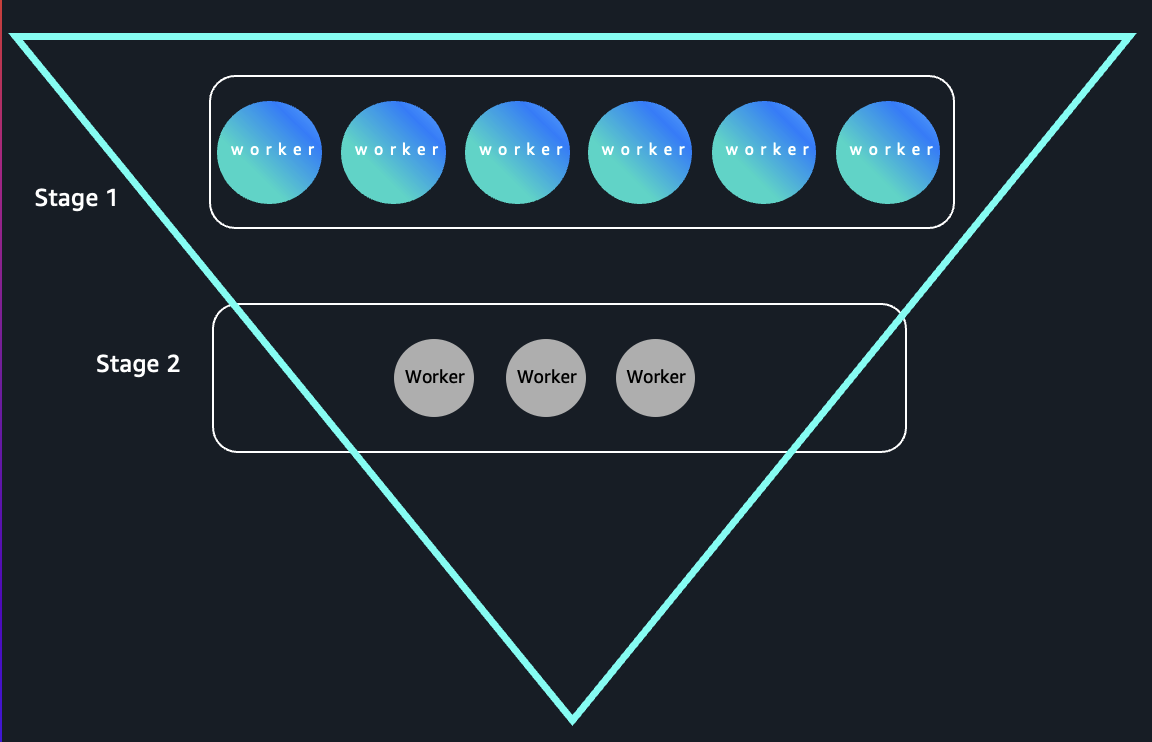

Inverted triangle sample queries

So as to perceive why externalizing the shuffle knowledge can enable such a major price financial savings, take into account a simplified question. The next question calculates annual whole gross sales from the TPC-DS dataset by becoming a member of the store_sales and date_dim tables, summing the gross sales quantities per 12 months, and ordering the outcomes.

This question demonstrates that top executor demand through the map part and low executor demand within the scale back part is an aggregation question with a excessive cardinality enter and a low cardinality group by.

- Stage 1 (Excessive Executor Demand)

The be part of and browse steps scan your complete store_sales and date_dim tables. This usually entails billions of rows in large-scale TPC-DS datasets, so Spark will attempt to parallelize the scan throughout many executors to maximise learn throughput and compute effectivity.

- Stage 2 (Low Executor Demand)

The aggregation is on d_year, which generally has few distinctive values, akin to solely a handful of years within the knowledge. This implies after the shuffle stage, the scale back part combines the partial aggregates into numerous keys equal to the variety of years (usually

With shuffle info saved on the native disk, the compute assets related to these idle executors would nonetheless be operating with a purpose to maintain the shuffle knowledge out there. With shuffle knowledge offloaded from the nodes operating the executors, with DRA enabled, these nodes with idle executors get launched instantly.

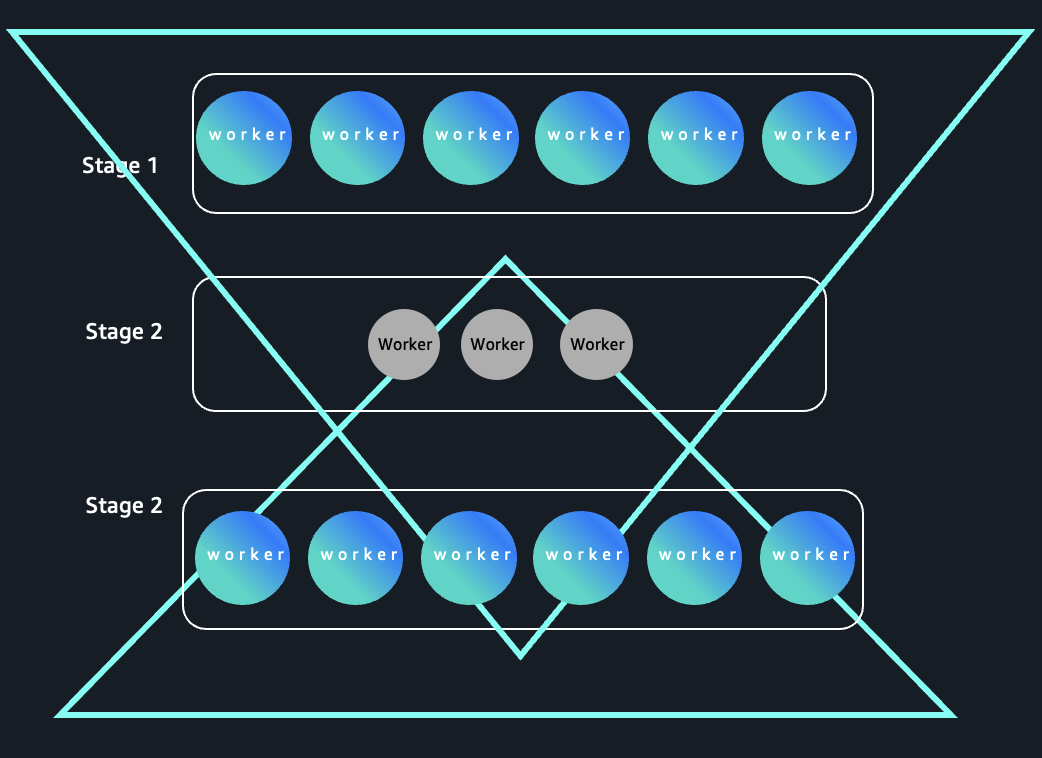

As a result of early phases course of high-cardinality inputs and later phases collapse knowledge right into a small variety of keys, these queries kind an “inverted triangle” execution sample: extensive parallelism on the prime and slim parallelism on the backside as proven within the following picture:

Hourglass sample queries

Relying upon the complexity of the job, there might be a number of phases with various demand on variety of executors wanted for the stage. Such jobs can profit from higher elasticity obtained by offloading shuffle knowledge to exterior serverless storage. One such sample is the hour glass sample. The next determine exhibits a workload sample the place executor demand expands, contracts throughout shuffle-heavy phases, and expands once more. Serverless storage of EMR Serverless decouples shuffle knowledge from compute, enabling extra environment friendly scale-down throughout slim phases and serving to enhance price optimization for elastic workloads.

Hourglass sample in Spark stage execution

To establish queries of this class, take into account the next instance, The question progresses via three phases:

- Stage 1: The preliminary be part of and filter between

store_salesandmerchandiseproduces a large, high-cardinality intermediate dataset, requiring excessive parallelism (many executors). - Stage 2: Aggregation teams by a small set of classes akin to “Residence” or “Electronics”, leading to a drastic drop in output partitions. So this stage effectively runs with just a few executors, as there’s little knowledge to parallelize.

- Stage 3: The small result’s joined (often a broadcast be part of) again to a big reality desk with a date dimension, once more producing a big consequence that’s well-parallelized, inflicting Spark to ramp up executor utilization for this stage.

This sample is frequent for reporting and dimensional evaluation eventualities and is efficient for demonstrating how Spark dynamically adjusts useful resource utilization throughout job phases based mostly on cardinality and parallelism wants. Such queries may also profit from the elasticity enabled by exterior serverless storage.

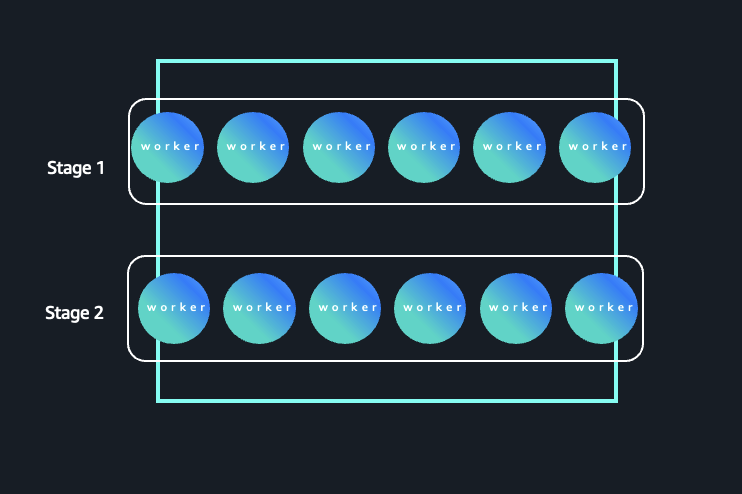

Rectangle sample queries

Not all queries profit from externalizing the shuffle. Contemplate a question the place the cardinality is excessive all through, which means each the phases function on numerous partitions and keys. Sometimes, queries that group by high-cardinality columns (akin to merchandise or buyer) trigger most phases to require comparable quantities of parallelism. The next determine illustrates a Spark workload the place parallelism stays constantly excessive throughout phases. On this sample, each Stage 1 and Stage 2 function on numerous partitions and keys, leading to sustained executor demand all through the job lifecycle.

Excessive-cardinality execution sample with sustained parallelism

The next question is identical question that we used within the inverted triangle sample earlier, with one change. We’ve changed the dim_date desk (low cardinality) with merchandise (excessive cardinality).

- Stage 1: Reads the rows from

store_salesand joins withmerchandise, spreading knowledge throughout many partitions—much like the unique question’s first stage. - Stage 2: The aggregation is by

i_item_id, which usually has hundreds to tens of millions of distinct values in actual datasets. This retains parallelism excessive; many duties deal with non-overlapping keys, and shuffle outputs stay massive.

There isn’t any important drop in cardinality: As a result of neither stage is diminished to a small group set, most executors keep busy all through the job’s essential phases, with little idle time even after the shuffle. This kind of question ends in a flatter executor utilization profile as a result of every stage processes an analogous quantity of labor, thus minimizing variation in useful resource utilization. These rectangle sample queries won’t see the associated fee profit from the elasticity obtained by offloading shuffle knowledge. Nonetheless, there should be different advantages akin to discount of job failures and efficiency bottlenecks from disk constraints, freedom from capability planning and sizing, and provisioning of storage for intermediate knowledge operations.

Conclusion

Serverless storage for Amazon EMR Serverless can ship substantial price financial savings for workloads with dynamic useful resource patterns, as seen within the 26% common price financial savings we noticed in our testing surroundings. By externalizing shuffle knowledge, you may acquire the elasticity to launch idle executors instantly, demonstrated by the financial savings reaching as much as 85% in our testing surroundings, on queries following inverted triangle and hourglass patterns when Dynamic Useful resource Allocation is enabled.Understanding your workload traits is essential. Whereas rectangle sample queries could not see dramatic price reductions, they’ll nonetheless profit from improved reliability and removing of capability planning overhead.

To get began: Analyze your job execution patterns, allow Dynamic Useful resource Allocation, and pilot serverless storage on shuffle-heavy workloads. Seeking to scale back your Amazon EMR Serverless prices for Spark workloads? Discover serverless storage for EMR Serverless at present.

Concerning the authors