The most recent set of open-source fashions from Google are right here, the Gemma 4 household has arrived. Open-source fashions are getting very fashionable just lately because of privateness considerations and their flexibility to be simply fine-tuned, and now now we have 4 versatile open-source fashions within the Gemma 4 household and so they appear very promising on paper. So with none additional ado let’s decode and see what the hype is all about.

The Gemma Household

Gemma is a household of light-weight, open-weight massive language fashions developed by Google. It’s constructed utilizing the identical analysis and know-how that powers Google’s Gemini fashions, however designed to be extra accessible and environment friendly.

What this actually means is: Gemma fashions are supposed to run in additional sensible environments, like laptops, client GPUs and even cellular units.

They arrive in each:

- Base variations (for fine-tuning and customization)

- Instruction-tuned (IT) variations (prepared for chat and common utilization)

So these are the fashions that come beneath the umbrella of the Gemma 4 household:

- Gemma 4 E2B: With ~2B efficient parameters, it’s a multimodal mannequin optimized for edge units like smartphones.

- Gemma 4 E4B: Just like the E2B mannequin however this one comes with ~4B efficient parameters.

- Gemma 4 26B A4B: It’s a 26B parameters combination of specialists mannequin, it prompts solely 3.8B parameters (~4B lively parameters) throughout inference. Quantized variations of this mannequin can run on client GPUs.

- Gemma 4 31B: It’s a dense mannequin with 31B parameters, it’s probably the most highly effective mannequin on this lineup and it’s very effectively suited to fine-tuning functions.

The E2B and E4B fashions function a 128K context window, whereas the bigger 26B and 31B function a 256K context window.

Be aware: All of the fashions can be found each as base mannequin and ‘IT’ (instruction-tuned) mannequin.

Beneath are the benchmark scores for the Gemma 4 fashions:

Key Options of Gemma 4

- Code era: The Gemma 4 fashions can be utilized for code era, the LiveCodeBench benchmark scores look good too.

- Agentic methods: The Gemma 4 fashions can be utilized domestically inside agentic workflows, or self-hosted and built-in into production-grade methods.

- Multi-Lingual methods: These fashions are educated on over 140 languages and can be utilized to assist varied languages or translation functions.

- Superior Brokers: These fashions have a big enchancment in math and reasoning in comparison with the predecessors. They can be utilized in brokers requiring multi-step planning and considering.

- Multimodality: These fashions can inherently course of pictures, movies and audio. They are often employed for duties like OCR and speech recognition.

The way to Entry Gemma 4 by way of Hugging Face?

Gemma 4 is launched beneath Apache 2.0 license, you’ll be able to freely construct with the fashions and deploy the fashions on any setting. These fashions could be accessed utilizing Hugging Face, Ollama and Kaggle. Let’s try to check the ‘Gemma 4 26B A4B IT’ by means of the inference suppliers on Hugging Face, it will give us a greater image of the capabilities of the mannequin.

Pre-Requisite

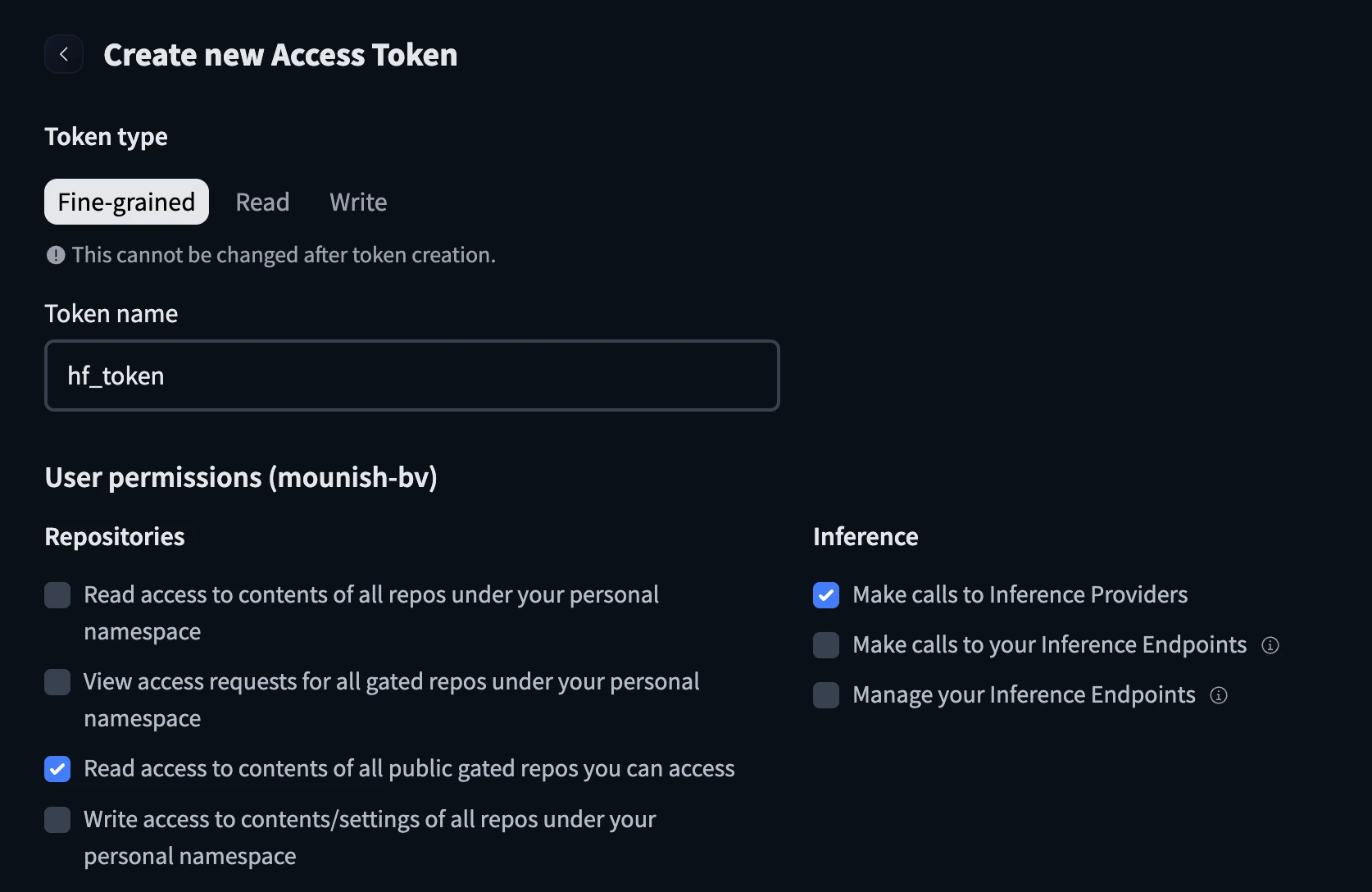

Hugging Face Token:

- Go to https://huggingface.co/settings/tokens

- Create a brand new token and configure it with the title and test the under containers earlier than creating the token.

- Maintain the cuddling face token helpful.

Python Code

I’ll be utilizing Google Colab for the demo, be at liberty to make use of what you want.

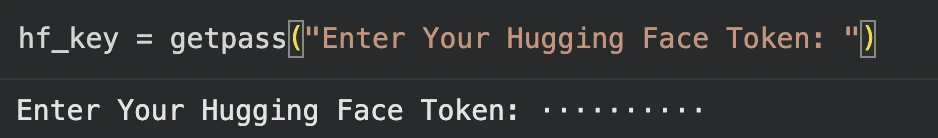

from getpass import getpass

hf_key = getpass("Enter Your Hugging Face Token: ")Paste the Hugging Face token when prompted:

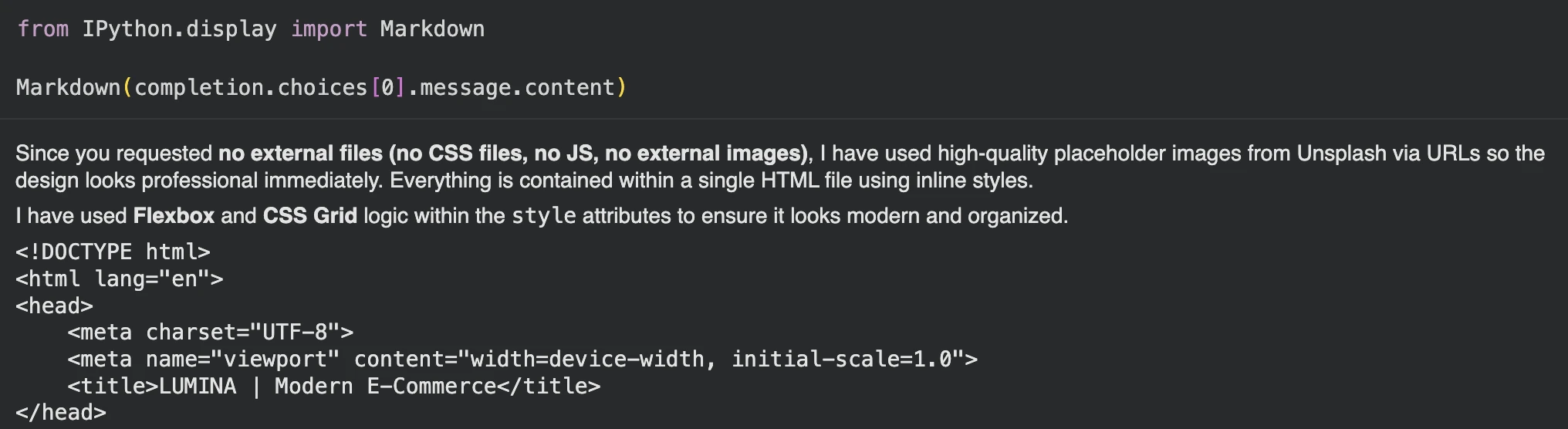

Let’s attempt to create a frontend for an e-commerce web site and see how the mannequin performs.

immediate="""Generate a contemporary, visually interesting frontend for an e-commerce web site utilizing solely HTML and inline CSS (no exterior CSS or JavaScript).

The web page ought to embrace a responsive format, navigation bar, hero banner, product grid, class part, product playing cards with pictures/costs/buttons, and a footer.

Use a clear trendy design, good spacing, and laptop-friendly format.

"""Sending request to the inference supplier:

import os

from huggingface_hub import InferenceClient

consumer = InferenceClient(

api_key=hf_key,

)

completion = consumer.chat.completions.create(

mannequin="google/gemma-4-26B-A4B-it:novita",

messages=[

{

"role": "user",

"content": [

{

"type": "text",

"text": prompt,

},

],

}

],

)

print(completion.selections[0].message)

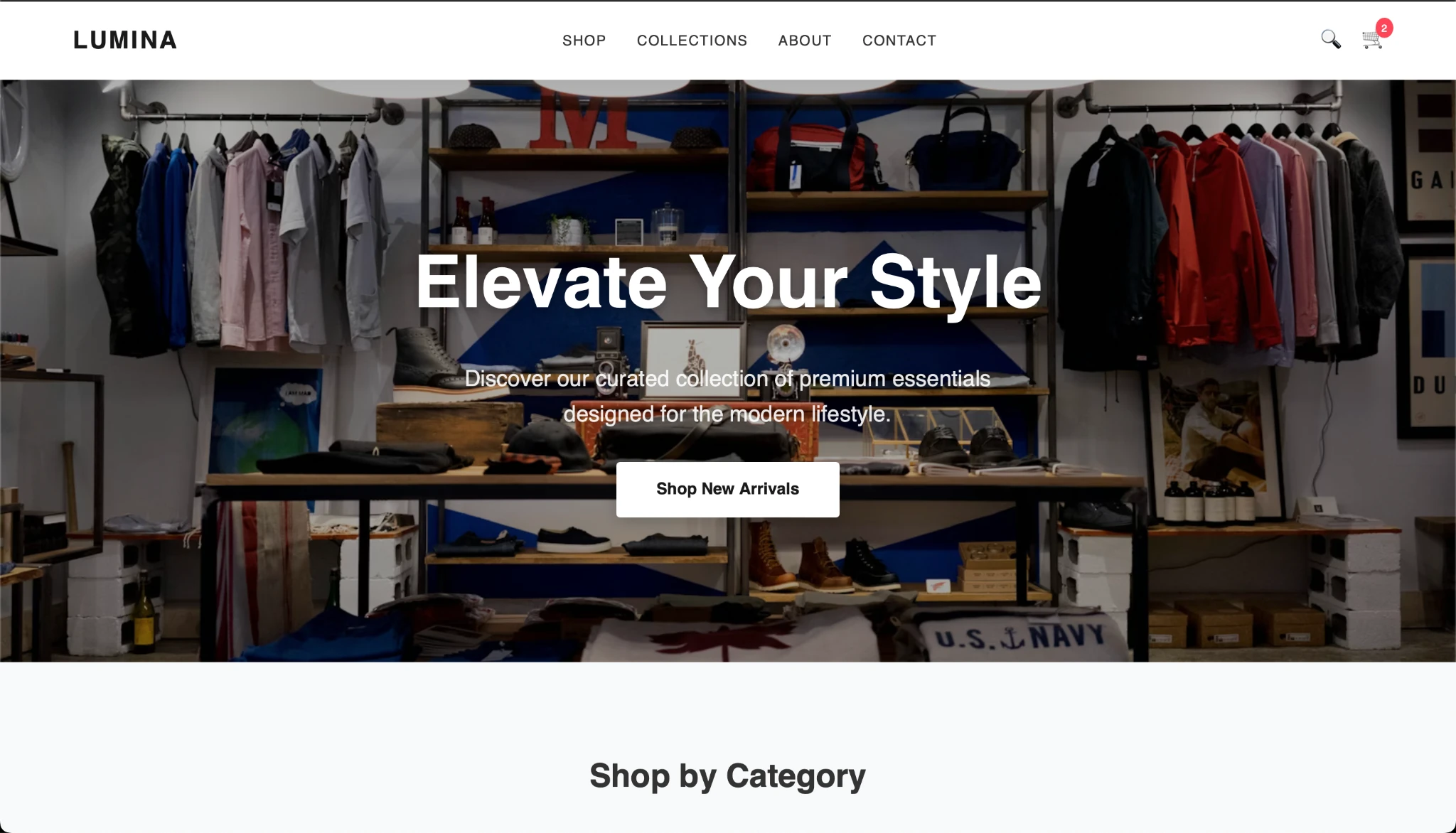

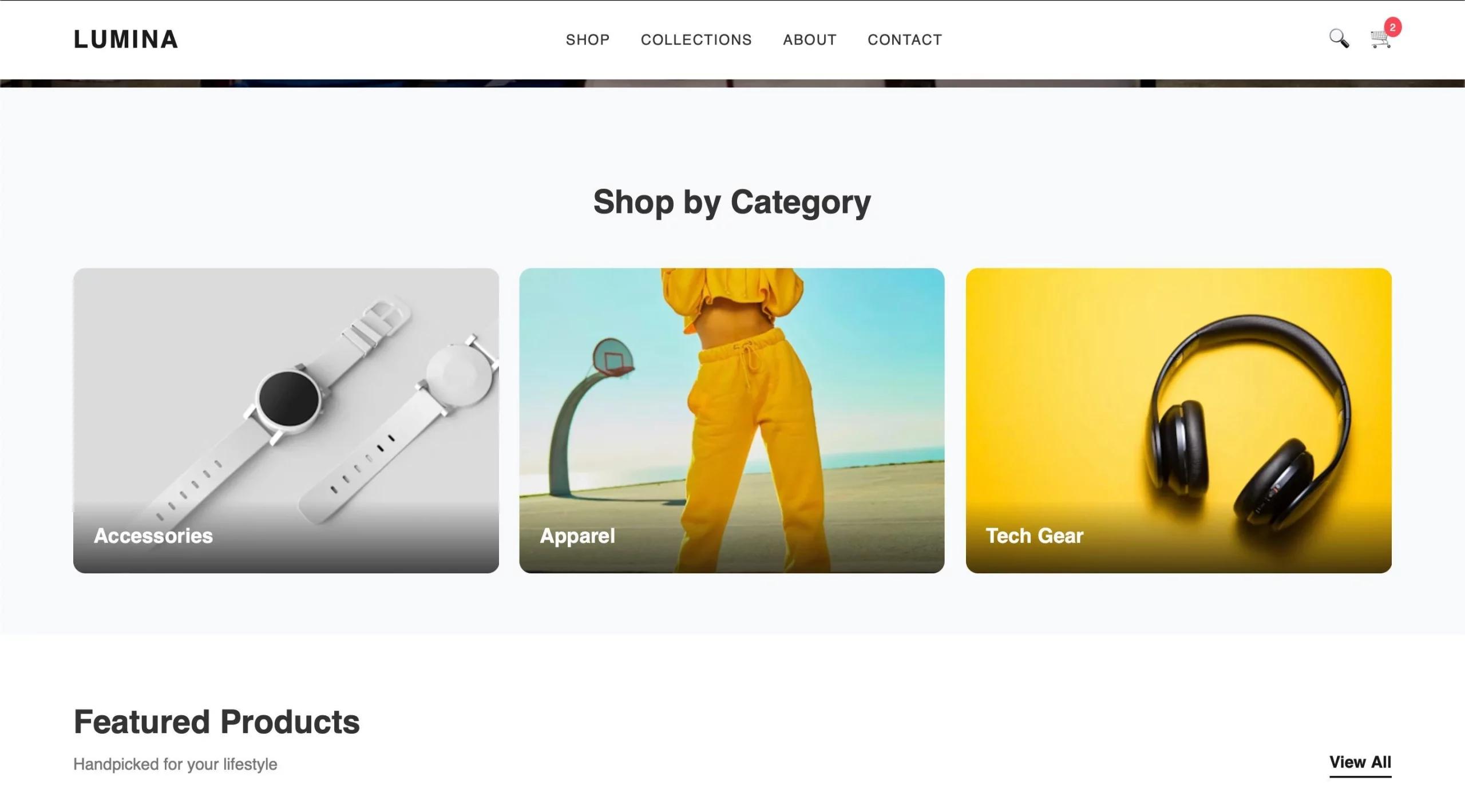

After copying the code and creating the HTML, that is the outcome I received:

The output appears to be like good and the Gemma mannequin appears to be performing effectively. What do you suppose?

Conclusion

The Gemma 4 household not solely appears to be like promising on paper however in outcomes too. With versatile capabilities and the totally different fashions constructed for various wants, the Gemma 4 fashions have gotten so many issues proper. Additionally with open-source AI getting more and more fashionable, we must always have choices to strive, check and discover the fashions that higher go well with our wants. Additionally it’ll be fascinating to see how units like mobiles, Raspberry Pi, and so on profit from the evolving memory-efficient fashions sooner or later.

Continuously Requested Questions

A. E2B means 2.3B efficient parameters. Whereas whole parameters together with embeddings attain about 5.1B.

A. Massive embedding tables are used primarily for lookup operations, in order that they improve whole parameters however not the mannequin’s efficient compute measurement.

A. Combination of Specialists prompts solely a small subset of specialised skilled networks per token, enhancing effectivity whereas sustaining excessive mannequin capability. The Gemma 4 26B is a MoE mannequin.

Login to proceed studying and revel in expert-curated content material.