You know the way, again within the day, we used easy phrase‐depend tips to symbolize textual content? Effectively, issues have come a great distance since then. Now, once we speak concerning the evolution of embeddings, we imply numerical snapshots that seize not simply which phrases seem however what they actually imply, how they relate to one another in context, and even how they tie into pictures and different media. Embeddings energy every part from serps that perceive your intent to suggestion techniques that appear to learn your thoughts. They’re on the coronary heart of slicing‐edge AI and machine‐studying functions, too. So, let’s take a stroll by way of this evolution from uncooked counts to semantic vectors, exploring how every strategy works, what it brings to the desk, and the place it falls quick.

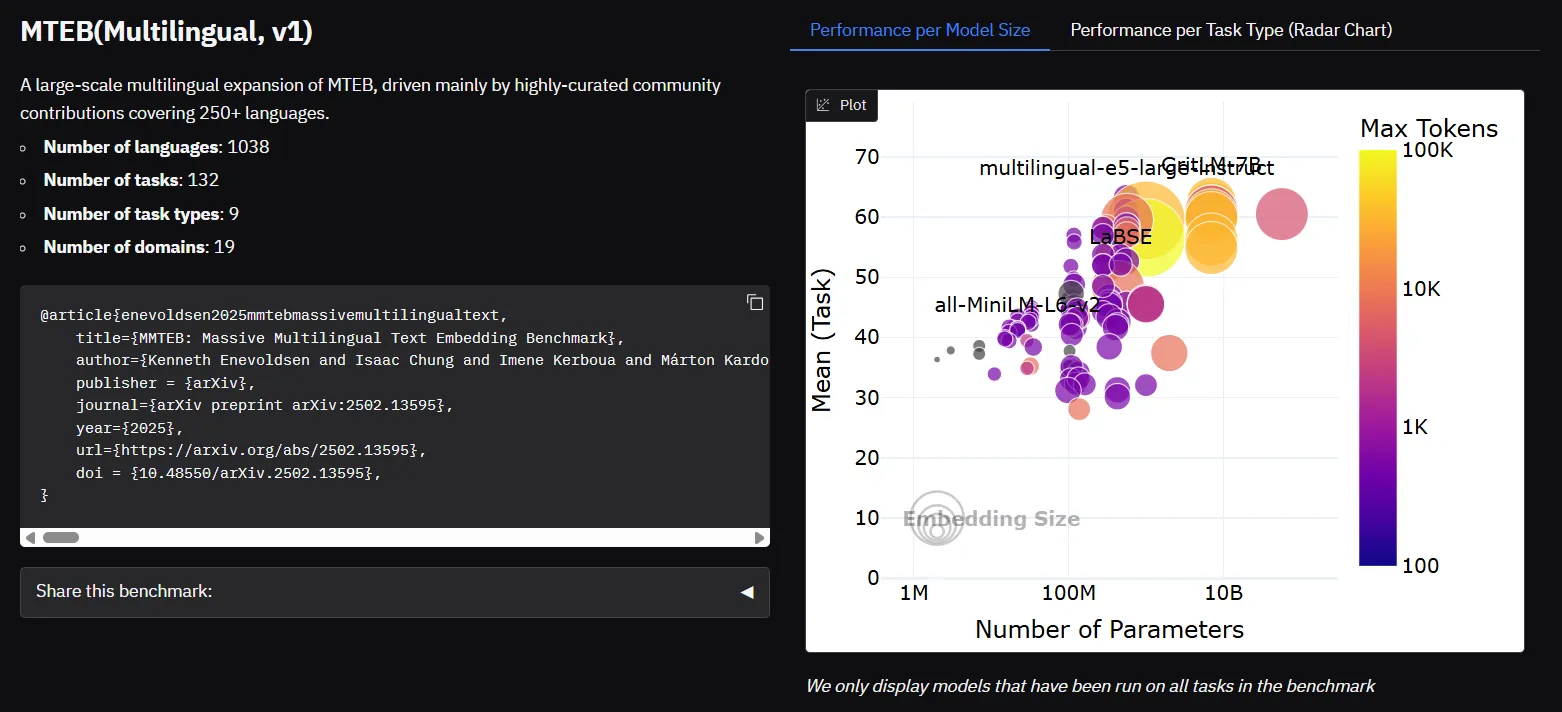

Rating of Embeddings in MTEB Leaderboards

Most fashionable LLMs generate embeddings as intermediate outputs of their architectures. These may be extracted and fine-tuned for varied downstream duties, making LLM-based embeddings some of the versatile instruments accessible immediately.

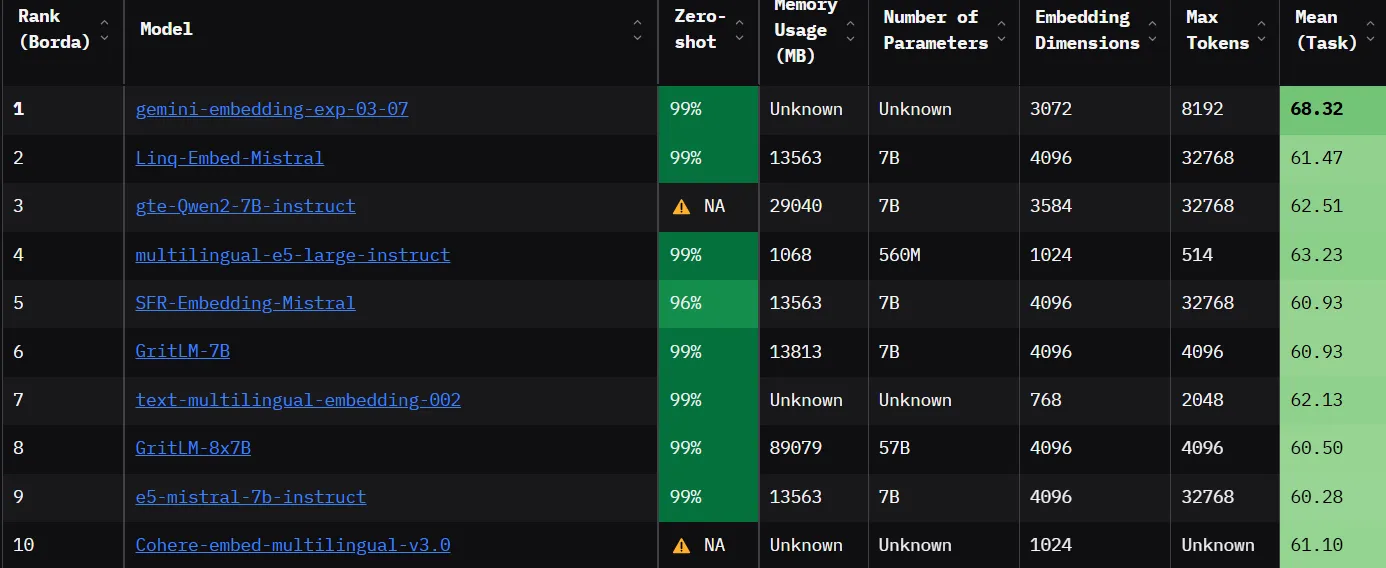

To maintain up with the fast-moving panorama, platforms like Hugging Face have launched sources just like the Huge Textual content Embedding Benchmark (MTEB) Leaderboard. This leaderboard ranks embedding fashions based mostly on their efficiency throughout a variety of duties, together with classification, clustering, retrieval, and extra. That is considerably serving to practitioners determine the perfect fashions for his or her use instances.

Armed with these leaderboard insights, let’s roll up our sleeves and dive into the vectorization toolbox – depend vectors, TF–IDF, and different traditional strategies, which nonetheless function the important constructing blocks for immediately’s refined embeddings.

1. Depend Vectorization

Depend Vectorization is likely one of the easiest strategies for representing textual content. It emerged from the necessity to convert uncooked textual content into numerical kind in order that machine studying fashions might course of it. On this technique, every doc is reworked right into a vector that displays the depend of every phrase showing in it. This easy strategy laid the groundwork for extra advanced representations and continues to be helpful in eventualities the place interpretability is vital.

How It Works

- Mechanism:

- The textual content corpus is first tokenized into phrases. A vocabulary is constructed from all distinctive tokens.

- Every doc is represented as a vector the place every dimension corresponds to the phrase’s respective vector within the vocabulary.

- The worth in every dimension is solely the frequency or depend of a sure phrase within the doc.

- Instance: For a vocabulary [“apple“, “banana“, “cherry“], the doc “apple apple cherry” turns into [2, 0, 1].

- Extra Element: Depend Vectorization serves as the muse for a lot of different approaches. Its simplicity doesn’t seize any contextual or semantic info, nevertheless it stays a vital preprocessing step in lots of NLP pipelines.

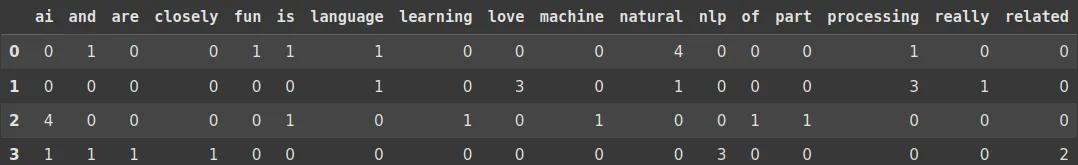

Code Implementation

from sklearn.feature_extraction.textual content import CountVectorizer

import pandas as pd

# Pattern textual content paperwork with repeated phrases

paperwork = [

"Natural Language Processing is fun and natural natural natural",

"I really love love love Natural Language Processing Processing Processing",

"Machine Learning is a part of AI AI AI AI",

"AI and NLP NLP NLP are closely related related"

]

# Initialize CountVectorizer

vectorizer = CountVectorizer()

# Match and remodel the textual content knowledge

X = vectorizer.fit_transform(paperwork)

# Get characteristic names (distinctive phrases)

feature_names = vectorizer.get_feature_names_out()

# Convert to DataFrame for higher visualization

df = pd.DataFrame(X.toarray(), columns=feature_names)

# Print the matrix

print(df)Output:

Advantages

- Simplicity and Interpretability: Straightforward to implement and perceive.

- Deterministic: Produces a hard and fast illustration that’s simple to research.

Shortcomings

- Excessive Dimensionality and Sparsity: Vectors are sometimes giant and principally zero, resulting in inefficiencies.

- Lack of Semantic Context: Doesn’t seize which means or relationships between phrases.

2. One-Scorching Encoding

One-hot encoding is likely one of the earliest approaches to representing phrases as vectors. Developed alongside early digital computing strategies within the Nineteen Fifties and Nineteen Sixties, it transforms categorical knowledge, equivalent to phrases, into binary vectors. Every phrase is represented uniquely, making certain that no two phrases share comparable representations, although this comes on the expense of capturing semantic similarity.

How It Works

- Mechanism:

- Each phrase within the vocabulary is assigned a vector whose size equals the scale of the vocabulary.

- In every vector, all components are 0 aside from a single 1 within the place similar to that phrase.

- Instance: With a vocabulary [“apple“, “banana“, “cherry“], the phrase “banana” is represented as [0, 1, 0].

- Extra Element: One-hot vectors are fully orthogonal, which implies that the cosine similarity between two totally different phrases is zero. This strategy is easy and unambiguous however fails to seize any similarity (e.g., “apple” and “orange” seem equally dissimilar to “apple” and “automobile”).

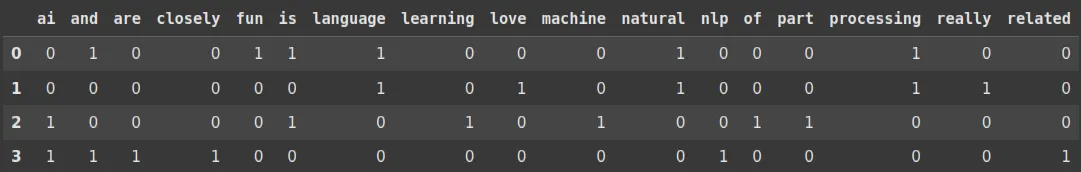

Code Implementation

from sklearn.feature_extraction.textual content import CountVectorizer

import pandas as pd

# Pattern textual content paperwork

paperwork = [

"Natural Language Processing is fun and natural natural natural",

"I really love love love Natural Language Processing Processing Processing",

"Machine Learning is a part of AI AI AI AI",

"AI and NLP NLP NLP are closely related related"

]

# Initialize CountVectorizer with binary=True for One-Scorching Encoding

vectorizer = CountVectorizer(binary=True)

# Match and remodel the textual content knowledge

X = vectorizer.fit_transform(paperwork)

# Get characteristic names (distinctive phrases)

feature_names = vectorizer.get_feature_names_out()

# Convert to DataFrame for higher visualization

df = pd.DataFrame(X.toarray(), columns=feature_names)

# Print the one-hot encoded matrix

print(df)Output:

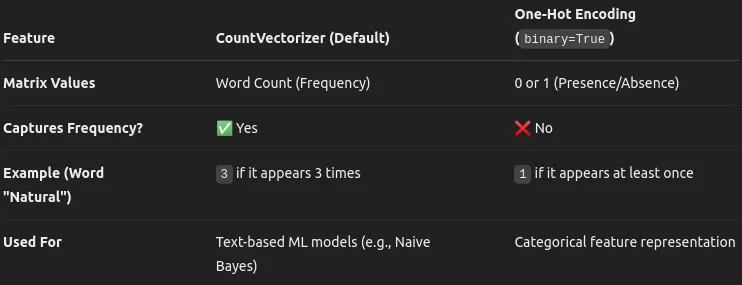

So, mainly, you may view the distinction between Depend Vectorizer and One Scorching Encoding. Depend Vectorizer counts what number of instances a sure phrase exists in a sentence, whereas One Scorching Encoding labels the phrase as 1 if it exists in a sure sentence/doc.

When to Use What?

- Use CountVectorizer when the variety of instances a phrase seems is necessary (e.g., spam detection, doc similarity).

- Use One-Scorching Encoding if you solely care about whether or not a phrase seems no less than as soon as (e.g., categorical characteristic encoding for ML fashions).

Advantages

- Readability and Uniqueness: Every phrase has a definite and non-overlapping illustration

- Simplicity: Straightforward to implement with minimal computational overhead for small vocabularies.

Shortcomings

- Inefficiency with Giant Vocabularies: Vectors grow to be extraordinarily high-dimensional and sparse.

- No Semantic Similarity: Doesn’t permit for any relationships between phrases; all non-identical phrases are equally distant.

3. TF-IDF (Time period Frequency-Inverse Doc Frequency)

TF-IDF was developed to enhance upon uncooked depend strategies by counting phrase occurrences and weighing phrases based mostly on their general significance in a corpus. Launched within the early Seventies, TF-IDF is a cornerstone in info retrieval techniques and textual content mining functions. It helps spotlight phrases which are important in particular person paperwork whereas downplaying phrases which are frequent throughout all paperwork.

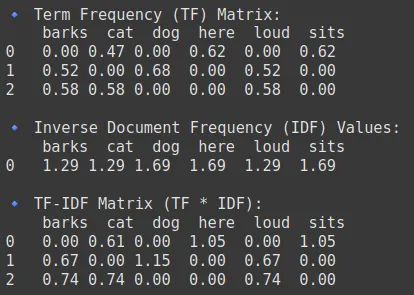

How It Works

- Mechanism:

- Time period Frequency (TF): Measures how usually a phrase seems in a doc.

- Inverse Doc Frequency (IDF): Scales the significance of a phrase by contemplating how frequent or uncommon it’s throughout all paperwork.

- The ultimate TF-IDF rating is the product of TF and IDF.

- Instance: Frequent phrases like “the” obtain low scores, whereas extra distinctive phrases obtain larger scores, making them stand out in doc evaluation. Therefore, we usually omit the frequent phrases, that are additionally referred to as Stopwords, in NLP duties.

- Extra Element: TF-IDF transforms uncooked frequency counts right into a measure that may successfully differentiate between necessary key phrases and generally used phrases. It has grow to be an ordinary technique in serps and doc clustering.

Code Implementation

from sklearn.feature_extraction.textual content import TfidfVectorizer

import pandas as pd

import numpy as np

# Pattern quick sentences

paperwork = [

"cat sits here",

"dog barks loud",

"cat barks loud"

]

# Initialize TfidfVectorizer to get each TF and IDF

vectorizer = TfidfVectorizer()

# Match and remodel the textual content knowledge

X = vectorizer.fit_transform(paperwork)

# Extract characteristic names (distinctive phrases)

feature_names = vectorizer.get_feature_names_out()

# Get TF matrix (uncooked time period frequencies)

tf_matrix = X.toarray()

# Compute IDF values manually

idf_values = vectorizer.idf_

# Compute TF-IDF manually (TF * IDF)

tfidf_matrix = tf_matrix * idf_values

# Convert to DataFrames for higher visualization

df_tf = pd.DataFrame(tf_matrix, columns=feature_names)

df_idf = pd.DataFrame([idf_values], columns=feature_names)

df_tfidf = pd.DataFrame(tfidf_matrix, columns=feature_names)

# Print tables

print("n🔹 Time period Frequency (TF) Matrix:n", df_tf)

print("n🔹 Inverse Doc Frequency (IDF) Values:n", df_idf)

print("n🔹 TF-IDF Matrix (TF * IDF):n", df_tfidf)Output:

Advantages

- Enhanced Phrase Significance: Emphasizes content-specific phrases.

- Reduces Dimensionality: Filters out frequent phrases that add little worth.

Shortcomings

- Sparse Illustration: Regardless of weighting, the ensuing vectors are nonetheless sparse.

- Lack of Context: Doesn’t seize phrase order or deeper semantic relationships.

Additionally Learn: Implementing Depend Vectorizer and TF-IDF in NLP utilizing PySpark

4. Okapi BM25

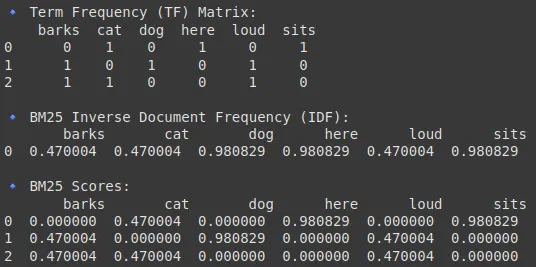

Okapi BM25, developed within the Nineteen Nineties, is a probabilistic mannequin designed primarily for rating paperwork in info retrieval techniques quite than as an embedding technique per se. BM25 is an enhanced model of TF-IDF, generally utilized in serps and knowledge retrieval. It improves upon TF-IDF by contemplating doc size normalization and saturation of time period frequency (i.e., diminishing returns for repeated phrases).

How It Works

- Mechanism:

- Probabilistic Framework: This framework estimates the relevance of a doc based mostly on the frequency of question phrases, adjusted by doc size.

- Makes use of parameters to manage the affect of time period frequency and to dampen the impact of very excessive counts.

Right here we can be wanting into the BM25 scoring mechanism:

BM25 introduces two parameters, k1 and b, which permit fine-tuning of the time period frequency saturation and the size normalization, respectively. These parameters are essential for optimizing the BM25 algorithm’s efficiency in varied search contexts.

- Instance: BM25 assigns larger relevance scores to paperwork that comprise uncommon question phrases with reasonable frequency whereas adjusting for doc size and vice versa.

- Extra Element: Though BM25 doesn’t produce vector embeddings, it has deeply influenced textual content retrieval techniques by bettering upon the shortcomings of TF-IDF in rating paperwork.

Code Implementation

import numpy as np

import pandas as pd

from sklearn.feature_extraction.textual content import CountVectorizer

# Pattern paperwork

paperwork = [

"cat sits here",

"dog barks loud",

"cat barks loud"

]

# Compute Time period Frequency (TF) utilizing CountVectorizer

vectorizer = CountVectorizer()

X = vectorizer.fit_transform(paperwork)

tf_matrix = X.toarray()

feature_names = vectorizer.get_feature_names_out()

# Compute Inverse Doc Frequency (IDF) for BM25

N = len(paperwork) # Whole variety of paperwork

df = np.sum(tf_matrix > 0, axis=0) # Doc Frequency (DF) for every time period

idf = np.log((N - df + 0.5) / (df + 0.5) + 1) # BM25 IDF system

# Compute BM25 scores

k1 = 1.5 # Smoothing parameter

b = 0.75 # Size normalization parameter

avgdl = np.imply([len(doc.split()) for doc in documents]) # Common doc size

doc_lengths = np.array([len(doc.split()) for doc in documents])

bm25_matrix = np.zeros_like(tf_matrix, dtype=np.float64)

for i in vary(N): # For every doc

for j in vary(len(feature_names)): # For every time period

term_freq = tf_matrix[i, j]

num = term_freq * (k1 + 1)

denom = term_freq + k1 * (1 - b + b * (doc_lengths[i] / avgdl))

bm25_matrix[i, j] = idf[j] * (num / denom)

# Convert to DataFrame for higher visualization

df_tf = pd.DataFrame(tf_matrix, columns=feature_names)

df_idf = pd.DataFrame([idf], columns=feature_names)

df_bm25 = pd.DataFrame(bm25_matrix, columns=feature_names)

# Show the outcomes

print("n🔹 Time period Frequency (TF) Matrix:n", df_tf)

print("n🔹 BM25 Inverse Doc Frequency (IDF):n", df_idf)

print("n🔹 BM25 Scores:n", df_bm25)Output:

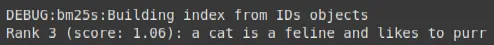

Code Implementation (Information Retrieval)

!pip set up bm25s

import bm25s

# Create your corpus right here

corpus = [

"a cat is a feline and likes to purr",

"a dog is the human's best friend and loves to play",

"a bird is a beautiful animal that can fly",

"a fish is a creature that lives in water and swims",

]

# Create the BM25 mannequin and index the corpus

retriever = bm25s.BM25(corpus=corpus)

retriever.index(bm25s.tokenize(corpus))

# Question the corpus and get top-k outcomes

question = "does the fish purr like a cat?"

outcomes, scores = retriever.retrieve(bm25s.tokenize(question), ok=2)

# Let's have a look at what we received!

doc, rating = outcomes[0, 0], scores[0, 0]

print(f"Rank {i+1} (rating: {rating:.2f}): {doc}")Output:

Advantages

- Improved Relevance Rating: Higher handles doc size and time period saturation.

- Broadly Adopted: Commonplace in lots of fashionable serps and IR techniques.

Shortcomings

- Not a True Embedding: It scores paperwork quite than producing a steady vector area illustration.

- Parameter Sensitivity: Requires cautious tuning for optimum efficiency.

Additionally Learn: How one can Create NLP Search Engine With BM25?

5. Word2Vec (CBOW and Skip-gram)

Launched by Google in 2013, Word2Vec revolutionized NLP by studying dense, low-dimensional vector representations of phrases. It moved past counting and weighting by coaching shallow neural networks that seize semantic and syntactic relationships based mostly on phrase context. Word2Vec is available in two flavors: Steady Bag-of-Phrases (CBOW) and Skip-gram.

How It Works

- CBOW (Steady Bag-of-Phrases):

- Mechanism: Predicts a goal phrase based mostly on the encompassing context phrases.

- Course of: Takes a number of context phrases (ignoring the order) and learns to foretell the central phrase.

- Skip-gram:

- Mechanism: Makes use of the goal phrase to foretell its surrounding context phrases.

- Course of: Notably efficient for studying representations of uncommon phrases by specializing in their contexts.

- Extra Element: Each architectures use a neural community with one hidden layer and make use of optimization tips equivalent to unfavorable sampling or hierarchical softmax to handle computational complexity. The ensuing embeddings seize nuanced semantic relationships as an example, “king” minus “man” plus “lady” approximates “queen.”

Code Implementation

!pip set up numpy==1.24.3

from gensim.fashions import Word2Vec

import networkx as nx

import matplotlib.pyplot as plt

# Pattern corpus

sentences = [

["I", "love", "deep", "learning"],

["Natural", "language", "processing", "is", "fun"],

["Word2Vec", "is", "a", "great", "tool"],

["AI", "is", "the", "future"],

]

# Practice Word2Vec fashions

cbow_model = Word2Vec(sentences, vector_size=10, window=2, min_count=1, sg=0) # CBOW

skipgram_model = Word2Vec(sentences, vector_size=10, window=2, min_count=1, sg=1) # Skip-gram

# Get phrase vectors

phrase = "is"

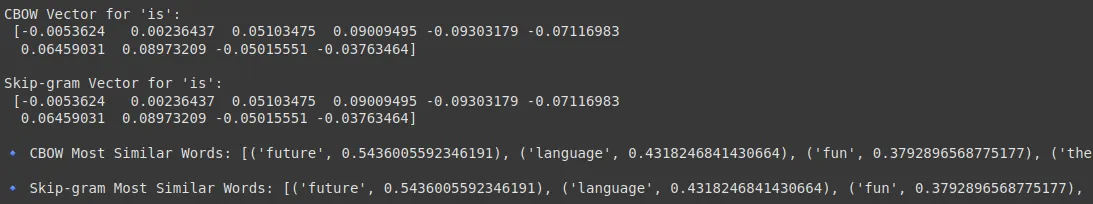

print(f"CBOW Vector for '{phrase}':n", cbow_model.wv[word])

print(f"nSkip-gram Vector for '{phrase}':n", skipgram_model.wv[word])

# Get most comparable phrases

print("n🔹 CBOW Most Related Phrases:", cbow_model.wv.most_similar(phrase))

print("n🔹 Skip-gram Most Related Phrases:", skipgram_model.wv.most_similar(phrase))

Output:

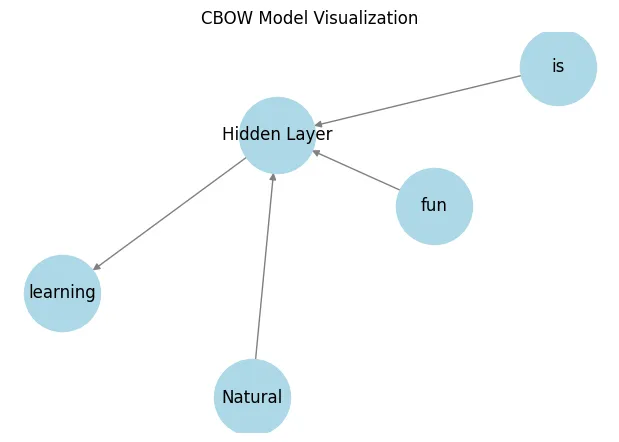

Visualizing the CBOW and Skip-gram:

def visualize_cbow():

G = nx.DiGraph()

# Nodes

context_words = ["Natural", "is", "fun"]

target_word = "studying"

for phrase in context_words:

G.add_edge(phrase, "Hidden Layer")

G.add_edge("Hidden Layer", target_word)

# Draw the community

pos = nx.spring_layout(G)

plt.determine(figsize=(6, 4))

nx.draw(G, pos, with_labels=True, node_size=3000, node_color="lightblue", edge_color="grey")

plt.title("CBOW Mannequin Visualization")

plt.present()

visualize_cbow()Output:

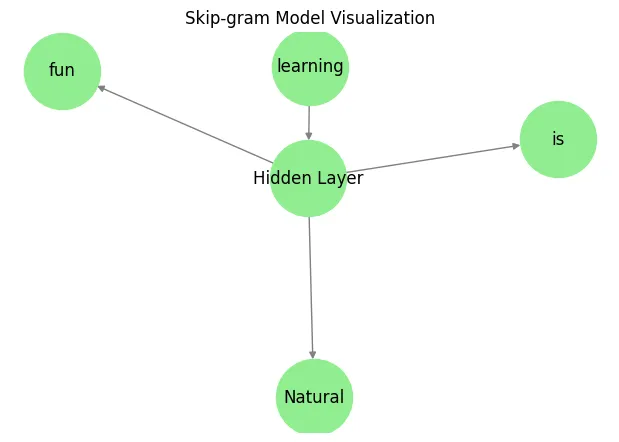

def visualize_skipgram():

G = nx.DiGraph()

# Nodes

target_word = "studying"

context_words = ["Natural", "is", "fun"]

G.add_edge(target_word, "Hidden Layer")

for phrase in context_words:

G.add_edge("Hidden Layer", phrase)

# Draw the community

pos = nx.spring_layout(G)

plt.determine(figsize=(6, 4))

nx.draw(G, pos, with_labels=True, node_size=3000, node_color="lightgreen", edge_color="grey")

plt.title("Skip-gram Mannequin Visualization")

plt.present()

visualize_skipgram()Output:

Advantages

- Semantic Richness: Learns significant relationships between phrases.

- Environment friendly Coaching: Might be skilled on giant corpora comparatively rapidly.

- Dense Representations: Makes use of low-dimensional, steady vectors that facilitate downstream processing.

Shortcomings

- Static Representations: Offers one embedding per phrase no matter context.

- Context Limitations: Can’t disambiguate polysemous phrases which have totally different meanings in numerous contexts.

To learn extra about Word2Vec learn this weblog.

6. GloVe (International Vectors for Phrase Illustration)

GloVe, developed at Stanford in 2014, builds on the concepts of Word2Vec by combining international co-occurrence statistics with native context info. It was designed to provide phrase embeddings that seize general corpus-level statistics, providing improved consistency throughout totally different contexts.

How It Works

- Mechanism:

- Co-occurrence Matrix: Constructs a matrix capturing how steadily pairs of phrases seem collectively throughout the complete corpus.

This logic of Co-occurence matrices are additionally broadly utilized in Laptop Imaginative and prescient too, particularly underneath the subject of GLCM(Grey-Stage Co-occurrence Matrix). It’s a statistical technique utilized in picture processing and pc imaginative and prescient for texture evaluation that considers the spatial relationship between pixels.

- Matrix Factorization: Factorizes this matrix to derive phrase vectors that seize international statistical info.

- Co-occurrence Matrix: Constructs a matrix capturing how steadily pairs of phrases seem collectively throughout the complete corpus.

- Extra Element:

In contrast to Word2Vec’s purely predictive mannequin, GloVe’s strategy permits the mannequin to be taught the ratios of phrase co-occurrences, which some research have discovered to be extra sturdy in capturing semantic similarities and analogies.

Code Implementation

import numpy as np

# Load pre-trained GloVe embeddings

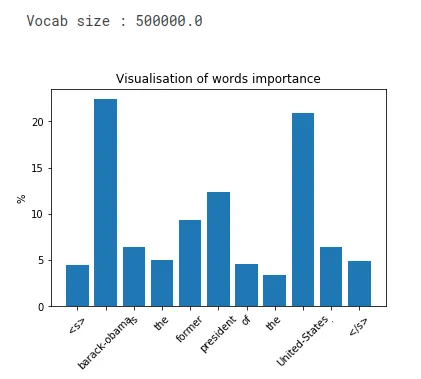

glove_model = api.load("glove-wiki-gigaword-50") # You need to use "glove-twitter-25", "glove-wiki-gigaword-100", and so forth.

# Instance phrases

phrase = "king"

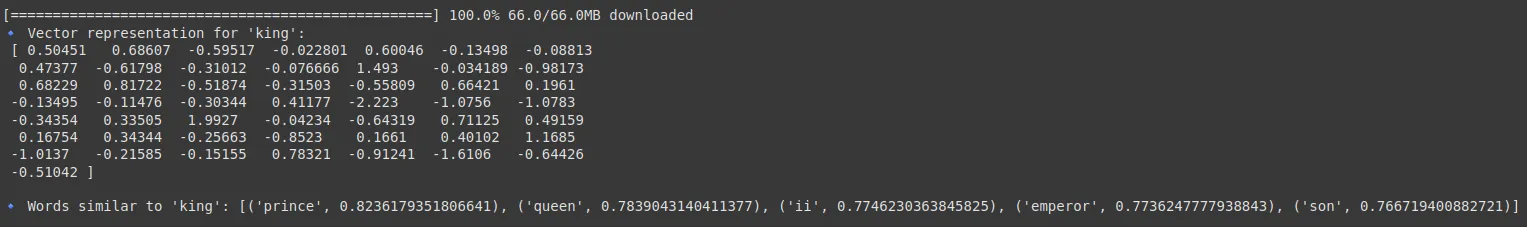

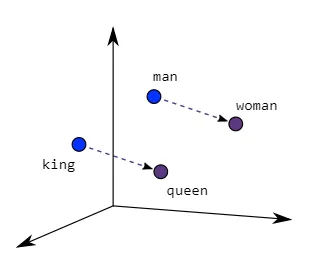

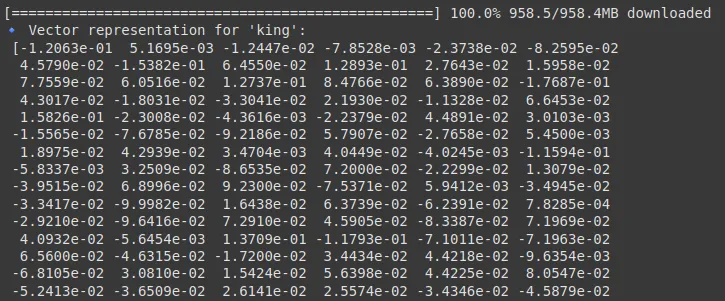

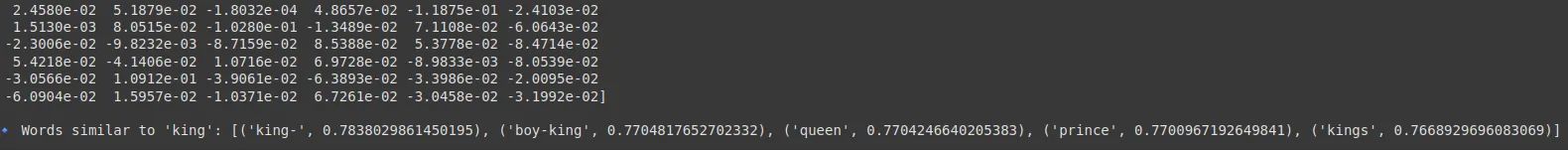

print(f"🔹 Vector illustration for '{phrase}':n", glove_model[word])

# Discover comparable phrases

similar_words = glove_model.most_similar(phrase, topn=5)

print("n🔹 Phrases just like 'king':", similar_words)

word1 = "king"

word2 = "queen"

similarity = glove_model.similarity(word1, word2)

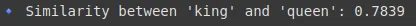

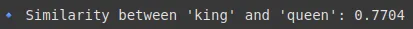

print(f"🔹 Similarity between '{word1}' and '{word2}': {similarity:.4f}")Output:

This picture will show you how to perceive how this similarity seems like when plotted:

Do seek advice from this for extra in-depth info.

Advantages

- International Context Integration: Makes use of total corpus statistics to enhance illustration.

- Stability: Usually yields extra constant embeddings throughout totally different contexts.

Shortcomings

- Useful resource Demanding: Constructing and factorizing giant matrices may be computationally costly.

- Static Nature: Much like Word2Vec, it doesn’t generate context-dependent embeddings.

GloVe learns embeddings from phrase co-occurrence matrices.

7. FastText

FastText, launched by Fb in 2016, extends Word2Vec by incorporating subword (character n-gram) info. This innovation helps the mannequin deal with uncommon phrases and morphologically wealthy languages by breaking phrases down into smaller items, thereby capturing inside construction.

How It Works

- Mechanism:

- Subword Modeling: Represents every phrase as a sum of its character n-gram vectors.

- Embedding Studying: Trains a mannequin that makes use of these subword vectors to provide a last phrase embedding.

- Extra Element:

This technique is especially helpful for languages with wealthy morphology and for coping with out-of-vocabulary phrases. By decomposing phrases, FastText can generalize higher throughout comparable phrase types and misspellings.

Code Implementation

import gensim.downloader as api

fasttext_model = api.load("fasttext-wiki-news-subwords-300")

# Instance phrase

phrase = "king"

print(f"🔹 Vector illustration for '{phrase}':n", fasttext_model[word])

# Discover comparable phrases

similar_words = fasttext_model.most_similar(phrase, topn=5)

print("n🔹 Phrases just like 'king':", similar_words)

word1 = "king"

word2 = "queen"

similarity = fasttext_model.similarity(word1, word2)

print(f"🔹 Similarity between '{word1}' and '{word2}': {similarity:.4f}")Output:

Advantages

- Dealing with OOV(Out of Vocabulary) Phrases: Improves efficiency when phrases are rare or unseen. Can say that the check dataset has some labels which don’t exist in our practice dataset.

- Morphological Consciousness: Captures the interior construction of phrases.

Shortcomings

- Elevated Complexity: The inclusion of subword info provides to computational overhead.

- Nonetheless Static or Fastened: Regardless of the enhancements, FastText doesn’t alter embeddings based mostly on a sentence’s surrounding context.

8. Doc2Vec

Doc2Vec extends Word2Vec’s concepts to bigger our bodies of textual content, equivalent to sentences, paragraphs, or total paperwork. Launched in 2014, it gives a way to acquire fixed-length vector representations for variable-length texts, enabling more practical doc classification, clustering, and retrieval.

How It Works

- Mechanism:

- Distributed Reminiscence (DM) Mannequin: Augments the Word2Vec structure by including a novel doc vector that, together with context phrases, predicts a goal phrase.

- Distributed Bag-of-Phrases (DBOW) Mannequin: Learns doc vectors by predicting phrases randomly sampled from the doc.

- Extra Element:

These fashions be taught document-level embeddings that seize the general semantic content material of the textual content. They’re particularly helpful for duties the place the construction and theme of the complete doc are necessary.

Code Implementation

import gensim

from gensim.fashions.doc2vec import Doc2Vec, TaggedDocument

import nltk

nltk.obtain('punkt_tab')

# Pattern paperwork

paperwork = [

"Machine learning is amazing",

"Natural language processing enables AI to understand text",

"Deep learning advances artificial intelligence",

"Word embeddings improve NLP tasks",

"Doc2Vec is an extension of Word2Vec"

]

# Tokenize and tag paperwork

tagged_data = [TaggedDocument(words=nltk.word_tokenize(doc.lower()), tags=[str(i)]) for i, doc in enumerate(paperwork)]

# Print tagged knowledge

print(tagged_data)

# Outline mannequin parameters

mannequin = Doc2Vec(vector_size=50, window=2, min_count=1, employees=4, epochs=100)

# Construct vocabulary

mannequin.build_vocab(tagged_data)

# Practice the mannequin

mannequin.practice(tagged_data, total_examples=mannequin.corpus_count, epochs=mannequin.epochs)

# Take a look at a doc by producing its vector

test_doc = "Synthetic intelligence makes use of machine studying"

test_vector = mannequin.infer_vector(nltk.word_tokenize(test_doc.decrease()))

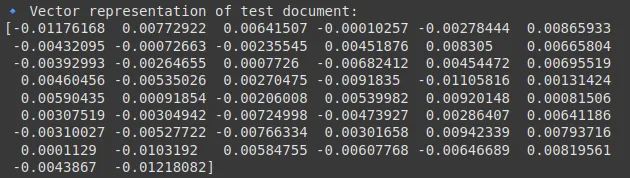

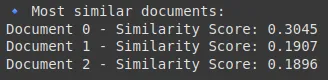

print(f"🔹 Vector illustration of check doc:n{test_vector}")

# Discover most comparable paperwork to the check doc

similar_docs = mannequin.dv.most_similar([test_vector], topn=3)

print("🔹 Most comparable paperwork:")

for tag, rating in similar_docs:

print(f"Doc {tag} - Similarity Rating: {rating:.4f}")Output:

Advantages

- Doc-Stage Illustration: Successfully captures thematic and contextual info of bigger texts.

- Versatility: Helpful in a wide range of duties, from suggestion techniques to clustering and summarization.

Shortcomings

- Coaching Sensitivity: Requires important knowledge and cautious tuning to provide high-quality docent vectors.

- Static Embeddings: Every doc is represented by one vector whatever the inside variability of content material.

9. InferSent

InferSent, developed by Fb in 2017, was designed to generate high-quality sentence embeddings by way of supervised studying on pure language inference (NLI) datasets. It goals to seize semantic nuances on the sentence stage, making it extremely efficient for duties like semantic similarity and textual entailment.

How It Works

- Mechanism:

- Supervised Coaching: Makes use of labeled NLI knowledge to be taught sentence representations that mirror the logical relationships between sentences.

- Bidirectional LSTMs: Employs recurrent neural networks that course of sentences from each instructions to seize context.

- Extra Element:

The mannequin leverages supervised understanding to refine embeddings in order that semantically comparable sentences are nearer collectively within the vector area, vastly enhancing efficiency on duties like sentiment evaluation and paraphrase detection.

Code Implementation

You’ll be able to comply with this Kaggle Pocket book to implement this.

Output:

Advantages

- Wealthy Semantic Capturing: Offers deep, contextually nuanced sentence representations.

- Process-Optimized: Excels at capturing relationships required for semantic inference duties.

Shortcomings

- Dependence on Labeled Knowledge: Requires extensively annotated datasets for coaching.

- Computationally Intensive: Extra resource-demanding than unsupervised strategies.

10. Common Sentence Encoder (USE)

The Common Sentence Encoder (USE) is a mannequin developed by Google to create high-quality, general-purpose sentence embeddings. Launched in 2018, USE has been designed to work effectively throughout a wide range of NLP duties with minimal fine-tuning, making it a flexible instrument for functions starting from semantic search to textual content classification.

How It Works

- Mechanism:

- Structure Choices: USE may be applied utilizing Transformer architectures or Deep Averaging Networks (DANs) to encode sentences.

- Pretraining: Skilled on giant, various datasets to seize broad language patterns, it maps sentences right into a fixed-dimensional area.

- Extra Element:

USE gives sturdy embeddings throughout domains and duties, making it a superb “out-of-the-box” answer. Its design balances efficiency and effectivity, providing high-level embeddings with out the necessity for intensive task-specific tuning.

Code Implementation

import tensorflow_hub as hub

import tensorflow as tf

import numpy as np

# Load the mannequin (this will take a couple of seconds on first run)

embed = hub.load("https://tfhub.dev/google/universal-sentence-encoder/4")

print("✅ USE mannequin loaded efficiently!")

# Pattern sentences

sentences = [

"Machine learning is fun.",

"Artificial intelligence and machine learning are related.",

"I love playing football.",

"Deep learning is a subset of machine learning."

]

# Get sentence embeddings

embeddings = embed(sentences)

# Convert to NumPy for simpler manipulation

embeddings_np = embeddings.numpy()

# Show form and first vector

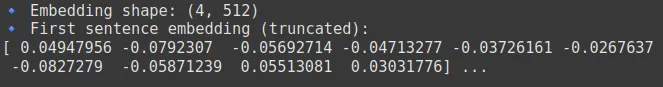

print(f"🔹 Embedding form: {embeddings_np.form}")

print(f"🔹 First sentence embedding (truncated):n{embeddings_np[0][:10]} ...")

from sklearn.metrics.pairwise import cosine_similarity

# Compute pairwise cosine similarities

similarity_matrix = cosine_similarity(embeddings_np)

# Show similarity matrix

import pandas as pd

similarity_df = pd.DataFrame(similarity_matrix, index=sentences, columns=sentences)

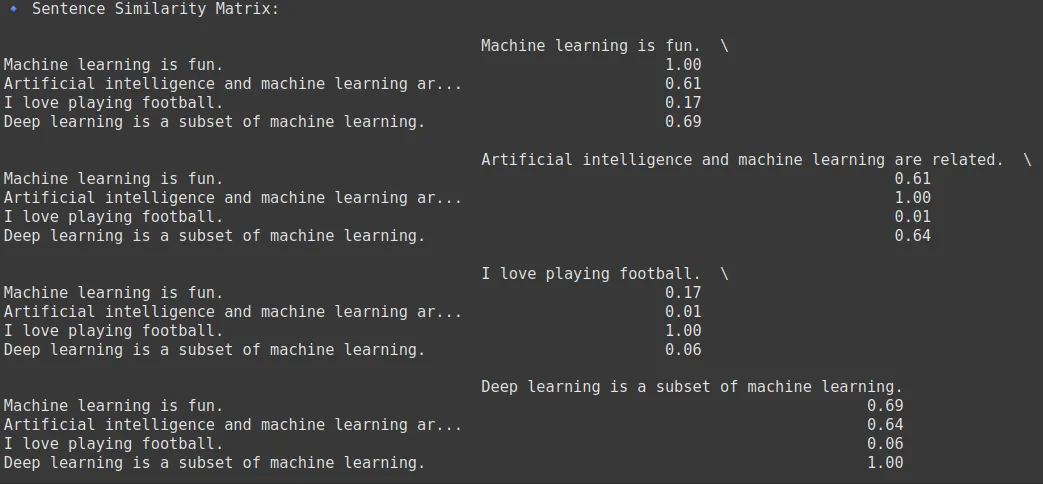

print("🔹 Sentence Similarity Matrix:n")

print(similarity_df.spherical(2))

import matplotlib.pyplot as plt

from sklearn.decomposition import PCA

# Cut back to 2D

pca = PCA(n_components=2)

decreased = pca.fit_transform(embeddings_np)

# Plot

plt.determine(figsize=(8, 6))

plt.scatter(decreased[:, 0], decreased[:, 1], shade="blue")

for i, sentence in enumerate(sentences):

plt.annotate(f"Sentence {i+1}", (decreased[i, 0]+0.01, decreased[i, 1]+0.01))

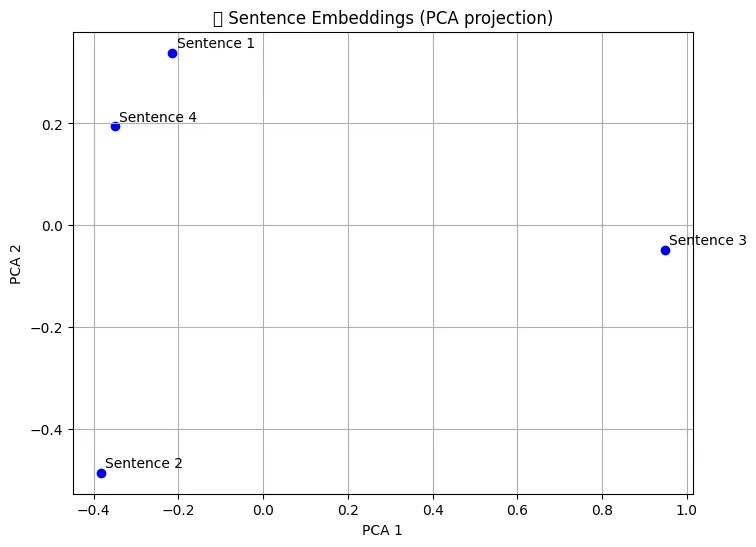

plt.title("📊 Sentence Embeddings (PCA projection)")

plt.xlabel("PCA 1")

plt.ylabel("PCA 2")

plt.grid(True)

plt.present()Output:

Advantages

- Versatility: Effectively-suited for a broad vary of functions with out extra coaching.

- Pretrained Comfort: Prepared for rapid use, saving time and computational sources.

Shortcomings

- Fastened Representations: Produces a single embedding per sentence with out dynamically adjusting to totally different contexts.

- Mannequin Dimension: Some variants are fairly giant, which may have an effect on deployment in resource-limited environments.

11. Node2Vec

Node2Vec is a technique initially designed for studying node embeddings in graph buildings. Whereas not a textual content illustration technique per se, it’s more and more utilized in NLP duties that contain community or graph knowledge, equivalent to social networks or data graphs. Launched round 2016, it helps seize structural relationships in graph knowledge.

Use Circumstances: Node classification, hyperlink prediction, graph clustering, suggestion techniques.

How It Works

- Mechanism:

- Random Walks: Performs biased random walks on a graph to generate sequences of nodes.

- Skip-gram Mannequin: Applies a method just like Word2Vec on these sequences to be taught low-dimensional embeddings for nodes.

- Extra Element:

By simulating the sentences inside the nodes, Node2Vec successfully captures the native and international construction of the graphs. It’s extremely adaptive and can be utilized for varied downstream duties, equivalent to clustering, classification or suggestion techniques in networked knowledge.

Code Implementation

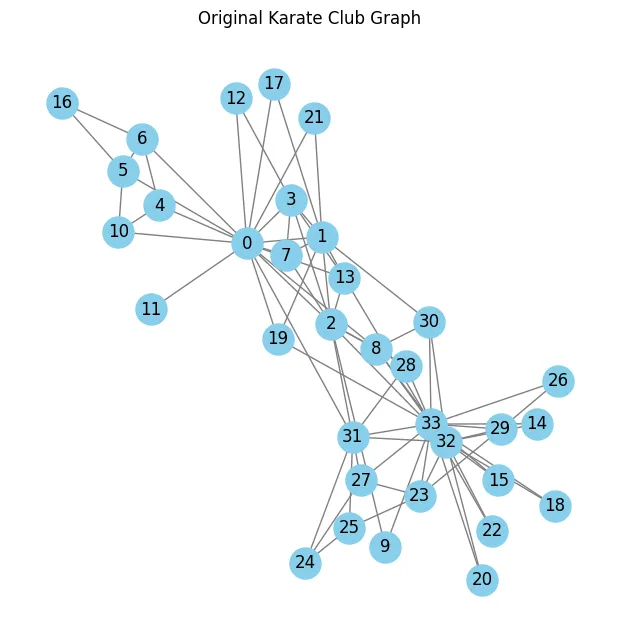

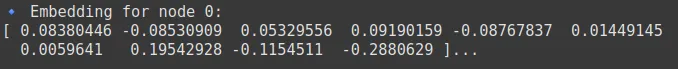

We are going to use this ready-made graph from NetworkX to view our Node2Vec implementation.To be taught extra concerning the Karate Membership Graph, click on right here.

!pip set up numpy==1.24.3 # Regulate model if wanted

import networkx as nx

import numpy as np

from node2vec import Node2Vec

import matplotlib.pyplot as plt

from sklearn.decomposition import PCA

# Create a easy graph

G = nx.karate_club_graph() # A well-known check graph with 34 nodes

# Visualize authentic graph

plt.determine(figsize=(6, 6))

nx.draw(G, with_labels=True, node_color="skyblue", edge_color="grey", node_size=500)

plt.title("Authentic Karate Membership Graph")

plt.present()

# Initialize Node2Vec mannequin

node2vec = Node2Vec(G, dimensions=64, walk_length=30, num_walks=200, employees=2)

# Practice the mannequin (Word2Vec underneath the hood)

mannequin = node2vec.match(window=10, min_count=1, batch_words=4)

# Get the vector for a particular node

node_id = 0

vector = mannequin.wv[str(node_id)] # Word: Node IDs are saved as strings

print(f"🔹 Embedding for node {node_id}:n{vector[:10]}...") # Truncated

# Get all embeddings

node_ids = mannequin.wv.index_to_key

embeddings = np.array([model.wv[node] for node in node_ids])

# Cut back dimensions to 2D

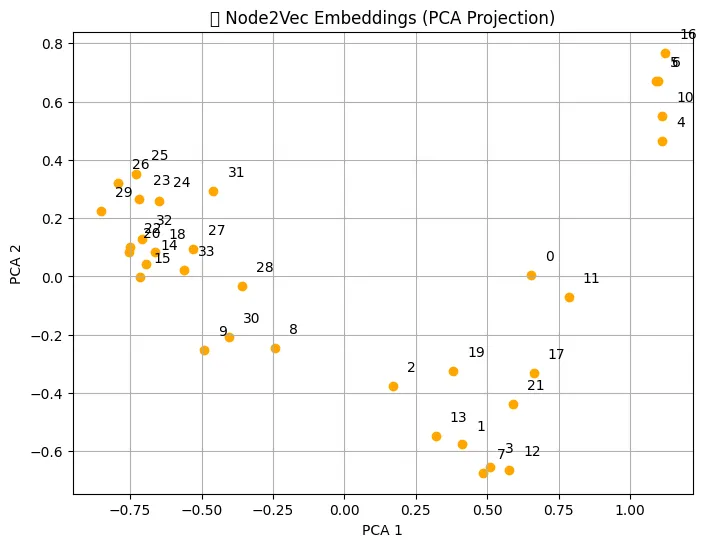

pca = PCA(n_components=2)

decreased = pca.fit_transform(embeddings)

# Plot embeddings

plt.determine(figsize=(8, 6))

plt.scatter(decreased[:, 0], decreased[:, 1], shade="orange")

for i, node in enumerate(node_ids):

plt.annotate(node, (decreased[i, 0] + 0.05, decreased[i, 1] + 0.05))

plt.title("📊 Node2Vec Embeddings (PCA Projection)")

plt.xlabel("PCA 1")

plt.ylabel("PCA 2")

plt.grid(True)

plt.present()

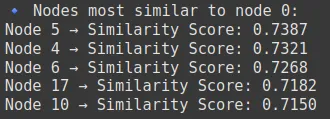

# Discover most comparable nodes to node 0

similar_nodes = mannequin.wv.most_similar(str(0), topn=5)

print("🔹 Nodes most just like node 0:")

for node, rating in similar_nodes:

print(f"Node {node} → Similarity Rating: {rating:.4f}")Output:

Advantages

- Graph Construction Seize: Excels at embedding nodes with wealthy relational info.

- Flexibility: Might be utilized to any graph-structured knowledge, not simply language.

Shortcomings

- Area Specificity: Much less relevant to plain textual content until represented as a graph.

- Parameter Sensitivity: The standard of embeddings is delicate to the parameters utilized in random walks.

12. ELMo (Embeddings from Language Fashions)

ELMo, launched by the Allen Institute for AI in 2018, marked a breakthrough by offering deep contextualized phrase representations. In contrast to earlier fashions that generate a single vector per phrase, ELMo produces dynamic embeddings that change based mostly on a sentence’s context, capturing each syntactic and semantic nuances.

How It Works

- Mechanism:

- Bidirectional LSTMs: Processes textual content in each ahead and backward instructions to seize full contextual info.

- Layered Representations: Combines representations from a number of layers of the neural community, every capturing totally different points of language.

- Extra Element:

The important thing innovation is that the identical phrase can have totally different embeddings relying on its utilization, permitting ELMo to deal with ambiguity and polysemy extra successfully. This context sensitivity results in enhancements in lots of downstream NLP duties. It operates by way of customizable parameters, together with dimensions (embedding vector dimension), walk_length (nodes per random stroll), num_walks (walks per node), and bias parameters p (return issue) and q (in-out issue) that management stroll habits by balancing breadth-first (BFS) and depth-first (DFS) search tendencies. The methodology combines biased random walks, which discover node neighborhoods with tunable search methods, with Word2Vec’s Skip-gram structure to be taught embeddings preserving community construction and node relationships. Node2Vec allows efficient node classification, hyperlink prediction, and graph clustering by capturing each native community patterns and broader buildings within the embedding area.

Code Implementation

To implement and perceive extra about ELMo, you may seek advice from this text right here.

Advantages

- Context-Consciousness: Offers phrase embeddings that fluctuate in accordance with the context.

- Enhanced Efficiency: Improves outcomes based mostly on a wide range of duties, together with sentiment evaluation, query answering, and machine translation.

Shortcomings

- Computationally Demanding: Requires extra sources for coaching and inference.

- Complicated Structure: Difficult to implement and fine-tune in comparison with different less complicated fashions.

13. BERT and Its Variants

What’s BERT?

BERT or Bidirectional Encoder Representations from Transformers, launched by Google in 2018, revolutionized NLP by introducing a transformer-based structure that captures bidirectional context. In contrast to earlier fashions that processed textual content in a unidirectional method, BERT considers each the left and proper context of every phrase. This deep, contextual understanding allows BERT to excel at duties starting from query answering and sentiment evaluation to named entity recognition.

How It Works:

- Transformer Structure: BERT is constructed on a multi-layer transformer community that makes use of a self-attention mechanism to seize dependencies between all phrases in a sentence concurrently. This enables the mannequin to weigh the dependency of every phrase on each different phrase.

- Masked Language Modeling: Throughout pre-training, BERT randomly masks sure phrases within the enter after which predicts them based mostly on their context. This forces the mannequin to be taught bidirectional context and develop a sturdy understanding of language patterns.

- Subsequent Sentence Prediction: BERT can also be skilled on pairs of sentences, studying to foretell whether or not one sentence logically follows one other. This helps it seize relationships between sentences, a vital characteristic for duties like doc classification and pure language inference.

Extra Element: BERT’s structure permits it to be taught intricate patterns of language, together with syntax and semantics. Advantageous-tuning on downstream duties is easy, resulting in state-of-the-art efficiency throughout many benchmarks.

Advantages:

- Deep Contextual Understanding: By contemplating each previous and future context, BERT generates richer, extra nuanced phrase representations.

- Versatility: BERT may be fine-tuned with comparatively little extra coaching for a variety of downstream duties.

Shortcomings:

- Heavy Computational Load: The mannequin requires important computational sources throughout each coaching and inference.

- Giant Mannequin Dimension: BERT’s giant variety of parameters could make it difficult to deploy in resource-constrained environments.

SBERT (Sentence-BERT)

Sentence-BERT (SBERT) was launched in 2019 to handle a key limitation of BERT—its inefficiency in producing semantically significant sentence embeddings for duties like semantic similarity, clustering, and knowledge retrieval. SBERT adapts BERT’s structure to provide fixed-size sentence embeddings which are optimized for evaluating the which means of sentences straight.

How It Works:

- Siamese Community Structure: SBERT modifies the unique BERT construction by using a siamese (or triplet) community structure. This implies it processes two (or extra) sentences in parallel by way of equivalent BERT-based encoders, permitting the mannequin to be taught embeddings such that semantically comparable sentences are shut collectively in vector area.

- Pooling Operation: After processing sentences by way of BERT, SBERT applies a pooling technique (generally which means pooling) on the token embeddings to provide a fixed-size vector for every sentence.

- Advantageous-Tuning with Sentence Pairs: SBERT is fine-tuned on duties involving sentence pairs utilizing contrastive or triplet loss. This coaching goal encourages the mannequin to position comparable sentences nearer collectively and dissimilar ones additional aside within the embedding area.

Advantages:

- Environment friendly Sentence Comparisons: SBERT is optimized for duties like semantic search and clustering. On account of its fastened dimension and semantically wealthy sentence embeddings, evaluating tens of hundreds of sentences turns into computationally possible.

- Versatility in Downstream Duties: SBERT embeddings are efficient for a wide range of functions, equivalent to paraphrase detection, semantic textual similarity, and knowledge retrieval.

Shortcomings:

- Dependence on Advantageous-Tuning Knowledge: The standard of SBERT embeddings may be closely influenced by the area and high quality of the coaching knowledge used throughout fine-tuning.

- Useful resource Intensive Coaching: Though inference is environment friendly, the preliminary fine-tuning course of requires appreciable computational sources.

DistilBERT

DistilBERT, launched by Hugging Face in 2019, is a lighter and sooner variant of BERT that retains a lot of its efficiency. It was created utilizing a way referred to as data distillation, the place a smaller mannequin (scholar) is skilled to imitate the habits of a bigger, pre-trained mannequin (instructor), on this case, BERT.

How It Works:

- Information Distillation: DistilBERT is skilled to match the output distributions of the unique BERT mannequin whereas utilizing fewer parameters. It removes some layers (e.g., 6 as an alternative of 12 within the BERT-base) however maintains essential studying habits.

- Loss Operate: The coaching makes use of a mixture of language modeling loss and distillation loss (KL divergence between instructor and scholar logits).

- Velocity Optimization: DistilBERT is optimized to be 60% sooner throughout inference whereas retaining ~97% of BERT’s efficiency on downstream duties.

Advantages:

- Light-weight and Quick: Best for real-time or cell functions on account of decreased computational calls for.

- Aggressive Efficiency: Achieves near-BERT accuracy with considerably decrease useful resource utilization.

Shortcomings:

- Slight Drop in Accuracy: Whereas very shut, it would barely underperform in comparison with the total BERT mannequin in advanced duties.

- Restricted Advantageous-Tuning Flexibility: It might not generalize as effectively in area of interest domains as full-sized fashions.

RoBERTa

RoBERTa or Robustly Optimized BERT Pretraining Strategy was launched by Fb AI in 2019 as a sturdy enhancement over BERT. It tweaks the pretraining methodology to enhance efficiency considerably throughout a variety of duties.

How It Works:

- Coaching Enhancements:

- Removes the Subsequent Sentence Prediction (NSP) goal, which was discovered to harm efficiency in some settings.

- Trains on a lot bigger datasets (e.g., Frequent Crawl) and for longer durations.

- Makes use of bigger mini-batches and extra coaching steps to stabilize and optimize studying.

- Dynamic Masking: This technique applies masking on the fly throughout every coaching epoch, exposing the mannequin to extra various masking patterns than BERT’s static masking.

Advantages:

- Superior Efficiency: Outperforms BERT on a number of benchmarks, together with GLUE and SQuAD.

- Strong Studying: Higher generalization throughout domains on account of improved coaching knowledge and methods.

Shortcomings:

- Useful resource Intensive: Much more computationally demanding than BERT.

- Overfitting Danger: With intensive coaching and huge datasets, there’s a danger of overfitting if not dealt with rigorously.

Code Implementation

from transformers import AutoTokenizer, AutoModel

import torch

# Enter sentence for embedding

sentence = "Pure Language Processing is reworking how machines perceive people."

# Select system (GPU if accessible)

system = torch.system("cuda" if torch.cuda.is_available() else "cpu")

# =============================

# 1. BERT Base Uncased

# =============================

# model_name = "bert-base-uncased"

# =============================

# 2. SBERT - Sentence-BERT

# =============================

# model_name = "sentence-transformers/all-MiniLM-L6-v2"

# =============================

# 3. DistilBERT

# =============================

# model_name = "distilbert-base-uncased"

# =============================

# 4. RoBERTa

# =============================

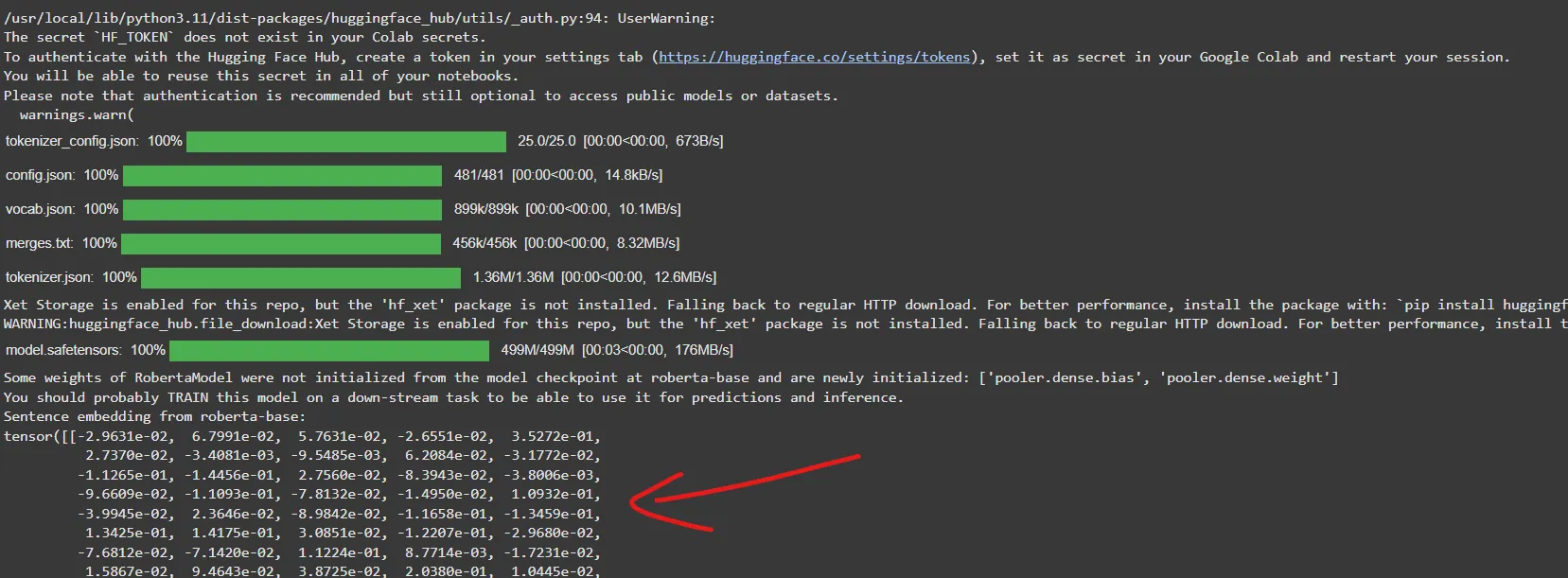

model_name = "roberta-base" # Solely RoBERTa is lively now uncomment different to check different fashions

# Load tokenizer and mannequin

tokenizer = AutoTokenizer.from_pretrained(model_name)

mannequin = AutoModel.from_pretrained(model_name).to(system)

mannequin.eval()

# Tokenize enter

inputs = tokenizer(sentence, return_tensors="pt", truncation=True, padding=True).to(system)

# Ahead move to get embeddings

with torch.no_grad():

outputs = mannequin(**inputs)

# Get token embeddings

token_embeddings = outputs.last_hidden_state # (batch_size, seq_len, hidden_size)

# Imply Pooling for sentence embedding

sentence_embedding = torch.imply(token_embeddings, dim=1)

print(f"Sentence embedding from {model_name}:")

print(sentence_embedding)Output:

Abstract

- BERT gives deep, bidirectional contextualized embeddings perfect for a variety of NLP duties. It captures intricate language patterns by way of transformer-based self-attention however produces token-level embeddings that must be aggregated for sentence-level duties.

- SBERT extends BERT by reworking it right into a mannequin that straight produces significant sentence embeddings. With its siamese community structure and contrastive studying goals, SBERT excels at duties requiring quick and correct semantic comparisons between sentences, equivalent to semantic search, paraphrase detection, and sentence clustering.

- DistilBERT presents a lighter, sooner various to BERT through the use of data distillation. It retains most of BERT’s efficiency whereas being extra appropriate for real-time or resource-constrained functions. It’s perfect when inference pace and effectivity are key issues, although it might barely underperform in advanced eventualities.

- RoBERTa improves upon BERT by modifying its pre-training regime, eradicating the subsequent sentence prediction process through the use of bigger datasets, and making use of dynamic masking. These adjustments lead to higher generalization and efficiency throughout benchmarks, although at the price of elevated computational sources.

Different Notable BERT Variants

Whereas BERT and its direct descendants like SBERT, DistilBERT, and RoBERTa have made a major impression in NLP, a number of different highly effective variants have emerged to handle totally different limitations and improve particular capabilities:

- ALBERT (A Lite BERT)

ALBERT is a extra environment friendly model of BERT that reduces the variety of parameters by way of two key improvements: factorized embedding parameterization (which separates the scale of the vocabulary embedding from the hidden layers) and cross-layer parameter sharing (which reuses weights throughout transformer layers). These adjustments make ALBERT sooner and extra memory-efficient whereas preserving efficiency on many NLP benchmarks. - XLNet

In contrast to BERT, which depends on masked language modeling, XLNet adopts a permutation-based autoregressive coaching technique. This enables it to seize bidirectional context with out counting on knowledge corruption like masking. XLNet additionally integrates concepts from Transformer-XL, which allows it to mannequin longer-term dependencies and outperform BERT on a number of NLP duties. - T5 (Textual content-to-Textual content Switch Transformer)

Developed by Google Analysis, T5 frames each NLP process, from translation to classification, as a text-to-text downside. For instance, as an alternative of manufacturing a classification label straight, T5 learns to generate the label as a phrase or phrase. This unified strategy makes it extremely versatile and highly effective, able to tackling a broad spectrum of NLP challenges.

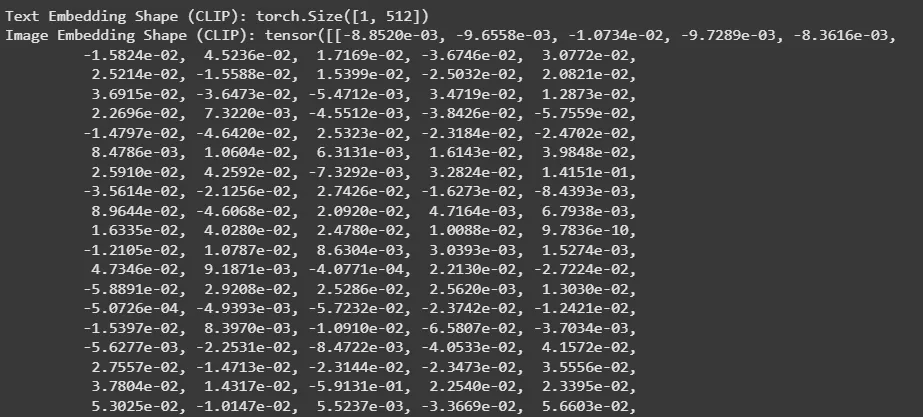

14. CLIP and BLIP

Fashionable multimodal fashions like CLIP (Contrastive Language-Picture Pretraining) and BLIP (Bootstrapping Language-Picture Pre-training) symbolize the newest frontier in embedding strategies. They bridge the hole between textual and visible knowledge, enabling duties that contain each language and pictures. These fashions have grow to be important for functions equivalent to picture search, captioning, and visible query answering.

How It Works

- CLIP:

- Mechanism: Trains on giant datasets of image-text pairs, utilizing contrastive studying to align picture embeddings with corresponding textual content embeddings.

- Course of: The mannequin learns to map pictures and textual content right into a shared vector area the place associated pairs are nearer collectively.

- BLIP:

- Mechanism: Makes use of a bootstrapping strategy to refine the alignment between language and imaginative and prescient by way of iterative coaching.

- Course of: Improves upon preliminary alignments to realize extra correct multimodal representations.

- Extra Element:

These fashions harness the ability of transformers for textual content and convolutional or transformer-based networks for pictures. Their means to collectively purpose about textual content and visible content material has opened up new prospects in multimodal AI analysis.

Code Implementation

from transformers import CLIPProcessor, CLIPModel

# from transformers import BlipProcessor, BlipModel # Uncomment to make use of BLIP

from PIL import Picture

import torch

import requests

# Select system

system = torch.system("cuda" if torch.cuda.is_available() else "cpu")

# Load a pattern picture and textual content

image_url = "https://huggingface.co/datasets/huggingface/documentation-images/resolve/predominant/datasets/cat_style_layout.png"

picture = Picture.open(requests.get(image_url, stream=True).uncooked).convert("RGB")

textual content = "a cute pet"

# ===========================

# 1. CLIP (for Embeddings)

# ===========================

clip_model_name = "openai/clip-vit-base-patch32"

clip_model = CLIPModel.from_pretrained(clip_model_name).to(system)

clip_processor = CLIPProcessor.from_pretrained(clip_model_name)

# Preprocess enter

inputs = clip_processor(textual content=[text], pictures=picture, return_tensors="pt", padding=True).to(system)

# Get textual content and picture embeddings

with torch.no_grad():

text_embeddings = clip_model.get_text_features(input_ids=inputs["input_ids"])

image_embeddings = clip_model.get_image_features(pixel_values=inputs["pixel_values"])

# Normalize embeddings (non-compulsory)

text_embeddings = text_embeddings / text_embeddings.norm(dim=-1, keepdim=True)

image_embeddings = image_embeddings / image_embeddings.norm(dim=-1, keepdim=True)

print("Textual content Embedding Form (CLIP):", text_embeddings.form)

print("Picture Embedding Form (CLIP):", image_embeddings)

# ===========================

# 2. BLIP (commented)

# ===========================

# blip_model_name = "Salesforce/blip-image-text-matching-base"

# blip_processor = BlipProcessor.from_pretrained(blip_model_name)

# blip_model = BlipModel.from_pretrained(blip_model_name).to(system)

# inputs = blip_processor(pictures=picture, textual content=textual content, return_tensors="pt").to(system)

# with torch.no_grad():

# text_embeddings = blip_model.text_encoder(input_ids=inputs["input_ids"]).last_hidden_state[:, 0, :]

# image_embeddings = blip_model.vision_model(pixel_values=inputs["pixel_values"]).last_hidden_state[:, 0, :]

# print("Textual content Embedding Form (BLIP):", text_embeddings.form)

# print("Picture Embedding Form (BLIP):", image_embeddings)Output:

Advantages

- Cross-Modal Understanding: Offers highly effective representations that work throughout textual content and pictures.

- Extensive Applicability: Helpful in picture retrieval, captioning, and different multimodal duties.

Shortcomings

- Excessive Complexity: Coaching requires giant, well-curated datasets of paired knowledge.

- Heavy Useful resource Necessities: Multimodal fashions are among the many most computationally demanding.

Comparability of Embeddings

| Embedding | Sort | Mannequin Structure / Strategy | Frequent Use Circumstances |

|---|---|---|---|

| Depend Vectorizer | Context-independent, No ML | Depend-based (Bag of Phrases) | Sentence embeddings for search, chatbots, and semantic similarity |

| One-Scorching Encoding | Context-independent, No ML | Handbook encoding | Baseline fashions, rule-based techniques |

| TF-IDF | Context-independent, No ML | Depend + Inverse Doc Frequency | Doc rating, textual content similarity, key phrase extraction |

| Okapi BM25 | Context-independent, Statistical Rating | Probabilistic IR mannequin | Search engines like google and yahoo, info retrieval |

| Word2Vec (CBOW, SG) | Context-independent, ML-based | Neural community (shallow) | Sentiment evaluation, phrase similarity, NLP pipelines |

| GloVe | Context-independent, ML-based | International co-occurrence matrix + ML | Phrase similarity, embedding initialization |

| FastText | Context-independent, ML-based | Word2Vec + Subword embeddings | Morphologically wealthy languages, OOV phrase dealing with |

| Doc2Vec | Context-independent, ML-based | Extension of Word2Vec for paperwork | Doc classification, clustering |

| InferSent | Context-dependent, RNN-based | BiLSTM with supervised studying | Semantic similarity, NLI duties |

| Common Sentence Encoder | Context-dependent, Transformer-based | Transformer / DAN (Deep Averaging Web) | Sentence embeddings for search, chatbots, semantic similarity |

| Node2Vec | Graph-based embedding | Random stroll + Skipgram | Graph illustration, suggestion techniques, hyperlink prediction |

| ELMo | Context-dependent, RNN-based | Bi-directional LSTM | Named Entity Recognition, Query Answering, Coreference Decision |

| BERT & Variants | Context-dependent, Transformer-based | Q&A, sentiment evaluation, summarization, and semantic search | Q&A, sentiment evaluation, summarization, semantic search |

| CLIP | Multimodal, Transformer-based | Imaginative and prescient + Textual content encoders (Contrastive) | Picture captioning, cross-modal search, text-to-image retrieval |

| BLIP | Multimodal, Transformer-based | Imaginative and prescient-Language Pretraining (VLP) | Picture captioning, VQA (Visible Query Answering) |

Conclusion

The journey of embeddings has come a great distance from fundamental count-based strategies like one-hot encoding to immediately’s highly effective, context-aware, and even multimodal fashions like BERT and CLIP. Every step has been about pushing previous the constraints of the final, serving to us higher perceive and symbolize human language. These days, due to platforms like Hugging Face and Ollama, we’ve entry to a rising library of cutting-edge embedding fashions making it simpler than ever to faucet into this new period of language intelligence.

However past figuring out how these strategies work, it’s value contemplating how they match our real-world objectives. Whether or not you’re constructing a chatbot, a semantic search engine, a recommender system, or a doc summarization system, there’s an embedding on the market that brings our concepts to life. In any case, in immediately’s world of language tech, there’s actually a vector for each imaginative and prescient.

Login to proceed studying and luxuriate in expert-curated content material.