Building with AI today can feel messy. You might use one API for text, another for images, and a different one for something else. Every model comes with its own setup, API key, and billing. This slows you down and makes things harder than they need to be. What if you could use all these models through one simple API? That’s where OpenRouter helps. It gives you one place to access models from providers like OpenAI, Google, Anthropic, and more. In this guide, you’ll learn how to use OpenRouter step by step, from your first API call to building real applications.

What is OpenRouter?

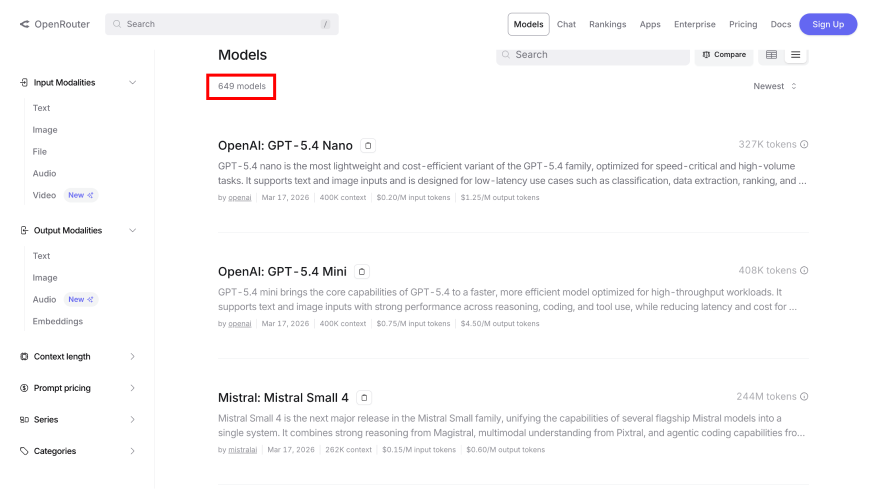

OpenRouter lets you access many AI models using a single API. You don’t need to set up each provider separately. You sign up once, use one API key, and write one set of code. OpenRouter handles the rest, like authentication, request formatting, and billing. This makes it easy to try different models. You can switch between models like GPT-5, Claude 4.6, Gemini 3.1 Pro, or Llama 4 by changing just one parameter in your code. This helps you choose the right model based on cost, speed, or features like reasoning and image understanding.

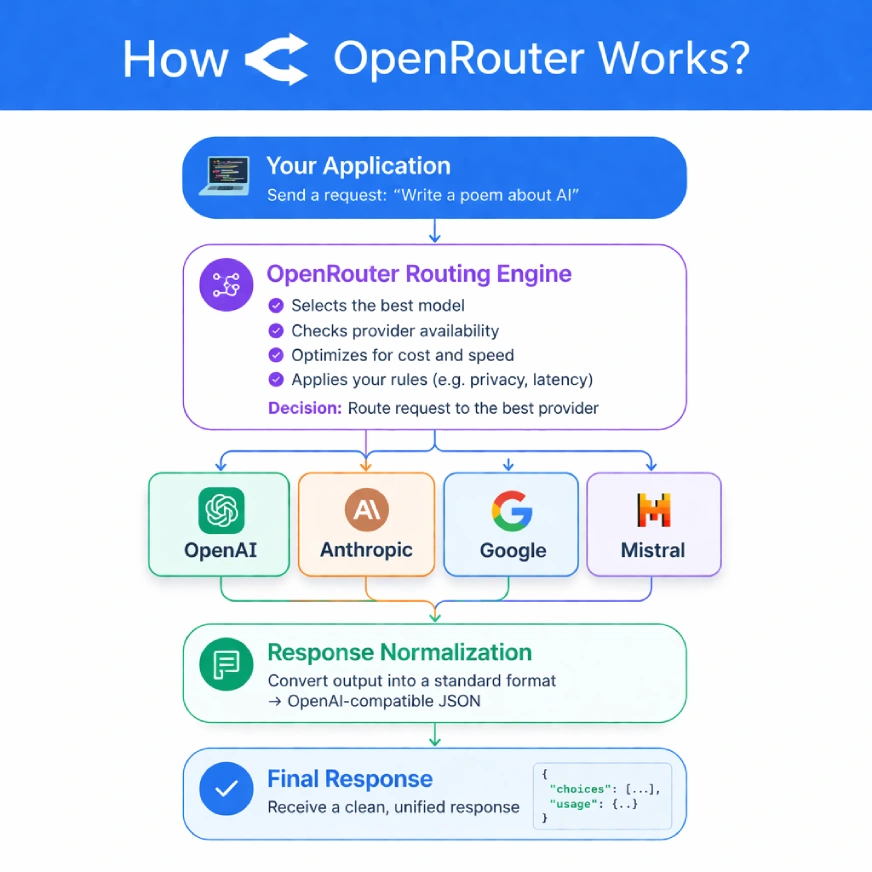

How OpenRouter Works

OpenRouter acts as a bridge between your application and different AI providers. Your app sends a request to the OpenRouter API, which converts that request into a standard format that any model can understand.

A routing engine then takes over. It finds the best provider for your request according to a set of rules that you can configure. For example, it can prefer the cheapest provider, the one with the lowest latency, or only those that meet a specific data privacy requirement such as Zero Data Retention (ZDR).

The platform keeps track of the performance and uptime of all providers, so it can make intelligent, real-time routing decisions. If your preferred provider isn’t functioning properly, OpenRouter automatically fails over to a known-good one, which improves the stability of your application.
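To make the routing idea concrete, here is a toy simulation in plain Python. It is not OpenRouter’s actual engine: the `Provider` class, its fields, the numbers, and the `pick_provider` function are all hypothetical names invented for illustration. It only shows one way a router could drop unhealthy or non-compliant providers and then rank the rest by a chosen rule.

```python
from dataclasses import dataclass


@dataclass
class Provider:
    """Hypothetical record of what a router might track per provider."""
    name: str
    price_per_mtok: float  # USD per million tokens (made-up numbers)
    latency_ms: float
    healthy: bool = True
    zdr: bool = False  # whether the provider offers Zero Data Retention


def pick_provider(providers, sort_by="price", require_zdr=False):
    """Return the best healthy provider under the given rule, or None."""
    candidates = [
        p for p in providers
        if p.healthy and (p.zdr or not require_zdr)
    ]
    if not candidates:
        return None
    key = {
        "price": lambda p: p.price_per_mtok,
        "latency": lambda p: p.latency_ms,
    }[sort_by]
    return min(candidates, key=key)


providers = [
    Provider("alpha", price_per_mtok=0.40, latency_ms=900),
    Provider("beta", price_per_mtok=0.60, latency_ms=350, zdr=True),
    Provider("gamma", price_per_mtok=0.50, latency_ms=500, healthy=False),
]

cheapest = pick_provider(providers, sort_by="price")
fastest = pick_provider(providers, sort_by="latency")
private = pick_provider(providers, sort_by="price", require_zdr=True)
print(cheapest.name, fastest.name, private.name)
```

Marking a provider as unhealthy and calling `pick_provider` again returns the next best candidate, which is the failover behavior described above in miniature.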

Getting Started: Your First API Call

OpenRouter is easy to set up because it is a hosted service, i.e. there is no software to install. You can be ready in a matter of minutes:

Step 1: Create an Account and Get Credits

First, sign up at OpenRouter.ai. To use the paid models, you will need to purchase some credits.

Step 2: Generate an API Key

Navigate to the “Keys” section in your account dashboard. Click “Create Key,” give it a name, and copy the key securely. As a best practice, use separate keys for different environments (e.g., dev, prod) and set spending limits to control costs.

Step 3: Configure Your Environment

Store your API key in an environment variable to avoid exposing it in your code.

Step 4: Local Setup Using an Environment Variable

For macOS or Linux:

    export OPENROUTER_API_KEY="your-secret-key-here"

For Windows (PowerShell):

    setx OPENROUTER_API_KEY "your-secret-key-here"

Making a Request on OpenRouter

Since OpenRouter’s API is compatible with OpenAI’s, you can use the official OpenAI client libraries to make requests. This makes migrating an existing OpenAI project very straightforward.
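Before the first request, it can help to fail fast when the key is missing instead of sending an unauthenticated call. The helper below is a small sketch: `load_openrouter_key` and `mask` are hypothetical names, and the only thing assumed from the setup is the `OPENROUTER_API_KEY` variable.

```python
import os


def load_openrouter_key(env=None):
    """Return the OpenRouter key from the environment or raise a clear error.

    `env` defaults to os.environ; passing a dict makes the function easy to test.
    """
    env = os.environ if env is None else env
    key = (env.get("OPENROUTER_API_KEY") or "").strip()
    if not key:
        raise RuntimeError(
            "OPENROUTER_API_KEY is not set. Export it before running this script."
        )
    return key


def mask(key):
    """Show only the last four characters so the key is safe to log."""
    return "*" * max(len(key) - 4, 0) + key[-4:]


# A dict stands in for the real environment in this demonstration.
print(mask(load_openrouter_key({"OPENROUTER_API_KEY": "your-secret-key-here"})))
```

In a real script you would call `load_openrouter_key()` with no arguments and pass the result as `api_key` when creating the client.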

Python Example Using the OpenAI SDK

# First, guarantee you've gotten the library put in:

# pip set up openai

import os

from openai import OpenAI

# Initialize the shopper, pointing it to OpenRouter's API

shopper = OpenAI(

base_url="https://openrouter.ai/api/v1",

api_key=os.environ.get("OPENROUTER_API_KEY"),

)

# Ship a chat completion request to a particular mannequin

response = shopper.chat.completions.create(

mannequin="openai/gpt-4.1-nano",

messages=[

{

"role": "user",

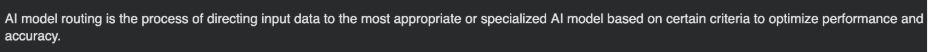

"content": "Explain AI model routing in one sentence."

},

],

)

print(response.selections[0].message.content material)Output:

Exploring Models and Advanced Routing

OpenRouter shows its real power beyond simple requests. Its platform supports dynamic and intelligent AI model routing.

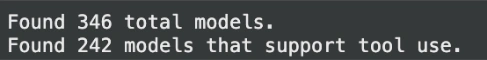

Programmatically Discovering Models

Because models are continuously added or updated, you should not hardcode model names in your production apps. Instead, OpenRouter has a /models endpoint that returns the list of all available models along with their pricing, context limits, and capabilities.
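As a sketch of what choosing a model at runtime might look like once the catalog is available, the helper below works on a small hand-written sample rather than a live response. The field names (`id`, `context_length`, `pricing.prompt`, `supported_parameters`) are assumptions about the /models payload and should be checked against the current API reference. `pick_cheapest` is a hypothetical helper, and the ids and prices are made up.

```python
def pick_cheapest(models, min_context=0, required_param=None):
    """Return the id of the cheapest model meeting the constraints, or None.

    Prices are assumed to arrive as strings (USD per token), so they are
    converted to float before comparing.
    """
    eligible = []
    for m in models:
        if (m.get("context_length") or 0) < min_context:
            continue
        params = m.get("supported_parameters") or []
        if required_param and required_param not in params:
            continue
        try:
            price = float(m["pricing"]["prompt"])
        except (KeyError, TypeError, ValueError):
            continue  # skip entries without a usable price
        eligible.append((price, m["id"]))
    return min(eligible)[1] if eligible else None


# Hand-written sample entries; ids and prices are made up for illustration.
sample_models = [
    {"id": "vendor-a/small", "context_length": 32000,
     "pricing": {"prompt": "0.0000001"}, "supported_parameters": ["tools"]},
    {"id": "vendor-b/large", "context_length": 200000,
     "pricing": {"prompt": "0.000003"}, "supported_parameters": ["tools"]},
    {"id": "vendor-c/tiny", "context_length": 8000,
     "pricing": {"prompt": "0.00000005"}, "supported_parameters": []},
]

print(pick_cheapest(sample_models))
print(pick_cheapest(sample_models, min_context=100000))
print(pick_cheapest(sample_models, required_param="tools"))
```

Fetching the live catalog itself takes a single request: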

    import os

    import requests

    # Fetch the list of available models
    response = requests.get(
        "https://openrouter.ai/api/v1/models",
        headers={
            "Authorization": f"Bearer {os.environ.get('OPENROUTER_API_KEY')}"
        },
    )

    if response.status_code == 200:
        models = response.json()["data"]

        # Filter for models that support tool use
        tool_use_models = [
            m for m in models
            if "tools" in (m.get("supported_parameters") or [])
        ]

        print(f"Found {len(models)} total models.")
        print(f"Found {len(tool_use_models)} models that support tool use.")
    else:
        print(f"Error fetching models: {response.text}")

Output:

Intelligent Routing and Fallbacks

You can control how OpenRouter chooses a provider and set backups in case a request fails. This resilience is essential for production systems.

- Routing: Send a provider object in your request to rank providers by latency or price, or to enforce policies such as zdr (Zero Data Retention).

- Fallbacks: When a model fails, OpenRouter automatically tries the next one in the list. Only the successful attempt is charged.
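The two options above can be combined in a single request. The sketch below only builds the extra request fields and makes no network call. The key names (`provider`, `sort`, `zdr`, `models`) and the allowed sort values are assumptions based on the description above, so confirm them in OpenRouter’s API reference before relying on them. `build_routing_options` is a hypothetical helper.

```python
def build_routing_options(sort=None, zdr=False, fallback_models=None):
    """Build the extra request fields for routing and fallbacks.

    The key names ("provider", "sort", "zdr", "models") are assumptions
    based on the description above; confirm them in the API reference.
    """
    allowed_sorts = {"price", "latency", "throughput"}
    if sort is not None and sort not in allowed_sorts:
        raise ValueError(f"sort must be one of {sorted(allowed_sorts)}")

    provider = {}
    if sort:
        provider["sort"] = sort
    if zdr:
        provider["zdr"] = True

    extra_body = {}
    if provider:
        extra_body["provider"] = provider
    if fallback_models:
        extra_body["models"] = list(fallback_models)
    return extra_body


options = build_routing_options(
    sort="latency",
    zdr=True,
    fallback_models=["anthropic/claude-3.5-sonnet"],
)
print(options)
```

The resulting dictionary could then be passed as `extra_body=options` to `client.chat.completions.create(...)`.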

Here is a Python example demonstrating a fallback chain:

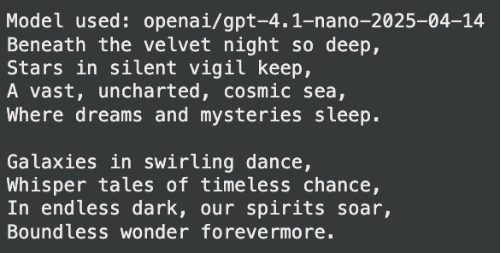

    # The primary model is 'openai/gpt-4.1-nano'
    # If it fails, OpenRouter will try 'anthropic/claude-3.5-sonnet',
    # then 'google/gemini-2.5-pro'
    response = client.chat.completions.create(
        model="openai/gpt-4.1-nano",
        extra_body={
            "models": [
                "anthropic/claude-3.5-sonnet",
                "google/gemini-2.5-pro"
            ]
        },
        messages=[
            {
                "role": "user",
                "content": "Write a short poem about space."
            }
        ],
    )

    print(f"Model used: {response.model}")
    print(response.choices[0].message.content)

Output:

Mastering Advanced Capabilities


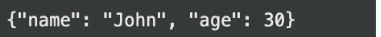

Structured Outputs (JSON Mode)

Need reliable JSON output? You can instruct any compatible model to return a response that conforms to a specific JSON schema. OpenRouter also has an optional Response Healing plugin that can repair malformed JSON from models that struggle with strict formatting.

    # Requesting a structured JSON output
    response = client.chat.completions.create(
        model="openai/gpt-4.1-nano",
        messages=[
            {
                "role": "user",
                "content": "Extract the name and age from this text: 'John is 30 years old.' in JSON format."
            }
        ],
        # Use type "json_schema" so the schema below is actually applied
        response_format={
            "type": "json_schema",
            "json_schema": {
                "name": "user_schema",
                "schema": {
                    "type": "object",
                    "properties": {
                        "name": {"type": "string"},
                        "age": {"type": "integer"}
                    },
                    "required": ["name", "age"],
                },
            },
        },
    )

    print(response.choices[0].message.content)

Output:

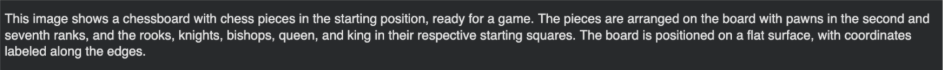
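Even when a schema is requested, it is prudent to validate the reply before using it, since not every model or provider enforces the schema strictly. The following is a minimal sketch using only the standard library. `parse_person` is a hypothetical helper that checks the two fields from the example above.

```python
import json


def parse_person(content):
    """Parse a model reply and check it has a string name and an integer age."""
    try:
        data = json.loads(content)
    except (TypeError, json.JSONDecodeError) as exc:
        raise ValueError(f"Reply is not valid JSON: {exc}") from exc

    if not isinstance(data, dict):
        raise ValueError("Reply must be a JSON object.")
    name, age = data.get("name"), data.get("age")
    if not isinstance(name, str):
        raise ValueError("'name' must be a string.")
    # bool is a subclass of int in Python, so exclude it explicitly
    if not isinstance(age, int) or isinstance(age, bool):
        raise ValueError("'age' must be an integer.")
    return {"name": name, "age": age}


print(parse_person('{"name": "John", "age": 30}'))
```

In practice the argument would be `response.choices[0].message.content`. A `ValueError` here is a reasonable signal to retry or to fall back to another model.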

Multimodal Inputs: Working with Images

You can use the same chat completions API to send images to any vision-capable model for analysis. Simply add the image as a URL or a base64-encoded string to your messages array.

    # Sending an image URL for analysis
    response = client.chat.completions.create(
        model="openai/gpt-4.1-nano",
        messages=[
            {
                "role": "user",
                "content": [
                    {
                        "type": "text",
                        "text": "What is in this image?"
                    },
                    {
                        "type": "image_url",
                        "image_url": {
                            "url": "https://encrypted-tbn0.gstatic.com/images?q=tbn:ANd9GcRmqgVW-371UD3RgE3HwhF11LYbGcVfn9eiTYqiw6a8fK51Es4SYBK0fNVyCnJzQit6YKo9ze3vg1tYoWlwqp3qgiOmRxkTg1bxPwZK3A&s=10"
                        }
                    },
                ],
            }
        ],
    )

    print(response.choices[0].message.content)

Output:

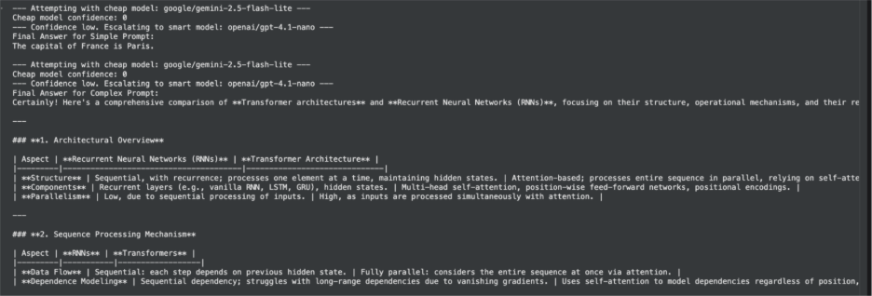
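The request above passes a public URL. For a local file, the base64 route mentioned earlier usually means wrapping the bytes in a data URL. The sketch below shows one way to do that with the standard library. `to_data_url` and `image_message` are hypothetical helpers, and the assumption that the `image_url` field accepts a `data:` URL should be verified for the model you use.

```python
import base64
import mimetypes


def to_data_url(image_bytes, filename="image.png"):
    """Encode raw image bytes as a data URL usable in an image_url field."""
    mime, _ = mimetypes.guess_type(filename)
    if mime is None or not mime.startswith("image/"):
        raise ValueError(f"Cannot infer an image type from {filename!r}")
    encoded = base64.b64encode(image_bytes).decode("ascii")
    return f"data:{mime};base64,{encoded}"


def image_message(prompt, data_url):
    """Build a user message in the same shape as the URL example above."""
    return {
        "role": "user",
        "content": [
            {"type": "text", "text": prompt},
            {"type": "image_url", "image_url": {"url": data_url}},
        ],
    }


# Four placeholder bytes stand in for a real file read with open(path, "rb").
url = to_data_url(b"\x89PNG", filename="photo.png")
print(url)
```

The dictionary returned by `image_message(...)` can be placed in the `messages` list in place of the URL-based message.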

A Cost-Aware, Multi-Provider Agent

The real strength of OpenRouter lies in building advanced, affordable, and highly available applications. For instance, we can build a practical agent that dynamically chooses the best model for a specific task using a tiered, cheap-to-smart strategy.

The first thing this agent does is try to answer the user’s query with a fast, cheap model. If that model isn’t good enough (e.g. the task involves deep reasoning), the agent escalates the query to a more powerful, premium model. This is a common pattern in production applications, which must strike a balance between performance, price, and quality.

The “Cheap-to-Smart” Logic

Our agent will follow these steps:

- Receive a user’s prompt.

- Send the prompt to a low-cost model first.

- Examine the response to determine whether the model was able to handle the request. One simple way to do this is to ask the model to provide a confidence score with its output.

- When the confidence is low, automatically resend the same prompt to a high-end model, which gives a better answer to a complex task.

This approach ensures you aren’t overpaying for simple requests while still having the power of top-tier models on demand.
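The steps above can be sketched without any API calls by treating each model as a plain function. This is an illustration of the control flow only: `answer_with_escalation` and the two stub functions are hypothetical, and the threshold of 70 is an arbitrary example value.

```python
def answer_with_escalation(prompt, cheap_fn, smart_fn, threshold=70):
    """Try cheap_fn first and fall back to smart_fn when confidence is low.

    Both callables take a prompt and return a dict with "answer" and
    "confidence" (1-100). Returns (answer, tier_used).
    """
    try:
        result = cheap_fn(prompt)
        confidence = int(result.get("confidence", 0))
    except Exception:
        # Treat any failure of the cheap tier as zero confidence
        result, confidence = {}, 0

    if confidence >= threshold:
        return result.get("answer"), "cheap"
    return smart_fn(prompt).get("answer"), "smart"


# Stub models for illustration; real ones would call the API.
def cheap_stub(prompt):
    hard = "prove" in prompt.lower()
    return {"answer": "cheap answer", "confidence": 30 if hard else 90}


def smart_stub(prompt):
    return {"answer": "smart answer", "confidence": 95}


print(answer_with_escalation("What is 2 + 2?", cheap_stub, smart_stub))
print(answer_with_escalation("Prove the theorem.", cheap_stub, smart_stub))
```

Keeping the decision logic separate from the network calls makes the threshold easy to test and tune. Note that a self-reported confidence score is only a rough signal, not a guarantee of correctness.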

Python Implementation

Here’s how you can implement this logic in Python. We’ll use structured outputs to ask the model for its confidence level, which makes parsing the response reliable.

    from openai import OpenAI
    import os
    import json

    # Initialize the client for OpenRouter
    client = OpenAI(
        base_url="https://openrouter.ai/api/v1",
        api_key=os.environ.get("OPENROUTER_API_KEY"),
    )

    def run_cheap_to_smart_agent(prompt: str):
        """
        Runs a prompt first through a cheap model, then escalates to a
        smarter model if confidence is low.
        """
        cheap_model = "mistralai/mistral-7b-instruct"
        smart_model = "openai/gpt-4.1-nano"

        # Define the desired JSON structure for the response
        json_schema = {
            "type": "object",
            "properties": {
                "answer": {"type": "string"},
                "confidence": {
                    "type": "integer",
                    "description": "A score from 1-100 indicating confidence in the answer.",
                },
            },
            "required": ["answer", "confidence"],
        }

        # First, try the cheap model
        print(f"--- Attempting with cheap model: {cheap_model} ---")
        try:
            response = client.chat.completions.create(
                model=cheap_model,
                messages=[
                    {
                        "role": "user",
                        "content": f"Answer the following prompt and provide a confidence score from 1-100. Prompt: {prompt}",
                    }
                ],
                # Use type "json_schema" so the schema is actually applied
                response_format={
                    "type": "json_schema",
                    "json_schema": {
                        "name": "agent_response",
                        "schema": json_schema,
                    },
                },
            )

            # Parse the JSON response
            result = json.loads(response.choices[0].message.content)
            answer = result.get("answer")
            confidence = result.get("confidence", 0)
            print(f"Cheap model confidence: {confidence}")

            # If confidence is below a threshold (e.g., 70), escalate
            if confidence < 70:
                # The listing breaks off at the comparison above. The rest of
                # this function is a minimal sketch of the escalation step
                # described earlier, not necessarily the author's exact code.
                print(f"--- Escalating to smart model: {smart_model} ---")
                response = client.chat.completions.create(
                    model=smart_model,
                    messages=[{"role": "user", "content": prompt}],
                )
                answer = response.choices[0].message.content
            return answer
        except Exception as e:
            print(f"An error occurred: {e}")
            return None

Output:

This hands-on example goes beyond a simple API call and shows how to architect a more intelligent, cost-effective system using OpenRouter’s core strengths: model choice and structured outputs.

Monitoring and Observability

Understanding your application’s performance and costs is crucial. OpenRouter provides built-in tools to help.

- Usage Accounting: Every API response contains detailed metadata about token usage and cost for that specific request, allowing for real-time expense tracking.

- Broadcast Feature: Without any extra code, you can configure OpenRouter to automatically send detailed traces of your API calls to observability platforms like Langfuse or Datadog. This provides deep insights into latency, errors, and performance across all models and providers.
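As a rough illustration of usage accounting on the client side, the sketch below adds up the usage metadata from several responses. It assumes each usage block is available as a dictionary with `prompt_tokens`, `completion_tokens`, and an optional `cost` field. The `cost` key and its unit are assumptions to verify against the API reference, and `CostTracker` is a hypothetical class.

```python
class CostTracker:
    """Accumulate token counts and cost across responses."""

    def __init__(self):
        self.prompt_tokens = 0
        self.completion_tokens = 0
        self.cost = 0.0
        self.requests = 0

    def record(self, usage):
        """Add one response's usage block (a dict) to the running totals."""
        usage = usage or {}
        self.prompt_tokens += int(usage.get("prompt_tokens") or 0)
        self.completion_tokens += int(usage.get("completion_tokens") or 0)
        # "cost" is an assumed field name; missing values count as zero
        self.cost += float(usage.get("cost") or 0.0)
        self.requests += 1

    def summary(self):
        total = self.prompt_tokens + self.completion_tokens
        return f"{self.requests} requests, {total} tokens, cost {self.cost:.6f}"


# Made-up usage blocks standing in for real responses.
tracker = CostTracker()
tracker.record({"prompt_tokens": 12, "completion_tokens": 30, "cost": 0.000021})
tracker.record({"prompt_tokens": 8, "completion_tokens": 50, "cost": 0.000034})
print(tracker.summary())
```

With the OpenAI SDK, something like `response.usage.model_dump()` should produce a suitable dictionary, though the exact fields returned depend on the provider and request options.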

Conclusion

The era of being tethered to a single AI provider is over. Tools like OpenRouter are changing the developer experience by providing a layer of abstraction that adds flexibility and resilience. By unifying the fragmented AI landscape, OpenRouter not only saves you from the tedious work of managing multiple integrations but also lets you build smarter, more cost-effective, and more robust applications. The future of AI development isn’t about picking one winner; it’s about having seamless access to all of them. With this guide, you now have the map to navigate that future.

Frequently Asked Questions

A. OpenRouter provides a single, unified API to access hundreds of AI models from various providers. This simplifies development, improves reliability with automatic fallbacks, and lets you easily switch models to optimize for cost or performance.

A. No, it is designed to be an OpenAI-compatible API. You can use existing OpenAI SDKs and often only need to change the base URL to point to OpenRouter.

A. OpenRouter’s fallback feature automatically retries your request with a backup model you specify. This makes your application more resilient to provider outages.

A. Yes, you can set strict spending limits on each API key, with daily, weekly, or monthly reset schedules. Every API response also includes detailed cost data for real-time tracking.

A. Yes, OpenRouter supports structured outputs. You can provide a JSON schema in your request to force the model to return a response in a valid, predictable format.
