The question is no longer whether you can build an agent; it is how quickly and seamlessly you can go from idea to enterprise-ready deployment.

This blog post is the fourth in a six-part blog series called Agent Factory, which shares best practices, design patterns, and tools to help guide you through adopting and building agentic AI.

Developer experience as the key to scale

AI agents are moving quickly from experimentation to real production systems. Across industries, we see developers testing prototypes in their integrated development environment (IDE) one week and deploying production agents to serve thousands of users the next. The key differentiator is no longer whether you can build an agent; it is how quickly and seamlessly you can go from idea to enterprise-ready deployment.

Industry trends reinforce this shift:

- In-repo AI development: Models, prompts, and evaluations are now first-class citizens in GitHub repos, giving developers a unified space to build, test, and iterate on AI features.

- More capable coding agents: GitHub Copilot's new coding agent can open pull requests after completing tasks like writing tests or fixing bugs, acting as an asynchronous teammate.

- Open frameworks maturing: Communities around LangGraph, LlamaIndex, CrewAI, AutoGen, and Semantic Kernel are rapidly expanding, with "agent templates" in GitHub repos becoming common.

- Open protocols emerging: Standards like the Model Context Protocol (MCP) and Agent-to-Agent (A2A) are creating interoperability across platforms.

Developers increasingly expect to stay in their existing workflow (GitHub, VS Code, and familiar frameworks) while tapping into enterprise-grade runtimes and integrations. The platforms that win will be those that meet developers where they are, with openness, speed, and trust.

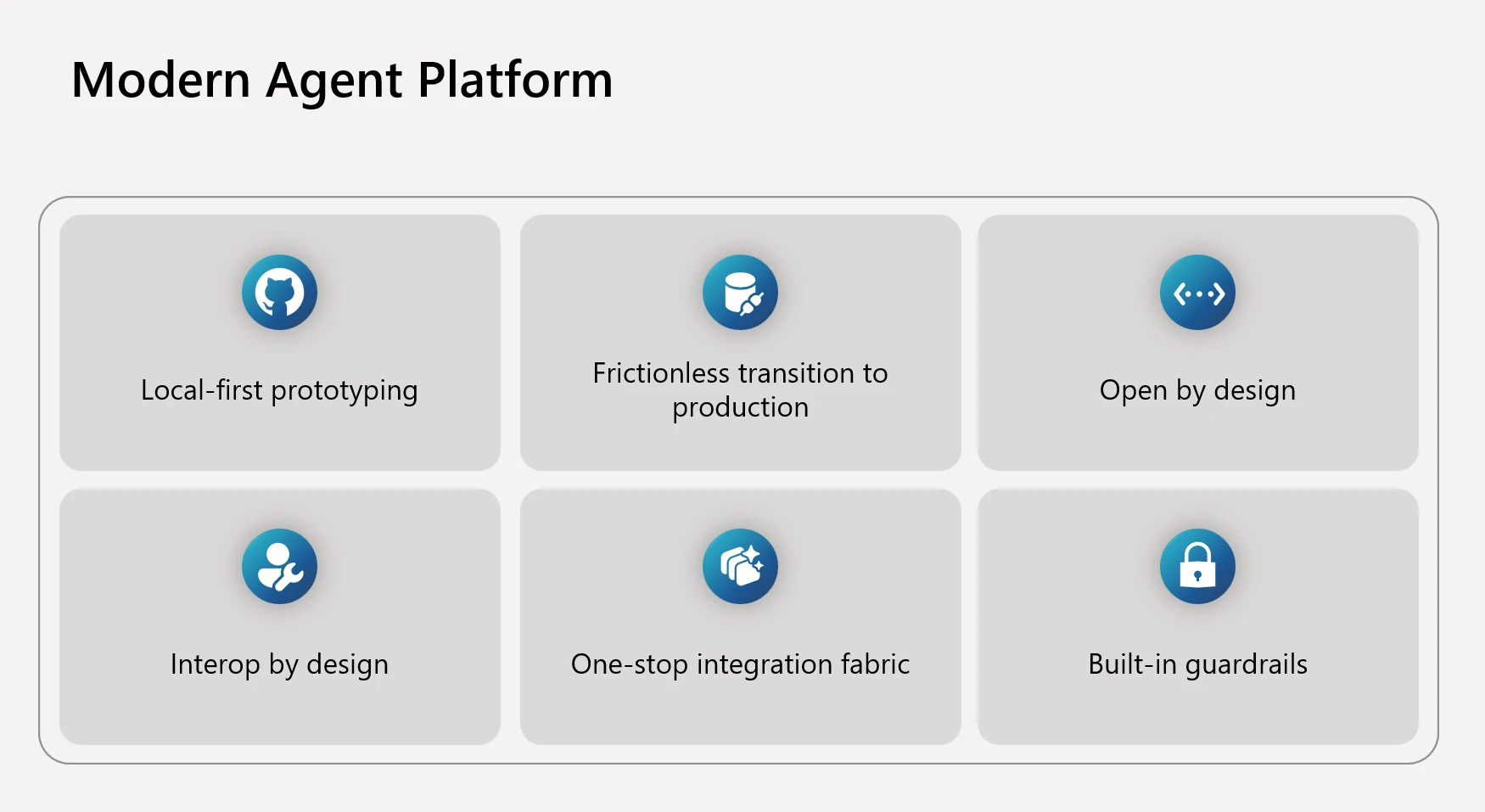

What a modern agent platform should deliver

From our work with customers and the open-source community, we've seen a clear picture emerge of what developers really need. A modern agent platform must go beyond offering models or orchestration. It has to empower teams across the entire lifecycle:

- Local-first prototyping: Developers want to stay in their flow. That means designing, tracing, and evaluating AI agents directly in their IDE with the same ease as writing and debugging code. If building an agent requires jumping into a separate UI or unfamiliar environment, iteration slows and adoption drops.

- Frictionless transition to production: A common frustration we hear is that an agent that runs fine locally becomes brittle or requires heavy rewrites in production. The right platform provides a single, consistent API surface from experimentation to deployment, so what works in development works in production, with scale, security, and governance layered in automatically.

- Open by design: No two organizations use the exact same stack. Developers may start with LangGraph for orchestration, LlamaIndex for data retrieval, or CrewAI for coordination. Others prefer Microsoft's first-party frameworks like Semantic Kernel or AutoGen. A modern platform must support this diversity without forcing lock-in, while still offering enterprise-grade pathways for those who want them.

- Interop by design: Agents are rarely self-contained. They must talk to tools, databases, and even other agents across different ecosystems. Proprietary protocols create silos and fragmentation. Open standards like the Model Context Protocol (MCP) and Agent-to-Agent (A2A) unlock collaboration across platforms, enabling a marketplace of interoperable tools and reusable agent skills.

- One-stop integration fabric: An agent's real value comes when it can take meaningful action: updating a record in Dynamics 365, triggering a workflow in ServiceNow, querying a SQL database, or posting to Teams. Developers shouldn't have to rebuild connectors for every integration. A robust agent platform provides a broad library of prebuilt connectors and simple ways to plug into enterprise systems.

- Built-in guardrails: Enterprises cannot afford agents that are opaque, unreliable, or non-compliant. Observability, evaluations, and governance must be woven into the development loop, not added as an afterthought. The ability to trace agent reasoning, run continuous evaluations, and enforce identity, security, and compliance policies is as critical as the models themselves.

How Azure AI Foundry delivers this experience

Azure AI Foundry is designed to meet developers where they are, while giving enterprises the trust, security, and scale they need. It connects the dots across IDEs, frameworks, protocols, and business channels, making the path from prototype to production seamless.

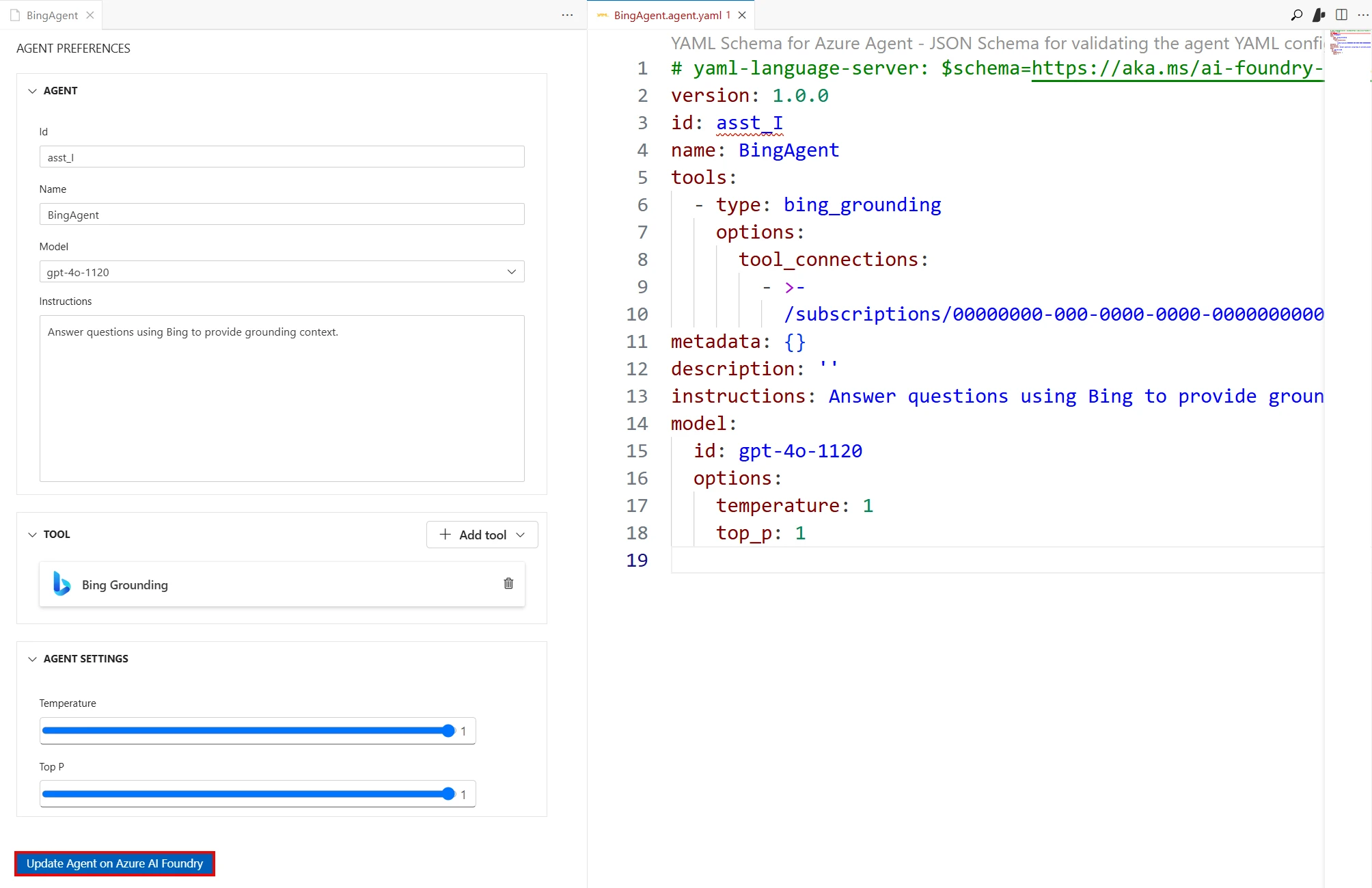

Build where developers live: VS Code, GitHub, and Foundry

Developers expect to design, debug, and iterate on AI agents in their daily tools, not switch into unfamiliar environments. Foundry integrates deeply with both VS Code and GitHub to support this flow.

- VS Code extension for Foundry: Developers can create, run, and debug agents locally with a direct connection to Foundry resources. The extension scaffolds projects, provides integrated tracing and evaluation, and enables one-click deployment to Foundry Agent Service, all inside the IDE they already use.

- Model Inference API: With a single, unified inference endpoint, developers can evaluate performance across models and swap them without rewriting code. This flexibility accelerates experimentation while future-proofing applications against a fast-moving model ecosystem.

- GitHub Copilot and the coding agent: Copilot has grown beyond autocomplete into an autonomous coding agent that can take on issues, spin up a secure runner, and generate a pull request, signaling how agentic AI development is becoming a normal part of the developer loop. When used alongside Azure AI Foundry, developers can accelerate agent development by having Copilot generate agent code while pulling in the models, agent runtime, and observability tools from Foundry needed to build, deploy, and monitor production-ready agents.
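To make the "swap models without rewriting code" idea concrete, here is a minimal sketch of an evaluation loop in which the model name is the only thing that changes between runs. It does not use the Foundry SDK. `InferFn`, `fake_infer`, `EvalCase`, and the model names `model-a` and `model-b` are hypothetical stand-ins chosen so the example runs offline; a real setup would replace `fake_infer` with a call to the unified inference endpoint.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List

# Hypothetical shape of a unified inference call: (model_name, prompt) -> completion text.
# A real implementation would send the request to the platform's inference endpoint.
InferFn = Callable[[str, str], str]


@dataclass
class EvalCase:
    prompt: str
    expected_substring: str


def fake_infer(model: str, prompt: str) -> str:
    """Offline stub backend; the model names and answers are made up."""
    canned = {
        "model-a": {"capital of France?": "Paris is the capital of France."},
        "model-b": {"capital of France?": "I am not sure."},
    }
    return canned.get(model, {}).get(prompt, "")


def score_model(infer: InferFn, model: str, cases: List[EvalCase]) -> float:
    """Fraction of cases whose completion contains the expected substring."""
    if not cases:
        return 0.0
    hits = sum(1 for c in cases if c.expected_substring in infer(model, c.prompt))
    return hits / len(cases)


def compare_models(infer: InferFn, models: List[str], cases: List[EvalCase]) -> Dict[str, float]:
    """Run identical cases against each model; only the model name varies."""
    return {m: score_model(infer, m, cases) for m in models}


cases = [EvalCase("capital of France?", "Paris")]
scores = compare_models(fake_infer, ["model-a", "model-b"], cases)
print(scores)
```

Because the calling code depends only on the `(model, prompt)` signature, trying a new model is a one-line change to the list passed to `compare_models`.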

Use your frameworks

Agents are not one-size-fits-all, and developers often start with the frameworks they know best. Foundry embraces this diversity:

- First-party frameworks: Foundry supports both Semantic Kernel and AutoGen, with a convergence into a modern unified framework coming soon. This future-ready framework is designed for modularity, enterprise-grade reliability, and seamless deployment to Foundry Agent Service.

- Third-party frameworks: Foundry Agent Service integrates directly with CrewAI, LangGraph, and LlamaIndex, enabling developers to orchestrate multi-turn, multi-agent conversations across platforms. This ensures you can work with your preferred OSS ecosystem while still benefiting from Foundry's enterprise runtime.

Interoperability with open protocols

Agents don't live in isolation; they need to interoperate with tools, systems, and even other agents. Foundry supports open protocols by default:

- MCP: Foundry Agent Service allows agents to call any MCP-compatible tools directly, giving developers a simple way to connect external systems and reuse tools across platforms.

- A2A: Semantic Kernel supports A2A, implementing the protocol to enable agents to collaborate across different runtimes and ecosystems. With A2A, multi-agent workflows can span vendors and frameworks, unlocking scenarios like specialist agents coordinating to solve complex problems.
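For readers who have not seen MCP on the wire, the sketch below builds the kind of message an agent runtime sends when it invokes a tool. It assumes the JSON-RPC 2.0 framing and the `tools/call` method described in the public MCP specification. The tool name `lookup_order` and its argument are hypothetical, and the transport (stdio or HTTP) is deliberately left out. This is an illustration of the message shape, not of how Foundry Agent Service implements it.

```python
import json
from itertools import count
from typing import Any, Dict

# JSON-RPC requests carry an id so that responses can be matched to them.
_request_ids = count(1)


def build_tool_call(name: str, arguments: Dict[str, Any]) -> Dict[str, Any]:
    """Build an MCP-style tool invocation as a JSON-RPC 2.0 request object."""
    return {
        "jsonrpc": "2.0",
        "id": next(_request_ids),
        "method": "tools/call",
        "params": {"name": name, "arguments": arguments},
    }


# "lookup_order" is a made-up tool used only for illustration.
request = build_tool_call("lookup_order", {"order_id": "A-1001"})
print(json.dumps(request, indent=2))
```

Since every MCP-compatible tool accepts this same envelope, a tool written once can be reused by any agent runtime that speaks the protocol.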

Ship where the business runs

Building an agent is just the first step; impact comes when users can access it where they work. Foundry makes it easy to publish agents to both Microsoft and custom channels:

- Microsoft 365 and Copilot: Using the Microsoft 365 Agents SDK, developers can publish Foundry agents directly to Teams, Microsoft 365 Copilot, BizChat, and other productivity surfaces.

- Custom apps and APIs: Agents can be exposed as REST APIs, embedded into web apps, or integrated into workflows using Logic Apps and Azure Functions, with thousands of prebuilt connectors to SaaS and enterprise systems.

Observe and harden

Reliability and safety can't be bolted on later; they must be integrated into the development loop. As we explored in the previous blog, observability is essential for delivering AI that is not only effective, but also trustworthy. Foundry builds these capabilities directly into the developer workflow:

- Tracing and evaluation tools to debug, compare, and validate agent behavior before and after deployment.

- CI/CD integration with GitHub Actions and Azure DevOps, enabling continuous evaluation and governance checks on every commit.

- Enterprise guardrails, from networking and identity to compliance and governance, so that prototypes can scale confidently into production.
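One way to picture continuous evaluation on every commit is a small gate script that a CI job runs after the evaluation step. The sketch below is a generic illustration and not a Foundry feature: the metric names `groundedness` and `task_success`, the thresholds, and the scores are all hypothetical, and in a real pipeline the scores would be loaded from the evaluation run's output.

```python
from typing import Dict, List


def find_regressions(scores: Dict[str, float], thresholds: Dict[str, float]) -> List[str]:
    """Return one message per metric that is missing or below its minimum."""
    failures = []
    for metric, minimum in thresholds.items():
        value = scores.get(metric)
        if value is None:
            failures.append(f"{metric}: missing")
        elif value < minimum:
            failures.append(f"{metric}: {value:.2f} < {minimum:.2f}")
    return failures


# Hypothetical thresholds and scores, hard-coded so the sketch runs on its own.
thresholds = {"groundedness": 0.80, "task_success": 0.90}
scores = {"groundedness": 0.85, "task_success": 0.72}

failures = find_regressions(scores, thresholds)
for message in failures:
    print("FAIL", message)

# A CI job would pass this to sys.exit() so that a regression fails the build.
exit_code = 1 if failures else 0
```

Treating a missing metric as a failure is a deliberate choice here: an evaluation that silently stops reporting a score should block a merge just as a low score would.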

Why this matters now

Developer experience is the new productivity moat. Enterprises need to enable their teams to build and deploy AI agents quickly, confidently, and at scale. Azure AI Foundry delivers an open, modular, and enterprise-ready path: meeting developers in GitHub and VS Code, supporting both open-source and first-party frameworks, and ensuring agents can be deployed where users and data already live.

With Foundry, the path from prototype to production is smoother, faster, and more secure, helping organizations innovate at the speed of AI.

What's next

In Part 5 of the Agent Factory series, we'll explore how agents connect and collaborate at scale. We'll demystify the integration landscape, from agent-to-agent collaboration with A2A, to tool interoperability with MCP, to the role of open standards in ensuring agents can work across apps, frameworks, and ecosystems. Expect practical guidance and reference patterns for building truly connected agent systems.

Did you miss these posts in the series?