Today, AWS announced that Amazon Kinesis Data Streams now supports record sizes up to 10 MiB, a tenfold increase from the previous limit. With this launch, you can now publish intermittent larger data payloads to your data streams while continuing to use the existing Kinesis Data Streams APIs in your applications without additional effort. This launch is accompanied by a 2x increase in the maximum PutRecords request size, from 5 MiB to 10 MiB, simplifying data pipelines and reducing operational overhead for IoT analytics, change data capture, and generative AI workloads.

In this post, we explore Amazon Kinesis Data Streams large record support, including key use cases, configuration of maximum record sizes, throttling considerations, and best practices for optimal performance.

Real-world use cases

As data volumes grow and use cases evolve, we’ve seen increasing demand for supporting larger record sizes in streaming workloads. Previously, when you needed to process records larger than 1 MiB, you had two options:

- Split large records into multiple smaller records in producer applications and reassemble them in consumer applications

- Store large records in Amazon Simple Storage Service (Amazon S3) and send only metadata through Kinesis Data Streams

Both of these approaches are useful, but they add complexity to data pipelines, requiring additional code, increasing operational overhead, and complicating error handling and debugging, particularly when customers need to stream large records intermittently.

This enhancement improves ease of use and reduces operational overhead for customers handling intermittent data payloads across various industries and use cases. In the IoT analytics domain, connected vehicles and industrial equipment are generating increasing volumes of sensor telemetry data, with the size of individual telemetry records often exceeding the previous 1 MiB limit in Kinesis. This required customers to implement complex workarounds, such as splitting large records into multiple smaller ones or storing the large records separately and sending only metadata through Kinesis. Similarly, in database change data capture (CDC) pipelines, large transaction records can be produced, especially during bulk operations or schema changes. In the machine learning and generative AI domain, workflows increasingly require the ingestion of larger payloads to support richer feature sets and multimodal data types such as audio and images. The increase in the Kinesis record size limit from 1 MiB to 10 MiB reduces the need for these kinds of complex workarounds, simplifying data pipelines and reducing operational overhead for customers in IoT, CDC, and advanced analytics use cases. Customers can now more easily ingest and process these intermittent large data records using the same familiar Kinesis APIs.

How it works

To start processing larger records:

- Update your stream’s maximum record size limit (maxRecordSize) through the AWS Console, AWS CLI, or AWS SDKs.

- Continue using the same PutRecord and PutRecords APIs for producers.

- Continue using the same GetRecords or SubscribeToShard APIs for consumers.

Your stream will be in Updating status for a few seconds before being ready to ingest larger records.

Getting started

To start processing larger records with Kinesis Data Streams, you can update the maximum record size by using the AWS Management Console, AWS CLI, or AWS SDK.

On the AWS Management Console:

- Navigate to the Kinesis Data Streams console.

- Choose your stream and select the Configuration tab.

- Choose Edit (next to Maximum record size).

- Set your desired maximum record size (up to 10 MiB).

- Save your changes.

Note: This setting only adjusts the maximum record size for this Kinesis data stream. Before increasing this limit, verify that all downstream applications can handle larger records.

Most common consumers, such as Kinesis Client Library (starting with version 2.x), Amazon Data Firehose delivery to Amazon S3, and AWS Lambda, support processing records larger than 1 MiB. To learn more, refer to the Amazon Kinesis Data Streams documentation for large records.

You can also update this setting using the AWS CLI:

Or using the AWS SDK:
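A minimal Python sketch of the SDK path follows. It assumes the boto3 Kinesis client exposes an `update_max_record_size` method that takes `StreamARN` and `MaxRecordSizeInKiB` (mirroring the maxRecordSize setting described above); confirm the exact method and parameter names against the current boto3 documentation before relying on them. The helper name `set_max_record_size` and the 1,024 KiB lower bound are illustrative assumptions, not part of the service API.

```python
# Assumed bounds: 1 MiB (the previous limit) up to the new 10 MiB maximum.
MIN_RECORD_SIZE_KIB = 1024
MAX_RECORD_SIZE_KIB = 10 * 1024


def set_max_record_size(client, stream_arn, size_kib):
    """Validate the requested size locally, then ask Kinesis to apply it.

    `client` is expected to behave like boto3.client("kinesis"). The method
    and parameter names used below are assumptions to verify in the SDK docs.
    """
    if not MIN_RECORD_SIZE_KIB <= size_kib <= MAX_RECORD_SIZE_KIB:
        raise ValueError(
            f"size_kib must be between {MIN_RECORD_SIZE_KIB} and "
            f"{MAX_RECORD_SIZE_KIB}, got {size_kib}"
        )
    return client.update_max_record_size(
        StreamARN=stream_arn,
        MaxRecordSizeInKiB=size_kib,
    )


# Usage against a real stream (requires boto3 and AWS credentials):
#   import boto3
#   set_max_record_size(boto3.client("kinesis"), "<your-stream-arn>", 5 * 1024)
```

Passing the client in as an argument keeps the helper easy to exercise with a stub before pointing it at a live stream.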

Throttling and best practices for optimal performance

Individual shard throughput limits of 1 MiB/s for writes and 2 MiB/s for reads remain unchanged with support for larger record sizes. To work with large records, let’s understand how throttling works. In a stream, each shard has a throughput capacity of 1 MiB per second. To accommodate large records, each shard temporarily bursts up to 10 MiB/s, eventually averaging out to 1 MiB per second. To help visualize this behavior, think of each shard as having a capacity tank that refills at 1 MiB per second. After sending a large record (for example, a 10 MiB record), the tank begins refilling immediately, allowing you to send smaller records as capacity becomes available. This capacity to support large records is continuously refilled into the stream. The rate of refilling depends on the size of the large records, the size of the baseline records, the overall traffic pattern, and your chosen partition key strategy. When you process large records, each shard continues to process baseline traffic while using its burst capacity to handle these larger payloads.

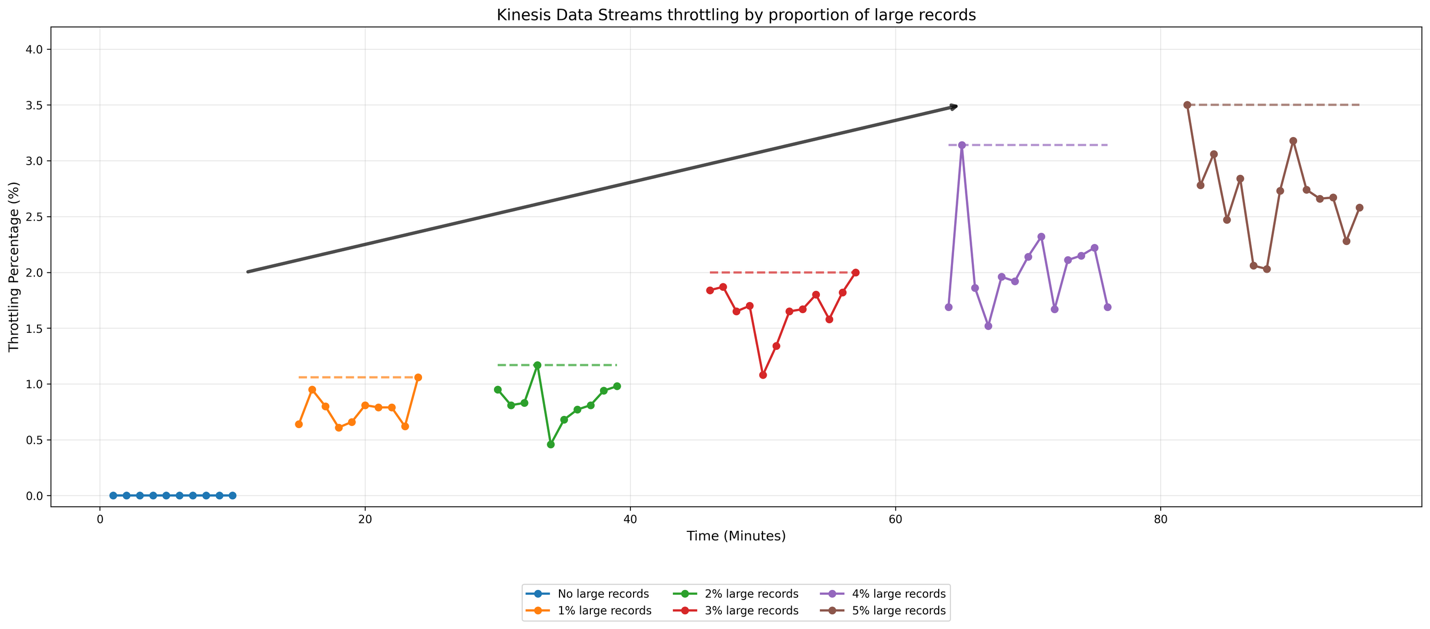
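The capacity tank analogy can be sketched as a simple token bucket. This is a simplified mental model under stated assumptions, not the service’s actual throttling algorithm: the class name `ShardTank`, the 10 MiB tank size (read off the burst figure above), and the all-or-nothing admission rule are all illustrative.

```python
from dataclasses import dataclass

MIB = 1024 * 1024


@dataclass
class ShardTank:
    """Toy model of one shard's write capacity as a refilling tank."""

    capacity: float = 10 * MIB     # assumed tank size, from the 10 MiB burst
    refill_rate: float = 1 * MIB   # bytes restored per second (1 MiB/s)
    level: float = 10 * MIB        # the tank starts full

    def advance(self, seconds: float) -> None:
        """Refill for `seconds` of elapsed time, never above capacity."""
        self.level = min(self.capacity, self.level + self.refill_rate * seconds)

    def try_put(self, size_bytes: int) -> bool:
        """Accept the record if enough capacity is left, else throttle it."""
        if size_bytes > self.level:
            return False
        self.level -= size_bytes
        return True


tank = ShardTank()
print(tank.try_put(10 * MIB))  # a 10 MiB record drains the full tank
print(tank.try_put(2 * MIB))   # an immediate 2 MiB record is throttled
tank.advance(2)                # two seconds later, 2 MiB has been refilled
print(tank.try_put(2 * MIB))   # the same record is now accepted
```

In this model a shard that has just absorbed a large record still accepts small baseline records as soon as a little capacity has refilled, which matches the behavior described above.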

To illustrate how Kinesis Data Streams handles different proportions of large records, let’s examine the results of a simple test. For our test configuration, we set up a producer that sends data to an on-demand stream (which defaults to 4 shards) at a rate of 50 records per second. The baseline records are 10 KiB in size, while large records are 2 MiB each. We ran multiple test cases by progressively increasing the proportion of large records from 1% to 5% of the total stream traffic, along with a baseline case containing no large records. To ensure consistent testing conditions, we distributed the large records uniformly over time; for example, in the 1% scenario, we sent one large record for every 100 baseline records. The following graph shows the results:

In the graph, horizontal annotations indicate throttling occurrence peaks. The baseline scenario, represented by the blue line, shows minimal throttling events. As the proportion of large records increases from 1% to 5%, we observe an increase in the rate at which your stream throttles your data, with a notable acceleration in throttling events between the 2% and 5% scenarios. This test demonstrates how Kinesis Data Streams manages an increasing proportion of large records.
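A back-of-the-envelope calculation helps put the test configuration in context. The sketch below computes only the average ingest rate for each scenario, assuming the percentage applies to record count, the stream stays at 4 shards, and traffic is spread evenly across them. It ignores per-shard bursts and on-demand scaling, so it is a rough guide rather than a prediction of throttling. The function and constant names are illustrative.

```python
KIB_PER_MIB = 1024


def average_load_kib_per_s(large_pct, records_per_s=50,
                           baseline_kib=10, large_kib=2 * KIB_PER_MIB):
    """Average ingest rate in KiB/s when large_pct percent of records are large."""
    weighted_kib = (100 - large_pct) * baseline_kib + large_pct * large_kib
    return records_per_s * weighted_kib / 100


SHARDS = 4
# Aggregate write limit: 1 MiB/s per shard across the assumed 4 shards.
WRITE_LIMIT_KIB_PER_S = SHARDS * 1 * KIB_PER_MIB

for pct in (0, 1, 2, 5):
    load = average_load_kib_per_s(pct)
    share = load / WRITE_LIMIT_KIB_PER_S
    print(f"{pct}% large records: {load:.0f} KiB/s ({share:.0%} of aggregate write limit)")
```

Under these assumptions the average load is 500 KiB/s with no large records, 1,519 KiB/s at 1%, 2,538 KiB/s at 2%, and 5,595 KiB/s at 5%. The 5% case exceeds the 4,096 KiB/s aggregate write limit of four shards, which is consistent with the sharp rise in throttling between the 2% and 5% scenarios.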

We recommend maintaining large records at 1-2% of your total record count for optimal performance. In production environments, actual stream behavior varies based on three key factors: the size of baseline records, the size of large records, and the frequency at which large records appear in the stream. We recommend that you test with your demand pattern to determine the specific behavior.

With on-demand streams, when the incoming traffic exceeds 500 KB/s per shard, Kinesis splits the shard within 15 minutes. The parent shard’s hash key values are redistributed evenly across child shards. Kinesis automatically scales the stream to increase the number of shards, enabling distribution of large records across a larger number of shards depending on the partition key strategy employed.
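To make the even redistribution concrete, here is a small sketch of how a parent hash key range could be halved and how a partition key lands in one of the resulting children. It relies on the documented behavior that Kinesis maps partition keys to 128-bit integers with MD5. The helper names (`hash_key`, `split_evenly`, `owning_shard`) and the midpoint rule are illustrative assumptions, not a description of the service’s internal implementation.

```python
import hashlib

MAX_HASH_KEY = 2**128 - 1  # hash keys span the 128-bit MD5 output space


def hash_key(partition_key: str) -> int:
    """Map a partition key to its 128-bit hash key using MD5."""
    digest = hashlib.md5(partition_key.encode("utf-8")).hexdigest()
    return int(digest, 16)


def split_evenly(start: int, end: int):
    """Split an inclusive hash key range into two equal child ranges."""
    mid = (start + end) // 2
    return (start, mid), (mid + 1, end)


def owning_shard(ranges, partition_key: str) -> int:
    """Return the index of the range that contains the key's hash."""
    value = hash_key(partition_key)
    for index, (start, end) in enumerate(ranges):
        if start <= value <= end:
            return index
    raise ValueError("hash key is not covered by any range")


children = split_evenly(0, MAX_HASH_KEY)
print(owning_shard(children, "device-42"))  # 0 or 1, depending on the MD5 hash
```

Because the hash of a well-distributed partition key is effectively uniform, each child range receives about half of the parent’s traffic after a split.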

For optimal performance with large records:

- Use a random partition key strategy to distribute large records evenly across shards.

- Implement backoff and retry logic in producer applications.

- Monitor shard-level metrics to identify potential bottlenecks.
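The first two recommendations can be combined in a producer helper along the following lines. This is a hedged sketch: `put_with_backoff`, the `Throttled` exception, and the delay values are hypothetical names and defaults chosen for illustration. In a real producer, `put` would wrap a call such as the PutRecord API, and `retry_on` would list the SDK’s throttling exception types. Note that random partition keys give up per-key ordering, so this strategy fits workloads that do not depend on it.

```python
import random
import time
import uuid


class Throttled(Exception):
    """Hypothetical stand-in for the SDK's throttling exception."""


def put_with_backoff(put, data, max_attempts=5, base_delay=0.1,
                     max_delay=5.0, sleep=time.sleep, retry_on=(Throttled,)):
    """Send one record with a random partition key, retrying when throttled.

    `put(data, partition_key)` performs the actual write. Retries use
    exponential backoff with full jitter, capped at `max_delay` seconds.
    """
    partition_key = uuid.uuid4().hex  # random key spreads records across shards
    for attempt in range(max_attempts):
        try:
            return put(data, partition_key)
        except retry_on:
            if attempt == max_attempts - 1:
                raise  # out of attempts: surface the throttling error
            delay = min(max_delay, base_delay * 2 ** attempt)
            sleep(random.uniform(0, delay))
```

With boto3, `put` could be a small wrapper such as `lambda data, key: client.put_record(StreamName=name, Data=data, PartitionKey=key)`, where `client` and `name` are your own Kinesis client and stream name.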

If you still need to continuously stream large records, consider using Amazon S3 to store payloads and send only metadata references to the stream. Refer to Processing large records with Amazon Kinesis Data Streams for more information.

Conclusion

Amazon Kinesis Data Streams now supports record sizes up to 10 MiB, a tenfold increase from the previous 1 MiB limit. This enhancement simplifies data pipelines for IoT analytics, change data capture, and AI/ML workloads by eliminating the need for complex workarounds. You can continue using existing Kinesis Data Streams APIs without additional code changes and benefit from increased flexibility in handling intermittent large payloads.

- For optimal performance, we recommend maintaining large records at 1-2% of total record count.

- For best results with large records, implement a uniformly distributed partition key strategy to evenly distribute records across shards, include backoff and retry logic in producer applications, and monitor shard-level metrics to identify potential bottlenecks.

- Before increasing the maximum record size, verify that all downstream applications and consumers can handle larger records.

We’re excited to see how you’ll use this capability to build more powerful and efficient streaming applications. To learn more, visit the Amazon Kinesis Data Streams documentation.

About the authors