Today we're announcing serverless GPU acceleration and auto-optimization for vector indexes in Amazon OpenSearch Service. These capabilities help you build large-scale vector databases faster and at lower cost, and automatically optimize vector indexes for the best trade-offs between search quality, speed, and cost.

Here are the new capabilities introduced today:

- GPU acceleration – You can build vector databases up to 10 times faster at a quarter of the indexing cost compared to non-GPU indexing, and you can create billion-scale vector databases in under an hour. With significant gains in cost savings and speed, you get an advantage in time-to-market, innovation velocity, and adoption of vector search at scale.

- Auto-optimization – You can find the best balance between search latency, quality, and memory requirements for your vector field without needing vector expertise. This optimization helps you achieve better cost savings and recall rates than default index configurations, whereas manual index tuning can take weeks to complete.

You can use these capabilities to build vector databases faster and more cost-effectively on OpenSearch Service. You can use them to power generative AI applications, search product catalogs and knowledge bases, and more. You can enable GPU acceleration and auto-optimization when you create a new OpenSearch domain or collection, or when you update an existing one.

Let's go through how it works!

GPU acceleration for vector index

When you enable GPU acceleration on your OpenSearch Service domain or Serverless collection, OpenSearch Service automatically detects opportunities to accelerate your vector indexing workloads. This acceleration helps build the vector data structures in your OpenSearch Service domain or Serverless collection.

You don't need to provision the GPU instances, manage their usage, or pay for idle time. OpenSearch Service securely isolates your accelerated workloads to your domain's or collection's Amazon Virtual Private Cloud (Amazon VPC) within your account. You pay only for useful processing through the OpenSearch Compute Units (OCU) – Vector Acceleration pricing.

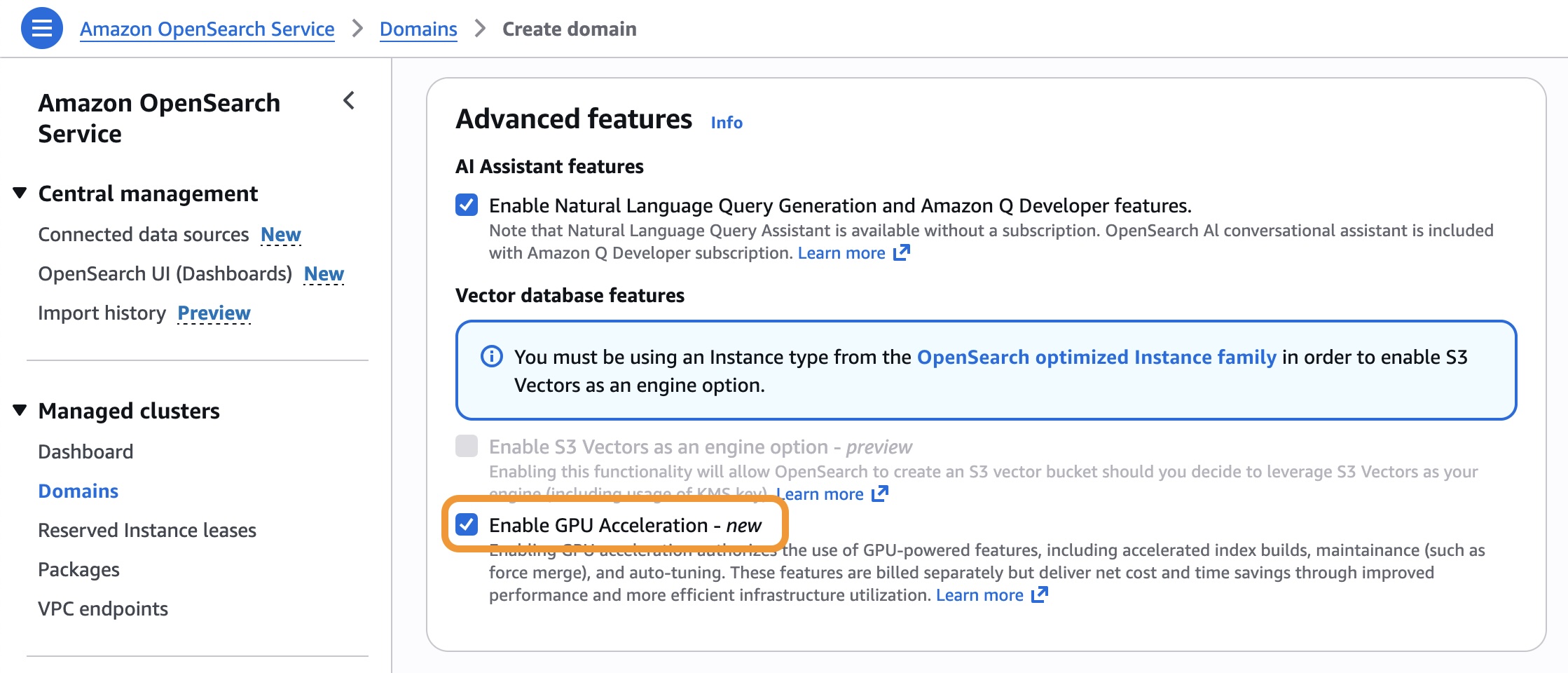

To enable GPU acceleration, go to the OpenSearch Service console and choose Enable GPU Acceleration in the Advanced features section when you create or update your OpenSearch Service domain or Serverless collection.

You can use the following AWS Command Line Interface (AWS CLI) command to enable GPU acceleration for an existing OpenSearch Service domain.

    $ aws opensearch update-domain-config \
        --domain-name my-domain \
        --aiml-options '{"ServerlessVectorAcceleration": {"Enabled": true}}'
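If you script this step, building the `--aiml-options` value from a data structure avoids hand-written JSON quoting mistakes. The following is a minimal Python sketch that only assembles the command shown above. The helper name `build_enable_gpu_command` is hypothetical, and the option keys are copied from the CLI example in this post rather than checked against an API reference.

```python
import json
import shlex


def build_enable_gpu_command(domain_name: str, enabled: bool = True) -> list:
    """Return the AWS CLI argument list for the update-domain-config call.

    Hypothetical helper: it mirrors the command in this post and does not
    contact AWS.
    """
    # Keys copied from the CLI example above (assumed, not verified here).
    aiml_options = {"ServerlessVectorAcceleration": {"Enabled": enabled}}
    return [
        "aws", "opensearch", "update-domain-config",
        "--domain-name", domain_name,
        "--aiml-options", json.dumps(aiml_options),
    ]


if __name__ == "__main__":
    command = build_enable_gpu_command("my-domain")
    # shlex.join quotes the JSON argument so the line can be pasted into a shell.
    print(shlex.join(command))
```

With the AWS CLI installed and configured, the returned list could be passed to `subprocess.run(command, check=True)`. Passing a list instead of a single string avoids shell quoting issues.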

You can create a vector index optimized for GPU processing by enabling index.knn.remote_index_build.enabled. This example index stores 768-dimensional vectors for text embeddings.

    PUT my-vector-index
    {
      "settings": {
        "index.knn": true,
        "index.knn.remote_index_build.enabled": true
      },
      "mappings": {
        "properties": {
          "vector_field": {
            "type": "knn_vector",
            "dimension": 768
          },
          "text": {
            "type": "text"
          }
        }
      }
    }

Now you can add vector data and optimize your index using standard OpenSearch Service operations such as the bulk API. GPU acceleration is automatically applied to indexing and force-merge operations.

POST my-vector-index/_bulk

{"index": {"_id": "1"}}

{"vector_field": [0.1, 0.2, 0.3, ...], "textual content": "Pattern doc 1"}

{"index": {"_id": "2"}}

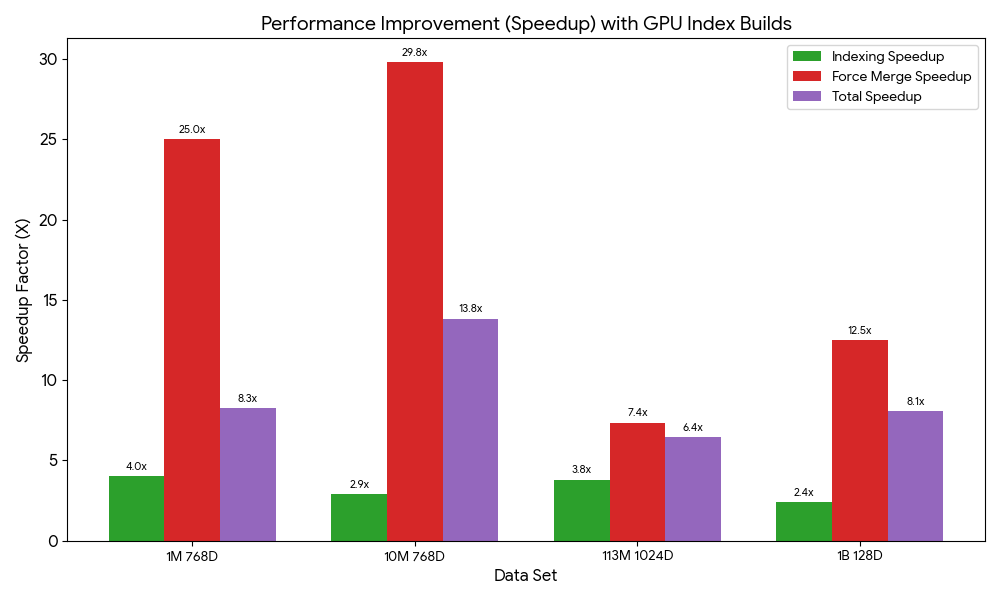

{"vector_field": [0.4, 0.5, 0.6, ...], "textual content": "Pattern doc 2"}We ran index construct benchmarks and noticed velocity good points from GPU acceleration ranging between 6.4 to 13.8 instances. Keep tuned for extra benchmarks and additional particulars in upcoming posts.
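The index definition and bulk request shown earlier can also be generated programmatically. The sketch below is a minimal illustration, not an official client. It only builds the request bodies, using the field names `vector_field` and `text` from the example index. The helper names `build_index_body` and `build_bulk_body` are hypothetical, and the toy vectors have 4 dimensions instead of 768 to keep the output readable.

```python
import json


def build_index_body(dimension: int) -> dict:
    """Index settings and mappings matching the PUT request in this post."""
    if dimension <= 0:
        raise ValueError("dimension must be a positive integer")
    return {
        "settings": {
            "index.knn": True,
            "index.knn.remote_index_build.enabled": True,
        },
        "mappings": {
            "properties": {
                "vector_field": {"type": "knn_vector", "dimension": dimension},
                "text": {"type": "text"},
            }
        },
    }


def build_bulk_body(docs: dict, dimension: int) -> str:
    """Serialize documents as newline-delimited JSON for the _bulk endpoint.

    docs maps a document ID to a (vector, text) pair.
    """
    lines = []
    for doc_id, (vector, text) in docs.items():
        if len(vector) != dimension:
            raise ValueError(
                f"document {doc_id}: expected {dimension} values, got {len(vector)}"
            )
        # Each document takes two lines: an action line, then the source.
        lines.append(json.dumps({"index": {"_id": doc_id}}))
        lines.append(json.dumps({"vector_field": vector, "text": text}))
    # The bulk API expects the body to end with a newline.
    return "\n".join(lines) + "\n"


if __name__ == "__main__":
    toy_dimension = 4  # illustration only; the example index uses 768
    docs = {
        "1": ([0.1, 0.2, 0.3, 0.4], "Sample document 1"),
        "2": ([0.5, 0.6, 0.7, 0.8], "Sample document 2"),
    }
    print(json.dumps(build_index_body(768), indent=2))
    print(build_bulk_body(docs, toy_dimension), end="")
```

Checking the vector length before sending catches dimension mismatches on the client side, before the request reaches the domain or collection.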

To learn more, visit GPU acceleration for vector indexing in the Amazon OpenSearch Service Developer Guide.

Auto-optimizing vector databases

You can use the new vector ingestion feature to ingest documents from Amazon Simple Storage Service (Amazon S3), generate vector embeddings, optimize indexes automatically, and build large-scale vector indexes in minutes. During ingestion, auto-optimization generates recommendations based on the vector fields and indexes of your OpenSearch Service domain or Serverless collection. You can choose one of these recommendations to quickly ingest and index your vector dataset instead of manually configuring these mappings.

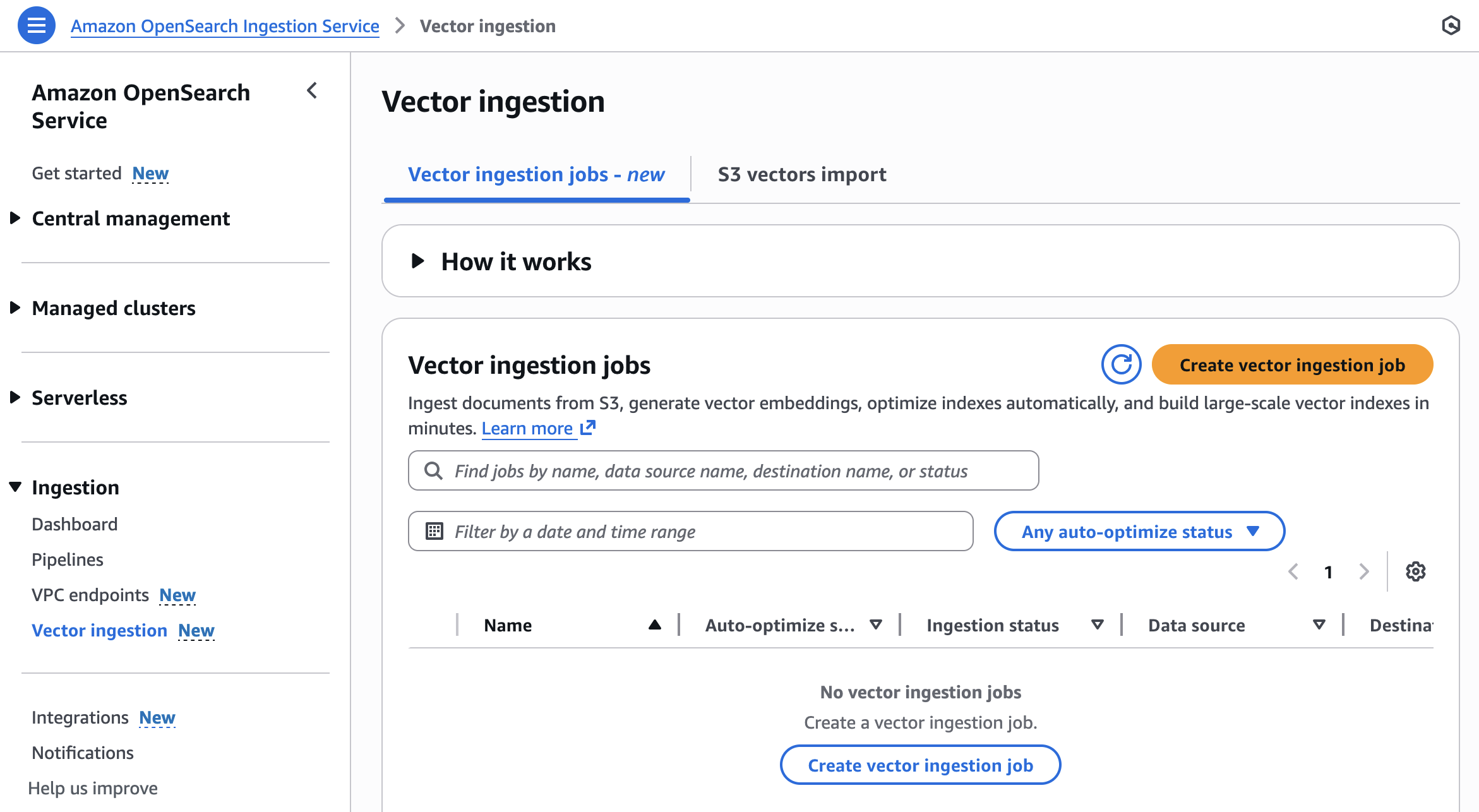

To get started, choose Vector ingestion under the Ingestion menu in the left navigation pane of the OpenSearch Service console.

You can create a new vector ingestion job with the following steps:

- Prepare dataset – Prepare OpenSearch Service Parquet documents in an S3 bucket and choose a domain or collection as your destination.

- Configure index and automate optimizations – Auto-optimize your vector fields or manually configure them.

- Ingest and accelerate indexing – Use OpenSearch ingestion pipelines to load data from Amazon S3 into OpenSearch Service. Build large vector indexes up to 10 times faster at a quarter of the cost.

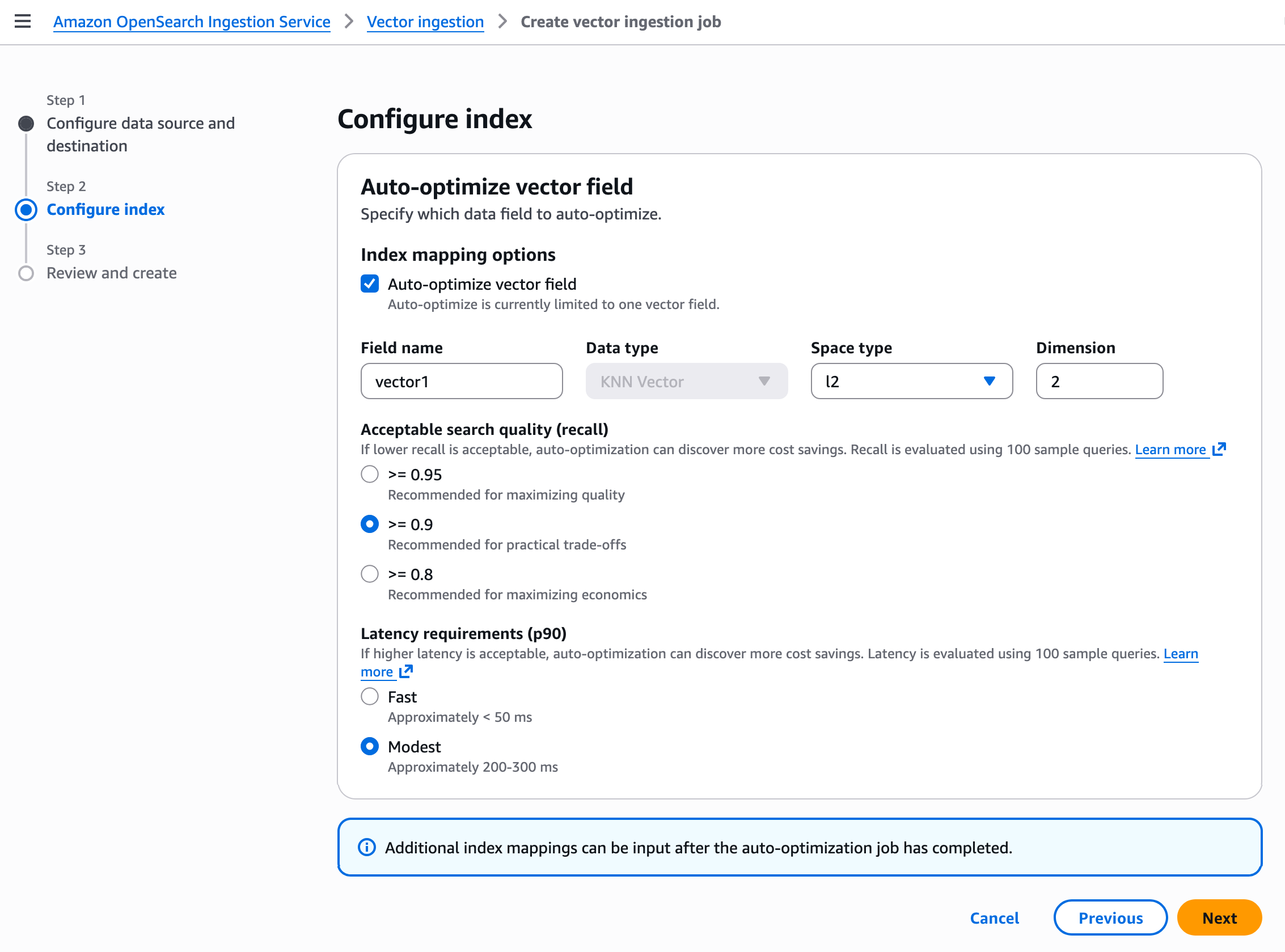

In Step 2, configure your vector index with the auto-optimize vector field option. Auto-optimize is currently limited to one vector field. You can enter further index mappings after the auto-optimization job has completed.

Your vector field optimization settings depend on your use case. For example, if you need high search quality (recall rate) and don't need faster responses, then choose Modest for the Latency requirements (p90) and greater than or equal to 0.9 for the Acceptable search quality (recall). When you create a job, it starts to ingest vector data and auto-optimize the vector index. The processing time depends on the vector dimensionality.
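To make the two settings concrete, here is a small Python sketch of how recall and p90 latency are conventionally computed. This post doesn't describe how OpenSearch Service measures them internally, so treat it as the textbook definitions under that assumption. The function names and sample data are hypothetical.

```python
def recall_at_k(retrieved: list, relevant: list, k: int) -> float:
    """Fraction of the true top-k neighbors that appear in the top-k results.

    retrieved: IDs returned by the approximate search, best first.
    relevant:  IDs of the exact nearest neighbors, best first.
    """
    if k <= 0:
        raise ValueError("k must be positive")
    true_top_k = set(relevant[:k])
    if not true_top_k:
        raise ValueError("relevant must not be empty")
    hits = len(set(retrieved[:k]) & true_top_k)
    return hits / len(true_top_k)


def p90(latencies_ms: list) -> float:
    """90th-percentile latency using the nearest-rank method."""
    if not latencies_ms:
        raise ValueError("latencies_ms must not be empty")
    ordered = sorted(latencies_ms)
    # Integer form of ceil(0.9 * n), giving a 1-based rank.
    rank = (9 * len(ordered) + 9) // 10
    return ordered[rank - 1]


if __name__ == "__main__":
    exact = ["a", "b", "c", "d", "e"]
    approximate = ["a", "c", "b", "x", "e"]
    print("recall@5:", recall_at_k(approximate, exact, 5))
    print("p90 (ms):", p90([12, 15, 11, 40, 13, 14, 16, 12, 13, 90]))
```

A recall target of 0.9 means that, on average, at least 9 of every 10 true nearest neighbors should appear in the results. A p90 latency target bounds the response time for 90 percent of queries.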

To learn more, visit Auto-optimize vector index in the OpenSearch Service Developer Guide.

Now available

GPU acceleration in Amazon OpenSearch Service is now available in the US East (N. Virginia), US West (Oregon), Asia Pacific (Sydney), Asia Pacific (Tokyo), and Europe (Ireland) Regions. Auto-optimization in OpenSearch Service is now available in the US East (Ohio), US East (N. Virginia), US West (Oregon), Asia Pacific (Mumbai), Asia Pacific (Singapore), Asia Pacific (Sydney), Asia Pacific (Tokyo), Europe (Frankfurt), and Europe (Ireland) Regions.

OpenSearch Service charges separately for the OCU – Vector Acceleration you use, and only for indexing your vector databases. For more information, visit the OpenSearch Service pricing page.

Give it a try and send feedback to AWS re:Post for Amazon OpenSearch Service or through your usual AWS Support contacts.

— Channy