Whether you are enhancing the resilience of mission-critical workloads or modernizing legacy systems, Azure Storage has a solution for you.

Microsoft is redefining what’s possible in the public cloud and driving the next wave of AI-powered transformation for organizations. Whether you’re pushing the boundaries with AI, enhancing the resilience of mission-critical workloads, or modernizing legacy systems with cloud-native solutions, Azure Storage has a solution for you.

At Microsoft Ignite 2025 and KubeCon North America last month, we showcased the latest innovations in Azure Storage that power your workloads. Here’s a recap of those releases and advancements.

Innovating for the future with AI

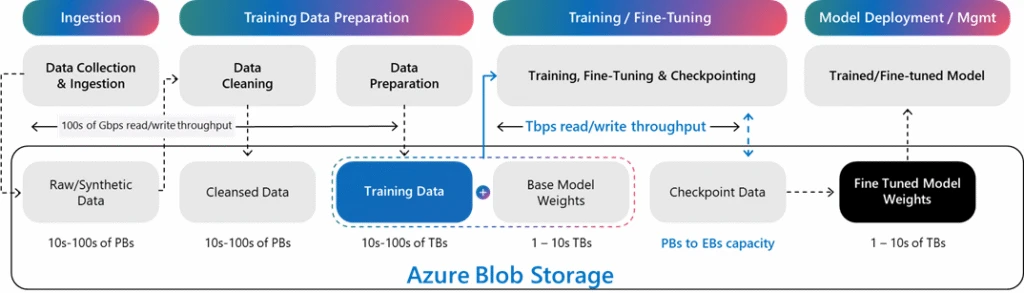

Azure Blob Storage provides a unified storage foundation for the entire AI lifecycle, powering everything from ingestion and preparation to checkpoint management and model deployment.

To enable customers to rapidly train, fine-tune, and deploy AI models, we evolved the Azure Blob Storage architecture to scale and deliver exabytes of capacity, tens of Tbps of throughput, and millions of IOPS to GPUs. In this video, you can see a single storage account scaling to over 50 Tbps of read throughput. Azure Blob Storage is also the foundation that enables OpenAI to train and serve models at unprecedented speed and scale.
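To put the 50 Tbps read figure in more familiar terms, here is a rough back-of-the-envelope sketch. It assumes decimal units (1 Tb = 10^12 bits, 1 PB = 10^15 bytes) and throughput sustained for the whole transfer; the function names are illustrative and not part of any Azure SDK.

```python
BITS_PER_BYTE = 8


def tbps_to_bytes_per_second(tbps: float) -> float:
    """Convert terabits per second to bytes per second (decimal units assumed)."""
    return tbps * 1e12 / BITS_PER_BYTE


def seconds_to_read(dataset_bytes: float, tbps: float) -> float:
    """Idealized time to read a dataset at a sustained throughput in Tbps."""
    if tbps <= 0:
        raise ValueError("throughput must be positive")
    return dataset_bytes / tbps_to_bytes_per_second(tbps)


if __name__ == "__main__":
    rate = tbps_to_bytes_per_second(50)
    print(f"{rate / 1e12:.2f} TB/s")                          # 6.25 TB/s
    print(f"{seconds_to_read(1e15, 50):.0f} s to read 1 PB")  # 160 s to read 1 PB
```

Under these assumptions, 50 Tbps is about 6.25 TB/s, so a 1 PB dataset could in principle be read in under three minutes. Real workloads will differ depending on object sizes, client count, and network path.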

For customers handling terabyte- or petabyte-scale AI training data, Azure Managed Lustre (AMLFS) is a high-performance parallel file system that delivers massive throughput and parallel I/O to keep GPUs continuously fed with data. AMLFS 20 (preview) supports 25 PiB namespaces and up to 512 GBps of throughput. Hierarchical Storage Management (HSM) integration enhances AMLFS scalability by enabling seamless data movement between AMLFS and your exabyte-scale datasets in Azure Blob Storage. Auto-import (preview) lets you pull only the required datasets into AMLFS, and auto-export sends trained models to long-term storage or inferencing.

Rakuten is accelerating the training of Japanese large language models on Microsoft Azure, leveraging Azure Managed Lustre, Azure Blob Storage, and Azure Kubernetes Service to maximize GPU utilization and simplify scaling.

Natalie Mao, VP, AI & Data Division, Rakuten Group

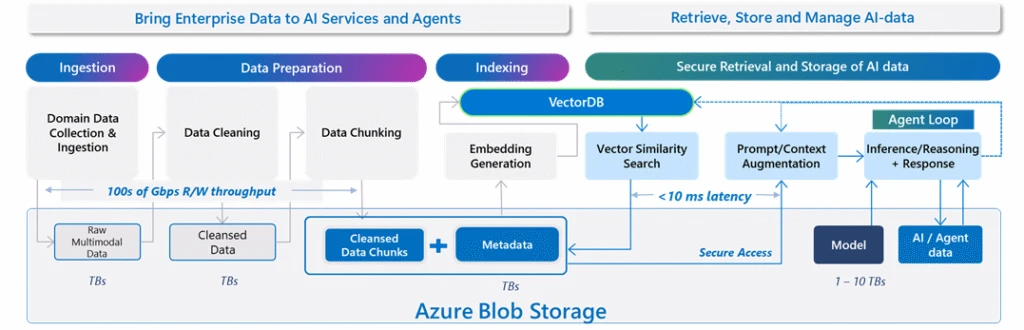

Once models are trained and fine-tuned, inferencing takes center stage, delivering real-time predictions and insights. Azure Blob Storage provides best-in-class storage for Microsoft AI services, including Microsoft Foundry Agent Knowledge (preview) and AI Search retrieval agents (preview). Customers can bring their own storage accounts for full flexibility and control, ensuring that enterprise data stays secure and ready for retrieval-augmented generation (RAG).

Additionally, Premium Blob Storage delivers consistent low latency and up to 3x faster retrieval performance, which is critical for RAG agents. For customers who prefer open-source AI frameworks, Azure Storage built the LangChain Azure Blob Loader, which delivers granular security, memory-efficient loading of millions of objects, and up to 5x faster performance compared to prior community implementations.

Azure Storage is evolving into an integrated, intelligent, AI-driven platform that simplifies management of exabyte-scale AI data. Storage Discovery and Copilot work together to help you analyze and understand how your data estate is evolving over time, using dashboards and questions in natural language. With Storage Discovery and Storage Actions, you can optimize costs, protect your data, and govern large datasets with hundreds of billions of objects used for training and fine-tuning.

Optimizing modern applications with Cloud Native

Modern cloud-native applications demand agility. Two principles consistently stand out: elasticity and flexibility. Your storage should scale seamlessly with dynamic workloads, without operational overhead. The innovations below are designed for the cloud, enabling you to auto-scale, optimize costs intelligently, and deliver the performance that modern applications need.

Azure Elastic SAN provides cloud-native block storage built for scale, with tight Kubernetes integration for fast scaling and multi-tenancy that optimizes cost. With new auto-scaling support, Elastic SAN automatically expands resources as needed, making it easier to manage storage footprints across workloads. Early next year, we’ll extend Kubernetes integration through Azure Container Storage for Azure Kubernetes Service (AKS) to general availability (GA). These enhancements let you preserve familiar hosting environments while layering in cloud-native capabilities.

Cloud-native agility is also critical for modern applications built on object storage, which need to optimize cost and performance for dynamic and unpredictable traffic patterns. Smart Tier (preview) on Azure Blob Storage continuously analyzes access patterns and moves data between tiers automatically.

New data starts in the hot tier. After 30 days of inactivity, it moves to cool, and after 90 days, to cold. If an object is accessed again, it’s promoted back to hot, which keeps data in the most cost-effective tier automatically. You can optimize costs without sacrificing performance, simplifying data management at scale and keeping your focus on building.
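The tiering rules described here can be illustrated with a small toy model. This is only a sketch: it does not call any Azure API, the names (`tier_for_idle_days`, `TrackedObject`) are hypothetical, and it assumes that both thresholds count total days since the last access and that a move happens once the threshold is reached.

```python
from dataclasses import dataclass
from datetime import date

# Thresholds taken from the description above; boundary handling is an assumption.
COOL_AFTER_DAYS = 30
COLD_AFTER_DAYS = 90


def tier_for_idle_days(idle_days: int) -> str:
    """Return the tier an object would sit in after `idle_days` without access."""
    if idle_days < 0:
        raise ValueError("idle_days must be non-negative")
    if idle_days >= COLD_AFTER_DAYS:
        return "cold"
    if idle_days >= COOL_AFTER_DAYS:
        return "cool"
    return "hot"


@dataclass
class TrackedObject:
    """Hypothetical stand-in for a blob whose last access time is tracked."""
    name: str
    last_access: date

    def tier_on(self, today: date) -> str:
        return tier_for_idle_days((today - self.last_access).days)

    def access(self, today: date) -> None:
        # Any access resets the idle clock, which promotes the object back to hot.
        self.last_access = today


if __name__ == "__main__":
    obj = TrackedObject("checkpoint.bin", date(2025, 1, 1))
    print(obj.tier_on(date(2025, 1, 15)))  # hot  (14 idle days)
    print(obj.tier_on(date(2025, 2, 15)))  # cool (45 idle days)
    print(obj.tier_on(date(2025, 5, 1)))   # cold (120 idle days)
    obj.access(date(2025, 5, 1))
    print(obj.tier_on(date(2025, 5, 1)))   # hot  (just accessed)
```

The model keeps only a last-access date per object, which is enough to express both demotion by inactivity and promotion on access; how the actual service tracks access is not described in this post.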

Hosting mission-critical workloads

Enterprises today run mission-critical workloads that require block storage with predictable performance and uncompromising business continuity. Azure Ultra Disk is our highest-performance block storage offering, purpose-built for workloads like high-frequency trading, ecommerce platforms, transactional databases, and electronic health record systems that demand exceptional speed, reliability, and scalability.

With Azure Ultra Disk, we can confidently scale our platform globally, knowing that performance and resilience will meet enterprise expectations. That consistency allows our teams to focus on AI innovation and workflow automation rather than infrastructure.

Charles McDaniels, Director of Systems Engineering Management for Global Cloud Services, ServiceNow

We know performance, cost, and business continuity remain the top priorities for our customers, and we’re raising the bar in every category:

- Performance: We have further improved the average latency of Azure Ultra Disk by 30%, with average latency well under 0.5 ms for small IOs on virtual machines (VMs) with Azure Boost. A single Azure Ultra Disk can deliver industry-leading performance of 400K IOPS and 10 GBps of throughput. In addition, with Ebsv6 VMs, both Premium SSD v2 and Azure Ultra Disk can deliver industry-leading VM performance scale of 800K IOPS and 14 GBps of throughput for the most demanding applications.

- Cost: Flexible provisioning for Azure Ultra Disk reduces total cost of ownership by up to 50%, letting you scale capacity, IOPS, and MBps independently at finer granularity.

- Business continuity: Instant Access Snapshots (preview) let you back up and restore your workloads instantly with exceptional performance on rehydration. This differentiated experience for Azure Premium SSD v2 and Ultra Disk helps eliminate the operational overhead of monitoring snapshot readiness or pre-warming resources, while reducing recovery, refresh, and scale-out times from hours to seconds.

Azure NetApp Files (ANF) is designed to deliver low latency, high performance, and data management at scale. Its large volumes capabilities have been significantly expanded, with an over 3x increase in single-volume capacity to 7.2 PiB and a 4x increase in throughput to 50 GiBps. Cache volumes bring data and files closer to where users need rapid access, in a space-efficient footprint. These capabilities make ANF suitable for several high-performance computing workloads such as Electronic Design Automation (EDA), Seismic Interpretation and Visualization, Reservoir Simulations, and Risk Modeling. Microsoft is not only positioning ANF for mission-critical applications but also using ANF for in-house silicon design.

Breaking boundaries—migrating your storage infrastructure

Every organization’s cloud journey is unique. Whether you need to move existing environments to the cloud with minimal disruption or plan a full modernization, Azure Storage offers solutions for you. Storage Migration Solution Advisor in Copilot can provide recommendations to help streamline the decision-making process for these migrations.

Azure Data Box and Storage Mover simplify the migration journey from on-premises and other clouds to Azure. The next-generation Azure Data Box is now GA. Storage Mover is our fully managed data migration service that’s secure, efficient, and scalable, with new capabilities: on-premises NFS shares to Azure Files NFS 4.1, on-premises SMB shares to Azure Blob Storage, and cloud-to-cloud transfers. Storage Migration Solution Advisor in Copilot accelerates decision-making for migrations.

For users ready to migrate their NAS data estates, Azure Files now makes this easier than ever. We have introduced a new management model that makes file shares easier and more cost-effective to use. Additionally, Azure Files now lets you eliminate complex on-premises Active Directory or domain controller infrastructure, with Entra-only identities for SMB shares. With cloud-native identity support, you can now manage your user permissions directly in Azure, including external identities for applications like Azure Virtual Desktop (AVD).

Entra-only identities support with Azure Files transforms SLB’s Petrel workflows by removing dependencies on on-premises domain controllers, simplifying identity management and storage infrastructure for globally distributed teams working on complex exploration and reservoir characterization. This cloud-native architecture allows customers to access SMB shares in an easy and secure manner without complex VPN or hybrid infrastructure setups.

Swapnil Daga, Storage Architect for Tenant Infrastructure, SLB

ANF Migration Assistant simplifies moving ONTAP workloads from on-premises or other clouds to Azure. Behind the scenes, the Migration Assistant uses NetApp’s SnapMirror replication technology, providing efficient, full-fidelity, block-level incremental transfers. You can now migrate large datasets without impacting production workloads.

For customers running on-premises partner solutions who want to migrate to Azure using the same partner-provided technology, Azure has recently launched Azure Native offers with Pure Storage and Dell PowerScale.

To make migrations easier, Azure Storage’s Migration Program connects you with a robust ecosystem of experts and tools. Trusted partners like Atempo, Cirata, Cirrus Data, and Komprise can accelerate migration of SAN and NAS workloads. This program offers secure, low-risk transfers of files, objects, and block storage to help enterprises unlock the full potential of Azure.

Start your next chapter with Azure Storage

The era of AI-powered transformation is here. Begin your journey by exploring Azure’s advanced storage offerings and migration tools, designed to accelerate AI adoption, cloud migration, and modernization. Take the next step today and unlock new possibilities with Azure Storage as the foundation for your AI initiatives.

For any questions, reach out at azurestoragefeedback@microsoft.com.