As technology progresses, the Internet of Things (IoT) expands to encompass more and more things. As a result, organizations collect vast amounts of data from diverse sensor devices monitoring everything from industrial equipment to smart buildings. These sensor devices frequently undergo firmware updates, software modifications, or configuration changes that introduce new monitoring capabilities or retire obsolete metrics. Consequently, the data structure (schema) of the information transmitted by these devices evolves continuously.

Organizations commonly choose Apache Avro as their data serialization format for IoT data because of its compact binary format, built-in schema evolution support, and compatibility with big data processing frameworks. This becomes crucial when sensor manufacturers release updates that add new metrics or deprecate old ones. For example, when a sensor manufacturer releases a firmware update that adds new temperature precision metrics or deprecates legacy vibration measurements, Avro’s schema evolution capabilities allow these changes to be handled without breaking existing data processing pipelines.

However, managing schema evolution at scale presents significant challenges. For example, organizations need to store and process data from hundreds of sensors that update their schemas independently, handle schema changes occurring as frequently as every hour because of rolling device updates, maintain historical data compatibility while accommodating new schema versions, query data across multiple time periods with different schemas for temporal analysis, and keep query failures caused by schema mismatches to a minimum.

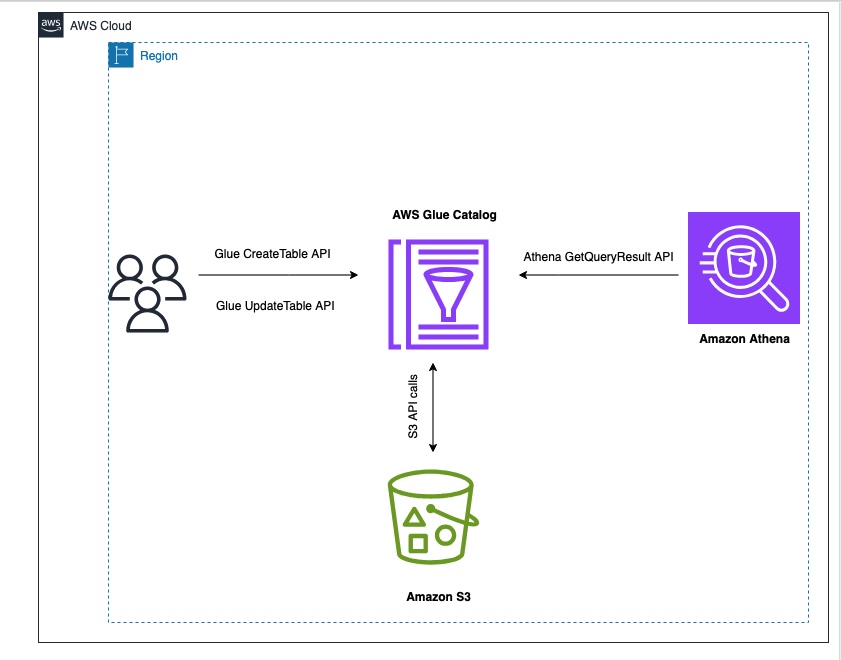

To address this challenge, this post demonstrates how to build such a solution by combining Amazon Simple Storage Service (Amazon S3) for data storage, AWS Glue Data Catalog for schema management, and Amazon Athena for ad hoc querying. We focus specifically on handling Avro-formatted data in partitioned S3 buckets, where schemas can change frequently, while providing consistent query capabilities across all data regardless of schema version.

This solution is specifically designed for Hive-based tables, such as those in the AWS Glue Data Catalog, and isn’t applicable to Iceberg tables. By implementing this approach, organizations can build a highly adaptive and resilient analytics pipeline capable of handling extremely frequent Avro schema changes in partitioned S3 environments.

Solution overview

In this post, as an example, we simulate a real-world IoT data pipeline with the following requirements:

- IoT devices continuously upload sensor data in Avro format to an S3 bucket, simulating real-time IoT data ingestion

- The schema changes frequently over time

- Data is partitioned hourly to reflect typical IoT data ingestion patterns

- Data needs to be queryable using the latest schema version through Amazon Athena

To meet these requirements, we demonstrate the solution using automated schema detection. We use AWS Command Line Interface (AWS CLI) and AWS SDK for Python (Boto3) scripts to simulate an automated mechanism that continually monitors the S3 bucket for new data, detects schema changes in incoming Avro files, and triggers the necessary updates to the AWS Glue Data Catalog.

For schema evolution handling, our solution demonstrates how to create and update table definitions in the AWS Glue Data Catalog, incorporate Avro schema literals to handle schema changes, and use Athena partition projection for efficient querying across schema versions. The data steward or admin needs to know when and how the schema is updated so that they can manually change the columns in the UpdateTable API call. For validation and querying, we use Amazon Athena queries to verify table definitions and partition details and to demonstrate successful querying of data across different schema versions. By simulating these components, our solution addresses the key requirements outlined in the introduction:

- Handling frequent schema changes (as often as hourly)

- Managing data from hundreds of sensors updating independently

- Maintaining historical data compatibility while accommodating new schemas

- Enabling querying across multiple time periods with different schemas

- Minimizing query failures caused by schema mismatches

Although in a production environment this would be integrated into a sophisticated IoT data processing application, our simulation using AWS CLI and Boto3 scripts effectively demonstrates the principles and techniques for managing schema evolution in large-scale IoT deployments.
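The schema-change detection step mentioned above can be pictured with a small comparison routine. The following is a minimal sketch, not the mechanism used in this post: it assumes the Avro schemas have already been read into Python dictionaries, it only walks nested records (unions, arrays, and maps are ignored), and the function names and the `long` field types are hypothetical choices made for illustration.

```python
def field_paths(schema, prefix=""):
    """Return the dotted paths of every field in an Avro record schema."""
    paths = set()
    for field in schema.get("fields", []):
        path = prefix + field["name"]
        paths.add(path)
        field_type = field["type"]
        # Recurse into nested records; unions, arrays, and maps are not handled.
        if isinstance(field_type, dict) and field_type.get("type") == "record":
            paths |= field_paths(field_type, prefix=path + ".")
    return paths


def diff_schemas(old_schema, new_schema):
    """Report the field paths added to or removed from a schema."""
    old_paths = field_paths(old_schema)
    new_paths = field_paths(new_schema)
    return {
        "added": sorted(new_paths - old_paths),
        "removed": sorted(old_paths - new_paths),
    }


# Illustrative schemas modeled on the fields used in this post (types assumed).
SCHEMA_V1 = {
    "type": "record",
    "name": "sensor_record",
    "fields": [
        {"name": "customerID", "type": "long"},
        {"name": "sentiment", "type": {
            "type": "record",
            "name": "sentiment",
            "fields": [{"name": "customerrating", "type": "long"}],
        }},
    ],
}

SCHEMA_V2 = {
    "type": "record",
    "name": "sensor_record",
    "fields": [
        {"name": "customerID", "type": "long"},
        {"name": "sentiment", "type": {
            "type": "record",
            "name": "sentiment",
            "fields": [
                {"name": "customerrating", "type": "long"},
                {"name": "visibility", "type": "long", "default": 0},
            ],
        }},
    ],
}

print(diff_schemas(SCHEMA_V1, SCHEMA_V2))
# {'added': ['sentiment.visibility'], 'removed': []}
```

A non-empty `added` or `removed` list is the kind of signal that would prompt an update to the table definition in the AWS Glue Data Catalog.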

The following diagram illustrates the solution architecture.

Prerequisites

To implement the solution, you need to have the following prerequisites:

Create the base table

In this section, we simulate the initial setup of a data pipeline for IoT sensor data. This step establishes the foundation for our schema evolution demonstration: the initial table serves as the starting point from which the schema evolves, which lets us show how to handle schema changes over time. In this scenario, the base table contains three key fields: customerID (bigint), sentiment (a struct containing customerrating), and dt (string) as a partition column. The table definition also includes the Avro schema literal (`avro.schema.literal`) along with other configurations. Follow these steps:

- Create a new file named `CreateTableAPI.py` with the following content. Replace `'Location': 's3://amzn-s3-demo-bucket/'` with your S3 bucket details and

- Run the script using the command:

The schema literal serves as a form of metadata, providing a clear description of your data structure. In Amazon Athena, the Avro table schema Serializer/Deserializer (SerDe) properties are essential for making sure the schema is compatible with the data stored in files, so that query engines can translate it accurately. These properties enable the precise interpretation of Avro-formatted data, allowing query engines to correctly read and process the information during execution.

The Avro schema literal provides a detailed description of the data structure at the partition level. It defines the fields, their data types, and any nested structures within the Avro data. Amazon Athena uses this schema to correctly interpret the Avro data stored in Amazon S3. It makes sure that each field in the Avro file is mapped to the correct column in the Athena table.

The schema information helps Athena optimize query execution by understanding the data structure up front, so it can make informed decisions about how to process and retrieve data efficiently. When the Avro schema changes (for example, when new fields are added), updating the schema literal allows Athena to recognize and work with the new structure. This is crucial for maintaining query compatibility as your data evolves over time. The schema literal also provides explicit type information, which is essential for Avro’s type system and enables accurate data type conversion between Avro and Athena SQL types.

For complex Avro schemas with nested structures, the schema literal tells Athena how to navigate and query these nested elements. The Avro schema can specify default values for fields, which Athena can use when querying data where certain fields might be missing. Athena can use the schema to perform compatibility checks between the table definition and the actual data, helping to identify potential issues. In the SerDe properties, the schema literal tells the Avro SerDe how to deserialize the data when reading it from Amazon S3.

It’s crucial for the SerDe to correctly interpret the binary Avro format into a form Athena can query. The detailed schema information aids in query planning, allowing Athena to make informed decisions about how to execute queries efficiently. The Avro schema literal specified in the table’s SerDe properties provides Athena with the exact field mappings, data types, and physical structure of the Avro file. This lets Athena map the columns a query requests to the matching Avro fields. Because Avro is a row-oriented format, Athena still reads whole records from S3 and then projects the requested fields; it cannot skip to per-column byte offsets the way it can with columnar formats such as Parquet or ORC.
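To make the role of the schema literal concrete, the following is a minimal sketch of how a base table like the one described above could be defined with the AWS Glue `create_table` API. It is not the `CreateTableAPI.py` script from this post. The database name, table name, record name, and the `long`/`bigint` type of customerrating are assumptions made for illustration; the Avro input format, output format, and SerDe class names are the standard Hive ones.

```python
import json

# Hypothetical names for illustration; replace them with your own.
DATABASE = "iot_demo_db"
TABLE = "sensor_readings"
LOCATION = "s3://amzn-s3-demo-bucket/"

# Avro schema for the base table (field types are assumed).
BASE_SCHEMA = {
    "type": "record",
    "name": "sensor_record",
    "fields": [
        {"name": "customerID", "type": "long"},
        {"name": "sentiment", "type": {
            "type": "record",
            "name": "sentiment",
            "fields": [{"name": "customerrating", "type": "long"}],
        }},
    ],
}


def build_table_input(name, location, schema):
    """Build the TableInput structure for an Avro table partitioned by dt."""
    return {
        "Name": name,
        "TableType": "EXTERNAL_TABLE",
        "PartitionKeys": [{"Name": "dt", "Type": "string"}],
        "StorageDescriptor": {
            "Columns": [
                {"Name": "customerID", "Type": "bigint"},
                {"Name": "sentiment", "Type": "struct<customerrating:bigint>"},
            ],
            "Location": location,
            "InputFormat": "org.apache.hadoop.hive.ql.io.avro.AvroContainerInputFormat",
            "OutputFormat": "org.apache.hadoop.hive.ql.io.avro.AvroContainerOutputFormat",
            "SerdeInfo": {
                "SerializationLibrary": "org.apache.hadoop.hive.serde2.avro.AvroSerDe",
                # The schema literal is stored as a JSON string in the SerDe properties.
                "Parameters": {"avro.schema.literal": json.dumps(schema)},
            },
        },
    }


def create_base_table():
    """Create the table in the AWS Glue Data Catalog (requires AWS credentials)."""
    import boto3  # imported here so build_table_input works without Boto3 installed

    glue = boto3.client("glue")
    glue.create_table(
        DatabaseName=DATABASE,
        TableInput=build_table_input(TABLE, LOCATION, BASE_SCHEMA),
    )
```

Calling `create_base_table()` would issue the API request. Keeping the request body in a separate builder function makes it easy to inspect the schema literal before anything is sent to AWS.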

- After creating the table, verify its structure using the `SHOW CREATE TABLE` command in Athena:

Note that the table is created with the initial schema as described below:

With the table structure in place, you can load the first set of IoT sensor data and establish the initial partition. This step sets up the data pipeline that will handle incoming sensor data.

- Download the example sensor data from the following S3 bucket

Download initial schema from the first partition

Download second schema from the second partition

Download third schema from the third partition

- Upload the Avro-formatted sensor data to your partitioned S3 location. This represents your first day of sensor readings, organized in the date-based partition structure. Replace the bucket name `amzn-s3-demo-bucket` with your S3 bucket name and add a partitioned folder for the `dt` field.

- Register this partition in the AWS Glue Data Catalog to make it discoverable. This tells AWS Glue where to find your sensor data for this specific date:

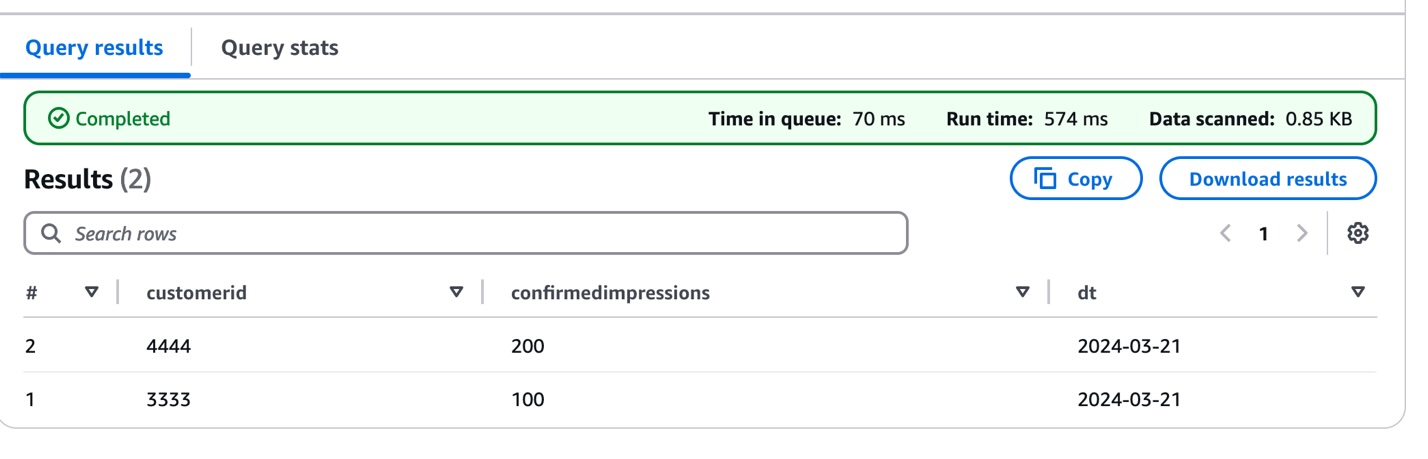

- Validate your sensor data ingestion by querying the newly loaded partition. This query helps verify that your sensor readings are correctly loaded and accessible:

The following screenshot shows the query results.

This initial data load establishes the foundation for the IoT data pipeline, which means you can begin tracking sensor measurements while preparing for future schema evolution as sensor capabilities expand or change.
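The upload, partition registration, and validation steps above can be sketched with Boto3 as follows. This is an illustration under stated assumptions rather than the commands used in this post: the table name, file name, and query results location are hypothetical, and the partition is registered with an Athena `ALTER TABLE ADD PARTITION` statement.

```python
import time


def partition_key(dt, filename):
    """S3 object key for a file inside the dt=<date> partition folder."""
    return f"dt={dt}/{filename}"


def add_partition_sql(table, bucket, dt):
    """Statement that registers one date partition with its S3 location."""
    return (
        f"ALTER TABLE {table} ADD IF NOT EXISTS "
        f"PARTITION (dt='{dt}') LOCATION 's3://{bucket}/dt={dt}/'"
    )


def validation_sql(table, dt, limit=10):
    """Query that reads back a few rows from the newly loaded partition."""
    return f"SELECT * FROM {table} WHERE dt = '{dt}' LIMIT {limit}"


def run_query(athena, sql, database, results_location):
    """Start an Athena query, wait until it finishes, and return its final state."""
    query_id = athena.start_query_execution(
        QueryString=sql,
        QueryExecutionContext={"Database": database},
        ResultConfiguration={"OutputLocation": results_location},
    )["QueryExecutionId"]
    while True:
        status = athena.get_query_execution(QueryExecutionId=query_id)
        state = status["QueryExecution"]["Status"]["State"]
        if state not in ("QUEUED", "RUNNING"):
            return state
        time.sleep(1)


def load_partition(local_file, bucket, database, table, dt, results_location):
    """Upload one Avro file, register its partition, and validate the load."""
    import boto3  # requires AWS credentials; imported lazily

    s3 = boto3.client("s3")
    athena = boto3.client("athena")
    s3.upload_file(local_file, bucket, partition_key(dt, "data.avro"))
    # Queries run one after another so the partition exists before validation.
    for sql in (add_partition_sql(table, bucket, dt), validation_sql(table, dt)):
        state = run_query(athena, sql, database, results_location)
        print(f"{state}: {sql}")
```

For example, `load_partition("data.avro", "amzn-s3-demo-bucket", "iot_demo_db", "sensor_readings", "2024-03-21", "s3://amzn-s3-demo-bucket-results/")` would load the first partition, where every argument except the bucket placeholder and the date is a made-up value.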

Now, we demonstrate how the IoT data pipeline handles evolving sensor capabilities by introducing a schema change in the second data batch. As sensors receive firmware updates or new monitoring features, their data structure needs to adapt accordingly. To show this evolution, we add data from sensors that now include visibility measurements:

- Examine the evolved schema structure that accommodates the new sensor capability:

Note the addition of the visibility field within the sentiment structure, representing the sensor’s enhanced monitoring capability.

- Upload this enhanced sensor data to a new date partition:

- Verify data consistency across both the original and enhanced sensor readings:

This demonstrates how the pipeline can handle sensor upgrades while maintaining compatibility with historical data. In the next section, we explore how to update the table definition to properly manage this schema evolution, providing seamless querying across all sensor data regardless of when the sensors were upgraded. This approach is particularly valuable in IoT environments where sensor capabilities frequently evolve, because you can keep historical data while accommodating new monitoring features.

Update the AWS Glue table

To accommodate evolving sensor capabilities, you need to update the AWS Glue table schema. Although traditional methods such as MSCK REPAIR TABLE or ALTER TABLE ADD PARTITION work for updating partition information on small datasets, you can use an alternative method to handle tables with more than 100K partitions efficiently.

We use Athena partition projection, which eliminates the need to process extensive partition metadata, a step that can be time-consuming for large datasets. Instead, it dynamically infers partition existence and location, allowing for more efficient data management. This method also speeds up query planning by quickly identifying relevant partitions, leading to faster query execution. Additionally, it reduces the number of API calls to the metadata store, potentially lowering the costs associated with these operations. Perhaps most importantly, this solution maintains performance as the number of partitions grows, providing scalability for evolving datasets. These benefits combine to create a more efficient and cost-effective way of handling schema evolution in large-scale data environments.
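As a rough mental model of what "inferring partition existence and location" means, the sketch below computes every daily partition value and its S3 location from a date range instead of looking them up in a catalog. It is a conceptual illustration only, not Athena’s implementation, and it assumes a date-typed partition column named `dt` whose folders follow the `dt=<date>/` layout used in this post.

```python
from datetime import date, timedelta


def projected_partitions(start, end, location_root):
    """Yield (dt value, S3 location) for each day from start to end, inclusive."""
    day = start
    while day <= end:
        dt = day.strftime("%Y-%m-%d")  # same layout as a 'yyyy-MM-dd' date format
        yield dt, f"{location_root}dt={dt}/"
        day += timedelta(days=1)


for dt, location in projected_partitions(
    date(2024, 3, 21), date(2024, 3, 23), "s3://amzn-s3-demo-bucket/"
):
    print(dt, location)
```

Because the partition list is derived from configuration, no per-partition metadata has to be stored or fetched, which is why the approach keeps working as the number of partitions grows.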

To update your table schema to handle the new sensor data, follow these steps:

- Copy the following code into the `UpdateTableAPI.py` file:

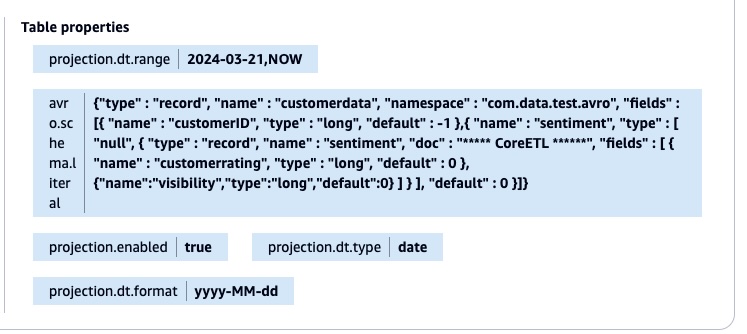

This Python script demonstrates how to update an AWS Glue table to accommodate schema evolution and enable partition projection:

- Uses Boto3 to interact with the AWS Glue API.

- Retrieves the current table definition from the AWS Glue Data Catalog.

- Updates the `'sentiment'` column structure to include new fields.

- Modifies the Avro schema literal to reflect the updated structure.

- Adds partition projection parameters for the partition column `dt`:

  - Sets the projection type to `'date'`

  - Defines the date format as `'yyyy-MM-dd'`

  - Enables partition projection

  - Sets the date range from `'2024-03-21'` to `'NOW'`
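The steps listed above can be sketched as follows. This is a minimal illustration, not the `UpdateTableAPI.py` script shown in the screenshot: the function names are hypothetical, and the whitelist of keys is an assumption about which fields returned by `get_table` are safe to pass back as `TableInput` (read-only fields such as `DatabaseName` and `CreateTime` are rejected by `update_table`). The projection property names are the standard Athena table properties.

```python
import copy
import json

# Keys from the get_table response that are also accepted in TableInput.
ALLOWED_KEYS = (
    "Name", "Description", "Owner", "Retention",
    "StorageDescriptor", "PartitionKeys", "TableType", "Parameters",
)

# Partition projection settings for the dt partition column.
PROJECTION_PARAMS = {
    "projection.enabled": "true",
    "projection.dt.type": "date",
    "projection.dt.format": "yyyy-MM-dd",
    "projection.dt.range": "2024-03-21,NOW",
}


def build_updated_table_input(table, sentiment_type, schema):
    """Return a TableInput with the new struct type, schema literal, and projection."""
    # Deep-copy so the definition fetched from the catalog is left untouched.
    table_input = {k: copy.deepcopy(table[k]) for k in ALLOWED_KEYS if k in table}
    descriptor = table_input["StorageDescriptor"]
    for column in descriptor["Columns"]:
        if column["Name"] == "sentiment":
            column["Type"] = sentiment_type
    serde_params = descriptor["SerdeInfo"].setdefault("Parameters", {})
    serde_params["avro.schema.literal"] = json.dumps(schema)
    table_input.setdefault("Parameters", {}).update(PROJECTION_PARAMS)
    return table_input


def update_table(database, name, sentiment_type, schema):
    """Fetch the current definition and write the evolved one back."""
    import boto3  # requires AWS credentials; imported lazily

    glue = boto3.client("glue")
    current = glue.get_table(DatabaseName=database, Name=name)["Table"]
    glue.update_table(
        DatabaseName=database,
        TableInput=build_updated_table_input(current, sentiment_type, schema),
    )
```

For the visibility change, `sentiment_type` would be a string such as `struct<customerrating:bigint,visibility:bigint>`, assuming both fields are stored as `bigint`.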

- Run the script using the following command:

The script applies all changes back to the AWS Glue table using the UpdateTable API call. The following screenshot shows the table properties with the new Avro schema literal and the partition projection.

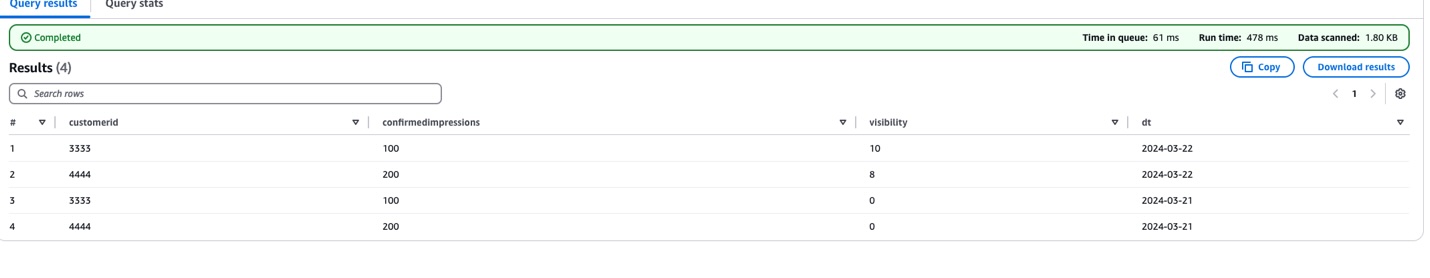

After the table properties are updated, you don’t need to add the partitions manually using the MSCK REPAIR TABLE or ALTER TABLE command. You can validate the result by running the query in the Athena console.

The following screenshot shows the query results.

This schema evolution strategy efficiently handles new data fields across different time periods. Consider the 'visibility' field introduced on 2024-03-22. For data from 2024-03-21, where this field doesn’t exist, the solution automatically returns a default value of 0. This approach keeps queries consistent across all partitions, regardless of their schema version.

Here’s the Avro schema configuration that enables this flexibility:

Using this configuration, you can run queries across all partitions without modifications, maintain backward compatibility without data migration, and support gradual schema evolution without breaking existing queries.
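The way a field default fills in for older records can be illustrated with a simplified model of Avro schema resolution. The sketch below is not an Avro library and not the schema from this post: it works on plain Python dictionaries, handles only nested records, and assumes the visibility field is declared with a `long` type and a default of 0.

```python
def resolve(record, reader_schema):
    """Return the record as seen through reader_schema, applying field defaults."""
    resolved = {}
    for field in reader_schema["fields"]:
        name, field_type = field["name"], field["type"]
        if name in record:
            value = record[name]
            # Nested records are resolved recursively against their own schema.
            if isinstance(field_type, dict) and field_type.get("type") == "record":
                value = resolve(value, field_type)
            resolved[name] = value
        elif "default" in field:
            # The field is missing from older data, so the declared default is used.
            resolved[name] = field["default"]
        else:
            raise ValueError(f"field {name!r} is missing and has no default")
    return resolved


# Reader schema with the newer visibility field (types and default assumed).
READER_SCHEMA = {
    "type": "record",
    "name": "sensor_record",
    "fields": [
        {"name": "customerID", "type": "long"},
        {"name": "sentiment", "type": {
            "type": "record",
            "name": "sentiment",
            "fields": [
                {"name": "customerrating", "type": "long"},
                {"name": "visibility", "type": "long", "default": 0},
            ],
        }},
    ],
}

old_record = {"customerID": 1, "sentiment": {"customerrating": 5}}
print(resolve(old_record, READER_SCHEMA))
# {'customerID': 1, 'sentiment': {'customerrating': 5, 'visibility': 0}}
```

A record written after the change already carries its own visibility value, so it passes through unchanged. A new field without a default is what would break reads of older data.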

Building on the schema evolution example, we now introduce a third enhancement to the sensor data structure. This new iteration adds a text-based classification capability through a 'category' field (string type) in the sentiment structure. This represents a real-world scenario where sensors receive updates that add new classification capabilities, requiring the data pipeline to handle both numeric measurements and textual categorizations.

The following is the enhanced schema structure:

This evolution demonstrates how the solution flexibly accommodates different data types as sensor capabilities expand while maintaining compatibility with historical data.

To implement this latest schema evolution for the new partition (dt=2024-03-23), we update the table definition to include the 'category' field. Here’s the modified UpdateTableAPI.py script that handles this change:

- Update the file `UpdateTableAPI.py`:

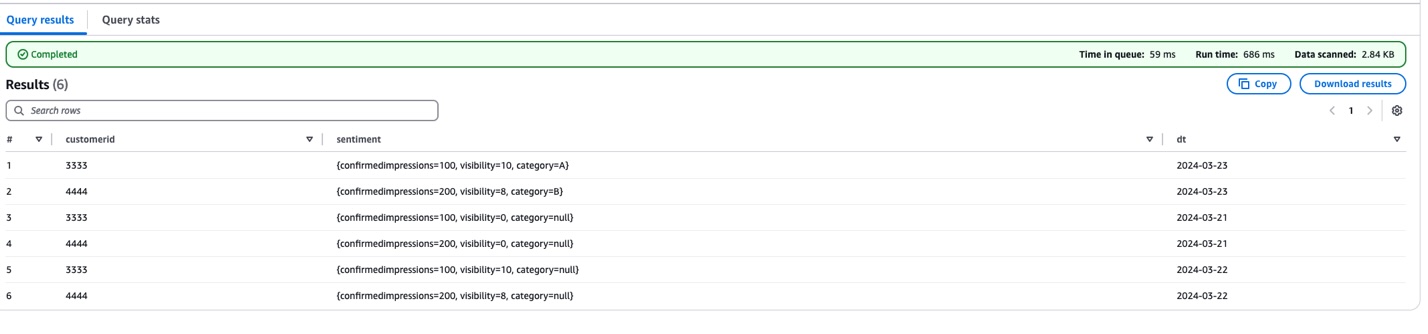

- Verify the changes by running the following query:

The following screenshot shows the query results.

There are three key changes in this update:

- Added the `'category'` field (string type) to the sentiment structure

- Set the default value `"null"` for the category field

- Maintained the existing partition projection settings

To support this latest sensor data enhancement, we updated the table definition to include a new text-based 'category' field in the sentiment structure. The modified UpdateTableAPI script adds this capability while maintaining the established schema evolution patterns. It achieves this by updating both the AWS Glue table schema and the Avro schema literal, setting a default value of "null" for the category field.

This provides backward compatibility. Older data (before 2024-03-23) shows "null" for the category field, and new data includes actual category values. The script maintains the partition projection settings, enabling efficient querying across all time periods.

You can verify this update by querying the table in Athena, which now shows the complete data structure, including numeric measurements (customerrating, visibility) and text categorization (category) across all partitions. This enhancement demonstrates how the solution can seamlessly incorporate different data types while preserving historical data integrity and query performance.
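Because the AWS Glue column type and the Avro schema literal have to change together, it can help to derive one from the other. The following is a minimal sketch under stated assumptions, not the script from this post: the helper names are hypothetical, the type mapping covers only a few primitive types, and the new text field (named `category` here) is modeled as a nullable union with a `null` default, which is one common way to express an optional string in Avro.

```python
import copy

# Partial Avro-to-Hive primitive type mapping (assumed sufficient for this example).
AVRO_TO_HIVE = {
    "long": "bigint", "int": "int", "string": "string",
    "double": "double", "float": "float", "boolean": "boolean",
}


def add_nested_field(schema, record_field, new_field):
    """Return a copy of schema with new_field appended to a nested record."""
    evolved = copy.deepcopy(schema)
    for field in evolved["fields"]:
        if field["name"] == record_field:
            field["type"]["fields"].append(new_field)
            return evolved
    raise KeyError(record_field)


def hive_type(avro_type):
    """Translate an Avro type into the matching Hive/Glue column type string."""
    if isinstance(avro_type, list):
        # A nullable union such as ["null", "string"] maps to its non-null branch.
        non_null = [t for t in avro_type if t != "null"]
        return hive_type(non_null[0])
    if isinstance(avro_type, dict) and avro_type.get("type") == "record":
        inner = ",".join(
            f"{f['name']}:{hive_type(f['type'])}" for f in avro_type["fields"]
        )
        return f"struct<{inner}>"
    return AVRO_TO_HIVE[avro_type]


# Schema after the visibility change (types and defaults assumed).
SCHEMA_WITH_VISIBILITY = {
    "type": "record",
    "name": "sensor_record",
    "fields": [
        {"name": "customerID", "type": "long"},
        {"name": "sentiment", "type": {
            "type": "record",
            "name": "sentiment",
            "fields": [
                {"name": "customerrating", "type": "long"},
                {"name": "visibility", "type": "long", "default": 0},
            ],
        }},
    ],
}

CATEGORY_FIELD = {"name": "category", "type": ["null", "string"], "default": None}

evolved = add_nested_field(SCHEMA_WITH_VISIBILITY, "sentiment", CATEGORY_FIELD)
print(hive_type(evolved["fields"][1]["type"]))
# struct<customerrating:bigint,visibility:bigint,category:string>
```

The evolved schema would be serialized with `json.dumps` for the schema literal, and the printed struct string would become the new type of the sentiment column.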

Cleanup

To avoid incurring future charges, delete your Amazon S3 data if you no longer need it.

Conclusion

By combining Avro’s schema evolution capabilities with the power of AWS Glue APIs, we’ve created a robust framework for managing diverse, evolving datasets. This approach not only simplifies data integration but also enhances the agility and effectiveness of your analytics pipeline, paving the way for more sophisticated predictive and prescriptive analytics.

This solution offers several key advantages. It’s flexible, adapting to changing data structures without disrupting existing analytics processes. It’s scalable, able to handle growing volumes of data and evolving schemas efficiently. You can automate it to reduce the manual overhead of schema management and updates. Finally, because it minimizes data movement and transformation costs, it’s cost-effective.

About the authors

Mohammad Sabeel is a Senior Cloud Support Engineer at Amazon Web Services (AWS) with over 14 years of experience in Information Technology (IT). As a member of the Technical Field Community (TFC) Analytics team, he is a subject matter expert in the analytics services AWS Glue, Amazon Managed Workflows for Apache Airflow (MWAA), and Amazon Athena. Sabeel provides expert guidance and technical support to enterprise and strategic customers, helping them optimize their data analytics solutions and overcome complex challenges. With deep subject matter expertise, he enables organizations to build scalable, efficient, and cost-effective data processing pipelines.

Indira Balakrishnan is a Principal Solutions Architect in the Amazon Web Services (AWS) Analytics Specialist Solutions Architect (SA) team. She helps customers build cloud-based data and AI/ML solutions to address business challenges. With over 25 years of experience in Information Technology (IT), Indira actively contributes to the AWS Analytics Technical Field Community, supporting customers across various domains and industries. Indira participates in Women in Engineering and Women at Amazon tech groups to encourage girls to pursue STEM paths into careers in IT. She also volunteers in early career mentoring circles.
