Modern manufacturers face an increasingly complex challenge: implementing intelligent decision-making systems that respond to real-time operational data while maintaining security and performance standards. The volume of sensor data and the operational complexity demand AI-powered solutions that process information locally for immediate responses while leveraging cloud resources for complex tasks.

The industry is at a critical juncture where edge computing and AI converge. Small Language Models (SLMs) are lightweight enough to run on constrained GPU hardware yet powerful enough to deliver context-aware insights. Unlike Large Language Models (LLMs), SLMs fit within the power and thermal limits of industrial PCs or gateways, making them ideal for factory environments where resources are limited and reliability is paramount. For the purpose of this blog post, assume an SLM has roughly 3 to 15 billion parameters.
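To put the 3 to 15 billion parameter range in perspective, the memory needed for the weights alone is roughly parameters × bits per weight ÷ 8 bytes. The sketch below applies that rule of thumb. The 16-bit and 4-bit precisions are illustrative choices, and the estimate ignores the KV cache, activations and runtime overhead, so actual memory use on a device will be higher.

```python
def weight_memory_gb(num_params: float, bits_per_weight: float) -> float:
    """Approximate memory for the model weights alone, in gigabytes (1 GB = 1e9 bytes)."""
    return num_params * bits_per_weight / 8 / 1e9


if __name__ == "__main__":
    # Illustrative precisions: 16-bit (unquantized fp16/bf16) and 4-bit quantized weights.
    for params in (3e9, 15e9):
        for bits in (16, 4):
            gb = weight_memory_gb(params, bits)
            print(f"{params / 1e9:.0f}B parameters at {bits}-bit: ~{gb:.1f} GB")
```

Under these assumptions, 4-bit weights for 3 to 15 billion parameters come to about 1.5 to 7.5 GB, versus 6 to 30 GB at 16-bit, which helps explain why quantized SLMs can fit on industrial PCs and gateways.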

This blog post focuses on Open Platform Communications Unified Architecture (OPC-UA) as a representative manufacturing protocol. OPC-UA servers provide standardized, real-time machine data that SLMs running at the edge can consume, enabling operators to query equipment status, interpret telemetry, or access documentation instantly, even without cloud connectivity.

AWS IoT Greengrass enables this hybrid pattern by deploying SLMs together with AWS Lambda functions directly to OPC-UA gateways. Local inference ensures responsiveness for safety-critical tasks, while the cloud handles fleet-wide analytics, multi-site optimization, or model retraining under stronger security controls.

This hybrid approach opens possibilities across industries. Automakers could run SLMs in vehicle compute units for natural voice commands and an enhanced driving experience. Energy providers could process SCADA sensor data locally in substations. In gaming, SLMs could run on players' devices to power companion AI. Beyond manufacturing, higher education institutions could use SLMs to provide personalized learning, proofreading, research assistance and content generation.

In this blog post, we look at how to deploy SLMs to the edge seamlessly and at scale using AWS IoT Greengrass.

The solution uses AWS IoT Greengrass to deploy and manage SLMs on edge devices, with Strands Agents providing local agent capabilities. The services used include:

- AWS IoT Greengrass: An open-source edge runtime and cloud service that lets you deploy, manage and monitor device software.

- AWS IoT Core: A service that lets you connect IoT devices to the AWS Cloud.

- Amazon Simple Storage Service (Amazon S3): A highly scalable object storage service that lets you store and retrieve any amount of data.

- Strands Agents: A lightweight Python framework for running multi-agent systems using cloud and local inference.

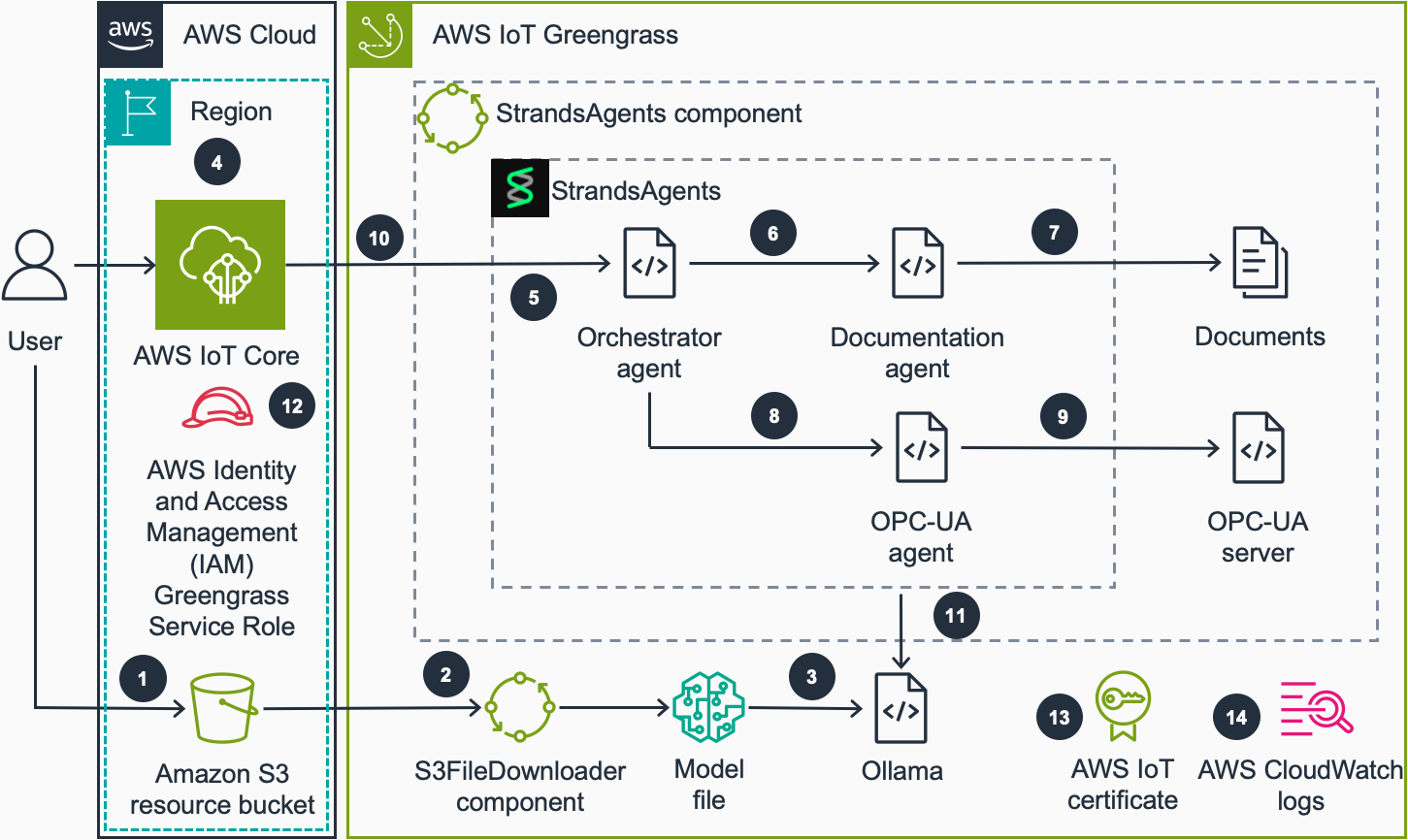

We demonstrate the agent capabilities in the code sample using an industrial automation scenario. We provide an OPC-UA simulator, which defines a factory consisting of an oven and a conveyor belt, as well as maintenance runbooks as the source of the industrial data. This solution can be extended to other use cases by using other agentic tools.

The high-level architecture shown in the diagram works as follows:

- The user uploads a model file in GPT-Generated Unified Format (GGUF) to an Amazon S3 bucket that the AWS IoT Greengrass devices have access to.

- The devices in the fleet receive a file download job. The S3FileDownloader component processes this job and downloads the model file from the S3 bucket to the device. The S3FileDownloader component can handle large files, which is often necessary because SLM model files can exceed the native Greengrass component artifact size limits.

- The GGUF model file is loaded into Ollama when the Strands Agents component makes its first call to Ollama. GGUF is a binary file format used for storing LLMs, and Ollama is software that loads the GGUF model file and runs inference. The model name is specified in the component's recipe.yaml file.

- The user sends a query to the local agent by publishing a payload to a device-specific agent topic in the AWS IoT MQTT broker.

- After receiving the query, the component uses the model-agnostic orchestration capabilities of the Strands Agents SDK. The Orchestrator Agent perceives the query, reasons about the required information sources, and acts by calling the appropriate specialized agents (Documentation Agent, OPC-UA Agent, or both) to gather comprehensive data before formulating a response.

- If the query relates to information that can be found in the documentation, the Orchestrator Agent calls the Documentation Agent.

- The Documentation Agent finds the information in the provided documents and returns it to the Orchestrator Agent.

- If the query relates to current or historical machine data, the Orchestrator Agent calls the OPC-UA Agent.

- The OPC-UA Agent queries the OPC-UA server based on the user query and returns the data from the server to the Orchestrator Agent.

- The Orchestrator Agent forms a response based on the collected information. The Strands Agents component publishes the response to a device-specific agent response topic in the AWS IoT MQTT broker.

- The Strands Agents SDK enables the system to work with locally deployed foundation models through Ollama at the edge, while keeping the option to switch to cloud-based models, such as those in Amazon Bedrock, when connectivity is available.

- The AWS IAM Greengrass service role provides access to the S3 resource bucket so that models can be downloaded to the device.

- The AWS IoT certificate attached to the IoT thing allows the Strands Agents component to receive and publish MQTT payloads to AWS IoT Core.

- The Greengrass component logs its operation to the local file system. Optionally, Amazon CloudWatch Logs can be enabled to monitor the component operation in the CloudWatch console.
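The query flow described in the list above can be sketched in plain Python. This is not the Strands Agents SDK API: in the actual component, the Orchestrator Agent uses the SLM to decide which specialized agents to call, whereas this hypothetical sketch substitutes a simple keyword check so the control flow is easy to follow. The topic layout assumes the device-specific segment is the thing name, and the keyword lists and sub-agent callables are illustrative placeholders.

```python
import json
from typing import Callable, Dict, List, Tuple

# Hypothetical keyword lists standing in for the SLM's own routing decision.
DOC_KEYWORDS = ("manual", "runbook", "procedure", "maintenance")
DATA_KEYWORDS = ("temperature", "speed", "status", "current", "history")

Message = Tuple[str, str]  # (MQTT topic, JSON payload)


def handle_query(
    thing_name: str,
    query: str,
    documentation_agent: Callable[[str], str],
    opcua_agent: Callable[[str], str],
) -> List[Message]:
    """Route a query to the sub-agents and return the MQTT messages to publish."""
    # Assumption: device-specific topics follow things/<thing name>/agent/...
    base = f"things/{thing_name}/agent"
    text = query.lower()
    findings: Dict[str, str] = {}

    # Documentation questions go to the Documentation Agent.
    if any(keyword in text for keyword in DOC_KEYWORDS):
        findings["documentation"] = documentation_agent(query)
    # Current or historical machine data goes to the OPC-UA Agent.
    if any(keyword in text for keyword in DATA_KEYWORDS):
        findings["opcua"] = opcua_agent(query)

    # Each intermediate result is published on the sub-agent topic.
    messages: List[Message] = [
        (f"{base}/subagent", json.dumps({"agent": name, "result": result}))
        for name, result in findings.items()
    ]
    # The orchestrator combines what it collected into the final response.
    final = " ".join(findings.values()) or "No relevant information found."
    messages.append((f"{base}/response", json.dumps({"response": final})))
    return messages
```

In this sketch, a question such as "What is the current oven temperature?" is routed only to the OPC-UA sub-agent, producing one sub-agent message and one final response. Replacing the keyword check with a call to the SLM is what allows the actual Orchestrator Agent to route queries that a fixed keyword list would miss.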

Before starting this walkthrough, ensure you have:

In this post, you will:

- Deploy Strands Agents as an AWS IoT Greengrass component.

- Download SLMs to edge devices.

- Test the deployed agent.

Component deployment

First, let's deploy the StrandsAgentGreengrass component to your edge device. Clone the Strands Agents repository:

Use the Greengrass Development Kit (GDK) to build and publish the component:

To publish the component, you need to modify the region and bucket values in the gdk-config.json file. The recommended artifact bucket value is greengrass-artifacts. GDK will create a bucket whose name begins with greengrass-artifacts- in your account.
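If you prefer to script the gdk-config.json change, a small helper can set the publish region and bucket for every component entry. This sketch assumes the file follows the GDK CLI layout, where each entry under "component" has a "publish" object with "bucket" and "region" keys; the Region used in the usage line is a placeholder.

```python
import json
from pathlib import Path


def set_publish_target(config_path: str, region: str, bucket: str) -> dict:
    """Set publish.region and publish.bucket for every component in a gdk-config.json file."""
    path = Path(config_path)
    config = json.loads(path.read_text())
    # Assumed GDK CLI layout: {"component": {"<name>": {"publish": {"bucket": ..., "region": ...}}}}
    for component in config.get("component", {}).values():
        publish = component.setdefault("publish", {})
        publish["region"] = region
        publish["bucket"] = bucket
    path.write_text(json.dumps(config, indent=2) + "\n")
    return config
```

For example, set_publish_target("gdk-config.json", "eu-west-1", "greengrass-artifacts") updates the file in place, with eu-west-1 standing in for your own Region. Afterwards, run gdk component build and gdk component publish from the directory that contains gdk-config.json.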

The component will appear in the AWS IoT Greengrass Components console. You can refer to the Deploy your component documentation to deploy the component to your devices.
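As an alternative to the console, a deployment can be created with the boto3 greengrassv2 client. In this sketch, the target ARN, component name and version are placeholders to replace with your own values; the name under which the repository registers its component may differ from the one in the usage line. In Greengrass V2, creating a deployment for a target replaces that target's previous deployment, so the helper accepts extra components that should keep running on the device.

```python
from typing import Any, Dict, Optional


def build_deployment_request(
    target_arn: str,
    component_name: str,
    component_version: str,
    deployment_name: str = "strands-agent-deployment",
    extra_components: Optional[Dict[str, Dict[str, Any]]] = None,
) -> Dict[str, Any]:
    """Build keyword arguments for the Greengrass V2 CreateDeployment API."""
    components = {component_name: {"componentVersion": component_version}}
    # A new deployment replaces the target's previous one, so keep other components too.
    components.update(extra_components or {})
    return {
        "targetArn": target_arn,
        "deploymentName": deployment_name,
        "components": components,
    }


def deploy(client, **kwargs) -> str:
    """Create the deployment with a boto3 'greengrassv2' client and return its ID."""
    response = client.create_deployment(**build_deployment_request(**kwargs))
    return response["deploymentId"]
```

For example: deploy(boto3.client("greengrassv2"), target_arn="arn:aws:iot:eu-west-1:123456789012:thing/MyCoreDevice", component_name="com.example.StrandsAgent", component_version="1.0.0"), where every value shown is a placeholder.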

After the deployment, the component runs on the device. It consists of Strands Agents, an OPC-UA simulation server and sample documentation. Strands Agents uses the Ollama server as the SLM inference engine. The component includes OPC-UA and documentation tools that let the agent retrieve the simulated real-time data and the sample equipment manuals.
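To check the inference engine directly on the device, the sketch below sends a single prompt to Ollama's REST API using only the standard library. It assumes Ollama listens on its default address (http://localhost:11434) and that the model is available under the name you pass in. The Strands Agents component makes these calls for you, so this is only a troubleshooting aid.

```python
import json
import urllib.request

OLLAMA_URL = "http://localhost:11434"  # Ollama's default listen address (assumed for this device)


def build_generate_request(model: str, prompt: str, host: str = OLLAMA_URL) -> urllib.request.Request:
    """Build a non-streaming POST request for Ollama's /api/generate endpoint."""
    body = json.dumps({"model": model, "prompt": prompt, "stream": False}).encode("utf-8")
    return urllib.request.Request(
        f"{host}/api/generate",
        data=body,
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def generate(model: str, prompt: str, host: str = OLLAMA_URL, timeout: float = 120.0) -> str:
    """Send the prompt and return the generated text from the 'response' field."""
    request = build_generate_request(model, prompt, host)
    with urllib.request.urlopen(request, timeout=timeout) as resp:
        return json.loads(resp.read())["response"]
```

For example, generate("model-name", "Summarize the oven runbook.") returns the model's text, where model-name stands for whatever name the component's recipe.yaml configures. Setting stream to false makes Ollama return one JSON object instead of a stream of partial responses.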

If you want to test the component on an Amazon EC2 instance, you can use the IoTResources.yaml AWS CloudFormation template to deploy a GPU instance with the necessary software installed. This template also creates the resources for running Greengrass. After the stack is deployed, a Greengrass core device will appear in the AWS IoT Greengrass console. The CloudFormation template can be found under the source/cfn folder in the repository. You can read how to deploy a CloudFormation stack in the Create a stack from the CloudFormation console documentation.
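The stack can also be created from a script. This sketch reads the template from source/cfn/IoTResources.yaml, as described above, and passes IAM capabilities on the assumption that the template creates IAM resources. If the template defines required parameters, pass them through the parameters argument; the stack name in the usage line is a placeholder.

```python
from pathlib import Path
from typing import Any, Dict, Optional

# Assumption: the template creates IAM resources, so the stack must acknowledge IAM capabilities.
CAPABILITIES = ["CAPABILITY_IAM", "CAPABILITY_NAMED_IAM"]


def create_stack_request(
    stack_name: str, template_path: str, parameters: Optional[Dict[str, str]] = None
) -> Dict[str, Any]:
    """Build keyword arguments for CloudFormation CreateStack from a local template file."""
    request: Dict[str, Any] = {
        "StackName": stack_name,
        "TemplateBody": Path(template_path).read_text(),
        "Capabilities": list(CAPABILITIES),
    }
    if parameters:
        request["Parameters"] = [
            {"ParameterKey": key, "ParameterValue": value} for key, value in parameters.items()
        ]
    return request


def create_and_wait(
    cfn_client, stack_name: str, template_path: str, parameters: Optional[Dict[str, str]] = None
) -> str:
    """Create the stack with a boto3 'cloudformation' client and wait until creation completes."""
    response = cfn_client.create_stack(**create_stack_request(stack_name, template_path, parameters))
    cfn_client.get_waiter("stack_create_complete").wait(StackName=stack_name)
    return response["StackId"]
```

For example: create_and_wait(boto3.client("cloudformation"), "slm-edge-demo", "source/cfn/IoTResources.yaml").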

Downloading the model file

The component needs a model file in GGUF format, which Ollama uses as the SLM. You need to copy the model file to the /tmp/destination/ folder on the edge device. The model file name must be model.gguf if you use the default ModelGGUFName parameter in the component's recipe.yaml file.
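If you copy the model manually, it can help to check the file before the component tries to load it. GGUF files begin with the four ASCII bytes GGUF, so the sketch below checks for that marker and then copies the file to the location described above. It validates only the header, not the whole file, and the destination assumes the default ModelGGUFName.

```python
import shutil
from pathlib import Path

GGUF_MAGIC = b"GGUF"  # every GGUF file starts with these four ASCII bytes
# Default location and file name expected when ModelGGUFName is left at its default.
DEFAULT_DEST = Path("/tmp/destination/model.gguf")


def is_gguf(path: Path) -> bool:
    """Return True if the file starts with the GGUF magic bytes."""
    with open(path, "rb") as f:
        return f.read(len(GGUF_MAGIC)) == GGUF_MAGIC


def install_model(source: str, dest: Path = DEFAULT_DEST) -> Path:
    """Validate the GGUF header, then copy the model to the component's expected path."""
    src = Path(source)
    if not is_gguf(src):
        raise ValueError(f"{src} does not start with the GGUF magic bytes")
    dest.parent.mkdir(parents=True, exist_ok=True)
    shutil.copyfile(src, dest)
    return dest
```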

If you don't have a model file in GGUF format, you can download one from Hugging Face, for example Qwen3-1.7B-GGUF. In a real-world application, this would be a fine-tuned model that solves specific business problems in your use case.

(Optional) Use S3FileDownloader to download model files

To manage model distribution to edge devices at scale, you can use the S3FileDownloader AWS IoT Greengrass component. This component is particularly valuable for deploying large files in environments with unreliable connectivity, because it supports automatic retry and resume. Since model files can be large and device connectivity is not reliable in many IoT use cases, this component can help you deploy models to your device fleets reliably.
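The retry-and-resume idea can be illustrated with a generic sketch. This is not the S3FileDownloader implementation: it assumes a hypothetical fetch_range(start, end) callable that returns the requested bytes and raises ConnectionError or TimeoutError when the link drops. Because the partial file is kept on disk, each retry continues from the bytes already written instead of starting over.

```python
import os
import time
from typing import Callable


def resumable_download(
    fetch_range: Callable[[int, int], bytes],
    total_size: int,
    dest_path: str,
    chunk_size: int = 8 * 1024 * 1024,
    max_retries: int = 5,
    backoff_seconds: float = 1.0,
) -> None:
    """Download total_size bytes into dest_path, resuming from any partial file on disk."""
    retries = 0
    while True:
        # Resume from the number of bytes already written by earlier attempts.
        offset = os.path.getsize(dest_path) if os.path.exists(dest_path) else 0
        if offset >= total_size:
            return
        end = min(offset + chunk_size, total_size) - 1  # inclusive end, as in an HTTP Range header
        try:
            data = fetch_range(offset, end)
        except (ConnectionError, TimeoutError):
            retries += 1
            if retries > max_retries:
                raise
            time.sleep(backoff_seconds * 2 ** (retries - 1))  # exponential backoff
            continue
        if not data:
            raise IOError(f"empty response for bytes {offset}-{end}")
        with open(dest_path, "ab") as f:
            f.write(data)
        retries = 0  # progress was made, so reset the retry budget
```

With boto3, fetch_range could wrap s3.get_object(Bucket=..., Key=..., Range=f"bytes={start}-{end}")["Body"].read(), and total_size could come from head_object(...)["ContentLength"]. A real wrapper would also need to translate botocore's own network exceptions into ConnectionError or TimeoutError, since they are not subclasses of those built-in types.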

After deploying the S3FileDownloader component to your device, you can publish the following payload to the things/… topic using the AWS IoT MQTT test client. The file will be downloaded from the Amazon S3 bucket and placed in the /tmp/destination/ folder on the edge device:

If you used the CloudFormation template provided in the repository, you can use the S3 bucket created by this template. Refer to the outputs of the CloudFormation stack deployment to find the name of the bucket.
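To look up the bucket name programmatically and upload a model to it, you can read the stack outputs with boto3. The output key that holds the bucket name depends on the template, so ModelBucketName in the usage line is a hypothetical placeholder; check the stack's Outputs tab for the actual key. The object key model.gguf is also an assumption.

```python
from typing import Dict


def stack_outputs(cfn_client, stack_name: str) -> Dict[str, str]:
    """Return an {OutputKey: OutputValue} map for a deployed CloudFormation stack."""
    stack = cfn_client.describe_stacks(StackName=stack_name)["Stacks"][0]
    return {output["OutputKey"]: output["OutputValue"] for output in stack.get("Outputs", [])}


def upload_model(s3_client, bucket: str, local_path: str, key: str = "model.gguf") -> str:
    """Upload the GGUF file with a boto3 's3' client and return its s3:// URI."""
    # upload_file uses managed multipart transfers, which suits large model files.
    s3_client.upload_file(local_path, bucket, key)
    return f"s3://{bucket}/{key}"
```

For example: outputs = stack_outputs(boto3.client("cloudformation"), "my-stack") followed by upload_model(boto3.client("s3"), outputs["ModelBucketName"], "model.gguf"), where the stack name and output key are placeholders.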

Testing the local agent

Once the deployment is complete and the model is downloaded, we can test the agent through the AWS IoT Core MQTT test client. Steps:

- Subscribe to the things/…/# topic to view the responses of the agent.

- Publish a test query to the input topic things/…/agent/query.

- You should receive responses on multiple topics:

  - Final response topic (things/…/agent/response), which contains the final response of the Orchestrator Agent.

  - Sub-agent responses topic (things/…/agent/subagent), which contains the responses from intermediate agents such as the OPC-UA Agent and the Documentation Agent.

The agent processes your query using the local SLM and provides responses based on both the OPC-UA simulated data and the equipment documentation stored locally.

For demonstration purposes, we use the AWS IoT Core MQTT test client as a simple interface to communicate with the local device. In production, Strands Agents can run fully on the device itself, eliminating the need for any cloud interaction.
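Instead of the MQTT test client, you can publish a test query from a script with the boto3 iot-data client. The sketch assumes the device-specific segment of the topic is the thing name and that the component reads the question from a "query" field; both are assumptions, so check the component source for the actual payload format. Depending on your setup, you may need to create the client with endpoint_url set to https:// followed by the endpointAddress returned by the iot client's describe_endpoint(endpointType="iot:Data-ATS").

```python
import json
from typing import Any, Dict


def query_topic(thing_name: str) -> str:
    """Build the agent input topic (assumes the device segment is the thing name)."""
    return f"things/{thing_name}/agent/query"


def publish_query(iot_data_client, thing_name: str, question: str) -> Dict[str, Any]:
    """Publish a test query with a boto3 'iot-data' client and return the message sent."""
    message = {"query": question}  # hypothetical payload field name
    iot_data_client.publish(topic=query_topic(thing_name), qos=1, payload=json.dumps(message))
    return message
```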

Monitoring the component

To monitor the component's operation, you can connect remotely to your AWS IoT Greengrass device and check the component logs:

This shows you the real-time operation of the agent, including model loading, query processing, and response generation. You can learn more about the Greengrass logging system in the Monitor AWS IoT Greengrass logs documentation.
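If you want to filter component logs from a script rather than a terminal, the sketch below returns the last lines of a log file, optionally keeping only lines that contain a given string. It assumes the default Greengrass installation root /greengrass/v2, where each component writes to logs/<component name>.log; the component name is a placeholder, and reading the file usually requires root privileges.

```python
from collections import deque
from pathlib import Path
from typing import List

GREENGRASS_ROOT = Path("/greengrass/v2")  # default installation root (assumption)


def component_log_path(component_name: str, root: Path = GREENGRASS_ROOT) -> Path:
    """Return the log file path for a component under the Greengrass root."""
    return root / "logs" / f"{component_name}.log"


def tail(path: Path, lines: int = 50, contains: str = "") -> List[str]:
    """Return the last `lines` lines that contain `contains` (all lines when it is empty)."""
    with open(path, encoding="utf-8", errors="replace") as f:
        matching = (line.rstrip("\n") for line in f if contains in line)
        # A bounded deque keeps only the most recent matches while streaming the file.
        return list(deque(matching, maxlen=lines))
```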

Go to the AWS IoT Greengrass console to delete the resources created in this post:

- Go to Deployments, choose the deployment that you used to deploy the component, then revise the deployment by removing the Strands Agents component.

- If you have deployed the S3FileDownloader component, you can remove it from the deployment as explained in the previous step.

- Go to Components, choose the Strands Agents component and choose 'Delete version' to delete the component.

- If you have created the S3FileDownloader component, you can delete it as explained in the previous step.

- If you deployed the CloudFormation stack to run the demo on an EC2 instance, delete the stack from the AWS CloudFormation console. Note that the EC2 instance incurs hourly charges until it is stopped or terminated.

- If you don't need the Greengrass core device, you can delete it from the Core devices section of the Greengrass console.

- After deleting the Greengrass core device, delete the IoT certificate attached to the core thing. To find the thing certificate, go to the AWS IoT Things console, choose the IoT thing created in this guide, view the Certificates tab, choose the attached certificate, choose Actions, then choose Deactivate and Delete.
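The certificate clean-up steps above can also be scripted with the boto3 iot client. This is a sketch: it reads only the first page of list_thing_principals, skips principals that are not certificates, and waits briefly after detaching because DetachThingPrincipal is asynchronous. Confirm the thing name before running it, since deleted certificates cannot be restored.

```python
import time
from typing import List


def delete_thing_certificates(iot_client, thing_name: str, settle_seconds: float = 5.0) -> List[str]:
    """Detach, deactivate and delete every certificate attached to an IoT thing."""
    deleted = []
    principals = iot_client.list_thing_principals(thingName=thing_name)["principals"]
    for arn in principals:
        if ":cert/" not in arn:
            continue  # skip non-certificate principals
        cert_id = arn.split("/")[-1]
        iot_client.detach_thing_principal(thingName=thing_name, principal=arn)
        iot_client.update_certificate(certificateId=cert_id, newStatus="INACTIVE")
        # Detaching is asynchronous; give it time to propagate before deleting.
        time.sleep(settle_seconds)
        # forceDelete allows deleting an inactive certificate that is no longer attached to a thing.
        iot_client.delete_certificate(certificateId=cert_id, forceDelete=True)
        deleted.append(cert_id)
    return deleted
```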

In this post, we showed how to run an SLM locally using Ollama, integrated through Strands Agents on AWS IoT Greengrass. This workflow demonstrated how lightweight AI models can be deployed and managed on constrained hardware while benefiting from cloud integration for scale and monitoring. Using OPC-UA as our manufacturing example, we highlighted how SLMs at the edge enable operators to query equipment status, interpret telemetry, and access documentation in real time, even with limited connectivity. The hybrid model ensures that critical decisions happen locally, while complex analytics and retraining are handled securely in the cloud.

This architecture can be extended to create a hybrid cloud-edge AI agent system, where edge AI agents (using AWS IoT Greengrass) seamlessly integrate with cloud-based agents (using Amazon Bedrock). This enables distributed collaboration: edge agents manage real-time, low-latency processing and immediate actions, while cloud agents handle complex reasoning, data analytics, model refinement, and orchestration.

About the authors

Ozan Cihangir is a Senior Prototyping Engineer at the AWS Specialists & Partners Organization. He helps customers build innovative solutions for their emerging technology projects in the cloud.

Luis Orus is a senior member of the AWS Specialists & Partners Organization, where he has held multiple roles, from building high-performing teams at global scale to helping customers innovate and experiment quickly through prototyping.

Amir Majlesi leads the EMEA prototyping team within the AWS Specialists & Partners Organization. He has extensive experience in helping customers accelerate cloud adoption, expedite their path to production and foster a culture of innovation. Through rapid prototyping methodologies, Amir enables customer teams to build cloud-native applications, with a focus on emerging technologies such as Generative & Agentic AI, Advanced Analytics, Serverless and IoT.

Jaime Stewart focused his Solutions Architect internship within the AWS Specialists & Partners Organization on edge inference with SLMs. Jaime is currently pursuing an MSc in Artificial Intelligence.
