Apache Airflow 3.x on Amazon MWAA introduces architectural enhancements such as API-based task execution, which provides enhanced security and isolation. Other major updates include a redesigned UI for a better user experience, scheduler-based backfills for improved performance, and support for Python 3.12. Unlike in-place minor Airflow version upgrades in Amazon MWAA, upgrading from Airflow 2 to Airflow 3 requires careful planning and execution through a migration approach because of fundamental breaking changes.

This migration presents an opportunity to adopt next-generation workflow orchestration capabilities while maintaining business continuity. However, it's more than a simple upgrade. Organizations migrating to Airflow 3.x on Amazon MWAA must understand key breaking changes, including the removal of direct metadata database access from workers, the removal of SubDAGs, changes to default scheduling behavior, and library dependency updates. This post provides best practices and a streamlined approach to successfully navigate this critical migration, minimizing disruption to your mission-critical data pipelines while taking full advantage of the enhanced capabilities of Airflow 3.

Understanding the migration process

The journey from Airflow 2.x to 3.x on Amazon MWAA introduces several fundamental changes that organizations must understand before beginning their migration. These changes affect core workflow operations and require careful planning to achieve a smooth transition.

You should be aware of the following breaking changes:

- Removal of direct database access – A critical change in Airflow 3 is the removal of direct metadata database access from worker nodes. Tasks and custom operators must now communicate through the REST API instead of direct database connections. This architectural change affects code that previously accessed the metadata database directly through SQLAlchemy connections, requiring refactoring of existing DAGs and custom operators.

- SubDAG removal – Airflow 3 removes the SubDAG construct in favor of TaskGroups, Assets, and data-aware scheduling. Organizations must refactor existing SubDAGs to use one of these constructs.

- Scheduling behavior changes – Two notable changes to default scheduling options require an impact analysis:

- The default values for catchup_by_default and create_cron_data_intervals changed to False. This change affects DAGs that don't explicitly set these options.

- Airflow 3 removes several context variables, such as execution_date, tomorrow_ds, yesterday_ds, prev_ds, and next_ds. You must replace these variables with currently supported context variables.

- Library and dependency changes – A significant number of libraries change in Airflow 3.x, requiring DAG code refactoring. Many previously included provider packages might need to be added explicitly to the requirements.txt file.

- REST API changes – The REST API path changes from /api/v1 to /api/v2, affecting external integrations. For more information about using the Airflow REST API, see Creating a web server session token and calling the Apache Airflow REST API.

- Authentication system – Although Airflow 3.0.1 and later versions default to SimpleAuthManager instead of Flask-AppBuilder, Amazon MWAA will continue to use Flask-AppBuilder for Airflow 3.x. This means customers on Amazon MWAA won't see any authentication changes.

The migration requires creating a new environment rather than performing an in-place upgrade. Although this approach demands more planning and resources, it lets you keep your existing environment as a fallback option during the transition, supporting business continuity throughout the migration process.

Pre-migration planning and assessment

Successful migration depends on thorough planning and assessment of your current environment. This phase establishes the foundation for a smooth transition by identifying dependencies, configurations, and potential compatibility issues. Evaluate your environment and code against the breaking changes described earlier.

Environment assessment

Begin by conducting a complete inventory of your current Amazon MWAA environment. Document all DAGs, custom operators, plugins, and dependencies, including their specific versions and configurations. Make sure that your current environment is on version 2.10.x, because this provides the best compatibility path for upgrading to Amazon MWAA with Airflow 3.x.

Identify the structure of the Amazon Simple Storage Service (Amazon S3) bucket containing your DAG code, requirements file, startup script, and plugins. You'll replicate this structure in a new bucket for the new environment. Creating separate buckets for each environment avoids conflicts and allows continued development without affecting current pipelines.

Configuration documentation

Document all custom Amazon MWAA environment variables, Airflow connections, and environment configurations. Review AWS Identity and Access Management (IAM) resources, because your new environment's execution role will need identical policies. IAM users or roles accessing the Airflow UI require the CreateWebLoginToken permission for the new environment.

Pipeline dependencies

Understanding pipeline dependencies is critical for a successful phased migration. Identify interdependencies through Datasets (now Assets), SubDAGs, TriggerDagRun operators, or external API interactions. Build your migration plan around these dependencies so that related DAGs migrate at the same time.

Consider DAG scheduling frequency when planning migration waves. DAGs with longer intervals between runs provide larger migration windows and a lower risk of duplicate execution compared with frequently running DAGs.
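To make the dependency grouping concrete, the following Python sketch treats each dependency you identify (for example, an Asset producer and its consumer, or a TriggerDagRunOperator and its target DAG) as an edge, and groups DAGs into connected components that should migrate together. The function name and input shape are hypothetical; you would build the edge list yourself from your inventory.

```python
from collections import defaultdict


def group_dependent_dags(dags, edges):
    """Group DAGs into migration units (connected components).

    dags:  iterable of DAG IDs.
    edges: iterable of (upstream_dag_id, downstream_dag_id) pairs, for example
           Asset producer -> consumer or TriggerDagRunOperator caller -> target.
    Returns a sorted list of sorted DAG ID lists; each inner list moves together.
    """
    parent = {dag: dag for dag in dags}

    def find(dag):
        # Path halving keeps repeated lookups cheap.
        while parent[dag] != dag:
            parent[dag] = parent[parent[dag]]
            dag = parent[dag]
        return dag

    def union(a, b):
        root_a, root_b = find(a), find(b)
        if root_a != root_b:
            parent[root_b] = root_a

    for upstream, downstream in edges:
        # Register DAGs that only appear in edges, then merge their groups.
        parent.setdefault(upstream, upstream)
        parent.setdefault(downstream, downstream)
        union(upstream, downstream)

    groups = defaultdict(list)
    for dag in parent:
        groups[find(dag)].append(dag)
    return sorted(sorted(members) for members in groups.values())
```

Edge direction is ignored on purpose: splitting either side of a dependency across the two environments can break the pipeline, so both ends belong in the same wave.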

Testing strategy

Build your testing strategy around a systematic approach to identifying compatibility issues. Use the Ruff linter with the AIR30 ruleset to automatically identify code requiring updates, for example by running `ruff check --preview --select AIR30 <path_to_your_dags>`.

Then, review and update your environment's requirements.txt file to make sure package versions comply with the updated constraints file. Additionally, commonly used operators that were previously included in the airflow-core package now reside in a separate package, apache-airflow-providers-standard, which must be added to your requirements file.

Test your DAGs using the Amazon MWAA Docker images for Airflow 3.x. These images let you create and test your requirements file, and confirm that the scheduler successfully parses your DAGs.

Migration strategy and best practices

A methodical migration approach minimizes risk while providing clear validation checkpoints. The recommended strategy uses a phased blue/green deployment model that provides reliable migrations and immediate rollback capabilities.

Phased migration approach

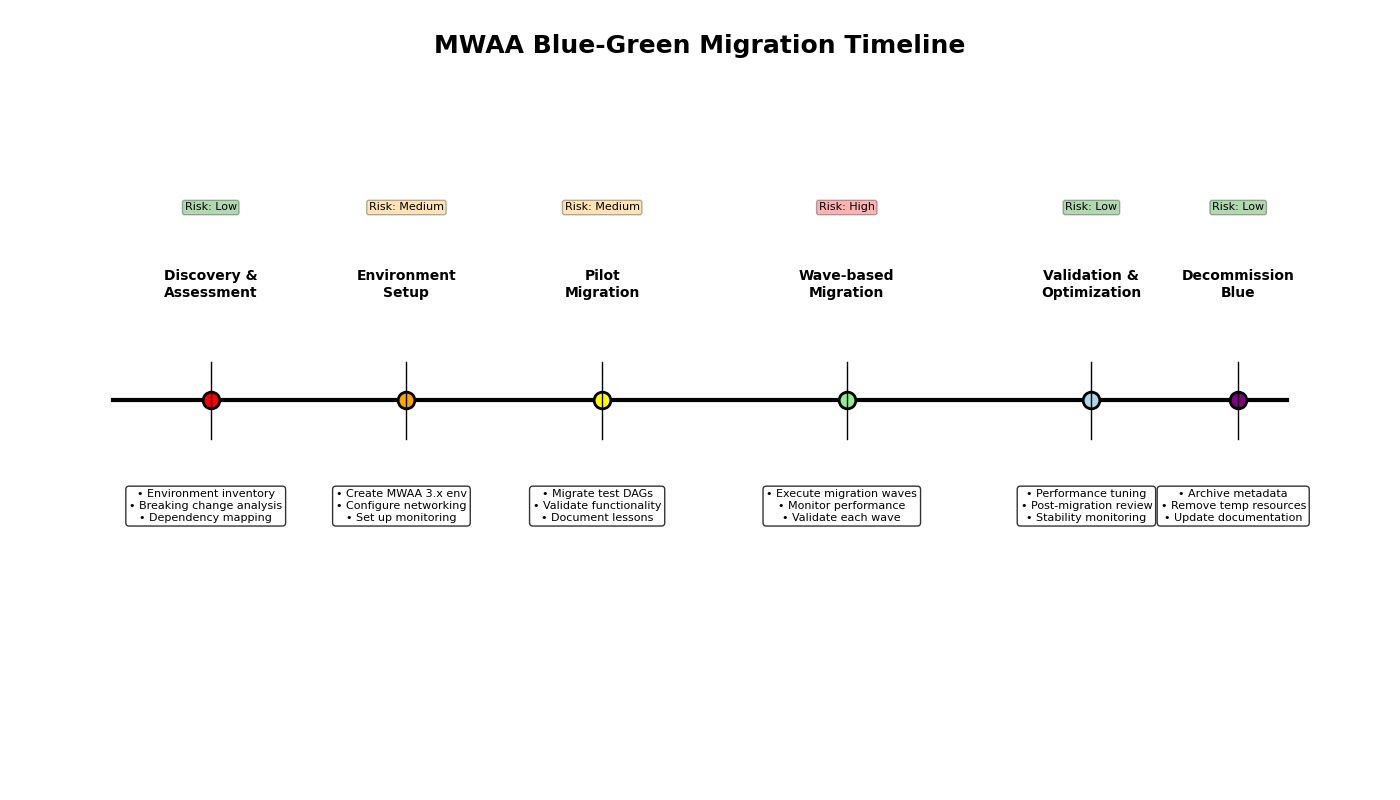

The following migration phases can help you define your migration plan:

- Phase 1: Discovery, assessment, and planning – In this phase, complete your environment inventory, dependency mapping, and breaking change analysis. Use the gathered information to develop a detailed migration plan. This plan should include steps for updating code, updating your requirements file, creating a test environment, testing, creating the blue/green environments (discussed later in this post), and performing the migration itself. Planning must also cover training, the monitoring strategy, rollback conditions, and the rollback plan.

- Phase 2: Pilot migration – The pilot migration validates your detailed migration plan in a controlled setting with a limited scope of impact. Focus the pilot on two or three non-critical DAGs with varied characteristics, such as different schedules and dependencies. Migrate the selected DAGs using the migration plan defined in the previous phase. Use this phase to validate your plan and monitoring tools, and adjust both based on actual results. During the pilot, establish baseline migration metrics to help predict the performance of the full migration.

- Phase 3: Wave-based production migration – After a successful pilot, you're ready to begin the full wave-based migration for the remaining DAGs. Group the remaining DAGs into logical waves based on business criticality (least critical first), technical complexity, interdependencies (migrate dependent DAGs together), and scheduling frequency (less frequent DAGs provide larger migration windows). After you define the waves, work with stakeholders to develop the wave schedule. Include sufficient validation periods between waves to confirm that each wave is successful before starting the next. These periods also limit the scope of impact if a migration issue occurs, and provide sufficient time to perform a rollback.

- Phase 4: Post-migration review and decommissioning – After all waves are complete, conduct a post-migration review to identify lessons learned, optimization opportunities, and any other unresolved items. This is also a good time to obtain sign-off on system stability. The final step is to decommission the original (blue) Airflow 2.x environment after stability is confirmed, based on business requirements and stakeholder input.
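One way to turn the Phase 3 criteria into an ordered wave list is sketched below. It assumes you have already grouped interdependent DAGs into units and scored each unit's criticality on a hypothetical 1 to 5 scale; technical complexity isn't modeled and would need its own weighting. The class and function names are illustrative only.

```python
from dataclasses import dataclass


@dataclass
class MigrationUnit:
    """DAGs that must move together (hypothetical structure)."""
    dag_ids: list
    criticality: int      # assumed scale: 1 = least critical ... 5 = most critical
    runs_per_day: float   # lower frequency means a larger migration window


def plan_waves(units, max_dags_per_wave):
    """Order units least critical and least frequent first, then pack them into waves."""
    ordered = sorted(units, key=lambda u: (u.criticality, u.runs_per_day))
    waves, current, size = [], [], 0
    for unit in ordered:
        n = len(unit.dag_ids)
        # Close the current wave if this unit would overflow it. A unit that is
        # larger than the limit still gets a wave of its own and is never split.
        if current and size + n > max_dags_per_wave:
            waves.append(current)
            current, size = [], 0
        current.extend(unit.dag_ids)
        size += n
    if current:
        waves.append(current)
    return waves
```

Keeping units intact, even when that makes a wave larger than planned, preserves the rule that dependent DAGs migrate together.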

Blue/green deployment strategy

Implement a blue/green deployment strategy for a safe, reversible migration. With this strategy, you run two Amazon MWAA environments during the migration and manage which DAGs operate in which environment.

The blue environment (current Airflow 2.x) maintains production workloads during the transition. You can implement a freeze window for DAG changes before migration to avoid last-minute code conflicts. This environment serves as the immediate rollback target if an issue is identified in the new (green) environment.

The green environment (new Airflow 3.x) receives migrated DAGs in controlled waves. It mirrors the networking, IAM roles, and security configurations of the blue environment. Configure this environment with the same options as the blue environment, and create identical monitoring mechanisms so both environments can be monitored simultaneously. To avoid duplicate DAG runs, make sure that each DAG runs in only one environment. This involves pausing the DAG in the blue environment before activating it in the green environment.

Keep the blue environment in warm standby mode for the entire migration. Document specific rollback steps for each migration wave, and test your rollback procedure with at least one non-critical DAG. Additionally, define clear criteria for triggering a rollback (such as specific failure rates or SLA violations).
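Writing the rollback criteria down as code can make the go/no-go decision repeatable across waves. This sketch assumes you collect task failure counts and business SLA misses per wave from your own monitoring; the class names, metric names, and default thresholds are illustrative, not Amazon MWAA or Airflow APIs.

```python
from dataclasses import dataclass


@dataclass
class WaveHealth:
    """Metrics observed for one migration wave, gathered from your own monitoring."""
    task_failures: int
    task_attempts: int
    sla_misses: int  # business SLA violations as you define and measure them


@dataclass
class RollbackCriteria:
    """Thresholds agreed with stakeholders before the wave starts (example values)."""
    max_failure_rate: float = 0.05
    max_sla_misses: int = 0


def should_roll_back(health, criteria):
    """Return (decision, reasons) so every rollback decision is explainable."""
    reasons = []
    if health.task_attempts:
        rate = health.task_failures / health.task_attempts
        if rate > criteria.max_failure_rate:
            reasons.append(f"failure rate {rate:.1%} exceeds {criteria.max_failure_rate:.1%}")
    if health.sla_misses > criteria.max_sla_misses:
        reasons.append(f"{health.sla_misses} SLA misses exceed limit of {criteria.max_sla_misses}")
    return bool(reasons), reasons
```

Returning the reasons alongside the decision gives you a record to attach to the wave's runbook entry.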

Step-by-step migration process

This section provides detailed steps for conducting the migration.

Pre-migration assessment and preparation

Before initiating the migration process, conduct a thorough assessment of your current environment and develop the migration plan:

- Make sure that your current Amazon MWAA environment is on version 2.10.x

- Create a detailed inventory of your DAGs, custom operators, and plugins, along with their dependencies and versions

- Review your current requirements.txt file to understand package requirements

- Document all environment variables, connections, and configuration settings

- Review the Apache Airflow 3.x release notes to understand breaking changes

- Determine your migration success criteria, rollback conditions, and rollback plan

- Identify a small number of DAGs suitable for the pilot migration

- Develop a plan to train, or familiarize, Amazon MWAA users on Airflow 3
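For the inventory step, a small script can list what each DAG file imports. This helps cross-check requirements.txt and spot imports such as airflow.operators.* that are affected by the move to apache-airflow-providers-standard. This is a sketch using Python's standard ast module against a local copy of your DAG folder; the function names are our own.

```python
import ast
from collections import Counter
from pathlib import Path


def imported_modules(source, filename="<dag>"):
    """Return the absolute module names imported by one Python source file."""
    modules = []
    for node in ast.walk(ast.parse(source, filename=filename)):
        if isinstance(node, ast.Import):
            modules.extend(alias.name for alias in node.names)
        elif isinstance(node, ast.ImportFrom) and node.module and node.level == 0:
            # Relative imports (level > 0) point at your own code; skip them.
            modules.append(node.module)
    return modules


def inventory_dag_folder(dag_folder):
    """Count imported modules across every .py file under a local DAG folder."""
    counts = Counter()
    for path in sorted(Path(dag_folder).rglob("*.py")):
        counts.update(imported_modules(path.read_text(encoding="utf-8"), str(path)))
    return counts
```

Because the files are parsed rather than imported, the scan works even when the DAGs' own dependencies aren't installed locally.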

Compatibility checks

Identifying compatibility issues is critical to a successful migration. This step helps developers focus on the specific code that's incompatible with Airflow 3.

Use the Ruff linter with the AIR30 ruleset to automatically identify code requiring updates, for example with `ruff check --preview --select AIR30 <path_to_your_dags>`.

Additionally, review your code for instances of direct metadata database access.
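A quick heuristic scan can surface candidates for this review. The sketch below flags imports that commonly accompany direct metadata database access in Airflow 2 code. The pattern list is an assumption and not exhaustive, and a SQLAlchemy import may be legitimate if it targets your own business database rather than the Airflow metadata database, so treat every finding as something to inspect, not an automatic error.

```python
import ast

# Heuristic patterns that often indicate direct metadata database access in
# Airflow 2 code. This list is an assumption, not an exhaustive rule set.
SUSPECT_IMPORTS = {
    ("airflow.settings", "Session"),
    ("airflow.utils.session", "create_session"),
    ("airflow.utils.session", "provide_session"),
}
SUSPECT_MODULE_PREFIXES = ("sqlalchemy",)


def find_direct_db_access(source, filename="<dag>"):
    """Return sorted (line, description) findings that deserve a manual review."""
    findings = []
    for node in ast.walk(ast.parse(source, filename=filename)):
        if isinstance(node, ast.ImportFrom) and node.module:
            for alias in node.names:
                if (node.module, alias.name) in SUSPECT_IMPORTS:
                    findings.append((node.lineno, f"imports {node.module}.{alias.name}"))
            if node.module.startswith(SUSPECT_MODULE_PREFIXES):
                findings.append((node.lineno, f"imports from {node.module}"))
        elif isinstance(node, ast.Import):
            for alias in node.names:
                if alias.name.startswith(SUSPECT_MODULE_PREFIXES):
                    findings.append((node.lineno, f"imports {alias.name}"))
    return sorted(findings)
```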

DAG code updates

Based on your findings during compatibility testing, update the affected DAG code for Airflow 3.x. Ruff can automatically fix many common issues when run in fix mode, for example with `ruff check --preview --select AIR30 --fix <path_to_your_dags>`.

Common changes include:

- Replace direct metadata database access with API calls.

- Replace deprecated context variables with their current equivalents, such as logical_date in place of execution_date.
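As a sketch of the first change, code that once queried the DagRun table through a SQLAlchemy session can instead call the REST API's /api/v2/dags/{dag_id}/dagRuns endpoint. The base URL and authentication headers are assumptions here; on Amazon MWAA you obtain them as described in Creating a web server session token and calling the Apache Airflow REST API. The response fields used (dag_runs, dag_run_id) reflect our reading of the v2 API, and the request is built but not sent, so you can adapt the transport.

```python
import json
import urllib.parse
import urllib.request

# Before (Airflow 2, no longer allowed from workers), roughly:
#   with create_session() as session:
#       runs = session.query(DagRun).filter(DagRun.dag_id == dag_id).all()


def build_dag_runs_request(base_url, dag_id, auth_headers, state="success", limit=10):
    """Build (but don't send) a GET request for /api/v2/dags/{dag_id}/dagRuns."""
    query = urllib.parse.urlencode({"state": state, "limit": limit})
    dag_path = urllib.parse.quote(dag_id, safe="")
    url = f"{base_url.rstrip('/')}/api/v2/dags/{dag_path}/dagRuns?{query}"
    headers = {"Accept": "application/json", **auth_headers}
    return urllib.request.Request(url, headers=headers, method="GET")


def latest_run_ids(response_body):
    """Extract dag_run_id values from a DAG run collection response body."""
    payload = json.loads(response_body)
    return [run["dag_run_id"] for run in payload.get("dag_runs", [])]
```

To send the request, pass it to urllib.request.urlopen and feed the response body to latest_run_ids.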

Next, evaluate the impact of the two scheduling-related default changes. catchup_by_default is now False, meaning missed DAG runs no longer automatically backfill. If backfill is required, update the DAG definition with catchup=True. If your DAGs require backfill, consider how it interacts with this migration: because you're migrating a DAG to a clean environment with no run history, enabling catchup creates DAG runs for every interval beginning with the specified start_date. Consider updating the start_date to avoid unnecessary runs.
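The arithmetic behind the start_date advice is simple: with catchup enabled in a fresh environment, the scheduler has no history and creates a run for each missed interval. This sketch approximates that count for a fixed interval such as a daily schedule; it's a simplified model rather than Airflow's scheduler logic, and a real timetable may differ by a run at the boundaries.

```python
from datetime import datetime, timedelta, timezone


def catchup_run_count(start_date, now, interval=timedelta(days=1)):
    """Approximate how many runs catchup=True creates in a fresh environment.

    Counts complete fixed-length intervals between start_date and now. This is
    a simplified model of interval-based scheduling, not Airflow's own logic.
    """
    if now <= start_date:
        return 0
    return (now - start_date) // interval


if __name__ == "__main__":
    start = datetime(2023, 1, 1, tzinfo=timezone.utc)
    migration_day = datetime(2025, 6, 1, tzinfo=timezone.utc)
    print(catchup_run_count(start, migration_day))  # 882 daily runs
```

By this count, a daily DAG with a start_date of 2023-01-01 that is migrated on 2025-06-01 would queue 882 runs, while moving its start_date to 2025-05-01 reduces that to 31.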

create_cron_data_intervals is also now False. With this change, cron expressions are interpreted using CronTriggerTimetable instead of CronDataIntervalTimetable.
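The practical effect shows up in templated dates. In the simplified model below for a daily midnight cron (0 0 * * *), the run that starts at midnight gets the previous day as its logical date under the old interval-based behavior, but the trigger time itself under CronTriggerTimetable, so {{ ds }} shifts by one day. This illustrates our understanding of the two timetables rather than calling Airflow itself.

```python
from datetime import datetime, timedelta


def logical_dates_for_daily_midnight_run(run_time):
    """Compare the logical date ({{ ds }}) of a '0 0 * * *' run starting at run_time.

    Simplified model:
    - create_cron_data_intervals=True (CronDataIntervalTimetable): the midnight
      run processes the previous day, so its logical date is one day earlier.
    - create_cron_data_intervals=False (CronTriggerTimetable, Airflow 3 default):
      the logical date is the trigger time itself.
    """
    return {
        "cron_data_interval": (run_time - timedelta(days=1)).date().isoformat(),
        "cron_trigger": run_time.date().isoformat(),
    }


if __name__ == "__main__":
    print(logical_dates_for_daily_midnight_run(datetime(2025, 3, 2)))
```

If a task writes to a partition named after {{ ds }}, the same midnight run would target 2025-03-01 under the old default and 2025-03-02 under the new one, unless you change create_cron_data_intervals or use an explicit timetable.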

Finally, evaluate the usage of deprecated context variables in manually triggered and Asset-triggered DAGs, then update your code with suitable replacements.
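For Jinja templates, simple one-to-one replacements can be applied mechanically. The sketch below rewrites plain {{ name }} expressions using a mapping we consider safe (execution_date to logical_date, and tomorrow_ds or yesterday_ds to macros.ds_add) and flags prev_ds and next_ds for manual review, because their meaning depends on the run type and timetable. The mapping is an assumption to verify against the Airflow documentation, and the function deliberately leaves more complex expressions untouched; Ruff covers Python code.

```python
import re

# Replacements we assume are safe one-to-one; verify them for your DAGs.
TEMPLATE_REPLACEMENTS = {
    "execution_date": "logical_date",
    "tomorrow_ds": "macros.ds_add(ds, 1)",
    "yesterday_ds": "macros.ds_add(ds, -1)",
}
# Meaning depends on run type and timetable; no automatic rewrite assumed.
MANUAL_REVIEW = {"prev_ds", "next_ds"}

_SIMPLE_EXPR = re.compile(r"\{\{\s*(\w+)\s*\}\}")


def rewrite_template(template):
    """Rewrite simple {{ var }} expressions; return (new_template, vars_to_review)."""
    needs_review = set()

    def substitute(match):
        name = match.group(1)
        if name in TEMPLATE_REPLACEMENTS:
            return "{{ " + TEMPLATE_REPLACEMENTS[name] + " }}"
        if name in MANUAL_REVIEW:
            needs_review.add(name)
        return match.group(0)  # leave everything else exactly as written

    return _SIMPLE_EXPR.sub(substitute, template), sorted(needs_review)
```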

Updating requirements and testing

In addition to potential package version changes, several core Airflow operators previously included in the airflow-core package moved to the apache-airflow-providers-standard package. These changes must be incorporated into your requirements.txt file. Specifying, or pinning, package versions in your requirements file is a best practice and recommended for this migration.

To update your requirements file, complete the following steps:

- Download and configure the Amazon MWAA Docker images. For more details, refer to the GitHub repo.

- Copy the current environment's requirements.txt file to a new file.

- If needed, add the apache-airflow-providers-standard package to the new requirements file.

- Download the appropriate Airflow constraints file for your target Airflow version to your working directory. A constraints file is available for each combination of Airflow version and Python version. The URL takes the following form:

https://raw.githubusercontent.com/apache/airflow/constraints-${AIRFLOW_VERSION}/constraints-${PYTHON_VERSION}.txt

- Create your versioned requirements file using your unversioned file and the constraints file. For guidance on creating a requirements file, see Creating a requirements.txt file. Make sure that there are no dependency conflicts before moving forward.

- Verify your requirements file using the Docker image. Run the following command inside the running container:

Address any installation errors by updating package versions.
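The constraints-file steps can be scripted. This sketch builds the constraints URL in the format given in the steps and pins bare package names from your unversioned requirements file. It passes through lines that already have version specifiers, extras, markers, or pip options, and reports names that the constraints file doesn't cover so you can pin them yourself. The function names are hypothetical, and any version numbers you pass in are placeholders for your own targets.

```python
import re


def constraints_url(airflow_version, python_version):
    """Build the constraints file URL for an Airflow and Python version pair."""
    return (
        "https://raw.githubusercontent.com/apache/airflow/"
        f"constraints-{airflow_version}/constraints-{python_version}.txt"
    )


def _normalize(name):
    # PEP 503-style normalization so "Boto3" or "my_pkg" match constraint entries.
    return re.sub(r"[-_.]+", "-", name).lower()


def pin_requirements(unversioned_lines, constraints_text):
    """Pin bare requirement names using versions from a constraints file.

    Returns (pinned_lines, missing_names).
    """
    pins = {}
    for line in constraints_text.splitlines():
        line = line.strip()
        if line and not line.startswith("#") and "==" in line:
            name, version = line.split("==", 1)
            pins[_normalize(name.strip())] = version.strip()

    pinned, missing = [], []
    for raw in unversioned_lines:
        line = raw.strip()
        # Pass through blanks, comments, pip options, and already-specified lines.
        if not line or line.startswith(("#", "-")) or re.search(r"[<>=!~@;\[]", line):
            pinned.append(line)
            continue
        version = pins.get(_normalize(line))
        if version is None:
            missing.append(line)
            pinned.append(line)
        else:
            pinned.append(f"{line}=={version}")
    return pinned, missing
```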

As a best practice, we recommend packaging your Python dependencies into a ZIP file for deployment in Amazon MWAA. This makes sure that the exact same packages are installed on all Airflow nodes. Refer to Installing Python dependencies using PyPi.org Requirements File Format for detailed information about packaging dependencies.

Creating a new Amazon MWAA 3.x environment

Because Amazon MWAA requires a migration approach for major version upgrades, you must create a new environment for your blue/green deployment. This post uses the AWS Command Line Interface (AWS CLI) as an example; you can also use infrastructure as code (IaC).

- Create a new S3 bucket using the same structure as the current S3 bucket.

- Upload the updated requirements file and any plugin packages to the new S3 bucket.

- Generate a template for your new environment configuration, for example with `aws mwaa create-environment --generate-cli-skeleton > new-environment.json`.

- Modify the generated JSON file:

- Copy configurations from your existing environment.

- Update the environment name.

- Set the AirflowVersion parameter to the target 3.x version.

- Update the S3 bucket properties (such as SourceBucketArn) to point to the new S3 bucket.

- Review and update other configuration parameters as needed.

Configure the new environment with the same networking settings, security groups, and IAM roles as your existing environment. Refer to the Amazon MWAA User Guide for these configurations.

- Create your new environment, for example with `aws mwaa create-environment --cli-input-json file://new-environment.json`.
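If you prefer to derive the JSON file from your existing environment rather than filling in the skeleton by hand, a sketch like the following can help. It assumes blue_env is the Environment object returned by aws mwaa get-environment, and it uses an allowlist of keys we believe CreateEnvironment accepts; compare the allowlist with your generated skeleton before using the result. S3 object versions are dropped because they refer to objects in the old bucket, and AirflowConfigurationOptions still need a manual review for Airflow 3 compatibility.

```python
import copy

# Keys we assume CreateEnvironment accepts and that are worth carrying over from
# a GetEnvironment response. Verify against `--generate-cli-skeleton` output.
CARRY_OVER_KEYS = {
    "ExecutionRoleArn", "NetworkConfiguration", "DagS3Path", "PluginsS3Path",
    "RequirementsS3Path", "StartupScriptS3Path", "AirflowConfigurationOptions",
    "EnvironmentClass", "MaxWorkers", "MinWorkers", "Schedulers",
    "WebserverAccessMode", "KmsKey", "Tags", "WeeklyMaintenanceWindowStart",
    "LoggingConfiguration",
}


def build_green_config(blue_env, new_name, airflow_version, new_bucket_arn):
    """Derive create-environment input for the green environment from the blue one."""
    # Deep copy so edits below never mutate the blue environment's description.
    config = {k: copy.deepcopy(v) for k, v in blue_env.items() if k in CARRY_OVER_KEYS}
    # GetEnvironment reports a read-only CloudWatchLogGroupArn per log type;
    # we assume CreateEnvironment only takes Enabled and LogLevel there.
    for settings in config.get("LoggingConfiguration", {}).values():
        settings.pop("CloudWatchLogGroupArn", None)
    config.update(Name=new_name, AirflowVersion=airflow_version, SourceBucketArn=new_bucket_arn)
    return config
```

Write the result to a file with json.dump and pass that file to aws mwaa create-environment --cli-input-json.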

Metadata migration

Your new environment requires the same variables, connections, roles, and pool configurations. Use this section as a guide for migrating this information. If you're using AWS Secrets Manager as your secrets backend, you don't need to migrate any connections. Depending on your environment's size, you can migrate this metadata using the Airflow UI or the Apache Airflow REST API.

- Update any custom pool information in the new environment using the Airflow UI.

- For environments using the metadata database as a secrets backend, migrate all connections to the new environment.

- Migrate all variables to the new environment.

- Migrate any custom Airflow roles to the new environment.
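For larger variable sets, the REST API route can be scripted. The sketch below turns a key-to-value JSON mapping, such as a variables export from the Airflow 2 environment, into POST requests for the Airflow 3 /api/v2/variables endpoint. The base URL and authentication headers are assumptions, the requests are built but not sent, and storing non-string values as JSON text is our assumption about how you want them preserved.

```python
import json
import urllib.request


def build_variable_requests(exported_variables, base_url, auth_headers):
    """Build (but don't send) POST /api/v2/variables requests from a key-to-value dict."""
    url = f"{base_url.rstrip('/')}/api/v2/variables"
    headers = {"Content-Type": "application/json", **auth_headers}
    reqs = []
    for key, value in sorted(exported_variables.items()):
        # Keep strings as-is; serialize dicts, lists, and numbers as JSON text.
        stored = value if isinstance(value, str) else json.dumps(value)
        body = json.dumps({"key": key, "value": stored}).encode("utf-8")
        reqs.append(urllib.request.Request(url, data=body, headers=headers, method="POST"))
    return reqs
```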

Migration execution and validation

Plan and execute the transition from your old environment to the new one:

- Schedule the migration during a period of low workflow activity to minimize disruption.

- Implement a freeze window for DAG changes before and during the migration.

- Execute the migration in phases:

- Pause DAGs in the old environment. For a small number of DAGs, you can use the Airflow UI. For larger groups, consider using the REST API.

- Verify in the Airflow UI that all running tasks have completed.

- Redirect DAG triggers and external integrations to the new environment.

- Copy the updated DAGs to the new environment's S3 bucket.

- Enable DAGs in the new environment. For a small number of DAGs, you can use the Airflow UI. For larger groups, consider using the REST API.

- Monitor the new environment closely during the initial operation period:

- Watch for failed tasks or scheduling issues.

- Check for missing variables or connections.

- Verify that external system integrations are functioning correctly.

- Monitor Amazon CloudWatch metrics to confirm the environment is performing as expected.
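The pause-then-enable order can be captured in a small script. This sketch builds PATCH requests that set is_paused on each DAG, using /api/v1 for the Airflow 2 (blue) environment and /api/v2 for the Airflow 3 (green) environment. It returns the two halves separately so that the checks between them (running tasks finished, DAGs copied to the new bucket) stay explicit. Base URLs and headers are assumptions, and nothing is sent.

```python
import json
import urllib.request


def _patch_paused(base_url, api_version, dag_id, paused, auth_headers):
    """Build a PATCH /api/{version}/dags/{dag_id} request that sets is_paused."""
    url = f"{base_url.rstrip('/')}/api/{api_version}/dags/{dag_id}"
    body = json.dumps({"is_paused": paused}).encode("utf-8")
    headers = {"Content-Type": "application/json", **auth_headers}
    return urllib.request.Request(url, data=body, headers=headers, method="PATCH")


def cutover_plan(dag_ids, blue_url, green_url, blue_headers, green_headers):
    """Return (pause_in_blue, unpause_in_green) request lists, in that order.

    Send every pause request first, confirm running tasks in blue have finished
    and the DAGs are in the green bucket, and only then send the unpause list.
    """
    pause = [_patch_paused(blue_url, "v1", d, True, blue_headers) for d in dag_ids]
    unpause = [_patch_paused(green_url, "v2", d, False, green_headers) for d in dag_ids]
    return pause, unpause
```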

Post-migration validation

After the migration, thoroughly validate the new environment:

- Verify that all DAGs are being scheduled correctly according to their defined schedules

- Check that task history and logs are accessible and complete

- Test critical workflows end to end to confirm they execute successfully

- Validate that connections to external systems are functioning properly

- Monitor CloudWatch metrics to validate performance
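To confirm the one-environment-per-DAG rule after cutover, you can compare the DAG lists from both environments. This sketch assumes you have fetched each environment's list-DAGs response and reduced it to dag_id and is_paused pairs; the function name is hypothetical. It reports DAGs that are missing from green (often a parse error), still paused in green, or still active in blue.

```python
def compare_dag_states(expected_dag_ids, blue_dags, green_dags):
    """Check that each migrated DAG is active only in the green environment.

    blue_dags / green_dags: lists of {"dag_id": ..., "is_paused": ...} entries.
    Returns a list of human-readable problems; an empty list means all is well.
    """
    blue = {d["dag_id"]: d["is_paused"] for d in blue_dags}
    green = {d["dag_id"]: d["is_paused"] for d in green_dags}
    problems = []
    for dag_id in sorted(expected_dag_ids):
        if dag_id not in green:
            problems.append(f"{dag_id}: missing in green (check for parse errors)")
        elif green[dag_id]:
            problems.append(f"{dag_id}: still paused in green")
        if blue.get(dag_id) is False:
            problems.append(f"{dag_id}: still active in blue (duplicate runs possible)")
    return problems
```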

Cleanup and documentation

When the migration is complete and the new environment is stable, complete the following steps:

- Document the changes made during the migration process.

- Update runbooks and operational procedures to reflect the new environment.

- After a sufficient stability period, defined by stakeholders, decommission the old environment:

- Archive backup data according to your organization's retention policies.

Conclusion

The journey from Airflow 2.x to 3.x on Amazon MWAA is an opportunity to adopt next-generation workflow orchestration capabilities while maintaining the reliability of your workflow operations. By following these best practices and taking a methodical approach, you can navigate this transition while minimizing risks and disruptions to your business operations.

A successful migration requires thorough preparation, systematic testing, and clear documentation throughout the process. Although the migration approach requires more initial effort, it provides the safety and control needed for such a significant upgrade.

About the authors