OpenAI models have advanced dramatically over the past few years. The journey began with GPT-3.5 and has now reached GPT-5.1 and the newer o-series reasoning models. While ChatGPT uses GPT-5.1 as its primary model, the API gives you access to many more options designed for different kinds of tasks. Some models are optimized for speed and cost, others are built for deep reasoning, and some specialize in images or audio.

In this article, I’ll walk you through all the major models available through the API. You’ll learn what each model is best suited for, which kind of project it fits, and how to work with it using simple code examples. The aim is to give you a clear understanding of when to choose a particular model and how to use it effectively in a real application.

GPT-3.5 Turbo: The Foundation of Modern AI

GPT-3.5 Turbo kicked off the generative AI revolution. It powered the original ChatGPT and remains a stable, low-cost option for simple tasks. The model is tuned for following instructions and holding a conversation. It can answer questions, summarize text, and write simple code. Newer models are smarter, but GPT-3.5 Turbo can still be used for high-volume tasks where cost is the main consideration.

Key Features:

- Speed and Cost: It is very fast and very cheap.

- Instruction Following: It reliably follows simple prompts.

- Context: The original release supports a 4K token window (roughly 3,000 words); later snapshots extend this to about 16K tokens.
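To get a feel for the context limit, here is a minimal sketch that estimates whether a piece of text fits in the window. It assumes the rough ratio quoted above (4,000 tokens for about 3,000 words); the function names and the `reserve_for_reply` parameter are hypothetical, and a real tokenizer such as tiktoken would give exact counts.

```python
import math

# Rough heuristic from the ratio above: 4,000 tokens ~ 3,000 words.
# This is an approximation, not a tokenizer.
WORDS_PER_TOKEN = 3000 / 4000


def estimate_tokens(text: str) -> int:
    """Estimate the token count from the whitespace-separated word count."""
    words = len(text.split())
    # Round up so the estimate errs on the cautious side.
    return math.ceil(words / WORDS_PER_TOKEN)


def fits_in_context(text: str, context_tokens: int = 4000,
                    reserve_for_reply: int = 500) -> bool:
    """Check whether the prompt plus a reserved reply budget fits the window."""
    return estimate_tokens(text) + reserve_for_reply <= context_tokens


print(fits_in_context("Summarize this short paragraph."))
```

Reserving part of the window for the reply matters because the prompt and the completion share the same token budget.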

Hands-on Example:

The following is a short Python script that uses GPT-3.5 Turbo for text summarization.

    import openai
    from google.colab import userdata

    # Read the API key from Colab's secret store and create the client
    client = openai.OpenAI(api_key=userdata.get('OPENAI_KEY'))

    messages = [
        {"role": "system", "content": "You are a helpful summarization assistant."},
        {"role": "user", "content": "Summarize this: OpenAI changed the tech world with GPT-3.5 in 2022."}
    ]

    # Send the conversation to the model
    response = client.chat.completions.create(
        model="gpt-3.5-turbo",
        messages=messages
    )

    # The reply text lives on the first choice
    print(response.choices[0].message.content)

GPT-4 Family: Multimodal Powerhouses

The GPT-4 family was a huge breakthrough. The series includes GPT-4, GPT-4 Turbo, and the highly efficient GPT-4o. These models are multimodal, meaning they can understand both text and images. Their main strength lies in complex reasoning, legal research, and nuanced creative writing.

GPT-4o Features:

- Multimodal Input: It handles text and images together.

- Speed: GPT-4o (the “o” stands for “omni”) is roughly twice as fast as GPT-4 Turbo, according to OpenAI.

- Price: It is much cheaper than the original GPT-4 model.
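As an illustration of multimodal input, the sketch below builds a chat message that combines a text question with an image reference. The helper name `build_image_question` and the example URL are hypothetical; the nested `text` / `image_url` content parts follow the Chat Completions message format.

```python
def build_image_question(question: str, image_url: str) -> list[dict]:
    """Build a single user message that carries both text and an image URL."""
    return [
        {
            "role": "user",
            "content": [
                {"type": "text", "text": question},
                {"type": "image_url", "image_url": {"url": image_url}},
            ],
        }
    ]


# Placeholder URL for illustration only
messages = build_image_question(
    "What is shown in this chart?",
    "https://example.com/chart.png",
)

# With an openai.OpenAI client, the request would then look like:
# response = client.chat.completions.create(model="gpt-4o", messages=messages)
print(messages[0]["content"][0]["text"])
```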

An OpenAI study reported that GPT-4 passed a simulated bar exam with a score in the top 10 percent of human test takers. This indicates its ability to handle sophisticated logic.

Fingers-on Instance (Complicated Logic):

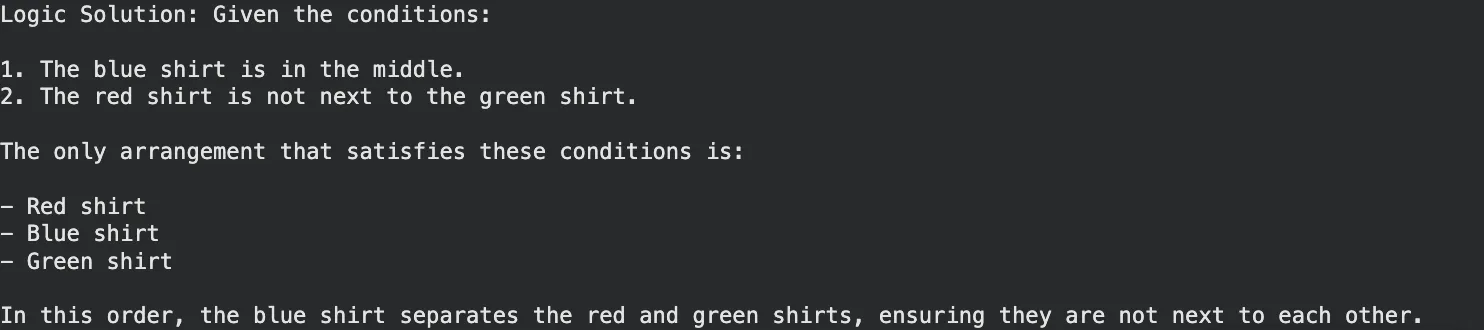

GPT-4o has the aptitude of fixing a logic puzzle which entails reasoning.

    # Adjacent string literals are joined into one prompt
    messages = [
        {"role": "user", "content": "I have 3 shirts. One is red, one blue, one green. "
                                    "The red is not next to the green. The blue is in the middle. "
                                    "What is the order?"}
    ]

    response = client.chat.completions.create(
        model="gpt-4o",
        messages=messages
    )

    print("Logic Solution:", response.choices[0].message.content)
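When a model answers a puzzle like this, it helps to have an independent check. The sketch below is a plain brute-force search over the puzzle as worded in the prompt, not something the model runs. It shows that two orderings satisfy the constraints, so a correct answer should mention both or point out the symmetry.

```python
from itertools import permutations

SHIRTS = ("red", "blue", "green")


def is_valid(order: tuple) -> bool:
    """Apply the two constraints from the prompt to one left-to-right order."""
    blue_in_middle = order[1] == "blue"
    red_next_to_green = abs(order.index("red") - order.index("green")) == 1
    return blue_in_middle and not red_next_to_green


# Try every arrangement and keep those that satisfy both constraints
solutions = [order for order in permutations(SHIRTS) if is_valid(order)]
print(solutions)
```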

The o-Series: Models That Think Before They Speak

In late 2024 and early 2025, OpenAI introduced the o-series (o1, o1-mini, and o3-mini). These are “reasoning models.” Unlike standard GPT models, they do not answer immediately but take time to think and plan an approach. This makes them stronger at math, science, and difficult coding problems.

o1 and o3-mini Highlights:

- Chain of Thought: The model checks its own steps internally, which reduces errors.

- Coding Prowess: o3-mini is designed to be fast and accurate at coding tasks.

- Efficiency: o3-mini offers strong reasoning at a lower price than the full o1 model.

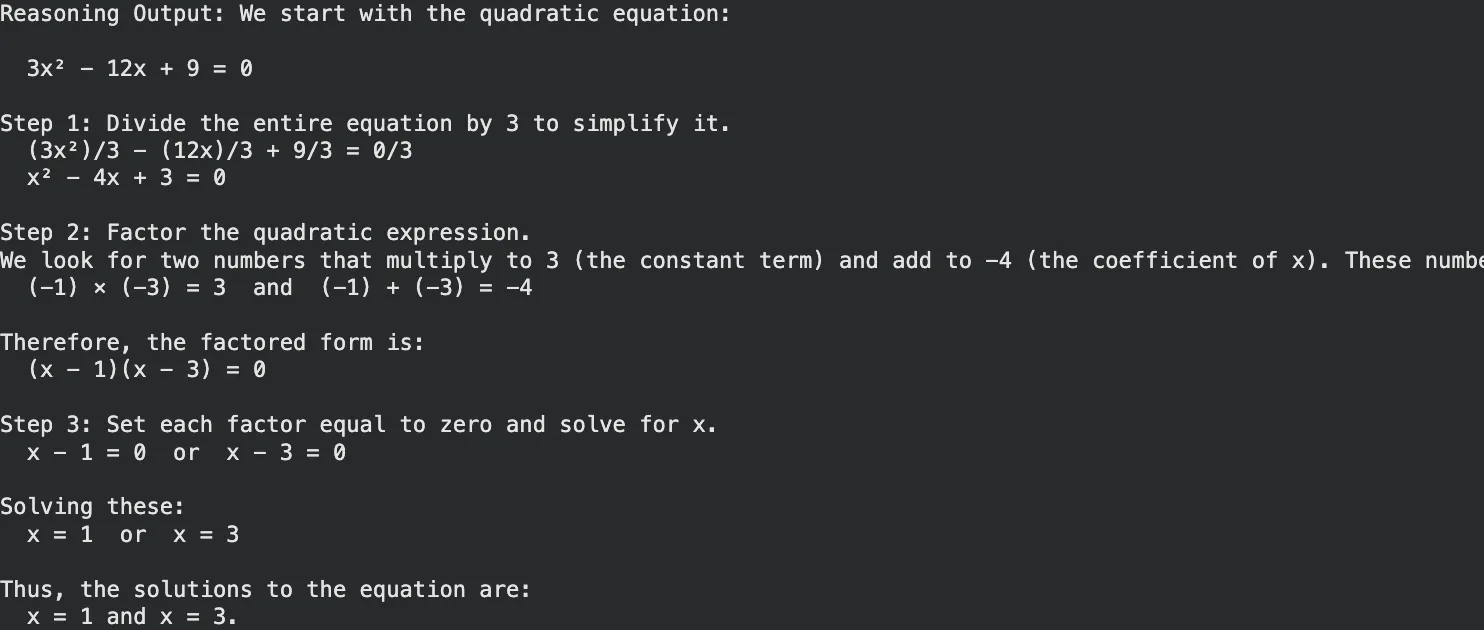

Hands-on Example (Math Reasoning):

Use o3-mini for a math problem where step-by-step verification is crucial.

    # Using the o3-mini reasoning model
    response = client.chat.completions.create(
        model="o3-mini",
        messages=[{"role": "user", "content": "Solve for x: 3x^2 - 12x + 9 = 0. Explain steps."}]
    )

    print("Reasoning Output:", response.choices[0].message.content)
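Since the point of this example is verification, it is useful to know the expected answer before reading the model's explanation. The sketch below applies the quadratic formula to the same equation; the helper `solve_quadratic` is a hypothetical name and assumes a nonzero leading coefficient.

```python
import math


def solve_quadratic(a: float, b: float, c: float) -> tuple:
    """Return the real roots of a*x^2 + b*x + c = 0 in ascending order (a != 0)."""
    discriminant = b * b - 4 * a * c
    if discriminant < 0:
        return ()  # no real roots
    root = math.sqrt(discriminant)
    # A set removes the duplicate when the discriminant is zero
    return tuple(sorted({(-b - root) / (2 * a), (-b + root) / (2 * a)}))


roots = solve_quadratic(3, -12, 9)
print(roots)  # (1.0, 3.0)
```

Substituting back confirms both values: 3 - 12 + 9 = 0 and 27 - 36 + 9 = 0.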

GPT-5 and GPT-5.1: The Next Generation

GPT-5, launched in mid-2025, and its optimized version GPT-5.1 combine speed and reasoning. GPT-5 offers built-in thinking, in which the model itself decides when to think and when to answer quickly. GPT-5.1 is refined with advanced enterprise controls and fewer hallucinations.

What sets them apart:

- Adaptive Thinking: It sends simple queries down fast routes and hard problems down deeper reasoning routes.

- Enterprise Grade: GPT-5.1 offers deep research with Pro features.

- GPT Image 1: This built-in image model replaces DALL-E 3 for seamless image creation in chat.

Hands-on Example (Business Strategy):

GPT-5.1 excels at high-level strategy that involves general knowledge and structured thinking.

    # Example using GPT-5.1 for strategic planning
    response = client.chat.completions.create(
        model="gpt-5.1",
        messages=[{"role": "user", "content": "Draft a go-to-market strategy for a new AI coffee machine."}]
    )

    print("Strategy Draft:", response.choices[0].message.content)

DALL-E 3 and GPT Image: Visual Creativity

For visual content, OpenAI provides DALL-E 3 and the more recent GPT Image models. These tools transform text prompts into detailed images. With DALL-E 3 you can create illustrations, logos, and diagrams simply by describing them.


Key Capabilities:

- Prompt Adherence: It closely follows detailed instructions.

- Integration: It is integrated into ChatGPT and the API.

Hands-on Example (Image Generation):

This script generates an image URL based on your text prompt.

    # Request a single 1024x1024 image from DALL-E 3
    image_response = client.images.generate(
        model="dall-e-3",
        prompt="A futuristic city with flying cars in a cyberpunk style",
        n=1,
        size="1024x1024"
    )

    # The response contains a URL to the generated image
    print("Image URL:", image_response.data[0].url)
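Resolution and quality are set per request, so it can help to validate them before calling the API. The sketch below wraps the arguments of the call above in a hypothetical helper, `build_dalle3_request`. The allowed values listed are the DALL-E 3 options as I understand them and should be checked against the current API reference.

```python
# Options assumed for DALL-E 3; verify against the current API reference.
DALLE3_SIZES = {"1024x1024", "1792x1024", "1024x1792"}
DALLE3_QUALITIES = {"standard", "hd"}


def build_dalle3_request(prompt: str, size: str = "1024x1024",
                         quality: str = "standard") -> dict:
    """Return keyword arguments for images.generate after basic validation."""
    if size not in DALLE3_SIZES:
        raise ValueError(f"Unsupported size: {size}")
    if quality not in DALLE3_QUALITIES:
        raise ValueError(f"Unsupported quality: {quality}")
    # DALL-E 3 generates one image per request, so n is fixed at 1
    return {"model": "dall-e-3", "prompt": prompt, "n": 1,
            "size": size, "quality": quality}


request = build_dalle3_request("A futuristic city with flying cars",
                               size="1792x1024", quality="hd")
print(request)
```

The dictionary can be passed to the images endpoint with `**request` once an `openai.OpenAI` client is available.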

Whisper: Speech-to-Text Mastery

Whisper is OpenAI’s state-of-the-art speech recognition system. It can transcribe audio in dozens of languages and translate it into English. It is robust to background noise and accents. The following snippet shows how simple the Whisper API is to use.

Hands-on Example (Transcription):

Make sure you are in a directory that contains an audio file named speech.mp3.

    # Open the audio in binary mode; the with-block closes the file afterwards
    with open("speech.mp3", "rb") as audio_file:
        transcript = client.audio.transcriptions.create(
            model="whisper-1",
            file=audio_file
        )

    print("Transcription:", transcript.text)
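The snippet above transcribes audio in its original language. For the translate-into-English case, the API exposes a separate translations endpoint. The sketch below wraps it in a hypothetical helper, `translate_to_english`, which takes an existing `openai.OpenAI` client as an argument so that it makes no network call until you invoke it.

```python
def translate_to_english(client, path: str) -> str:
    """Send an audio file to the Whisper translations endpoint and return English text.

    `client` is assumed to be an openai.OpenAI instance created elsewhere.
    """
    with open(path, "rb") as audio_file:
        result = client.audio.translations.create(
            model="whisper-1",
            file=audio_file,
        )
    return result.text


# Usage, assuming a client and a non-English recording exist:
# print(translate_to_english(client, "speech.mp3"))
```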

Embeddings and Moderation: The Utility Tools

OpenAI also offers utility models that are essential for developers.

- Embeddings (text-embedding-3-small/large): These encode text as numbers (vectors). This lets you build search engines that match on meaning rather than keywords.

- Moderation: This is a free API that checks text content for hate speech, violence, or self-harm to help keep apps safe.
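A moderation check can be sketched in a few lines. The helper name `is_flagged` is hypothetical, and `client` is assumed to be an `openai.OpenAI` instance created elsewhere. The function reads the `flagged` field of the first result returned by the moderations endpoint.

```python
def is_flagged(client, text: str) -> bool:
    """Return True if the moderation endpoint flags the given text.

    `client` is assumed to be an openai.OpenAI instance created elsewhere.
    """
    response = client.moderations.create(input=text)
    # One result is returned per input string
    return response.results[0].flagged


# Usage, assuming a client exists:
# if is_flagged(client, user_message):
#     print("Message blocked by moderation.")
```

Running this check before passing user input to a chat model is a common way to keep unsafe content out of an application.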

Hands-on Example (Semantic Search):

This creates embeddings that can be used to measure the similarity between a query and a product.

    # Get embeddings
    resp = client.embeddings.create(
        input=["smartphone", "banana"],
        model="text-embedding-3-small"
    )

    # In a real app, you compare these vectors to find the best match
    print("Vector created with dimension:", len(resp.data[0].embedding))
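The comparison step mentioned in the comment is usually done with cosine similarity. The sketch below shows the idea on tiny made-up vectors, which stand in for the much longer vectors the API returns; `cosine_similarity` and `best_match` are hypothetical helper names.

```python
import math


def cosine_similarity(a: list, b: list) -> float:
    """Cosine of the angle between two vectors; 1.0 means same direction."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)


def best_match(query_vec: list, candidates: dict) -> str:
    """Return the candidate name whose vector is most similar to the query."""
    return max(candidates, key=lambda name: cosine_similarity(query_vec, candidates[name]))


# Toy 2-dimensional vectors, invented for illustration only
toy_vectors = {"smartphone": [0.9, 0.1], "banana": [0.0, 1.0]}
toy_query = [1.0, 0.0]  # stands in for the embedding of a query such as "mobile phone"

print(best_match(toy_query, toy_vectors))  # smartphone
```

In a real application the vectors would come from `resp.data[i].embedding`, and a vector database would typically replace the linear scan.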

Fine-Tuning: Customizing Your AI

Fine-tuning lets you train a model on your own data. GPT-4o-mini or GPT-3.5 can be refined to pick up a particular tone, format, or industry jargon. This is powerful for enterprise applications that need more than generic responses.

How it works:

- Prepare a JSONL file with training examples.

- Upload the file to OpenAI.

- Start a fine-tuning job.

- Use your new custom model ID in the API.
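The steps above can be sketched as follows. The helper names, the file path, and the default base model snapshot are assumptions for illustration, and `client` is assumed to be an `openai.OpenAI` instance created elsewhere. Each training example is written as one JSON object per line in the chat `messages` format.

```python
import json


def write_training_file(examples: list, path: str) -> int:
    """Write (user_text, assistant_text) pairs as one JSON object per line."""
    with open(path, "w", encoding="utf-8") as f:
        for user_text, assistant_text in examples:
            record = {
                "messages": [
                    {"role": "user", "content": user_text},
                    {"role": "assistant", "content": assistant_text},
                ]
            }
            f.write(json.dumps(record) + "\n")
    return len(examples)


def start_fine_tune(client, path: str,
                    base_model: str = "gpt-4o-mini-2024-07-18") -> str:
    """Upload the training file and start a job; returns the job ID.

    The base model name is an assumption; check which snapshots
    currently support fine-tuning.
    """
    with open(path, "rb") as f:
        uploaded = client.files.create(file=f, purpose="fine-tune")
    job = client.fine_tuning.jobs.create(training_file=uploaded.id, model=base_model)
    return job.id


# Usage, assuming a client exists:
# write_training_file([("Hi", "Hello! How can I help?")], "train.jsonl")
# job_id = start_fine_tune(client, "train.jsonl")
```

Once the job finishes, the resulting custom model ID is passed as the `model` argument in the same chat completion calls shown earlier.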

Conclusion

The OpenAI model landscape offers a tool for nearly every digital task. From the speed of GPT-3.5 Turbo to the reasoning power of o3-mini and GPT-5.1, developers have a wide range of options. You can build voice applications with Whisper, create visual assets with DALL-E 3, or analyze data with the latest reasoning models.

The barriers to entry remain low. You simply need an API key and an idea. We encourage you to test the scripts provided in this guide. Experiment with the different models to understand their strengths. Find the right balance of cost, speed, and intelligence for your specific needs. The technology exists to power your next application. It is now up to you to apply it.

Frequently Asked Questions

A. GPT-4o is a general-purpose multimodal model best for most tasks. o3-mini is a reasoning model optimized for complex math, science, and coding problems.

A. No, DALL-E 3 is a paid model priced per image generated. Costs vary based on resolution and quality settings.

A. Yes, the Whisper model is open-source. You can run it on your own hardware without paying API fees, provided you have a GPU.

A. GPT-5.1 supports a large context window (often 128k tokens or more), allowing it to process entire books or long codebases in a single pass.

A. These models are available to developers through the OpenAI API and to users through ChatGPT Plus, Team, or Enterprise subscriptions.
