The evolution of computing has always involved significant technological advancements. The latest advancements are a giant leap into the quantum computing era. Early computers, like the ENIAC, were large and relied on vacuum tubes for basic calculations. The invention of transistors and integrated circuits in the mid-20th century led to smaller, more efficient computers. The development of microprocessors in the 1970s enabled the creation of personal computers, making technology accessible to the public.

Over the decades, continuous innovation exponentially increased computing power. Now, quantum computers are in their infancy. They use the principles of quantum mechanics to address complex problems beyond the capabilities of classical computers. This advancement marks a dramatic leap in computational power and innovation.

Quantum Computing Basics and Impact

Quantum computing originated in the early 1980s, introduced by Richard Feynman, who suggested that quantum systems could be more efficiently simulated by quantum computers than by classical ones. David Deutsch later formalized this idea, proposing a theoretical model for quantum computers.

Quantum computing leverages quantum mechanics to process information differently than classical computing. It uses qubits, which can exist in state 0, state 1, or both simultaneously. This capability, known as superposition, allows for parallel processing of vast amounts of information. Additionally, entanglement enables qubits to be interconnected, enhancing processing power and communication, even across distances. Quantum interference is used to manipulate qubit states, allowing quantum algorithms to solve certain problems more efficiently than classical computers. This capability has the potential to transform fields like cryptography, optimization, drug discovery, and AI by solving problems beyond the reach of classical computers.
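To make superposition and interference a little more concrete, here is a minimal sketch that simulates a single qubit as a pair of amplitudes in plain Python. The function names are illustrative assumptions, and this is a classical toy model, not how a quantum computer is programmed.

```python
import math

def apply_hadamard(state):
    """Apply the Hadamard gate to a single-qubit state (amp_0, amp_1)."""
    amp_0, amp_1 = state
    scale = 1 / math.sqrt(2)
    return (scale * (amp_0 + amp_1), scale * (amp_0 - amp_1))

def measurement_probabilities(state):
    """Probability of reading 0 or 1 is the squared magnitude of each amplitude."""
    amp_0, amp_1 = state
    return (abs(amp_0) ** 2, abs(amp_1) ** 2)

zero_state = (1.0, 0.0)                       # qubit definitely in state 0
superposed = apply_hadamard(zero_state)       # equal superposition of 0 and 1
probabilities = measurement_probabilities(superposed)

# Applying the gate again makes the amplitudes for state 1 cancel out
# (interference), returning the qubit to state 0.
restored = apply_hadamard(superposed)
```

After one Hadamard gate both outcomes are equally likely; after the second, the amplitudes interfere and the qubit is back in state 0.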

Security and Cryptography Evolution

Threats to security and privacy have evolved alongside technological advancements. Initially, threats were simpler, such as physical theft or basic codebreaking. As technology advanced, so did the sophistication of threats, including cyberattacks, data breaches, and identity theft. To combat these, robust security measures were developed, including advanced cybersecurity protocols and cryptographic algorithms.

Cryptography is the science of securing communication and data by encrypting it into codes that require a secret key for decryption. Classical cryptographic algorithms fall into two main types: symmetric and asymmetric. Symmetric cryptography, exemplified by AES, uses the same key for both encryption and decryption, making it efficient for large data volumes. Asymmetric cryptography, including RSA and ECC, uses a public-private key pair and is commonly used for authentication, with ECC offering efficiency through smaller keys. Additionally, hash functions like SHA ensure data integrity, and key exchange methods like Diffie-Hellman enable secure key sharing over public channels. Cryptography is essential for securing internet communications, protecting databases, enabling digital signatures, and securing cryptocurrency transactions, playing a vital role in safeguarding sensitive information in the digital world.
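As a rough illustration of two of the building blocks mentioned above, the sketch below uses Python's standard library to show a SHA-256 integrity check and a toy Diffie-Hellman exchange. The modulus and generator are illustrative assumptions chosen for readability, not parameters from any standard; real deployments use vetted groups and libraries.

```python
import hashlib
import secrets

# --- Integrity: any change to the message changes the SHA-256 digest ---
original_digest = hashlib.sha256(b"transfer 100 to alice").hexdigest()
tampered_digest = hashlib.sha256(b"transfer 900 to alice").hexdigest()

# --- Toy Diffie-Hellman over a public channel ---
# Assumption: P is the Mersenne prime 2**127 - 1 and G is an arbitrary base.
# These values are for demonstration only and are NOT secure parameters.
P = 2**127 - 1
G = 3

def dh_public_value(private_key: int) -> int:
    """Value that can be sent in the clear: G^private mod P."""
    return pow(G, private_key, P)

def dh_shared_secret(peer_public_value: int, private_key: int) -> int:
    """Both sides compute the same value: G^(a*b) mod P."""
    return pow(peer_public_value, private_key, P)

alice_private = secrets.randbelow(P - 2) + 1
bob_private = secrets.randbelow(P - 2) + 1

alice_secret = dh_shared_secret(dh_public_value(bob_private), alice_private)
bob_secret = dh_shared_secret(dh_public_value(alice_private), bob_private)
```

Only the public values cross the network, yet both parties end up with the same shared secret.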

Public key cryptography is founded on mathematical problems that are easy to perform but difficult to reverse, such as multiplying large primes. RSA relies on the difficulty of prime factorization, and Diffie-Hellman relies on the discrete logarithm problem. These problems form the security basis for these cryptographic systems because they are computationally hard to solve quickly with classical computers.
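The asymmetry between multiplying and factoring can be seen in a toy RSA example. This sketch uses the small textbook primes 61 and 53, which are an assumption for illustration; real RSA moduli are thousands of bits long, which is what makes the factoring step infeasible on classical hardware.

```python
# Toy RSA with tiny primes (illustration only; requires Python 3.8+ for pow(e, -1, m)).
p, q = 61, 53
n = p * q                      # easy direction: multiply the primes
phi = (p - 1) * (q - 1)
e = 17                         # public exponent
d = pow(e, -1, phi)            # private exponent, needs knowledge of p and q

def encrypt(message: int) -> int:
    return pow(message, e, n)

def decrypt(ciphertext: int) -> int:
    return pow(ciphertext, d, n)

def factor_semiprime(modulus: int):
    """Hard direction: recover the primes by trial division.

    Instant for a toy modulus, but the work grows exponentially
    with the bit length of the modulus.
    """
    candidate = 2
    while candidate * candidate <= modulus:
        if modulus % candidate == 0:
            return candidate, modulus // candidate
        candidate += 1
    raise ValueError("modulus has no non-trivial factors")

recovered = decrypt(encrypt(65))
factors = factor_semiprime(n)
```

An attacker who can factor `n` can recompute `d`, which is why the hardness of factoring underpins the security of RSA.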

Quantum Threats

The most concerning aspect of the transition to a quantum computing era is the potential threat it poses to current cryptographic systems. A sufficiently powerful quantum computer running Shor’s algorithm could solve the factoring and discrete logarithm problems efficiently, which would break RSA, Diffie-Hellman, and ECC.

Encryption breaches can have catastrophic outcomes. This vulnerability risks exposing sensitive information and compromising cybersecurity globally. The challenge lies in developing and implementing quantum-resistant cryptographic algorithms, known as post-quantum cryptography (PQC), to protect against these threats before quantum computers become sufficiently powerful. Ensuring a timely and effective transition to PQC is critical to maintaining the integrity and confidentiality of digital systems.

Comparison – PQC, QC and CC

Post-quantum cryptography (PQC) and quantum cryptography (QC) are distinct concepts.

The table below illustrates the key differences and roles of PQC, Quantum Cryptography, and Classical Cryptography, highlighting their goals, techniques, and operational contexts.

| Feature | Post-Quantum Cryptography (PQC) | Quantum Cryptography (QC) | Classical Cryptography (CC) |
|---|---|---|---|
| Goal | Secure against quantum computer attacks | Use quantum mechanics for cryptographic tasks | Secure using mathematically hard problems |
| Operation | Runs on classical computers | Involves quantum computers or communication methods | Runs on classical computers |
| Techniques | Lattice-based, hash-based, code-based, etc. | Quantum Key Distribution (QKD), quantum protocols | RSA, ECC, AES, DES, etc. |
| Purpose | Future-proof existing cryptography | Leverage quantum mechanics for enhanced security | Secure data based on current computational limits |
| Focus | Protect current systems from future quantum threats | Achieve new levels of security using quantum principles | Provide secure communication and data protection |
| Implementation | Integrates with existing communication protocols | Requires quantum technologies for implementation | Widely implemented in existing systems and networks |

Insights into Post-Quantum Cryptography (PQC)

The National Institute of Standards and Technology (NIST) is currently reviewing a variety of quantum-resistant algorithms:

| Cryptographic Type | Key Algorithms | Basis of Security | Strengths | Challenges |
|---|---|---|---|---|
| Lattice-Based | CRYSTALS-Kyber, CRYSTALS-Dilithium | Learning With Errors (LWE), Shortest Vector Problem (SVP) | Efficient, flexible; strong candidates for standardization | Complexity in understanding and implementation |
| Code-Based | Classic McEliece | Decoding linear codes | Robust security, decades of analysis | Large key sizes |
| Hash-Based | XMSS, SPHINCS+ | Hash functions | Straightforward, reliable | Requires careful key management |
| Multivariate Polynomial | Rainbow | Systems of multivariate polynomial equations | Shows promise | Large key sizes, computational intensity |
| Isogeny-Based | SIKE (Supersingular Isogeny Key Encapsulation) | Finding isogenies between elliptic curves | Compact key sizes | Concerns about long-term security due to cryptanalysis |

As summarized above, quantum-resistant cryptography encompasses various approaches. Each offers unique strengths, such as efficiency and robustness, but also faces challenges like large key sizes or computational demands. NIST’s Post-Quantum Cryptography Standardization Project is working to rigorously evaluate and standardize these algorithms, ensuring they are secure, efficient, and interoperable.

Quantum-Ready Hybrid Cryptography

Hybrid cryptography combines classical algorithms like X25519 (an ECC-based algorithm) with post-quantum algorithms, an approach often referred to as “Hybrid Key Exchange”, to provide a dual layer of protection against both current and future threats. Even if one component is compromised, the other remains secure, preserving the integrity of the communication.
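The core idea of a hybrid key exchange can be sketched in a few lines: the two shared secrets are concatenated and fed into a key derivation step, so the derived key stays unpredictable as long as either input does. In this sketch the secrets are random stand-ins rather than outputs of real X25519 or ML-KEM operations, and the function name, context label, and use of a single HMAC-SHA256 step are assumptions for illustration, not the TLS 1.3 key schedule.

```python
import hashlib
import hmac
import secrets

def combine_shared_secrets(classical_secret: bytes, pq_secret: bytes, context: bytes) -> bytes:
    """Derive one session key from both secrets (HKDF-Extract-style sketch).

    An attacker must recover BOTH inputs to reproduce the output.
    """
    return hmac.new(context, pq_secret + classical_secret, hashlib.sha256).digest()

# Stand-ins for the secrets each key exchange would produce (hypothetical values).
x25519_secret = secrets.token_bytes(32)
mlkem_secret = secrets.token_bytes(32)

session_key = combine_shared_secrets(x25519_secret, mlkem_secret, b"hybrid-demo")

# Even if the classical secret leaked, an attacker guessing the
# post-quantum secret would still derive a different key.
key_with_wrong_pq_secret = combine_shared_secrets(
    x25519_secret, secrets.token_bytes(32), b"hybrid-demo"
)
```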

In May 2024, Google Chrome enabled ML-KEM (a post-quantum key encapsulation mechanism) by default for TLS 1.3 and QUIC, enhancing security for connections between Chrome Desktop and Google Services against future quantum computer threats.

Challenges

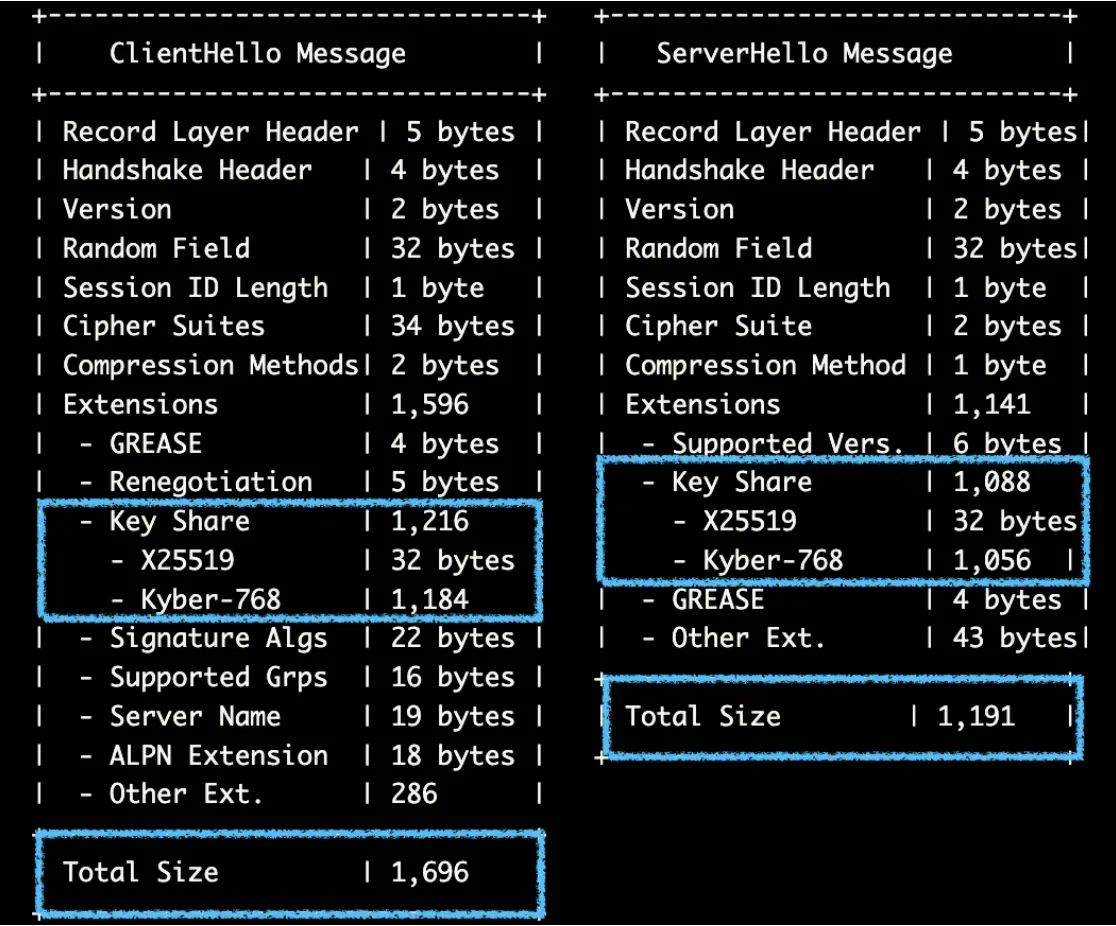

ML-KEM (Module-Lattice-Based Key-Encapsulation Mechanism), which uses lattice-based cryptography, has larger key shares because of its complex mathematical structures and needs more data to ensure strong security against future quantum computer threats. The extra data helps make the encryption hard to break, but it results in larger key sizes compared to traditional methods like X25519. Despite being larger, these key shares are designed to keep data secure in a world with powerful quantum computers.

The table below provides a comparison of the key and ciphertext sizes when using hybrid cryptography, illustrating the trade-offs in terms of size and security:

| Algorithm Type | Algorithm | Public Key Size | Ciphertext Size | Usage |
|---|---|---|---|---|
| Classical Cryptography | X25519 | 32 bytes | 32 bytes | Efficient key exchange in TLS. |
| Post-Quantum Cryptography | Kyber-512 | ~800 bytes | ~768 bytes | Moderate quantum-resistant key exchange. |
| Post-Quantum Cryptography | Kyber-768 | 1,184 bytes | 1,088 bytes | Quantum-resistant key exchange. |
| Post-Quantum Cryptography | Kyber-1024 | 1,568 bytes | 1,568 bytes | Higher security level for key exchange. |
| Hybrid Cryptography | X25519 + Kyber-512 | ~832 bytes | ~800 bytes | Combines classical and quantum security. |
| Hybrid Cryptography | X25519 + Kyber-768 | 1,216 bytes | 1,120 bytes | Enhanced security with hybrid approach. |
| Hybrid Cryptography | X25519 + Kyber-1024 | 1,600 bytes | 1,600 bytes | Robust security with hybrid methods. |
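The hybrid rows in the table are simply the sum of the classical and post-quantum components. The short sketch below reproduces that arithmetic from the sizes listed above; the dictionary and function names are illustrative.

```python
# Sizes in bytes, taken from the table above.
X25519_PUBLIC_KEY_BYTES = 32
X25519_CIPHERTEXT_BYTES = 32

KYBER_SIZES = {
    # name: (public key bytes, ciphertext bytes)
    "Kyber-512": (800, 768),
    "Kyber-768": (1184, 1088),
    "Kyber-1024": (1568, 1568),
}

def hybrid_sizes(kyber_variant: str):
    """Return (public key bytes, ciphertext bytes) for X25519 + the given Kyber variant."""
    public_key, ciphertext = KYBER_SIZES[kyber_variant]
    return (
        X25519_PUBLIC_KEY_BYTES + public_key,
        X25519_CIPHERTEXT_BYTES + ciphertext,
    )

for variant in KYBER_SIZES:
    public_key, ciphertext = hybrid_sizes(variant)
    print(f"X25519 + {variant}: public key {public_key} B, ciphertext {ciphertext} B")
```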

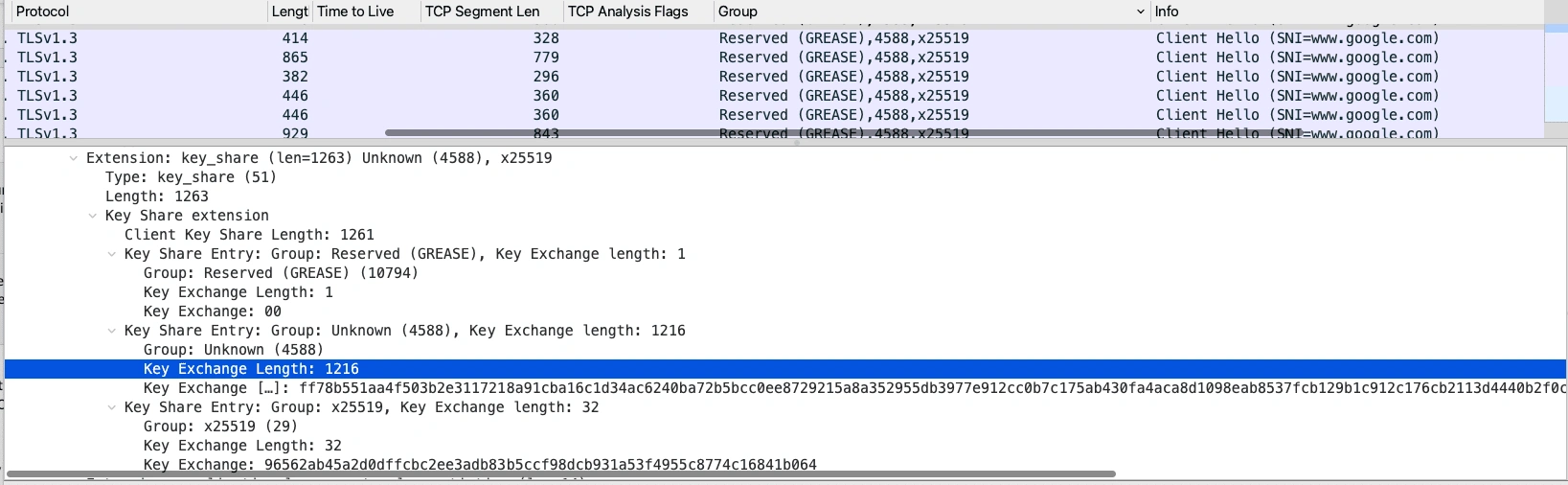

In the Wireshark capture from Google, the group identifier “4588” corresponds to the “X25519MLKEM768” cryptographic group within the ClientHello message. This identifier indicates the use of a hybrid key share combining X25519 with ML-KEM-768 (Kyber-768), which has a size of 1,216 bytes, significantly larger than the traditional X25519 key share size of 32 bytes.
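For readers who want to relate the decimal identifier to what appears on the wire, here is a small sketch that encodes a TLS 1.3 key share entry: a 2-byte group identifier, a 2-byte length, and the key exchange bytes. The key material is zero-filled placeholder data and the function name is an assumption for illustration.

```python
import struct

X25519MLKEM768_GROUP = 4588     # appears as 0x11ec in a hex dump
HYBRID_KEY_SHARE_BYTES = 1216   # 1,184 (ML-KEM-768) + 32 (X25519)

def build_key_share_entry(group_id: int, key_exchange: bytes) -> bytes:
    """Encode one key share entry: group (2 bytes) + length (2 bytes) + key data.

    '!' selects network (big-endian) byte order with no padding.
    """
    return struct.pack("!HH", group_id, len(key_exchange)) + key_exchange

placeholder_key = bytes(HYBRID_KEY_SHARE_BYTES)   # zero-filled stand-in, not a real key
entry = build_key_share_entry(X25519MLKEM768_GROUP, placeholder_key)

group_hex = hex(X25519MLKEM768_GROUP)
entry_length = len(entry)
```

The entry adds four bytes of framing to the key share itself, so this single extension entry already approaches the payload capacity of one typical Ethernet frame.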

As illustrated in the images below, the integration of Kyber-768 into the TLS handshake significantly impacts the size of both the ClientHello and ServerHello messages.

Future additions of post-quantum cryptography groups could further exceed typical MTU sizes. High MTU settings can lead to challenges such as fragmentation, network incompatibility, increased latency, error propagation, network congestion, and buffer overflows. These issues necessitate careful configuration to ensure balanced performance and reliability in network environments.
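A back-of-the-envelope calculation shows why the larger key share matters for MTU. The sketch below assumes a 1,500-byte Ethernet MTU, IPv4 and TCP headers without options, and a hypothetical 500 bytes for the rest of the ClientHello; that last figure is an assumption for illustration, not a measurement.

```python
import math

MTU_BYTES = 1500
IPV4_HEADER_BYTES = 20          # no IP options
TCP_HEADER_BYTES = 20           # no TCP options
MAX_SEGMENT_BYTES = MTU_BYTES - IPV4_HEADER_BYTES - TCP_HEADER_BYTES   # 1460

# Hypothetical size of everything in the ClientHello except the key share.
OTHER_CLIENT_HELLO_BYTES = 500

def segments_needed(key_share_bytes: int) -> int:
    """Number of TCP segments needed to carry the ClientHello."""
    total_bytes = OTHER_CLIENT_HELLO_BYTES + key_share_bytes
    return math.ceil(total_bytes / MAX_SEGMENT_BYTES)

classical_segments = segments_needed(32)     # X25519 only
hybrid_segments = segments_needed(1216)      # X25519 + Kyber-768
```

Under these assumptions the classical ClientHello fits in a single segment, while the hybrid one spills into a second.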

NGFW Adaptation

The integration of post-quantum cryptography (PQC) in protocols like TLS 1.3 and QUIC, as seen with Google’s implementation of ML-KEM, can have several implications for Next-Generation Firewalls (NGFWs):

- Encryption and Decryption Capabilities: NGFWs that perform deep packet inspection will need to handle the larger TLS handshake messages that result from the larger key sizes and ciphertexts associated with ML-KEM. This increased data load can require updates to processing capabilities and algorithms to efficiently manage the added computational load.

- Packet Fragmentation: With larger messages exceeding the typical MTU, the resulting packet fragmentation can complicate traffic inspection and management, as NGFWs must reassemble fragmented packets to effectively analyze and apply security policies.

- Performance Considerations: The adoption of PQC could affect the performance of NGFWs because of the increased computational requirements. This might necessitate hardware upgrades or optimizations in the firewall’s architecture to maintain throughput and latency standards.

- Security Policy Updates: NGFWs might need updates to their security policies and rule sets to accommodate and effectively manage the new cryptographic algorithms and larger message sizes associated with ML-KEM.

- Compatibility and Updates: NGFW vendors will need to ensure compatibility with PQC standards, which may involve firmware or software updates to support new cryptographic algorithms and protocols.
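The reassembly requirement described in the list above can be sketched as a small buffering routine: an inspection engine accumulates TCP payload bytes and only hands a TLS record to its parser once the whole record has arrived. This is a simplified illustration with hypothetical names, not how any particular NGFW is implemented; it ignores out-of-order segments, retransmissions, and handshake messages that span multiple records.

```python
import struct

TLS_RECORD_HEADER_BYTES = 5   # content type (1) + legacy version (2) + length (2)

def extract_complete_records(buffer: bytearray) -> list:
    """Remove and return every complete TLS record payload found in buffer.

    Bytes belonging to a partially received record are left in place
    until the next segment arrives.
    """
    records = []
    while len(buffer) >= TLS_RECORD_HEADER_BYTES:
        _content_type, _version, length = struct.unpack(
            "!BHH", bytes(buffer[:TLS_RECORD_HEADER_BYTES])
        )
        record_end = TLS_RECORD_HEADER_BYTES + length
        if len(buffer) < record_end:
            break                                   # wait for more segments
        records.append(bytes(buffer[TLS_RECORD_HEADER_BYTES:record_end]))
        del buffer[:record_end]
    return records

# Usage: a 1,700-byte handshake record split across two 1,460-byte-max segments.
payload = bytes(1700)                                # placeholder handshake bytes
record = struct.pack("!BHH", 22, 0x0301, len(payload)) + payload
first_segment, second_segment = record[:1460], record[1460:]

stream_buffer = bytearray()
stream_buffer += first_segment
after_first = extract_complete_records(stream_buffer)    # nothing complete yet
stream_buffer += second_segment
after_second = extract_complete_records(stream_buffer)   # full record now available
```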

By integrating post-quantum cryptography (PQC), Next-Generation Firewalls (NGFWs) can provide a forward-looking security solution, making them highly attractive to organizations aiming to protect their networks against the continuously evolving threat landscape.

Conclusion

As quantum computing advances, it poses significant threats to current cryptographic systems, making the adoption of post-quantum cryptography (PQC) essential for data security. Implementations like Google’s ML-KEM in TLS 1.3 and QUIC are crucial for enhancing security but also present challenges such as increased data loads and packet fragmentation, impacting Next-Generation Firewalls (NGFWs). The key to navigating these changes lies in cryptographic agility: ensuring systems can seamlessly integrate new algorithms. By embracing PQC and leveraging quantum advancements, organizations can strengthen their digital infrastructures, ensuring robust data integrity and confidentiality. These proactive measures will pave the way to a resilient and future-ready digital landscape. As technology evolves, our defenses must evolve too.
