Today, I’m happy to announce new serverless customization in Amazon SageMaker AI for popular AI models, such as Amazon Nova, DeepSeek, GPT-OSS, Llama, and Qwen. The new customization capability provides an easy-to-use interface for the latest fine-tuning techniques like reinforcement learning, so you can accelerate the AI model customization process from months to days.

With a few clicks, you can seamlessly select a model and customization technique, and handle model evaluation and deployment, all entirely serverless so you can focus on model tuning rather than managing infrastructure. When you choose serverless customization, SageMaker AI automatically selects and provisions the appropriate compute resources based on the model and data size.

Getting started with serverless model customization

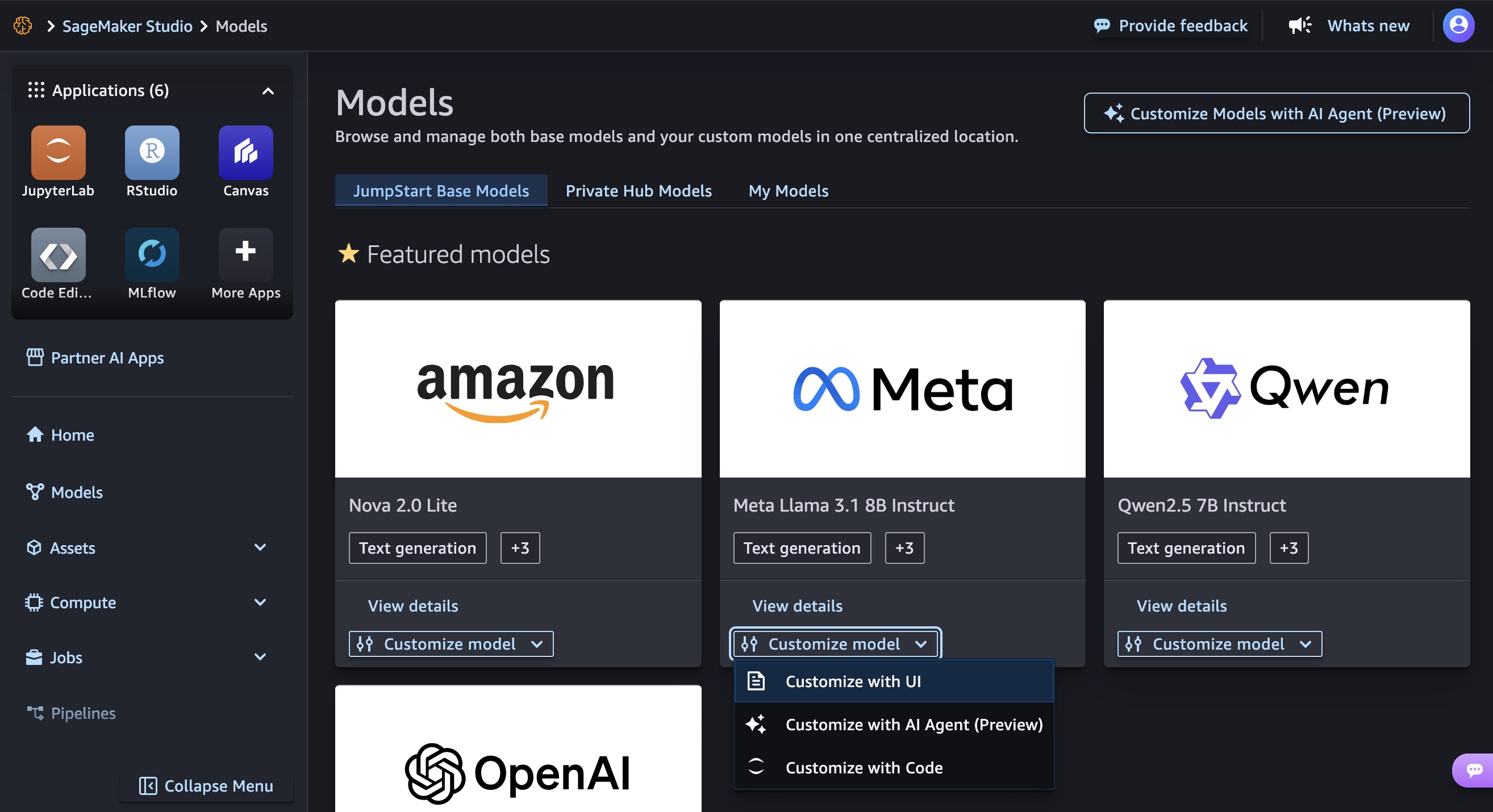

You can get started customizing models in Amazon SageMaker Studio. Choose Models in the left navigation pane and check out your favorite AI models to be customized.

Customize with UI

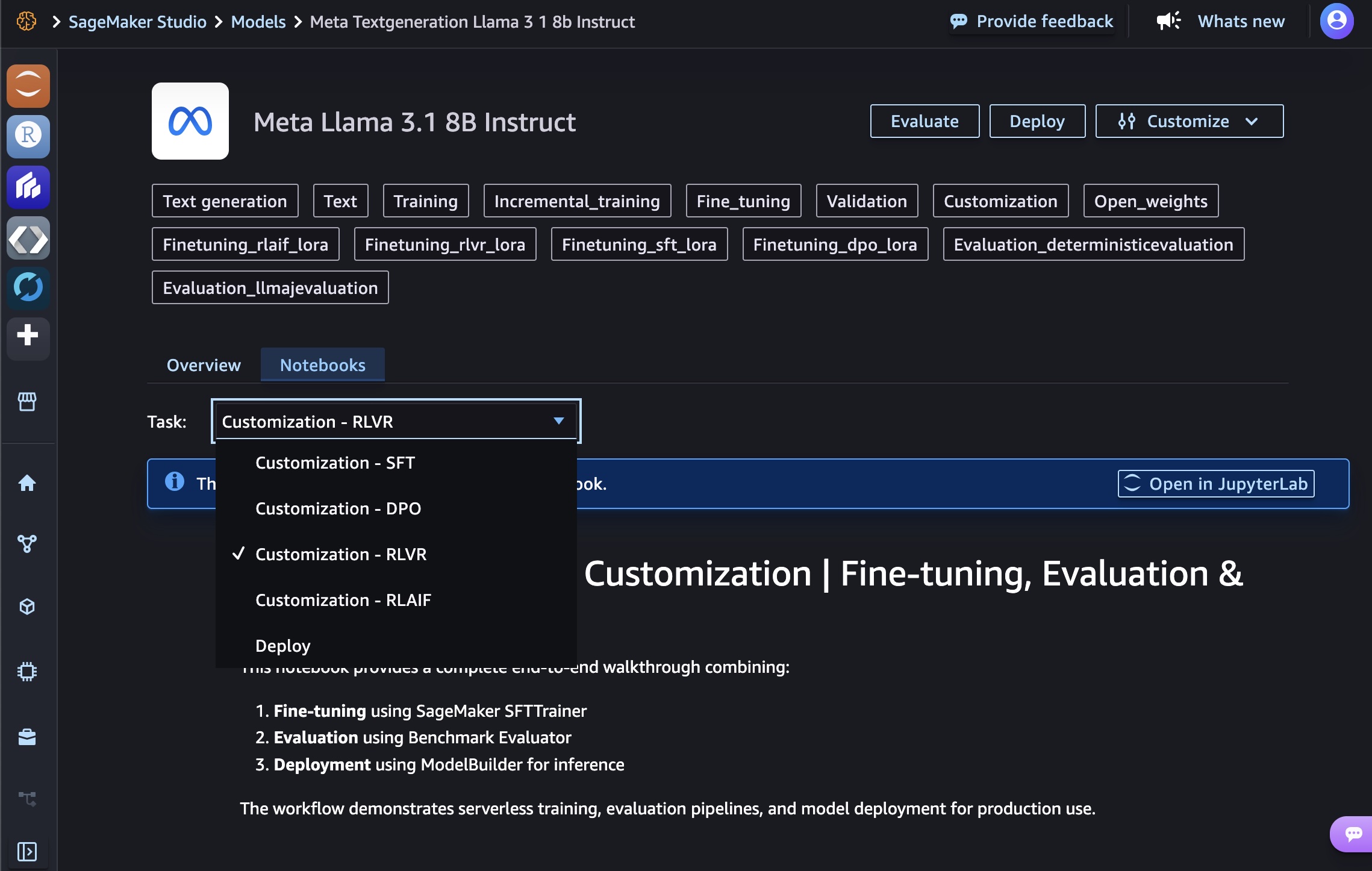

You can customize AI models in only a few clicks. In the Customize model dropdown list for a specific model such as Meta Llama 3.1 8B Instruct, choose Customize with UI.

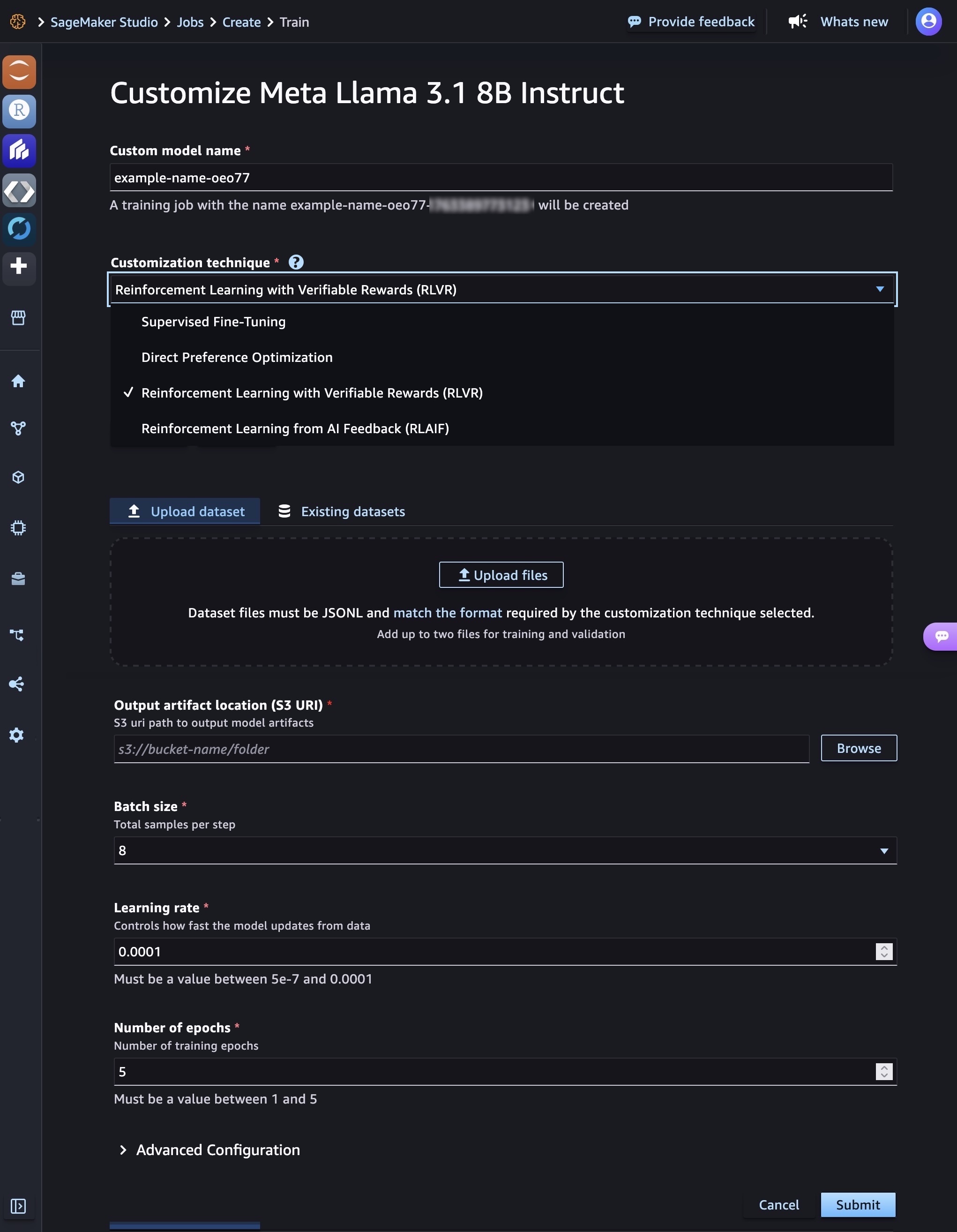

You can select a customization technique used to adapt the base model to your use case. SageMaker AI supports Supervised Fine-Tuning and the latest model customization techniques including Direct Preference Optimization, Reinforcement Learning from Verifiable Rewards (RLVR), and Reinforcement Learning from AI Feedback (RLAIF). Each technique optimizes models in different ways, with selection influenced by factors such as dataset size and quality, available computational resources, task at hand, desired accuracy levels, and deployment constraints.
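To make the idea behind RLVR more concrete: the reward comes from a programmatic check rather than from a learned preference model. The following is a minimal sketch of such a check, assuming a math-style task where the model states its final result after a hypothetical `Answer:` marker. The function name, the marker, and the 0/1 scoring are illustrative assumptions, not the reward format that SageMaker AI expects.

```python
import re

# Hypothetical marker that the model is asked to put before its final answer.
ANSWER_PATTERN = re.compile(r"Answer:\s*(-?\d+(?:\.\d+)?)")


def verifiable_reward(completion: str, expected: float, tol: float = 1e-6) -> float:
    """Return 1.0 if the final numeric answer matches the reference, else 0.0."""
    matches = ANSWER_PATTERN.findall(completion)
    if not matches:
        return 0.0  # no parsable answer, so there is nothing to verify
    predicted = float(matches[-1])  # score only the last stated answer
    return 1.0 if abs(predicted - expected) <= tol else 0.0
```

Because the check is deterministic, the same completion always receives the same reward, which is what makes this style of reward "verifiable".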

Upload or select a training dataset to match the format required by the customization technique selected. Use the values of batch size, learning rate, and number of epochs recommended by the technique selected. You can configure advanced settings such as hyperparameters, a newly introduced serverless MLflow application for experiment tracking, and network and storage volume encryption. Choose Submit to get started on your model training job.
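Before uploading a dataset, it can help to run a quick local sanity check. The sketch below assumes a JSON Lines file in which each record has `prompt` and `completion` string fields. These field names are a hypothetical example, so check the documentation for the exact format your chosen technique requires.

```python
import json
from typing import Iterable, List, Tuple

# Assumed field names for a supervised fine-tuning record; the format
# required by your chosen technique may differ.
REQUIRED_KEYS = ("prompt", "completion")


def find_invalid_records(
    lines: Iterable[str], required_keys=REQUIRED_KEYS
) -> List[Tuple[int, str]]:
    """Return (line_number, problem) pairs for records that fail basic checks."""
    problems = []
    for line_number, line in enumerate(lines, start=1):
        if not line.strip():
            continue  # ignore blank lines
        try:
            record = json.loads(line)
        except json.JSONDecodeError as err:
            problems.append((line_number, f"invalid JSON: {err.msg}"))
            continue
        if not isinstance(record, dict):
            problems.append((line_number, "record is not a JSON object"))
            continue
        for key in required_keys:
            value = record.get(key)
            if not isinstance(value, str) or not value.strip():
                problems.append((line_number, f"missing or empty field: {key}"))
    return problems
```

You could call it as `find_invalid_records(open("train.jsonl", encoding="utf-8"))`, where `train.jsonl` is a placeholder file name. An empty list means every record passed these basic checks.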

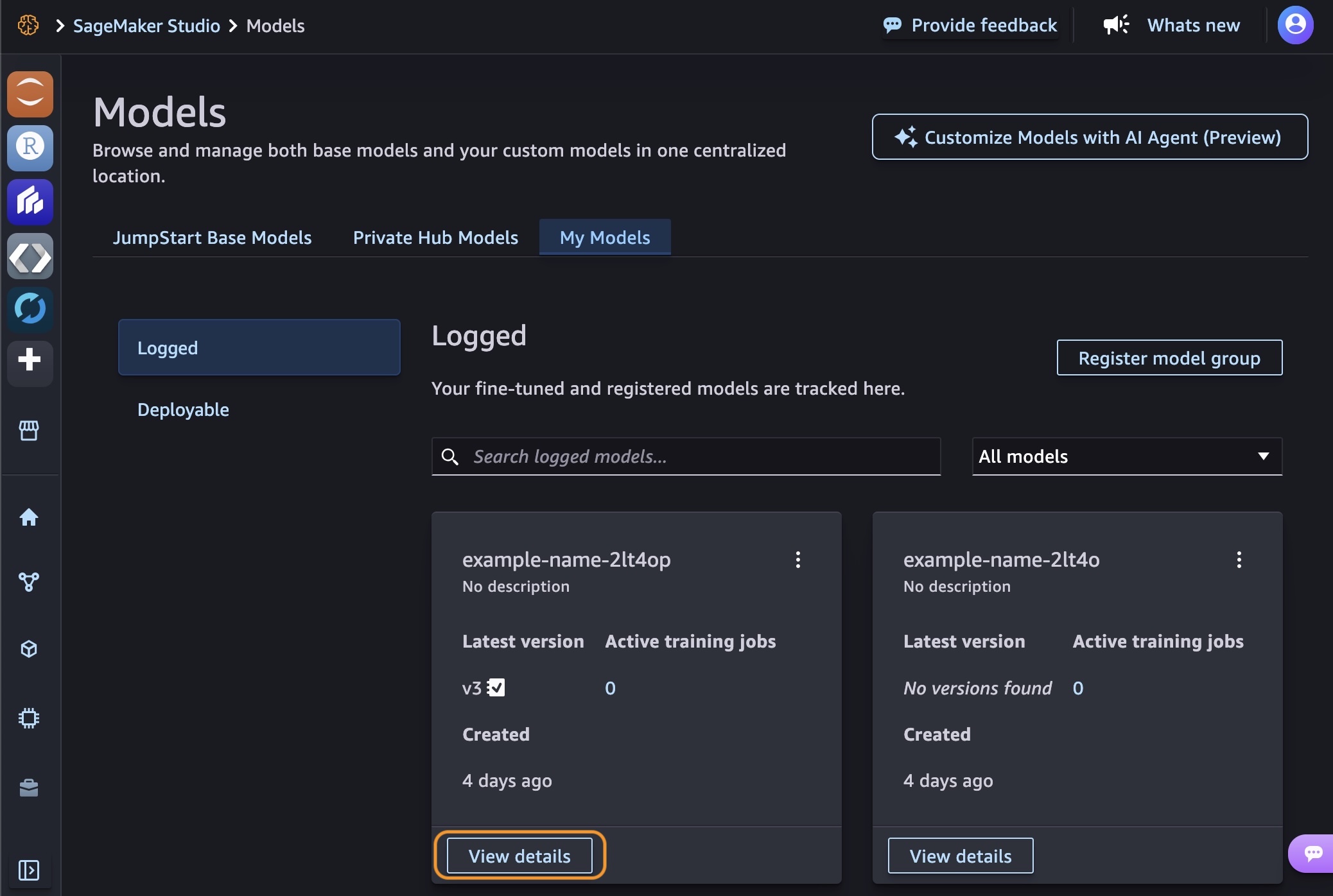

After your training job is complete, you can see the models you created in the My Models tab. Choose View details in one of your models.

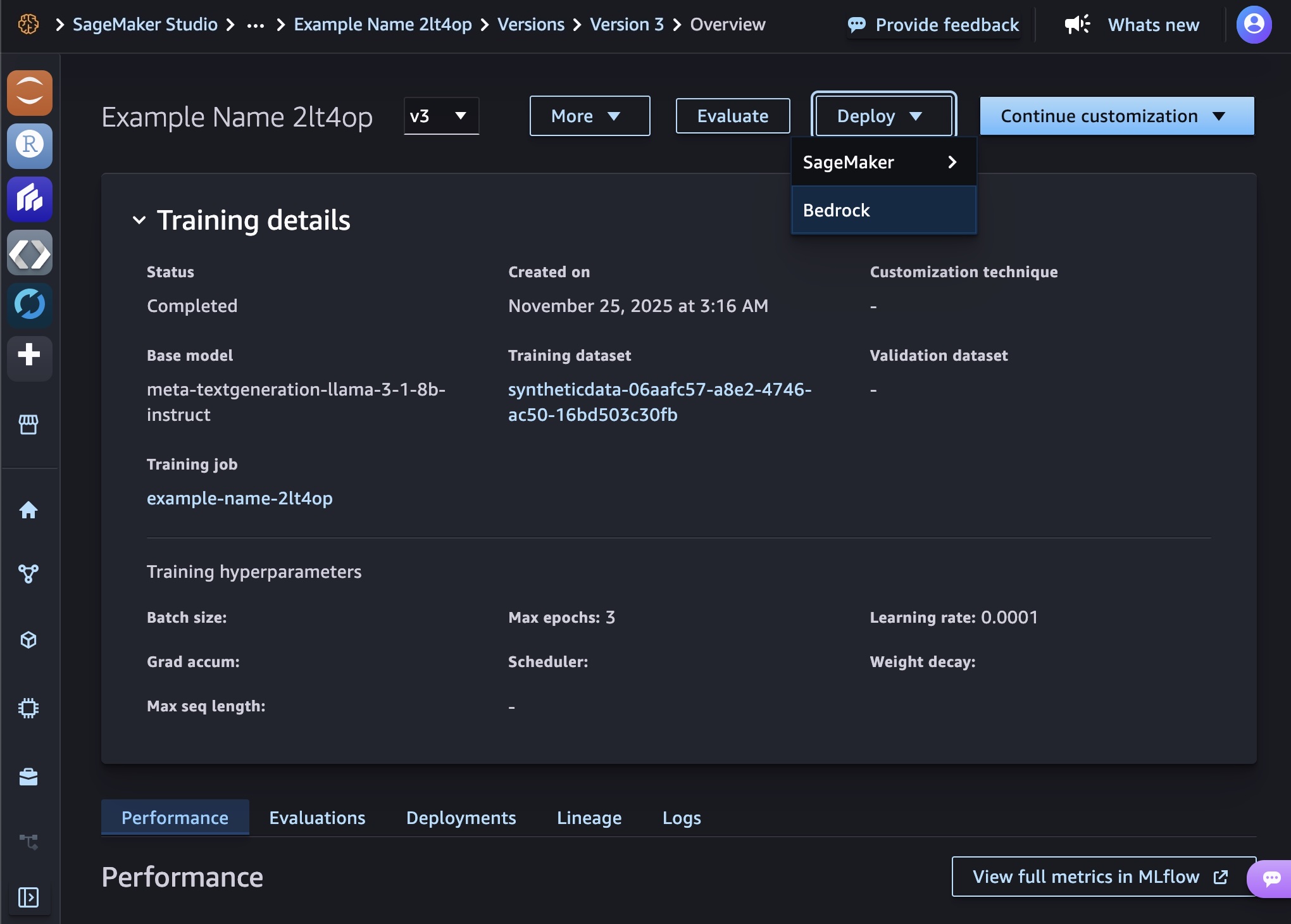

By choosing Continue customization, you can continue to customize your model by adjusting hyperparameters or training with different techniques. By choosing Evaluate, you can evaluate your customized model to see how it performs compared to the base model.

When you complete both jobs, you can choose either SageMaker or Bedrock in the Deploy dropdown list to deploy your model.

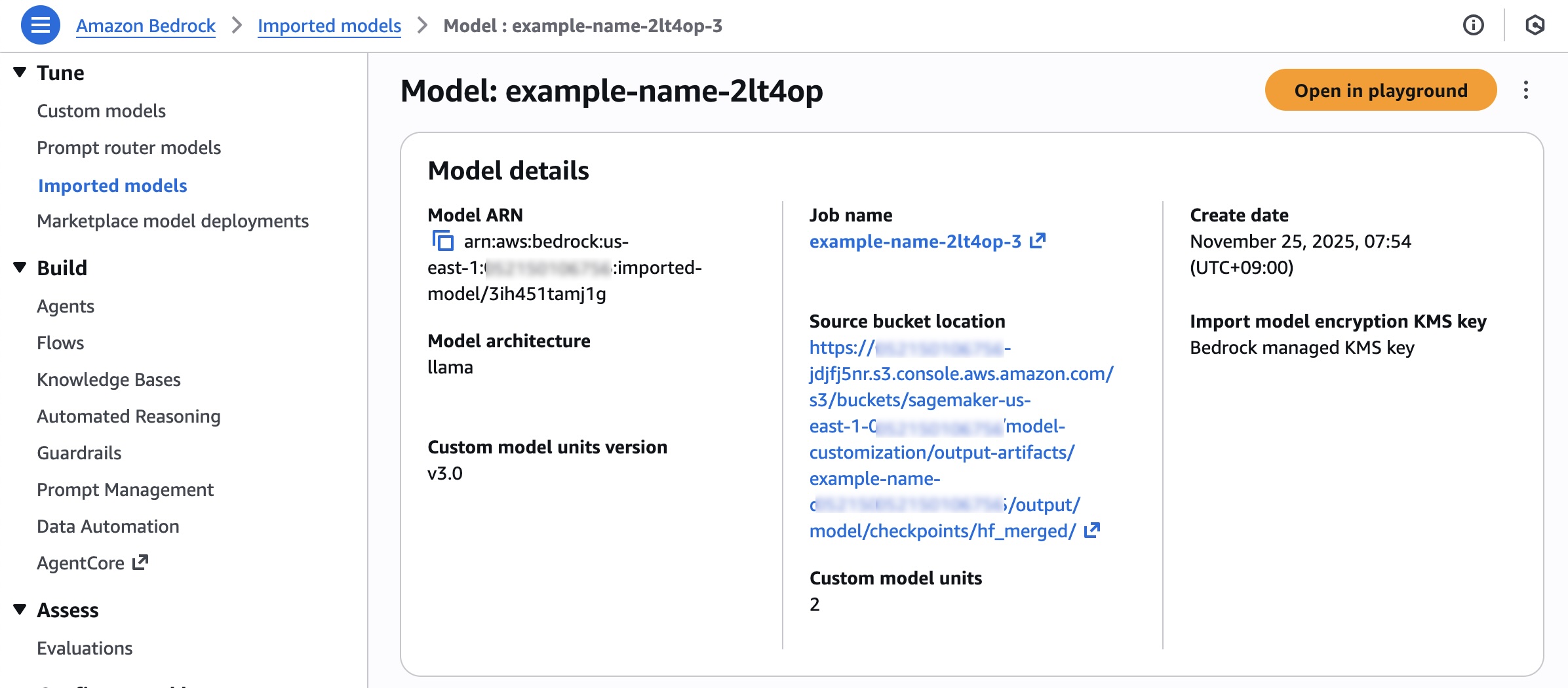

You can choose Amazon Bedrock for serverless inference. Choose Bedrock and the model name to deploy the model into Amazon Bedrock. To find your deployed models, choose Imported models in the Bedrock console.

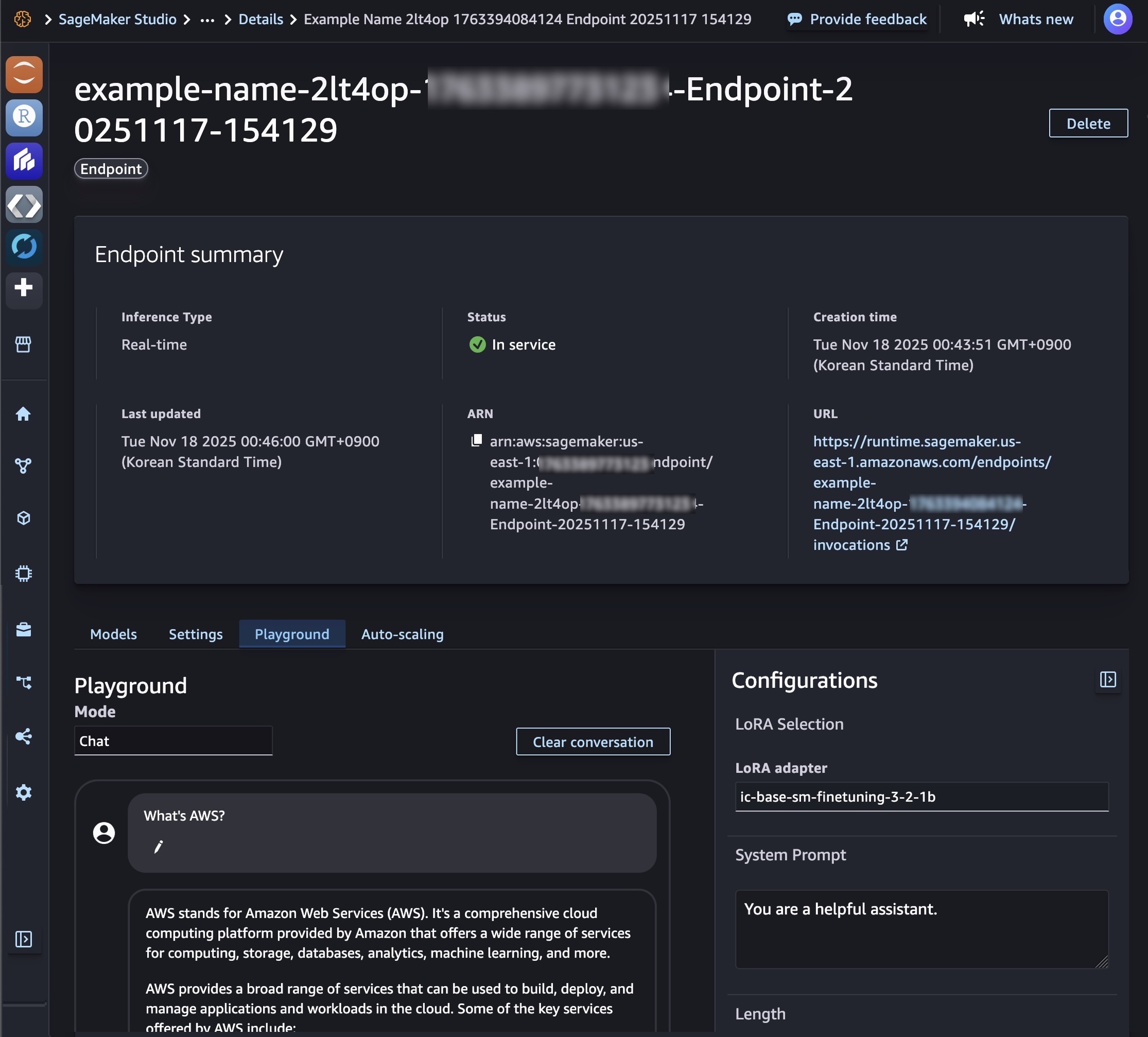

You can also deploy your model to a SageMaker AI inference endpoint if you want to control your deployment resources such as instance type and instance count. After the SageMaker AI deployment is In service, you can use this endpoint to perform inference. In the Playground tab, you can test your customized model with a single prompt or chat mode.
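Outside of the Playground, an in-service endpoint can be called from code. The following is a minimal sketch using the `invoke_endpoint` call of the boto3 `sagemaker-runtime` client. The `inputs`/`parameters` request schema and the JSON response are assumptions that depend on the serving container behind your endpoint, and the helper names are hypothetical.

```python
import json


def build_payload(prompt: str, max_new_tokens: int = 256, temperature: float = 0.2) -> bytes:
    """Serialize a request body. The 'inputs'/'parameters' schema is an assumption."""
    body = {
        "inputs": prompt,
        "parameters": {"max_new_tokens": max_new_tokens, "temperature": temperature},
    }
    return json.dumps(body).encode("utf-8")


def invoke(endpoint_name: str, prompt: str, runtime_client=None) -> dict:
    """Send a prompt to a SageMaker AI endpoint and return the parsed JSON response."""
    if runtime_client is None:
        import boto3  # imported lazily so build_payload works without AWS access

        runtime_client = boto3.client("sagemaker-runtime")
    response = runtime_client.invoke_endpoint(
        EndpointName=endpoint_name,
        ContentType="application/json",
        Body=build_payload(prompt),
    )
    # Assumes the container returns a JSON document in the response body.
    return json.loads(response["Body"].read())
```

For example, `invoke("my-customized-llama", "Summarize this ticket: ...")`, where `my-customized-llama` is a placeholder for the endpoint name shown on your model detail page.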

With the serverless MLflow capability, you can automatically log all critical experiment metrics without modifying code and access rich visualizations for further analysis.

Customize with code

When you choose customizing with code, you can see a sample notebook to fine-tune or deploy AI models. If you want to edit the sample notebook, open it in JupyterLab. Alternatively, you can deploy the model immediately by choosing Deploy.

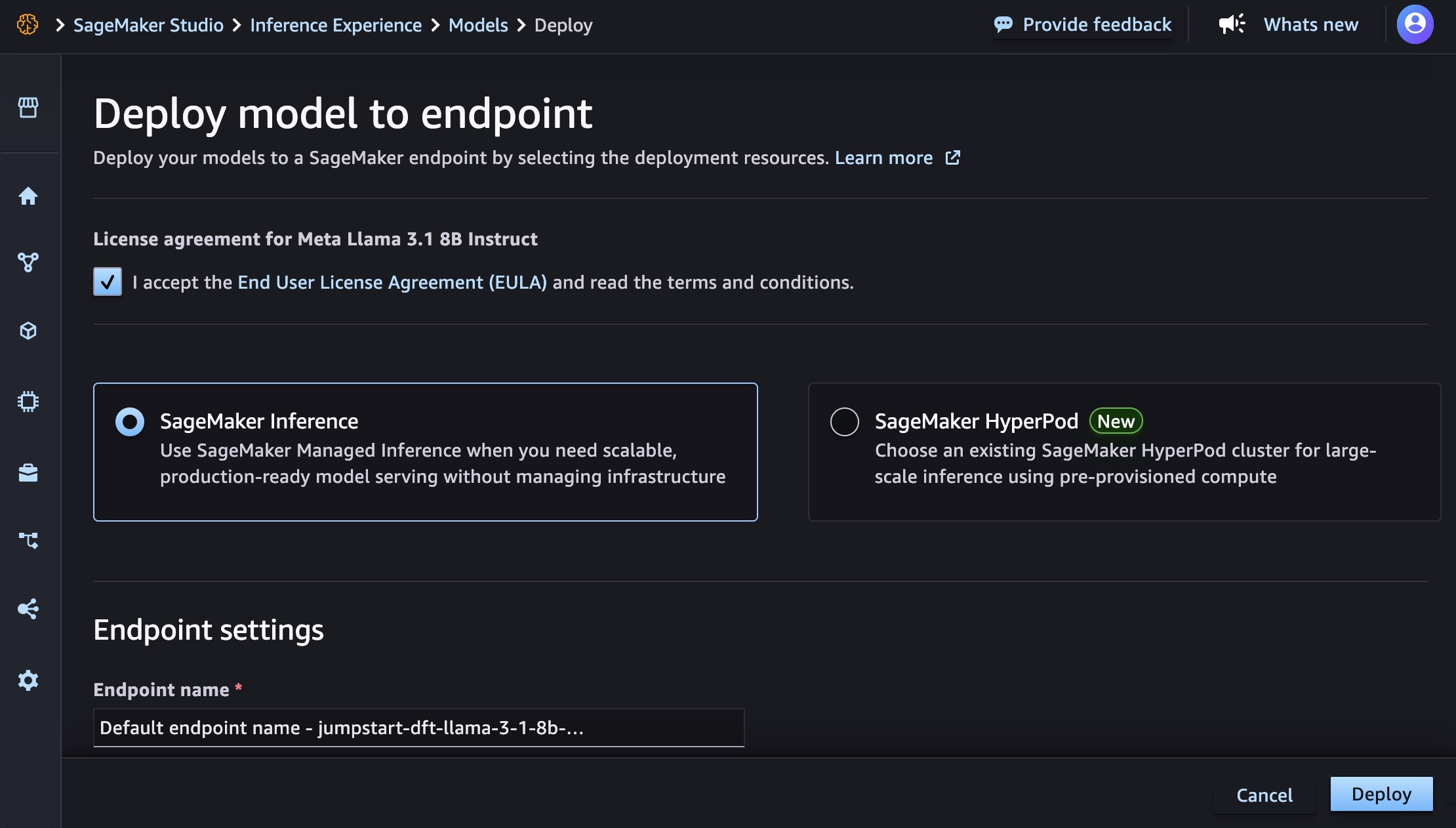

You can choose the Amazon Bedrock or SageMaker AI endpoint by selecting the deployment resources either from Amazon SageMaker Inference or Amazon SageMaker HyperPod.

When you choose Deploy on the bottom right of the page, you will be redirected back to the model detail page. After the SageMaker AI deployment is in service, you can use this endpoint to perform inference.

Okay, you’ve seen how to streamline model customization in SageMaker AI. You can now choose your favorite way. To learn more, visit the Amazon SageMaker AI Developer Guide.

Now available

New serverless AI model customization in Amazon SageMaker AI is now available in US East (N. Virginia), US West (Oregon), Asia Pacific (Tokyo), and Europe (Ireland) Regions. You only pay for the tokens processed during training and inference. To learn more details, visit the Amazon SageMaker AI pricing page.

Give it a try in Amazon SageMaker Studio and send feedback to AWS re:Post for SageMaker or through your usual AWS Support contacts.

— Channy