With the rising adoption of open desk codecs like Apache Iceberg, Amazon Redshift continues to advance its capabilities for open format knowledge lakes. In 2025, Amazon Redshift delivered a number of efficiency optimizations that improved question efficiency over twofold for Iceberg workloads on Amazon Redshift Serverless, delivering distinctive efficiency and cost-effectiveness in your knowledge lake workloads.

On this put up, we describe a number of the optimizations that led to those efficiency positive factors. Knowledge lakes have turn out to be a basis of contemporary analytics, serving to organizations retailer huge quantities of structured and semi-structured knowledge in cost-effective knowledge codecs like Apache Parquet whereas sustaining flexibility via open desk codecs. This structure creates distinctive efficiency optimization alternatives throughout your entire question processing pipeline.

Efficiency enhancements

Our newest enhancements span a number of areas of the Amazon Redshift SQL question processing engine, together with vectorized scanners that speed up execution, optimum question plans powered by just-in-time (JIT) runtime statistics, distributed Bloom filters, and new decorrelation guidelines.

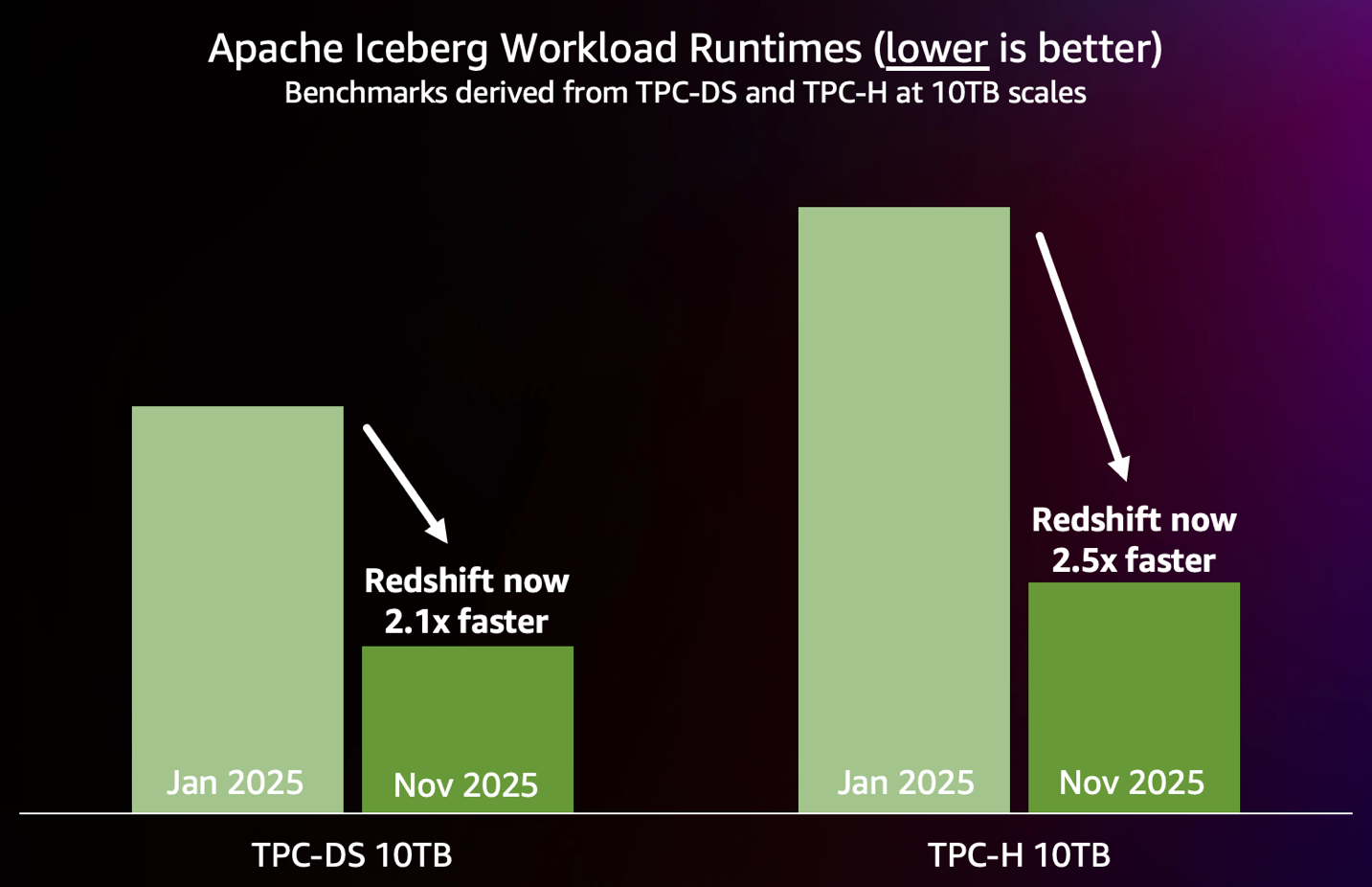

The next chart summarizes the efficiency enhancements achieved to date in 2025, as measured by {industry} normal 10 TB TPC-DS and TPC-H benchmarks run on Iceberg tables on an 88 RPU Redshift Serverless endpoint.

Discover the most effective efficiency in your workloads

The efficiency outcomes introduced on this put up are primarily based on benchmarks derived from the industry-standard TPC-DS and TPC-H benchmarks, and have the next traits:

- The schema and knowledge of Iceberg tables are used unmodified from TPC-DS. Tables are partitioned to mirror real-world knowledge group patterns.

- The queries are generated utilizing the official TPC-DS and TPC-H kits with question parameters generated utilizing the default random seed of the kits.

- The TPC-DS check contains all 99 TPC-DS SELECT queries. It doesn’t embody upkeep and throughput steps. The TPC-H check contains all 22 TPC-H SELECT queries.

- Benchmarks are run out of the field: no handbook tuning or stats assortment is finished for the workloads.

Within the following sections, we focus on key efficiency enhancements delivered in 2025.

Quicker knowledge lake scans

To enhance knowledge lake learn efficiency, the Amazon Redshift workforce constructed a very new scan layer designed from the ground-up for knowledge lakes. This new scan layer features a purpose-built I/O subsystem, incorporating sensible prefetch capabilities to scale back knowledge latency. Moreover, the brand new scan layer is optimized for processing Apache Parquet information, probably the most generally used file format for Iceberg, via quick vectorized scans.

This new scan layer additionally contains subtle knowledge pruning mechanisms that function at each partition and file ranges, dramatically decreasing the quantity of knowledge that must be scanned. This pruning functionality works in concord with the sensible prefetch system, making a coordinated strategy that maximizes effectivity all through your entire knowledge retrieval course of.

JIT ANALYZE for Iceberg tables

Not like conventional knowledge warehouses, knowledge lakes usually lack complete table- and column-level statistics concerning the underlying knowledge, making it difficult for the planner and optimizer within the question engine to decide on up-front which execution plan will likely be most optimum. Sub-optimal plans can result in slower and fewer predictable efficiency.

JIT ANALYZE is a brand new Amazon Redshift function that routinely collects and makes use of statistics for Iceberg tables throughout question execution—minimizing handbook statistics assortment whereas giving the planner and optimizer within the question engine the data it must generate optimum question plans. The system makes use of clever heuristics to establish queries that can profit from statistics, performs quick file-level sampling utilizing Iceberg metadata, and extrapolates inhabitants statistics utilizing superior strategies.

JIT ANALYZE delivers out-of-the-box efficiency practically equal to queries which have pre-calculated statistics, whereas offering the inspiration for a lot of different efficiency optimizations. Some TPC-DS queries improved by 50 occasions sooner with these statistics.

Question optimizations

For correlated subqueries reminiscent of those who include EXISTS/IN clauses, Amazon Redshift makes use of decorrelation guidelines to rewrite the queries. In lots of circumstances, these decorrelation guidelines weren’t producing optimum plans, leading to question execution efficiency regressions. To handle this, we launched a brand new inside be part of sort, SEMI JOIN, and a brand new decorrelation rule primarily based on this be part of sort. This decorrelation rule helps in producing probably the most optimum plans, thereby enhancing execution efficiency. For example, one of many TPC-DS queries that incorporates EXIST clause ran 7 occasions sooner with this optimization.

We launched distributed Bloom filter optimization for knowledge lake workloads. Distributed Bloom filters create Bloom filters regionally in each compute node after which distributes them to each different node. Distributing Bloom filters can considerably scale back the quantity of knowledge that must be despatched over the community for the be part of by filtering out the tuples earlier. This offers good efficiency positive factors for big, complicated knowledge lake queries that course of and be part of massive quantities of knowledge.

Conclusion

These efficiency enhancements for Iceberg workloads characterize a serious leap ahead in Redshift knowledge lake capabilities. By specializing in out-of-the-box efficiency, we’ve made it simple to realize distinctive question efficiency with out complicated tuning or optimization.

These enhancements display the facility of deep technical innovation mixed with sensible buyer focus. JIT ANALYZE reduces the operational burden of statistics administration whereas offering optimum question planning data. The brand new Redshift knowledge lake question engine on Redshift Serverless was rewritten from the bottom up for best-in-class scan efficiency, and lays the groundwork for extra superior efficiency optimizations. Semi-join optimizations deal with a number of the most difficult question patterns in analytical workloads. You may run complicated analytical workloads in your Iceberg knowledge and get quick, predictable question efficiency.

Amazon Redshift is dedicated to being the most effective analytics engine for knowledge lake workloads, and these efficiency optimizations characterize our continued funding in that objective.

To be taught extra about Amazon Redshift and its efficiency capabilities, go to the Amazon Redshift product web page. To get began with Redshift, you may strive Amazon Redshift Serverless and begin querying knowledge in minutes with out having to arrange and handle knowledge warehouse infrastructure. For extra particulars on efficiency finest practices, see the Amazon Redshift Database Developer Information. To remain up-to-date with the most recent developments in Amazon Redshift, subscribe to the What’s New in Amazon Redshift RSS feed.

Particular because of this put up’s contributors: Martin Milenkoski, Gerard Louw, Konrad Werblinski, Mengchu Cai, Mehmet Bulut, Mohammed Alkateb, and Sanket Hase