For many organizations, the biggest problem with AI agents built over unstructured data isn’t the model; it’s the context. If the agent can’t retrieve the right information, even the most advanced model will miss key details and give incomplete or incorrect answers.

We’re introducing reranking in Mosaic AI Vector Search, now in Public Preview. With a single parameter, you can improve retrieval accuracy by an average of 15 percentage points on our enterprise benchmarks. This means higher-quality answers, better reasoning, and more consistent agent performance, without extra infrastructure or complex setup.

What Is Reranking?

Reranking is a technique that improves agent quality by ensuring the agent gets the most relevant data to perform its task. While vector databases excel at quickly finding relevant documents among millions of candidates, reranking applies deeper contextual understanding to ensure the most semantically relevant results appear at the top. This two-stage approach, fast retrieval followed by intelligent reordering, has become essential for RAG agent systems where quality matters.
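To make the two stages concrete, here is a minimal Python sketch of the generic retrieve-then-rerank pattern. It is not how Mosaic AI Vector Search implements reranking: `two_stage_search`, `keyword_count`, and `term_coverage` are hypothetical names, and both scorers are toy lexical functions standing in for a vector index (stage 1) and a reranking model (stage 2).

```python
from typing import Callable, List, Sequence

Scorer = Callable[[str, str], float]


def two_stage_search(
    query: str,
    docs: Sequence[str],
    fast_score: Scorer,
    rerank_score: Scorer,
    num_candidates: int = 50,
    num_results: int = 10,
) -> List[str]:
    """Cheap scoring over the whole corpus, then a costlier scorer on the shortlist."""
    # Stage 1: rank everything with the cheap scorer and keep a wide candidate set.
    # (In a real system this is the vector index's nearest-neighbor search.)
    candidates = sorted(docs, key=lambda d: fast_score(query, d), reverse=True)[:num_candidates]
    # Stage 2: the expensive scorer only sees the shortlist, so its cost stays bounded.
    reranked = sorted(candidates, key=lambda d: rerank_score(query, d), reverse=True)
    return reranked[:num_results]


# Hypothetical toy scorers, for illustration only.
def keyword_count(query: str, doc: str) -> float:
    """Counts every occurrence of a query word, so repetition inflates the score."""
    terms = set(query.lower().split())
    return float(sum(1 for tok in doc.lower().split() if tok in terms))


def term_coverage(query: str, doc: str) -> float:
    """Fraction of distinct query words that the document contains."""
    terms = set(query.lower().split())
    if not terms:
        return 0.0
    return len(terms & set(doc.lower().split())) / len(terms)


docs = [
    "either party may end the contract with notice",
    "the notice period for contract termination is thirty days",
    "contract contract contract notice notice template",
]
query = "contract termination notice period"
print(two_stage_search(query, docs, keyword_count, term_coverage, num_candidates=3, num_results=2))
```

In this toy corpus, keyword repetition lets the boilerplate template win stage 1, and the coverage-based second pass moves the passage that actually answers the query to the top. The design point is that the expensive scorer only ever sees `num_candidates` documents, which keeps its cost bounded regardless of corpus size.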

Why We Added Reranking

You might be building internally facing chat agents to answer questions about your documents. Or you might be building agents that generate reports for your customers. Either way, if you want to build agents that can accurately use your unstructured data, then quality is tied to retrieval. Reranking is how Vector Search customers improve the quality of their retrieval and thereby improve the quality of their RAG agents.

From customer feedback, we’ve seen two common issues:

- Agents can miss critical context buried in large sets of unstructured documents. The “right” passage rarely sits at the very top of the results retrieved from a vector database.

- Homegrown reranking systems significantly improve agent quality, but they take weeks to build and then need significant maintenance.

By making reranking a native Vector Search feature, you can use your governed enterprise data to surface the most relevant information without extra engineering.

“The reranker feature helped elevate our Lexi chatbot from functioning like a high school student to performing like a law school graduate. We’ve seen transformative gains in how our systems understand, reason over, and generate content from legal documents, unlocking insights that were previously buried in unstructured data.”
David Brady, Senior Director, G3 Enterprises

A Substantial Quality Improvement Over Baselines

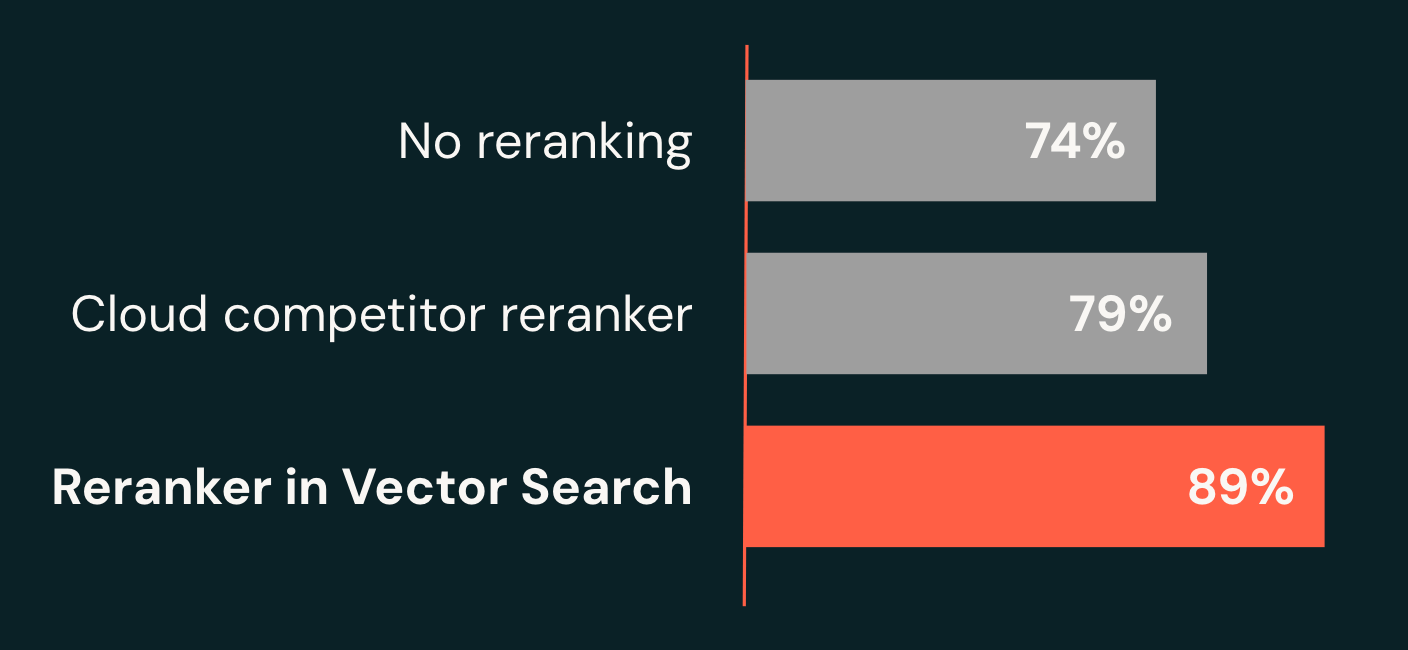

Our research team achieved a breakthrough by building a novel compound AI system for agent workloads. On our enterprise benchmarks, the system retrieves the correct answer within its top 10 results 89% of the time (recall@10), a 15-point improvement over our baseline (74%) and 10 points higher than leading cloud alternatives (79%). Crucially, our reranker delivers this quality with latencies as low as 1.5 seconds, while contemporary systems often take several seconds, or even minutes, to return high-quality answers.
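For readers who want to run this kind of measurement on their own data, the sketch below computes recall@k as described above: the share of queries whose correct answer appears among the first k results. It assumes exactly one correct document per query, and `recall_at_k` and the document IDs are hypothetical. Under this definition, the reported gain corresponds to recall@10 moving from 0.74 to 0.89.

```python
from typing import Sequence


def recall_at_k(rankings: Sequence[Sequence[str]], gold: Sequence[str], k: int) -> float:
    """Share of queries whose correct document appears among the first k results.

    Assumes one correct document ID per query (the "correct answer within
    its top k results" reading of recall@k).
    """
    if len(rankings) != len(gold) or not rankings:
        raise ValueError("need one gold id per query and at least one query")
    hits = sum(1 for ranked, answer in zip(rankings, gold) if answer in ranked[:k])
    return hits / len(rankings)


# Hypothetical ranked results for four queries, plus each query's correct document.
rankings = [["a", "b", "c"], ["b", "c", "a"], ["c", "a", "b"], ["a", "b", "c"]]
gold = ["a", "c", "b", "z"]  # the last query's answer was never retrieved
for k in (1, 2, 3):
    print(f"recall@{k} = {recall_at_k(rankings, gold, k):.2f}")
```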

Simple, High-Quality Retrieval

Enable enterprise-grade reranking in minutes, not weeks. Teams typically spend weeks researching models, deploying infrastructure, and writing custom logic. In contrast, enabling reranking for Vector Search requires just one additional parameter in your Vector Search query to instantly get higher-quality retrieval for your agents. No model serving endpoints to manage, no custom wrappers to maintain, no complex configurations to tune.

By specifying multiple columns in columns_to_rerank, you take the reranker’s quality to the next level by giving it access to metadata beyond just the main text. For example, the reranker can use contract summaries and category information to better understand context and improve the relevance of search results.
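The benefit of extra columns is easiest to see by looking at what a reranker gets to read. The sketch below is a hypothetical helper, not the Vector Search implementation: it joins each selected column into one labeled passage, assuming illustrative column names (`chunk_text`, `contract_summary`, `category`, `owner`) and a made-up row. How the service actually combines multiple columns is not described here.

```python
from typing import Any, Mapping, Sequence


def build_rerank_text(row: Mapping[str, Any], columns_to_rerank: Sequence[str]) -> str:
    """Join the chosen columns into one labeled passage for a reranker to read.

    Hypothetical helper: it illustrates why extra columns add context, not how
    Mosaic AI Vector Search combines them internally.
    """
    parts = []
    for col in columns_to_rerank:
        value = row.get(col)
        if value is None or not str(value).strip():
            continue  # skip missing or blank metadata instead of emitting empty fields
        parts.append(f"{col}: {value}")
    return "\n".join(parts)


# Illustrative row: the category label exists only in metadata, not in the chunk text.
row = {
    "chunk_text": "Either party may terminate with 30 days written notice.",
    "contract_summary": "Master services agreement between a supplier and a customer.",
    "category": "Vendor contracts",
    "owner": None,
}
print(build_rerank_text(row, ["chunk_text", "contract_summary", "category", "owner"]))
```

Because the category appears only in metadata, a reranker that sees just `chunk_text` has no way to match a query that mentions it; adding the column makes that signal visible.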

Optimized for Agent Performance

Speed meets quality for real-time, agentic AI applications. Our research team optimized this compound AI system to rerank 50 results in as little as 1.5 seconds. This makes it highly effective for agent systems that demand both accuracy and responsiveness. This breakthrough performance enables sophisticated retrieval strategies without compromising user experience.

When to Use Reranking?

We recommend testing reranking for any RAG agent use case. Typically, customers see large quality gains when their existing systems do find the right answer somewhere in the top 50 retrieval results but struggle to surface it within the top 10. In technical terms, this means customers with low recall@10 but high recall@50.
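A quick way to check whether a workload fits this profile is to compare recall at both cutoffs on a labeled query set. The Python sketch below assumes one correct document ID per query; `rerank_headroom` and its inputs are hypothetical. Because a reranker can only reorder the candidates it receives, the gap between recall@50 and recall@10 is an upper bound on how much reranking the top 50 results can raise recall@10.

```python
from typing import Dict, List, Sequence, Union


def rerank_headroom(
    rankings: Sequence[Sequence[str]],
    gold: Sequence[str],
    shallow: int = 10,
    deep: int = 50,
) -> Dict[str, Union[float, List[int]]]:
    """Compare recall at a shallow and a deep cutoff.

    A query counts as fixable by reordering when its correct document is in the
    top `deep` results but not in the top `shallow` results.
    """
    if len(rankings) != len(gold) or not rankings:
        raise ValueError("need one gold id per query and at least one query")
    if shallow > deep:
        raise ValueError("shallow cutoff must not exceed deep cutoff")
    in_shallow = [answer in ranked[:shallow] for ranked, answer in zip(rankings, gold)]
    in_deep = [answer in ranked[:deep] for ranked, answer in zip(rankings, gold)]
    n = len(rankings)
    return {
        "recall_shallow": sum(in_shallow) / n,
        "recall_deep": sum(in_deep) / n,
        # Queries whose answer a reranker over the top `deep` results could lift into the top `shallow`.
        "fixable_queries": [i for i, (s, d) in enumerate(zip(in_shallow, in_deep)) if d and not s],
    }


# Tiny illustrative run with cutoffs 1 and 3 standing in for 10 and 50.
rankings = [["a", "b", "c"], ["b", "c", "a"], ["c", "a", "b"]]
gold = ["a", "c", "z"]
report = rerank_headroom(rankings, gold, shallow=1, deep=3)
print(report)
```

A large `fixable_queries` list relative to the total is the low-recall@10, high-recall@50 signature described above; if the deep recall is also low, the bottleneck is first-stage retrieval rather than ordering.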

Enhanced Developer Experience

Beyond core reranking capabilities, we’re making it easier than ever to build and deploy high-quality retrieval systems.

LangChain Integration: Reranker works seamlessly with VectorSearchRetrieverTool, our official LangChain integration for Vector Search. Teams building RAG agents with VectorSearchRetrieverTool can benefit from higher-quality retrieval, with no code changes required.

Clear Performance Metrics: Reranker latency is now included in query debug info, giving you a complete end-to-end breakdown of your query performance.

(Figure: response latency breakdown, in milliseconds)

Flexible Column Selection: Rerank based on any combination of text and metadata columns, allowing you to leverage all available domain context, from document summaries to categories to custom metadata, for maximum relevance.

Start Building Today

Reranker in Vector Search transforms how you build AI applications. With zero infrastructure overhead and seamless integration, you can finally deliver the retrieval quality your users deserve.

Ready to get started?