How to Build Supplier Analytics With Salesforce SAP Integration on Databricks

Supplier data touches nearly every part of an organization, from procurement and supply chain management to finance and analytics. Yet it is often spread across systems that don’t communicate with each other. For example, Salesforce holds vendor profiles, contacts, and account details, while SAP S/4HANA manages invoices, payments, and general ledger entries. Because these systems operate independently, teams lack a comprehensive view of supplier relationships. The result is slow reconciliation, duplicate records, and missed opportunities to optimize spend.

Databricks solves this by connecting both systems on one governed data and AI platform. Using Lakeflow Connect for Salesforce ingestion and SAP Business Data Cloud (BDC) Connect for SAP access, teams can unify CRM and ERP data without duplication. The result is a single, trusted view of vendors, payments, and performance metrics that supports procurement and finance use cases as well as analytics.

In this how-to, you’ll learn how to connect both data sources, build a blended pipeline, and create a gold layer that powers analytics and conversational insights through AI/BI Dashboards and Genie.

Why Zero-Copy SAP Salesforce Data Integration Works

Most enterprises try to connect SAP and Salesforce through traditional ETL or third-party tools. These methods create multiple data copies, introduce latency, and make governance difficult. Databricks takes a different approach.

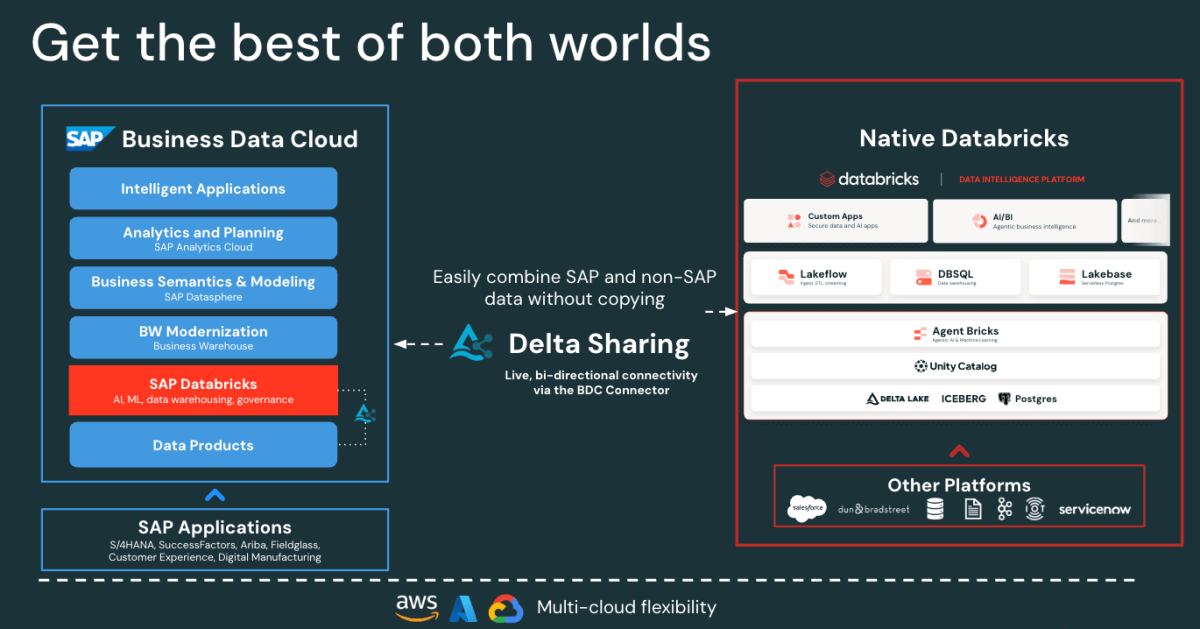

Figure: SAP BDC Connector to Native Databricks (bi-directional)

- Zero-copy SAP access: The SAP BDC Connector for Databricks gives you governed, real-time access to SAP S/4HANA data products through Delta Sharing. No exports or duplication.

- Fast Salesforce incremental ingestion: Lakeflow Connect connects to Salesforce and ingests its data continuously, keeping your datasets fresh and consistent.

- Unified governance: Unity Catalog enforces permissions, lineage, and auditing across both SAP and Salesforce sources.

- Declarative pipelines: Lakeflow Spark Declarative Pipelines simplify ETL design and orchestration with automatic optimizations for better performance.

Together, these capabilities enable data engineers to combine SAP and Salesforce data on one platform, reducing complexity while maintaining enterprise-grade governance.

SAP Salesforce Data Integration Architecture on Databricks

Before building the pipeline, it’s useful to understand how these components fit together in Databricks.

At a high level, SAP S/4HANA publishes business data as curated, business-ready, SAP-managed data products in SAP Business Data Cloud (BDC). SAP BDC Connect for Databricks enables secure, zero-copy access to those data products using Delta Sharing. Meanwhile, Lakeflow Connect handles Salesforce ingestion, capturing account, contact, and opportunity data through incremental pipelines.

All incoming data, whether from SAP or Salesforce, is registered in Unity Catalog for governance, lineage, and permissions. Data engineers then use Lakeflow Declarative Pipelines to join and transform these datasets into a medallion architecture (bronze, silver, and gold layers). Finally, the gold layer serves as the foundation for analytics and exploration in AI/BI Dashboards and Genie.

This architecture ensures that data from both systems stays synchronized, governed, and ready for analytics and AI, without the overhead of replication or external ETL tools.

How to Build Unified Supplier Analytics

The following steps outline how to connect, combine, and analyze SAP and Salesforce data on Databricks.

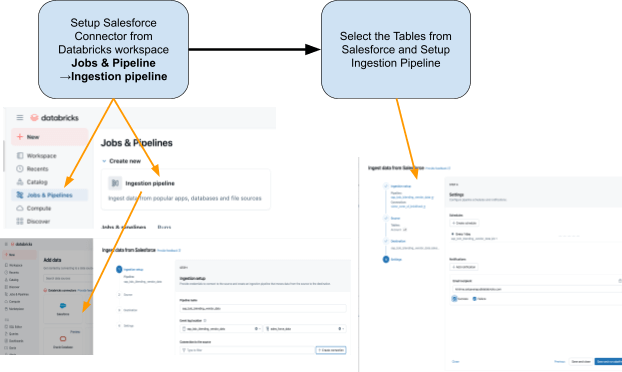

Step 1: Ingest Salesforce Data with Lakeflow Connect

Use Lakeflow Connect to bring Salesforce data into Databricks. You can configure pipelines through the UI or the API. These pipelines manage incremental updates automatically, ensuring that data stays current without manual refreshes.

The connector is fully integrated with Unity Catalog governance, Lakeflow Spark Declarative Pipelines for ETL, and Lakeflow Jobs for orchestration.

These are the tables we plan to ingest from Salesforce:

- Account: Vendor/Supplier details (fields include: AccountId, Name, Industry, Type, BillingAddress)

- Contact: Vendor contacts (fields include: ContactId, AccountId, FirstName, LastName, Email)
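As a rough illustration of the API route, the sketch below assembles a pipeline specification for the two Salesforce objects listed above. The key names (`ingestion_definition`, `connection_name`, `objects`, `source_table`, and so on) reflect the general shape of a managed ingestion pipeline spec as we understand it and should be treated as assumptions to verify against the current Databricks documentation. The connection name, catalog, schema, and pipeline name are hypothetical placeholders.

```python
import json


def build_ingestion_spec(connection_name, catalog, schema, objects):
    """Assemble a pipeline spec dict for a Salesforce ingestion pipeline.

    All key names below are assumptions about the spec shape, not a
    confirmed API contract; check them before submitting to a workspace.
    """
    return {
        "name": "salesforce_supplier_ingest",  # hypothetical pipeline name
        "ingestion_definition": {
            "connection_name": connection_name,  # Unity Catalog connection (assumed)
            "objects": [
                {
                    "table": {
                        "source_schema": "objects",  # assumed Salesforce source schema
                        "source_table": obj,
                        "destination_catalog": catalog,
                        "destination_schema": schema,
                    }
                }
                for obj in objects
            ],
        },
    }


# Placeholder names; only Account and Contact come from the text above.
spec = build_ingestion_spec(
    connection_name="salesforce_conn",
    catalog="main",
    schema="sfdc_bronze",
    objects=["Account", "Contact"],
)
print(json.dumps(spec, indent=2))
```

Keeping the object list as a parameter makes it straightforward to add further Salesforce objects later without touching the rest of the spec.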

Step 2: Access SAP S/4HANA Data with the SAP BDC Connector

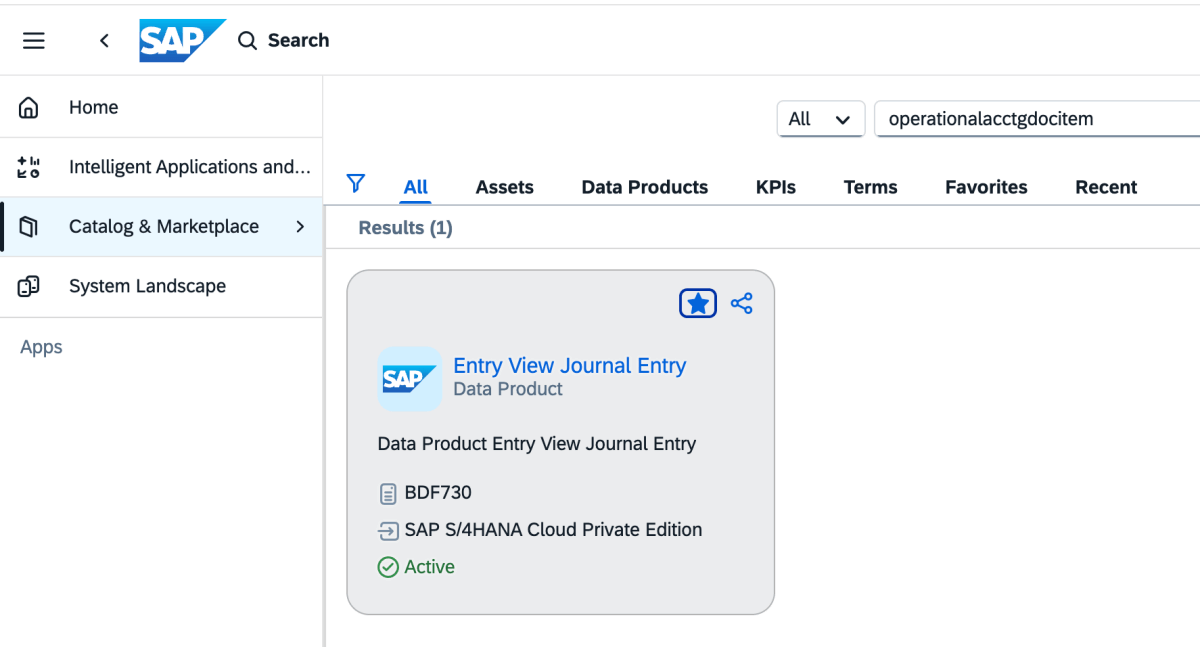

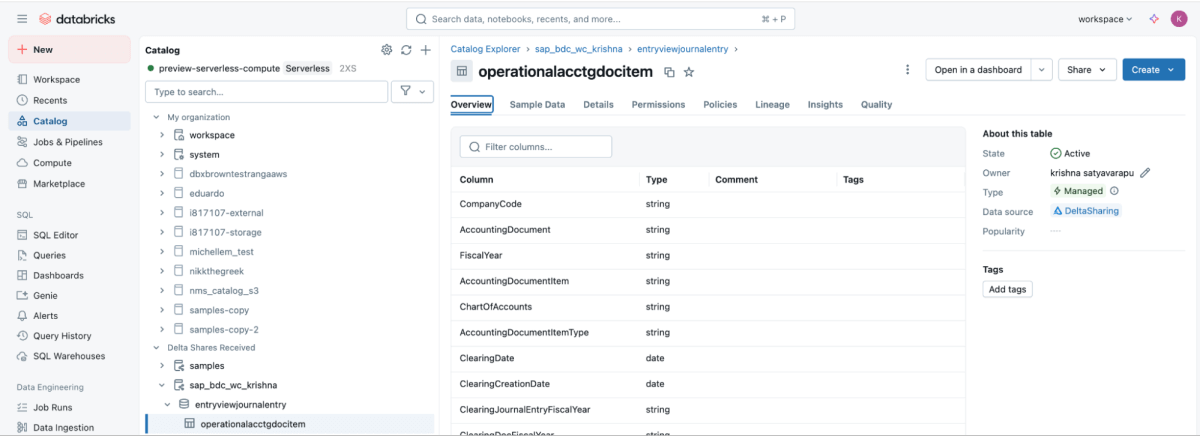

SAP BDC Connect provides live, governed access to SAP S/4HANA vendor payment data directly in Databricks, eliminating traditional ETL. It does this through the SAP BDC data product sap_bdc_working_capital.entryviewjournalentry.operationalacctgdocitem, the Universal Journal line-item view.

This BDC data product maps directly to the SAP S/4HANA CDS view I_JournalEntryItem (Operational Accounting Document Item) on ACDOCA.

For ECC context, the closest physical structures were BSEG (FI line items) with headers in BKPF, CO postings in COEP, and the open/cleared indexes BSIK/BSAK (vendors) and BSID/BSAD (customers). In SAP S/4HANA, these BS** objects are part of the simplified data model, where vendor and G/L line items are centralized in the Universal Journal (ACDOCA), replacing the ECC approach that often required joining multiple separate finance tables.

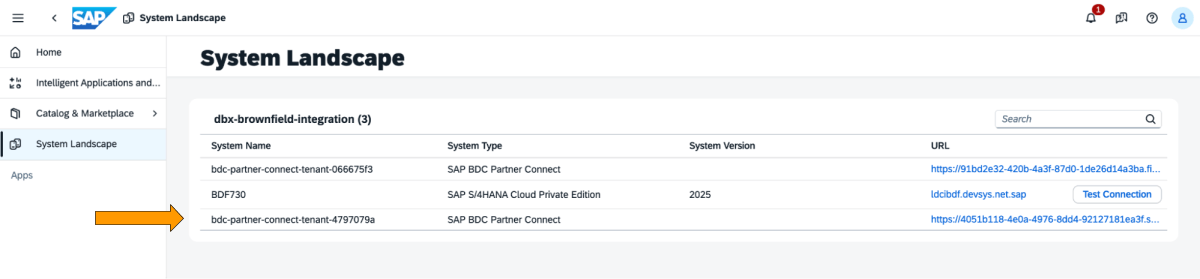

These are the steps that need to be performed in the SAP BDC cockpit.

1: Log in to the SAP BDC cockpit and check the SAP BDC formation in the System Landscape. Connect to Native Databricks through the SAP BDC Delta Sharing connector. Native Databricks must be connected to SAP BDC so that it becomes part of the formation; see the connector documentation for details.

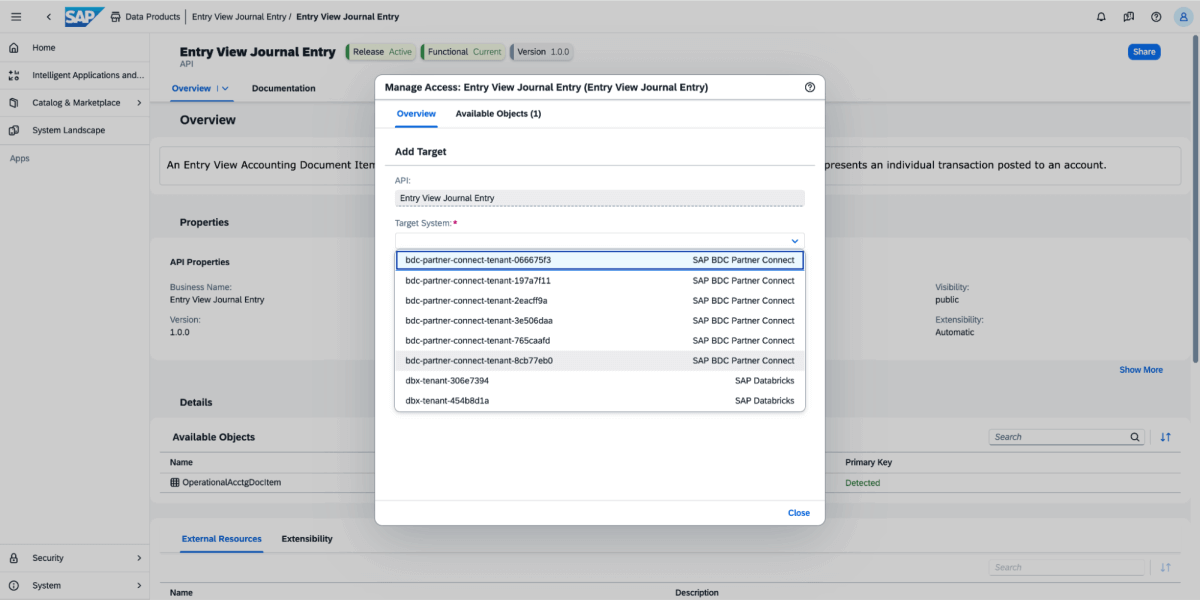

2: Go to Catalog and look for the data product Entry View Journal Entry, as shown below.

3: On the data product, select Share, and then select the target system, as shown in the image below.

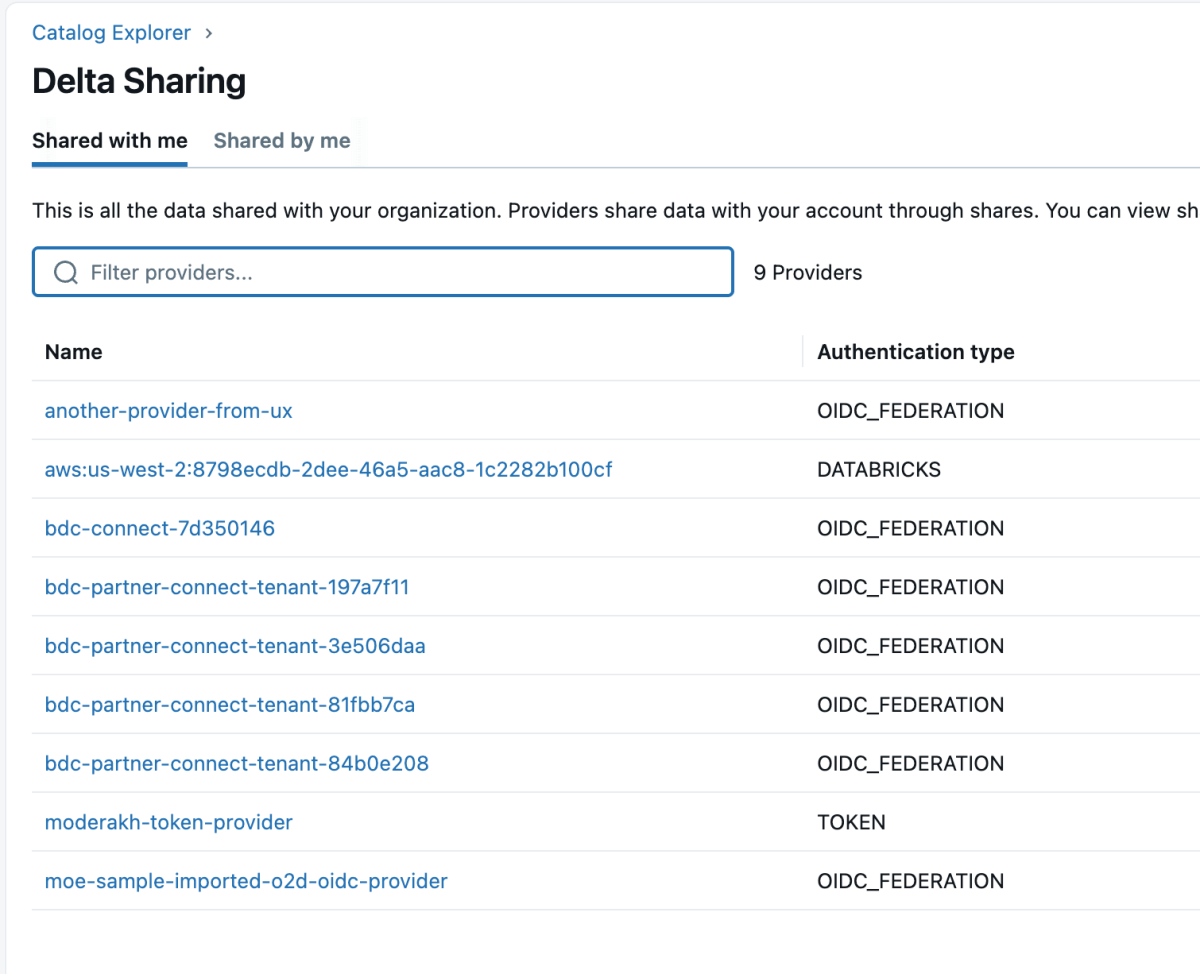

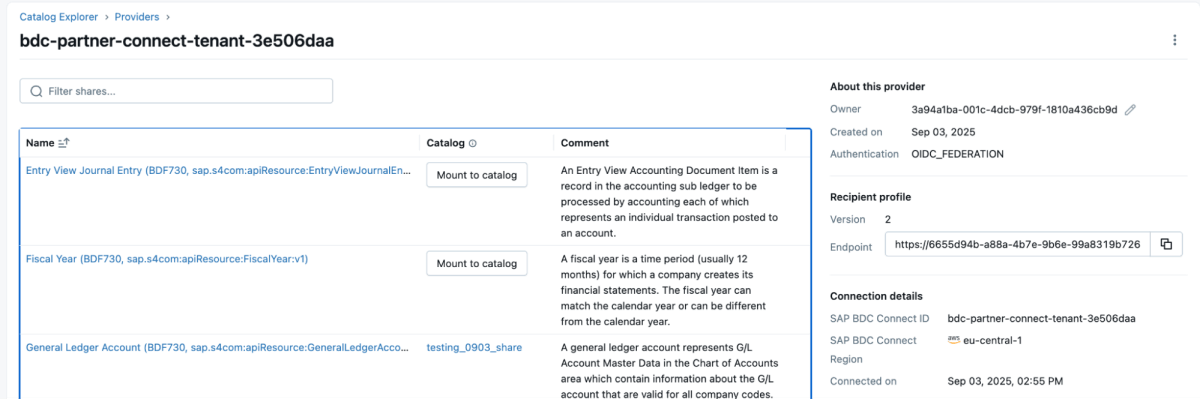

4: Once the data product is shared, it appears as a Delta share in the Databricks workspace, as shown below. Make sure you have the “Use Provider” privilege in order to see these providers.

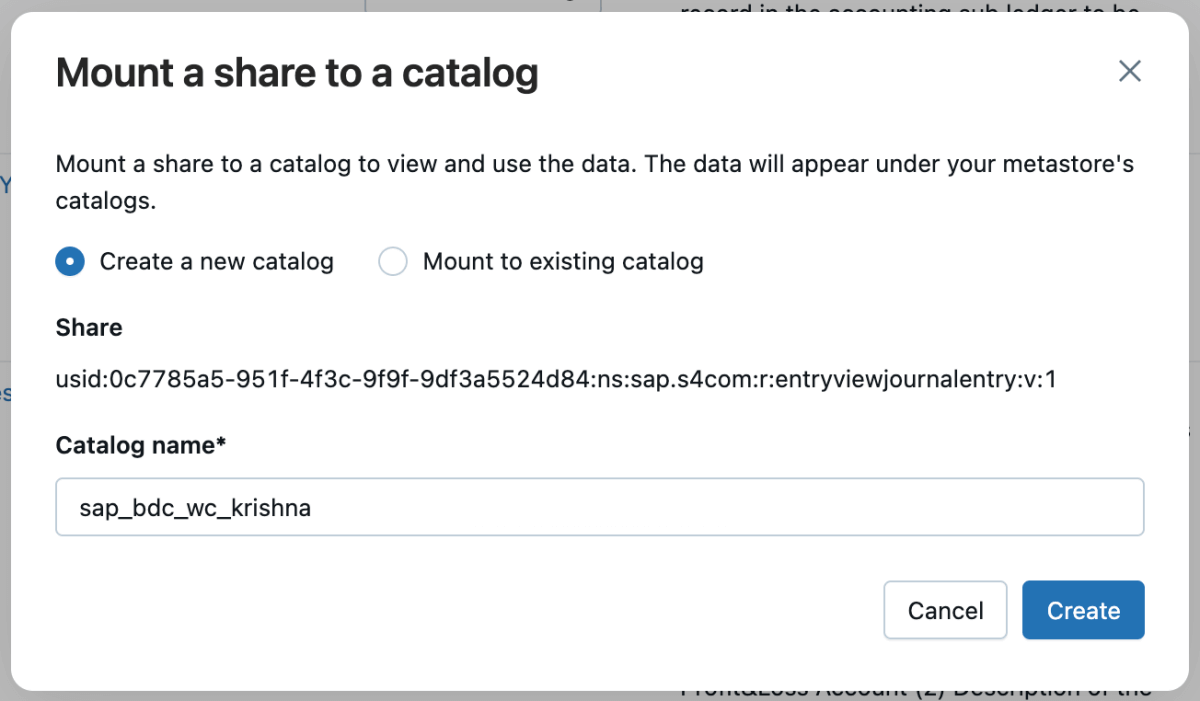

5: Mount the share to a catalog. You can either create a new catalog or mount it to an existing one.

6: Once the share is mounted, it is reflected in the catalog.

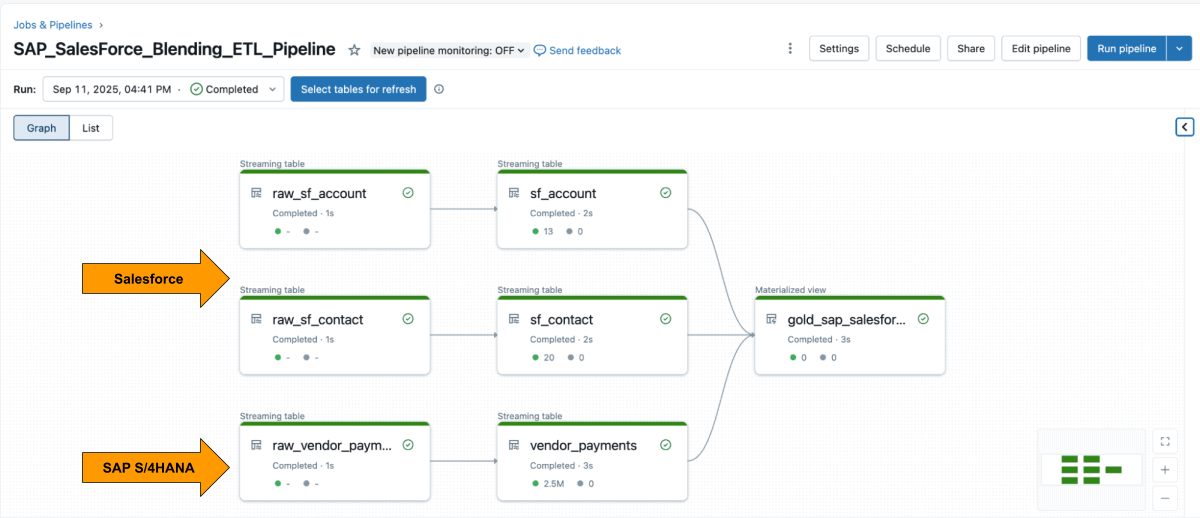

Step 3: Blend the Data in an ETL Pipeline Using Lakeflow Declarative Pipelines

With both sources available, use Lakeflow Declarative Pipelines to build an ETL pipeline with Salesforce and SAP data.

The Salesforce Account table usually includes the field SAP_ExternalVendorId__c, which matches the vendor ID in SAP. This becomes the primary join key for your silver layer.

Lakeflow Spark Declarative Pipelines let you define transformation logic in SQL, while Databricks handles optimization and orchestrates the pipelines automatically.

Example: Build curated business-level tables

The query for this step creates a curated business-level materialized view that unifies vendor payment data from SAP with vendor details from Salesforce, ready for analytics and reporting.
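The original query is not reproduced in this text. As a minimal sketch of the join logic it describes, the example below uses Python’s built-in sqlite3 module as a local stand-in for a Databricks materialized view. Only SAP_ExternalVendorId__c and the Salesforce Account fields come from the article; the table names, the SAP column names (supplier, amount, clearing_date), and the sample rows are hypothetical.

```python
import sqlite3

# In-memory database standing in for the bronze/silver tables (illustrative only).
conn = sqlite3.connect(":memory:")
conn.executescript(
    """
    CREATE TABLE sfdc_account (
        AccountId TEXT, Name TEXT, SAP_ExternalVendorId__c TEXT
    );
    CREATE TABLE sap_journal_item (
        supplier TEXT, amount REAL, clearing_date TEXT
    );
    INSERT INTO sfdc_account VALUES
        ('001A', 'Acme Supplies', 'V100'),
        ('001B', 'Globex Parts',  'V200');
    INSERT INTO sap_journal_item VALUES
        ('V100', 1200.0, '2024-01-15'),
        ('V100',  300.0, '2024-02-01'),
        ('V200',  450.0, '2024-01-20');
    """
)

# Join SAP line items to Salesforce accounts on the vendor ID,
# then aggregate to one row per vendor for the gold layer.
GOLD_QUERY = """
SELECT a.AccountId,
       a.Name               AS vendor_name,
       j.supplier           AS sap_vendor_id,
       SUM(j.amount)        AS total_paid,
       MAX(j.clearing_date) AS last_clearing_date
FROM sap_journal_item AS j
JOIN sfdc_account AS a
  ON a.SAP_ExternalVendorId__c = j.supplier
GROUP BY a.AccountId, a.Name, j.supplier
ORDER BY total_paid DESC
"""

gold_rows = conn.execute(GOLD_QUERY).fetchall()
for row in gold_rows:
    print(row)
```

In a Databricks pipeline, a SELECT of this shape would sit behind a materialized view definition over the ingested Salesforce tables and the mounted SAP share. The inner join drops SAP vendors with no Salesforce match, so a left join may be preferable when unmatched vendors should stay visible.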

Step 4: Analyze with AI/BI Dashboards and Genie

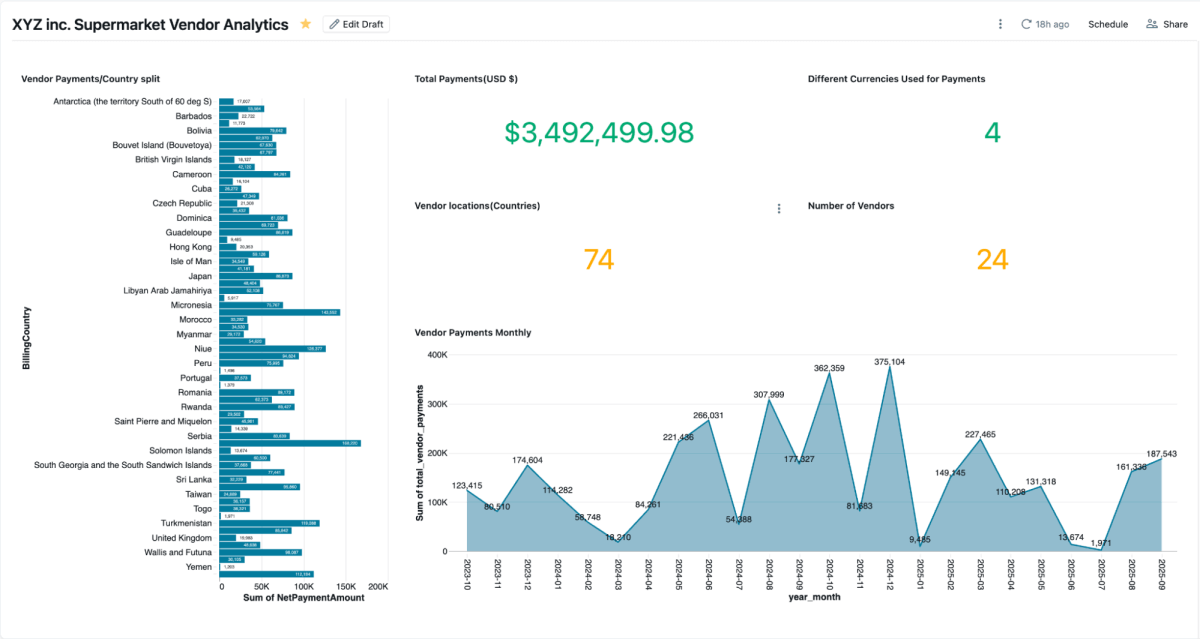

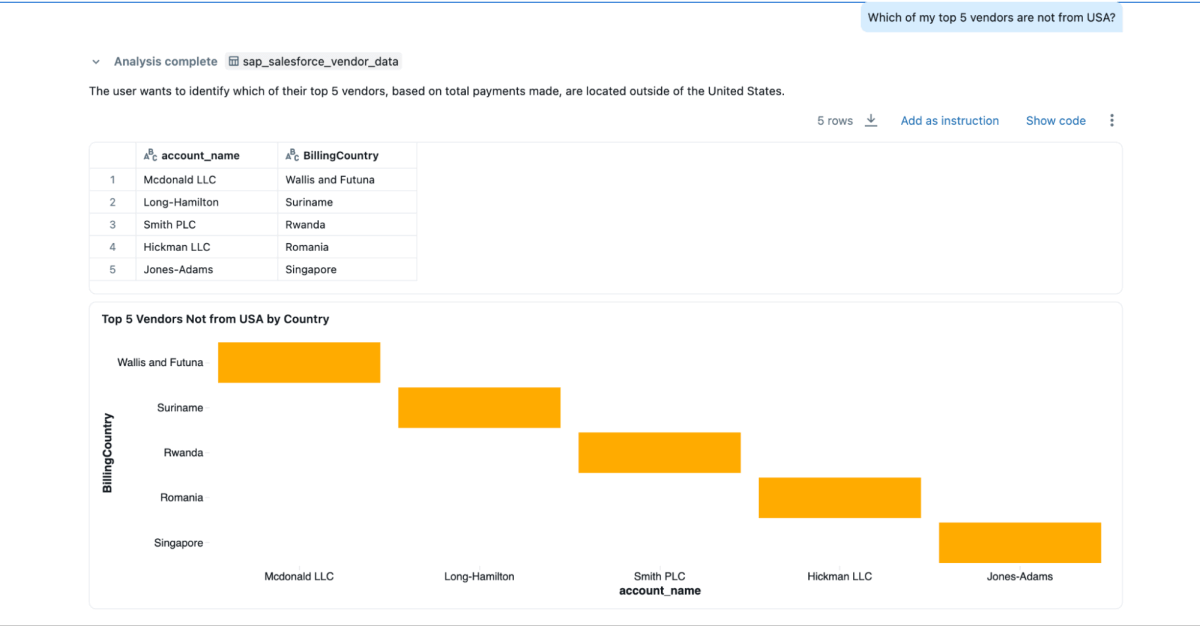

Once the materialized view is created, you can explore it directly in AI/BI Dashboards, which let teams visualize vendor payments, outstanding balances, and spend by region. Dashboards support dynamic filtering, search, and collaboration, all governed by Unity Catalog. Genie enables natural-language exploration of the same data.

You can create Genie spaces on this blended data and ask questions that could not be answered if the data were siloed in Salesforce and SAP:

- “Who are the top 3 vendors I pay the most, and what is their contact information?”

- “What are the billing addresses for the top 3 vendors?”

- “Which of my top 5 vendors are not from the USA?”

Business Outcomes

By combining SAP and Salesforce data on Databricks, organizations gain a complete and trusted view of supplier performance, payments, and relationships. This unified approach delivers both operational and strategic benefits:

- Faster dispute resolution: Teams can view payment details and supplier contact information side by side, making it easier to investigate issues and resolve them quickly.

- Early-pay savings: With payment terms, clearing dates, and net amounts in one place, finance teams can easily identify opportunities for early payment discounts.

- Cleaner vendor master: Joining on the SAP_ExternalVendorId__c field helps identify and resolve duplicate or mismatched supplier records, maintaining accurate and consistent vendor data across systems.

- Audit-ready governance: Unity Catalog ensures all data is governed with consistent lineage, permissions, and auditing, so analytics, AI models, and reports rely on the same trusted source.
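To make the vendor-master point concrete, here is a small sketch of the kind of check the join key enables: flagging Salesforce accounts that share a vendor ID, accounts with no vendor ID, and IDs that exist on only one side. The function name and sample IDs are hypothetical, and a production check would run against the governed tables rather than in-memory lists.

```python
from collections import Counter


def vendor_master_issues(account_vendor_ids, sap_vendor_ids):
    """Compare SAP_ExternalVendorId__c values from Salesforce with SAP vendor IDs."""
    # Count only populated keys; empty or None keys are reported separately.
    counts = Counter(v for v in account_vendor_ids if v)
    sfdc_ids = set(counts)
    sap_ids = set(sap_vendor_ids)
    return {
        "duplicates": sorted(v for v, c in counts.items() if c > 1),
        "missing_key": sum(1 for v in account_vendor_ids if not v),
        "salesforce_only": sorted(sfdc_ids - sap_ids),
        "sap_only": sorted(sap_ids - sfdc_ids),
    }


# Illustrative sample: one duplicated ID, one blank key, one orphan on each side.
issues = vendor_master_issues(
    account_vendor_ids=["V100", "V200", "V100", None, "V900"],
    sap_vendor_ids=["V100", "V200", "V300"],
)
print(issues)
```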

Together, these outcomes help organizations streamline vendor management and improve financial efficiency while maintaining the governance and security required for enterprise systems.

Conclusion

Unifying supplier data across SAP and Salesforce doesn’t have to mean rebuilding pipelines or managing duplicate systems.

With Databricks, teams can work from a single, governed foundation that integrates ERP and CRM data in real time. The combination of zero-copy SAP BDC access, incremental Salesforce ingestion, unified governance, and declarative pipelines replaces integration overhead with insight.

The result goes beyond faster reporting. It delivers a connected view of supplier performance that improves purchasing decisions, strengthens vendor relationships, and unlocks measurable savings. And because it’s built on the Databricks Data Intelligence Platform, the same SAP data that feeds payments and invoices can also drive dashboards, AI models, and conversational analytics, all from one trusted source.

SAP data is often the backbone of enterprise operations. By integrating SAP Business Data Cloud, Delta Sharing, and Unity Catalog, organizations can extend this architecture beyond supplier analytics into working-capital optimization, inventory management, and demand forecasting.

This approach turns SAP data from a system of record into a system of intelligence, where every dataset is live, governed, and ready for use across the enterprise.