Software teams worldwide now rely on AI coding agents to boost productivity and streamline code creation. But security hasn’t kept up. AI-generated code often lacks basic protections: insecure defaults, missing input validation, hardcoded secrets, outdated cryptographic algorithms, and reliance on end-of-life dependencies are common. These gaps create vulnerabilities that are easy to introduce and often go unchecked.

The industry needs a unified, open, and model-agnostic approach to secure AI coding.

Today, Cisco is open-sourcing its framework for securing AI-generated code, internally called Project CodeGuard.

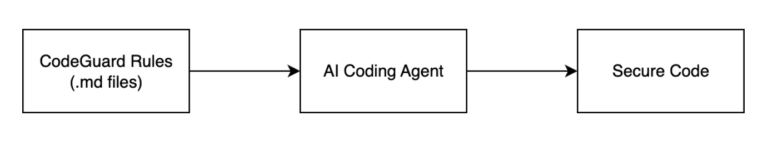

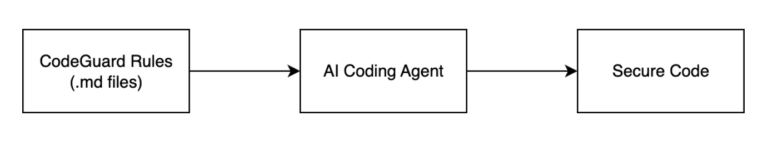

Project CodeGuard is a security framework that builds secure-by-default rules into AI coding workflows. It offers a community-driven ruleset, translators for popular AI coding agents, and validators to help teams enforce security automatically. Our goal: make secure AI coding the default, without slowing developers down.

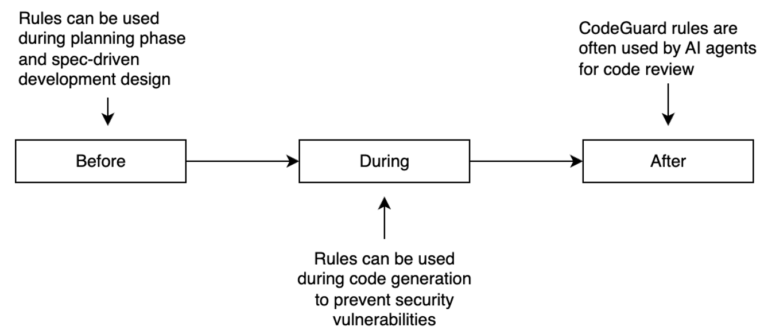

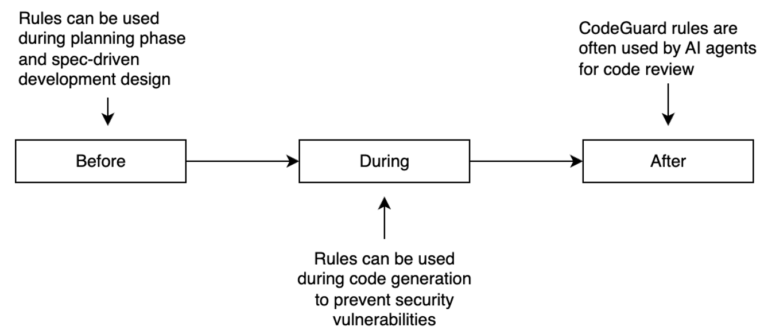

Project CodeGuard is designed to integrate seamlessly across the entire AI coding lifecycle. Before code generation, the rules can inform product design and spec-driven development. You can use them in the “planning phase” of an AI coding agent to steer models toward secure patterns from the start. During code generation, the rules can help AI agents prevent security issues as code is being written. After code generation, AI agents like Cursor, GitHub Copilot, Codex, Windsurf, and Claude Code can use the rules for code review.

In short, the rules apply before, during, and after code generation: at the AI agent planning phase or in initial specification-driven engineering tasks, during generation to keep vulnerabilities from being introduced, and afterward in automated code-review AI agents.

For example, a rule focused on input validation could work at multiple stages: it might suggest secure input handling patterns during code generation, flag potentially unsafe processing of user or AI agent input in real time, and then validate that proper sanitization and validation logic is present in the final code. Another rule targeting secret management could prevent hardcoded credentials from being generated, alert developers when sensitive data patterns are detected, and verify that secrets are properly externalized using secure configuration management.
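
To make the secret-management example concrete, here is a minimal Python sketch of the kind of check that could run after code generation. It is an illustration only, not Project CodeGuard's implementation: the function name `find_hardcoded_secrets`, the regular expression, and the two sample snippets are assumptions made for this example, and a real scanner would need far more patterns and context.

```python
import re

# Hypothetical pattern (an assumption for this sketch): a variable whose name
# suggests a credential, assigned directly to a quoted string literal.
SECRET_ASSIGNMENT = re.compile(
    r"""(?ix)                                        # ignore case, verbose mode
    \b\w*(password|passwd|secret|api_key|token)\w*   # credential-like name
    \s*=\s*                                          # assignment
    (['"])[^'"]{4,}\2                                # non-trivial string literal
    """
)


def find_hardcoded_secrets(source: str) -> list[int]:
    """Return 1-based line numbers that look like hardcoded credentials."""
    return [
        lineno
        for lineno, line in enumerate(source.splitlines(), start=1)
        if SECRET_ASSIGNMENT.search(line)
    ]


# Pattern the rule steers away from: the credential lives in the source.
INSECURE = 'DB_PASSWORD = "hunter2-prod"\nconnect(DB_PASSWORD)\n'

# Pattern the rule steers toward: the credential is read from the environment.
EXTERNALIZED = (
    'import os\n'
    'DB_PASSWORD = os.environ["DB_PASSWORD"]\n'
    'connect(DB_PASSWORD)\n'
)

if __name__ == "__main__":
    print(find_hardcoded_secrets(INSECURE))      # [1]
    print(find_hardcoded_secrets(EXTERNALIZED))  # []
```

A line-based regular expression like this is easy to evade and can raise false positives, which is one reason a check at a single stage is best treated as one layer among several.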

This multi-stage approach ensures that security considerations are woven throughout the development process rather than being an afterthought, creating multiple layers of protection while maintaining the speed and productivity that make AI coding tools so valuable.

Note: These rules steer AI coding agents toward safer patterns and away from common vulnerabilities by default. They do not guarantee that any given output is secure. We should always continue to apply standard secure engineering practices, including peer review and other common security best practices. Treat Project CodeGuard as a defense-in-depth layer, not a replacement for engineering judgment or compliance obligations.

What we’re releasing in v1.0.0

We’re releasing:

- Core security rules based on established security best practices and guidance (e.g., OWASP, CWE)

- Automated scripts that act as rule translators for popular AI coding agents (e.g., Cursor, Windsurf, GitHub Copilot)

- Documentation to help contributors and adopters get started quickly
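
The rule translators can be pictured as a function from one agent-neutral rule to several agent-specific files. The sketch below is a simplified illustration under stated assumptions: the `Rule` fields, the `TARGETS` mapping, the placeholder agent names, and the output paths are all hypothetical and do not describe the project's actual rule schema or the file formats that any real agent expects.

```python
from dataclasses import dataclass
from pathlib import Path
import tempfile


@dataclass(frozen=True)
class Rule:
    """Hypothetical agent-neutral rule; the field names are assumptions."""
    rule_id: str
    title: str
    guidance: str


# Hypothetical mapping from a placeholder agent name to an output path template.
# Real agents define their own locations and formats; consult their docs.
TARGETS = {
    "agent-a": "agent-a/rules/{rule_id}.md",
    "agent-b": "agent-b/instructions/{rule_id}.md",
}


def render(rule: Rule) -> str:
    """Render the rule as plain Markdown shared by every target."""
    return f"# {rule.title}\n\n{rule.guidance}\n"


def translate(rule: Rule, out_dir: Path) -> list[Path]:
    """Write one file per target agent and return the paths written."""
    written = []
    for template in TARGETS.values():
        path = out_dir / template.format(rule_id=rule.rule_id)
        path.parent.mkdir(parents=True, exist_ok=True)
        path.write_text(render(rule), encoding="utf-8")
        written.append(path)
    return written


if __name__ == "__main__":
    rule = Rule(
        rule_id="no-hardcoded-secrets",
        title="No hardcoded secrets",
        guidance="Read credentials from the environment or a secrets manager.",
    )
    with tempfile.TemporaryDirectory() as tmp:
        for path in translate(rule, Path(tmp)):
            print(path.relative_to(tmp))
```

Keeping a single source rule and generating per-agent files from it is what lets the same guidance stay consistent across tools; supporting a new agent then means adding one entry to the mapping rather than rewriting the rules.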

Roadmap and How to Get Involved

This is only the beginning. Our roadmap includes expanding rule coverage across programming languages, integrating more AI coding platforms, and building automated rule validation. Future enhancements will include more automated translation of rules to new AI coding platforms as they emerge, and intelligent rule suggestions based on project context and technology stack. The automation will also help maintain consistency across different coding agents, reduce manual configuration overhead, and provide actionable feedback loops that continuously improve rule effectiveness based on community usage patterns.

Project CodeGuard thrives on community collaboration. Whether you’re a security engineer, software engineering expert, or AI researcher, there are multiple ways to contribute:

- Submit new rules: Help expand coverage for specific languages, frameworks, or vulnerability classes

- Build translators: Create integrations for your favorite AI coding tools

- Share feedback: Report issues, suggest improvements, or propose new features

Ready to get started? Visit our GitHub repository and join the conversation. Together, we can make AI-assisted coding secure by default.