As we speak, we’re asserting two new capabilities for Amazon S3 Tables: help for the brand new Clever-Tiering storage class that routinely optimizes prices primarily based on entry patterns, and replication help to routinely preserve constant Apache Iceberg desk replicas throughout AWS Areas and accounts with out handbook sync.

Organizations working with tabular knowledge face two widespread challenges. First, they should manually handle storage prices as their datasets develop and entry patterns change over time. Second, when sustaining replicas of Iceberg tables throughout Areas or accounts, they have to construct and preserve complicated architectures to trace updates, handle object replication, and deal with metadata transformations.

S3 Tables Clever-Tiering storage class

With the S3 Tables Clever-Tiering storage class, knowledge is routinely tiered to probably the most cost-effective entry tier primarily based on entry patterns. Knowledge is saved in three low-latency tiers: Frequent Entry, Rare Entry (40% decrease value than Frequent Entry), and Archive Instantaneous Entry (68% decrease value in comparison with Rare Entry). After 30 days with out entry, knowledge strikes to Rare Entry, and after 90 days, it strikes to Archive Instantaneous Entry. This occurs with out modifications to your purposes or influence on efficiency.

Desk upkeep actions, together with compaction, snapshot expiration, and unreferenced file elimination, function with out affecting the info’s entry tiers. Compaction routinely processes solely knowledge within the Frequent Entry tier, optimizing efficiency for actively queried knowledge whereas decreasing upkeep prices by skipping colder information in lower-cost tiers.

By default, all present tables use the Normal storage class. When creating new tables, you’ll be able to specify Clever-Tiering because the storage class, or you’ll be able to depend on the default storage class configured on the desk bucket degree. You possibly can set Clever-Tiering because the default storage class in your desk bucket to routinely retailer tables in Clever-Tiering when no storage class is specified throughout creation.

Let me present you the way it works

You should use the AWS Command Line Interface (AWS CLI) and the put-table-bucket-storage-class and get-table-bucket-storage-class instructions to vary or confirm the storage tier of your S3 desk bucket.

# Change the storage class

aws s3tables put-table-bucket-storage-class

--table-bucket-arn $TABLE_BUCKET_ARN

--storage-class-configuration storageClass=INTELLIGENT_TIERING

# Confirm the storage class

aws s3tables get-table-bucket-storage-class

--table-bucket-arn $TABLE_BUCKET_ARN

{ "storageClassConfiguration":

{

"storageClass": "INTELLIGENT_TIERING"

}

}S3 Tables replication help

The brand new S3 Tables replication help helps you preserve constant learn replicas of your tables throughout AWS Areas and accounts. You specify the vacation spot desk bucket and the service creates read-only duplicate tables. It replicates all updates chronologically whereas preserving parent-child snapshot relationships. Desk replication helps you construct world datasets to reduce question latency for geographically distributed groups, meet compliance necessities, and supply knowledge safety.

Now you can simply create duplicate tables that ship related question efficiency as their supply tables. Duplicate tables are up to date inside minutes of supply desk updates and help impartial encryption and retention insurance policies from their supply tables. Duplicate tables will be queried utilizing Amazon SageMaker Unified Studio or any Iceberg-compatible engine together with DuckDB, PyIceberg, Apache Spark, and Trino.

You possibly can create and preserve replicas of your tables by means of the AWS Administration Console or APIs and AWS SDKs. You specify a number of vacation spot desk buckets to duplicate your supply tables. While you activate replication, S3 Tables routinely creates read-only duplicate tables in your vacation spot desk buckets, backfills them with the most recent state of the supply desk, and regularly screens for brand new updates to maintain replicas in sync. This helps you meet time-travel and audit necessities whereas sustaining a number of replicas of your knowledge.

Let me present you the way it works

To indicate you the way it works, I proceed in three steps. First, I create an S3 desk bucket, create an Iceberg desk, and populate it with knowledge. Second, I configure the replication. Third, I connect with the replicated desk and question the info to indicate you that modifications are replicated.

For this demo, the S3 crew kindly gave me entry to an Amazon EMR cluster already provisioned. You possibly can comply with the Amazon EMR documentation to create your individual cluster. In addition they created two S3 desk buckets, a supply and a vacation spot for the replication. Once more, the S3 Tables documentation will provide help to to get began.

I take a observe of the 2 S3 Tables bucket Amazon Useful resource Names (ARNs). On this demo, I refer to those because the atmosphere variables SOURCE_TABLE_ARN and DEST_TABLE_ARN.

First step: Put together the supply database

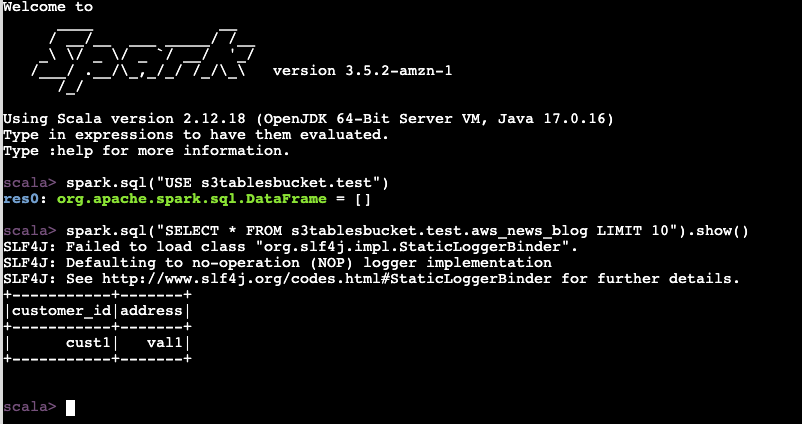

I begin a terminal, connect with the EMR cluster, begin a Spark session, create a desk, and insert a row of information. The instructions I exploit on this demo are documented in Accessing tables utilizing the Amazon S3 Tables Iceberg REST endpoint.

sudo spark-shell

--packages "org.apache.iceberg:iceberg-spark-runtime-3.5_2.12:1.4.1,software program.amazon.awssdk:bundle:2.20.160,software program.amazon.awssdk:url-connection-client:2.20.160"

--master "native[*]"

--conf "spark.sql.extensions=org.apache.iceberg.spark.extensions.IcebergSparkSessionExtensions"

--conf "spark.sql.defaultCatalog=spark_catalog"

--conf "spark.sql.catalog.spark_catalog=org.apache.iceberg.spark.SparkCatalog"

--conf "spark.sql.catalog.spark_catalog.sort=relaxation"

--conf "spark.sql.catalog.spark_catalog.uri=https://s3tables.us-east-1.amazonaws.com/iceberg"

--conf "spark.sql.catalog.spark_catalog.warehouse=arn:aws:s3tables:us-east-1:012345678901:bucket/aws-news-blog-test"

--conf "spark.sql.catalog.spark_catalog.relaxation.sigv4-enabled=true"

--conf "spark.sql.catalog.spark_catalog.relaxation.signing-name=s3tables"

--conf "spark.sql.catalog.spark_catalog.relaxation.signing-region=us-east-1"

--conf "spark.sql.catalog.spark_catalog.io-impl=org.apache.iceberg.aws.s3.S3FileIO"

--conf "spark.hadoop.fs.s3a.aws.credentials.supplier=org.apache.hadoop.fs.s3a.SimpleAWSCredentialProvider"

--conf "spark.sql.catalog.spark_catalog.rest-metrics-reporting-enabled=false"

spark.sql("""

CREATE TABLE s3tablesbucket.take a look at.aws_news_blog (

customer_id STRING,

deal with STRING

) USING iceberg

""")

spark.sql("INSERT INTO s3tablesbucket.take a look at.aws_news_blog VALUES ('cust1', 'val1')")

spark.sql("SELECT * FROM s3tablesbucket.take a look at.aws_news_blog LIMIT 10").present()

+-----------+-------+

|customer_id|deal with|

+-----------+-------+

| cust1| val1|

+-----------+-------+To this point, so good.

Second step: Configure the replication for S3 Tables

Now, I exploit the CLI on my laptop computer to configure the S3 desk bucket replication.

Earlier than doing so, I create an AWS Identification and Entry Administration (IAM) coverage to authorize the replication service to entry my S3 desk bucket and encryption keys. Confer with the S3 Tables replication documentation for the main points. The permissions I used for this demo are:

{

"Model": "2012-10-17",

"Assertion": [

{

"Effect": "Allow",

"Action": [

"s3:*",

"s3tables:*",

"kms:DescribeKey",

"kms:GenerateDataKey",

"kms:Decrypt"

],

"Useful resource": "*"

}

]

}After having created this IAM coverage, I can now proceed and configure the replication:

aws s3tables-replication put-table-replication

--table-arn ${SOURCE_TABLE_ARN}

--configuration '{

"position": "arn:aws:iam:::position/S3TableReplicationManualTestingRole",

"guidelines":[

{

"destinations": [

{

"destinationTableBucketARN": "${DST_TABLE_ARN}"

}]

}

]

The replication begins routinely. Updates are usually replicated inside minutes. The time it takes to finish is dependent upon the amount of information within the supply desk.

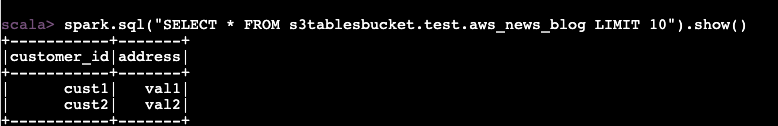

Third step: Connect with the replicated desk and question the info

Now, I connect with the EMR cluster once more, and I begin a second Spark session. This time, I exploit the vacation spot desk.

To confirm the replication works, I insert a second row of information on the supply desk.

spark.sql("INSERT INTO s3tablesbucket.take a look at.aws_news_blog VALUES ('cust2', 'val2')")

I wait a couple of minutes for the replication to set off. I comply with the standing of the replication with the get-table-replication-status command.

aws s3tables-replication get-table-replication-status

--table-arn ${SOURCE_TABLE_ARN}

{

"sourceTableArn": "arn:aws:s3tables:us-east-1:012345678901:bucket/manual-test/desk/e0fce724-b758-4ee6-85f7-ca8bce556b41",

"locations": [

{

"replicationStatus": "pending",

"destinationTableBucketArn": "arn:aws:s3tables:us-east-1:012345678901:bucket/manual-test-dst",

"destinationTableArn": "arn:aws:s3tables:us-east-1:012345678901:bucket/manual-test-dst/table/5e3fb799-10dc-470d-a380-1a16d6716db0",

"lastSuccessfulReplicatedUpdate": {

"metadataLocation": "s3://e0fce724-b758-4ee6-8-i9tkzok34kum8fy6jpex5jn68cwf4use1b-s3alias/e0fce724-b758-4ee6-85f7-ca8bce556b41/metadata/00001-40a15eb3-d72d-43fe-a1cf-84b4b3934e4c.metadata.json",

"timestamp": "2025-11-14T12:58:18.140281+00:00"

}

}

]

}When replication standing reveals prepared, I connect with the EMR cluster and I question the vacation spot desk. With out shock, I see the brand new row of information.

Further issues to know

Listed here are a few further factors to concentrate to:

- Replication for S3 Tables helps each Apache Iceberg V2 and V3 desk codecs, providing you with flexibility in your desk format selection.

- You possibly can configure replication on the desk bucket degree, making it simple to duplicate all tables beneath that bucket with out particular person desk configurations.

- Your duplicate tables preserve the storage class you select in your vacation spot tables, which suggests you’ll be able to optimize in your particular value and efficiency wants.

- Any Iceberg-compatible catalog can immediately question your duplicate tables with out further coordination—they solely have to level to the duplicate desk location. This offers you flexibility in selecting question engines and instruments.

Pricing and availability

You possibly can observe your storage utilization by entry tier by means of AWS Value and Utilization Stories and Amazon CloudWatch metrics. For replication monitoring, AWS CloudTrail logs present occasions for every replicated object.

There aren’t any further costs to configure Clever-Tiering. You solely pay for storage prices in every tier. Your tables proceed to work as earlier than, with automated value optimization primarily based in your entry patterns.

For S3 Tables replication, you pay the S3 Tables costs for storage within the vacation spot desk, for replication PUT requests, for desk updates (commits), and for object monitoring on the replicated knowledge. For cross-Area desk replication, you additionally pay for inter-Area knowledge switch out from Amazon S3 to the vacation spot Area primarily based on the Area pair.

As typical, discuss with the Amazon S3 pricing web page for the main points.

Each capabilities can be found at the moment in all AWS Areas the place S3 Tables are supported.

To study extra about these new capabilities, go to the Amazon S3 Tables documentation or strive them within the Amazon S3 console at the moment. Share your suggestions by means of AWS re:Publish for Amazon S3 or by means of your AWS Assist contacts.