Orchestrating machine learning pipelines is complex, especially when data processing, training, and deployment span multiple services and tools. In this post, we walk through a hands-on, end-to-end example of developing, testing, and running a machine learning (ML) pipeline using workflow capabilities in Amazon SageMaker, accessed through the Amazon SageMaker Unified Studio experience. These workflows are powered by Amazon Managed Workflows for Apache Airflow (Amazon MWAA).

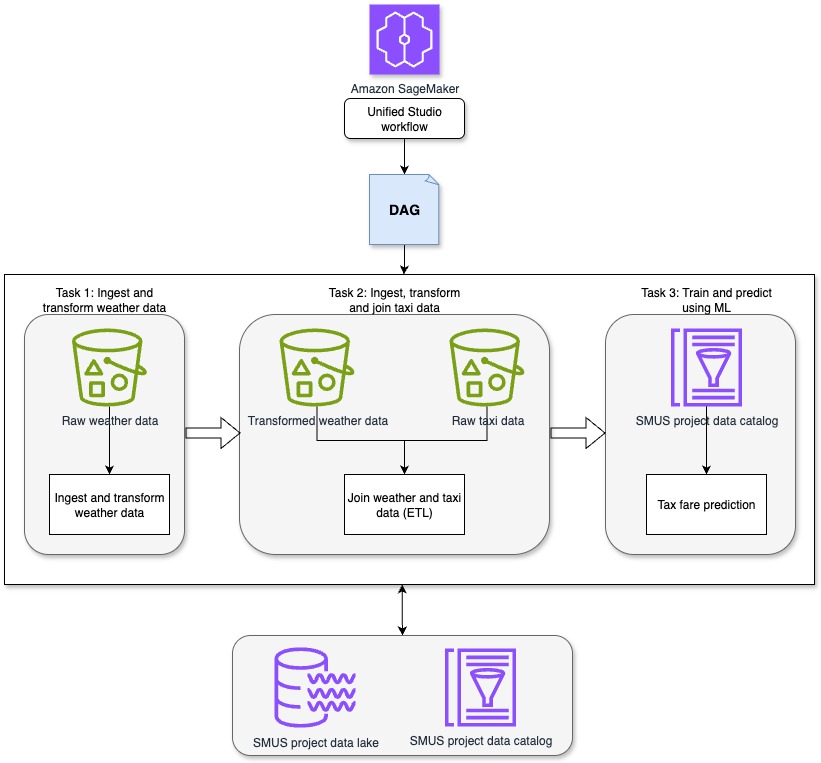

While SageMaker Unified Studio includes a visual builder for low-code workflow creation, this guide focuses on the code-first experience: authoring and managing workflows as Python-based Apache Airflow DAGs (Directed Acyclic Graphs). A DAG is a set of tasks with defined dependencies, where each task runs only after its upstream dependencies are complete, promoting correct execution order and making your ML pipeline more reproducible and resilient. We'll walk through an example pipeline that ingests weather and taxi data, transforms and joins datasets, and uses ML to predict taxi fares, all orchestrated using SageMaker Unified Studio workflows.

If you prefer a simpler, low-code experience, see Orchestrate data processing jobs, querybooks, and notebooks using visual workflow experience in Amazon SageMaker.

Solution overview

This solution demonstrates how SageMaker Unified Studio workflows can be used to orchestrate a complete data-to-ML pipeline in a centralized environment. The pipeline runs through the following sequential tasks, as shown in the preceding diagram.

- Task 1: Ingest and transform weather data: This task uses a Jupyter notebook in SageMaker Unified Studio to ingest and preprocess synthetic weather data. The synthetic weather dataset includes hourly observations with attributes such as time, temperature, precipitation, and cloud cover. For this task, the focus is on time, temperature, rain, precipitation, and wind speed.

- Task 2: Ingest, transform, and join taxi data: A second Jupyter notebook in SageMaker Unified Studio ingests the raw New York City taxi trip dataset. This dataset includes attributes such as pickup time, drop-off time, trip distance, passenger count, and fare amount. The relevant fields for this task include pickup and drop-off time, trip distance, number of passengers, and total fare amount. The notebook transforms the taxi dataset in preparation for joining it with the weather data. After transformation, the taxi and weather datasets are joined to create a unified dataset, which is then written to Amazon S3 for downstream use.

- Task 3: Train and predict using ML: A third Jupyter notebook in SageMaker Unified Studio applies regression techniques to the joined dataset to learn how attributes of the weather and taxi data, such as rain and trip distance, impact taxi fares, and to create a fare prediction model. The trained model is then used to generate fare predictions for new trip data.

This unified approach enables orchestration of extract, transform, and load (ETL) and ML steps with full visibility into the data lifecycle and reproducibility through governed workflows in SageMaker Unified Studio.
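The join in Task 2 can be pictured with a small sketch. The following is a minimal, standard-library illustration and not the notebook's actual code: it assumes each taxi trip is matched to the weather observation for the hour of its pickup time. The field names (pickup_time, trip_distance, fare_amount, temperature, rain) and all sample values are hypothetical.

```python
from datetime import datetime

# Hypothetical hourly weather observations (field names are assumptions).
weather_rows = [
    {"time": "2024-01-01T08:00", "temperature": 2.5, "rain": 0.0},
    {"time": "2024-01-01T09:00", "temperature": 3.1, "rain": 1.2},
]

# Hypothetical taxi trips (field names are assumptions).
taxi_rows = [
    {"pickup_time": "2024-01-01T08:17", "trip_distance": 2.4, "fare_amount": 11.5},
    {"pickup_time": "2024-01-01T09:42", "trip_distance": 5.0, "fare_amount": 21.0},
    {"pickup_time": "2024-01-01T11:05", "trip_distance": 1.1, "fare_amount": 7.0},
]


def hour_key(timestamp: str) -> datetime:
    """Truncate an ISO-8601 timestamp to the start of its hour."""
    return datetime.fromisoformat(timestamp).replace(minute=0, second=0, microsecond=0)


def join_taxi_with_weather(taxi, weather):
    """Inner-join each trip to the weather observation for its pickup hour."""
    weather_by_hour = {hour_key(row["time"]): row for row in weather}
    joined = []
    for trip in taxi:
        observation = weather_by_hour.get(hour_key(trip["pickup_time"]))
        if observation is None:
            continue  # No weather for that hour: drop the trip (inner join).
        joined.append(
            {**trip, "temperature": observation["temperature"], "rain": observation["rain"]}
        )
    return joined


joined_rows = join_taxi_with_weather(taxi_rows, weather_rows)
for row in joined_rows:
    print(row)
```

In this sketch the third trip has no matching weather hour and is dropped; whether unmatched trips should be dropped or kept with missing weather values is a design choice that depends on your data.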

Prerequisites

Before you begin, complete the following steps:

- Create a SageMaker Unified Studio domain: Follow the instructions in Create an Amazon SageMaker Unified Studio domain – quick setup.

- Sign in to your SageMaker Unified Studio domain: Use the domain you created in the previous step to sign in. For more information, see Access Amazon SageMaker Unified Studio.

- Create a SageMaker Unified Studio project: Create a new project in your domain by following the project creation guide. For Project profile, select All capabilities.

Set up workflows

You can use workflows in SageMaker Unified Studio to set up and run a series of tasks using Apache Airflow to design data processing procedures and orchestrate your querybooks, notebooks, and jobs. You can create workflows in Python code, test and share them with your team, and access the Airflow UI directly from SageMaker Unified Studio. The Airflow UI provides features to view workflow details, including run results, task completions, and parameters. You can run workflows with default or custom parameters and monitor their progress. Now that you have your SageMaker Unified Studio project set up, you can build your workflows.

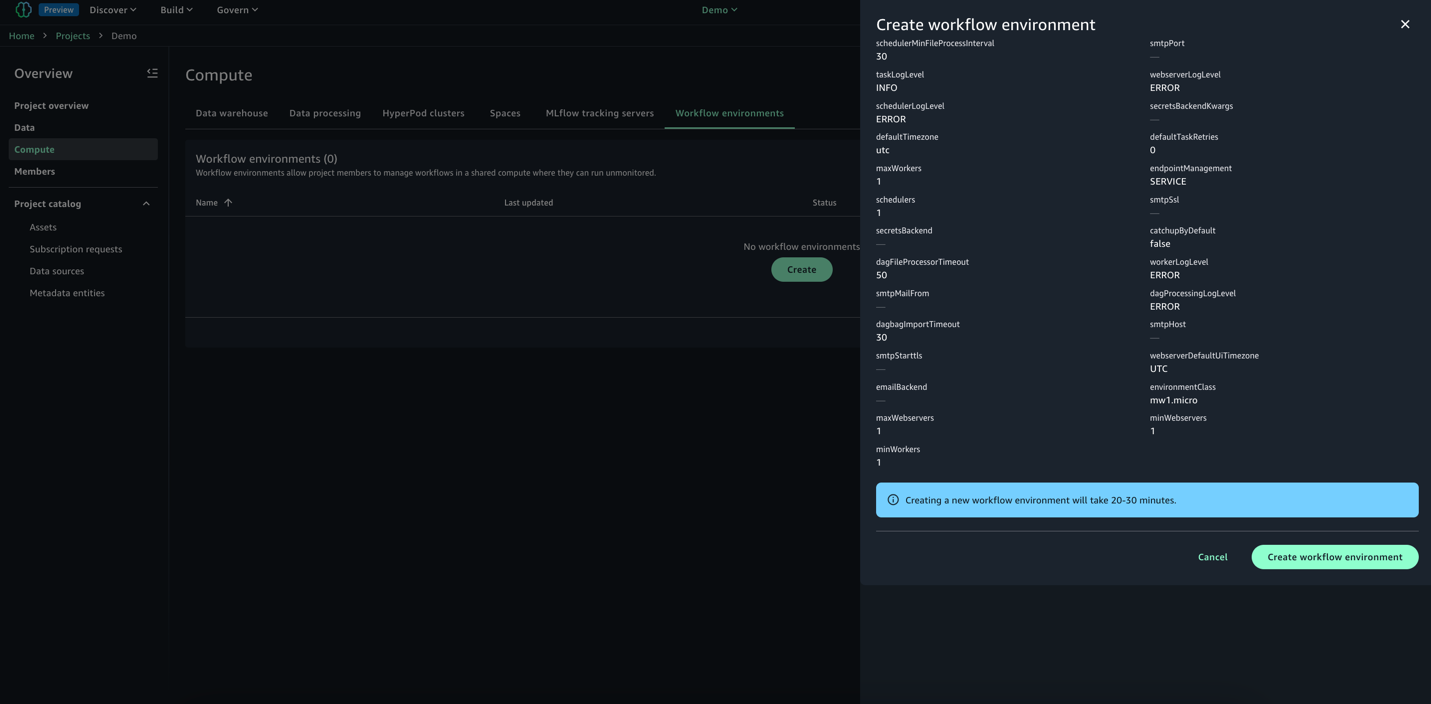

- In your SageMaker Unified Studio project, navigate to the Compute section and select Workflow environment.

- Choose Create environment to set up a new workflow environment.

- Review the options and choose Create environment. By default, SageMaker Unified Studio creates an mw1.micro class environment, which is suitable for testing and small-scale workflows. To update the environment class before project creation, navigate to Domain, select Project Profiles and then All Capabilities, and go to the OnDemand Workflows blueprint deployment settings. By using these settings, you can override default parameters and tailor the environment to your specific project requirements.

Develop workflows

You can use workflows to orchestrate notebooks, querybooks, and more in your project repositories. With workflows, you can define a set of tasks organized as a DAG that can run on a user-defined schedule. To get started:

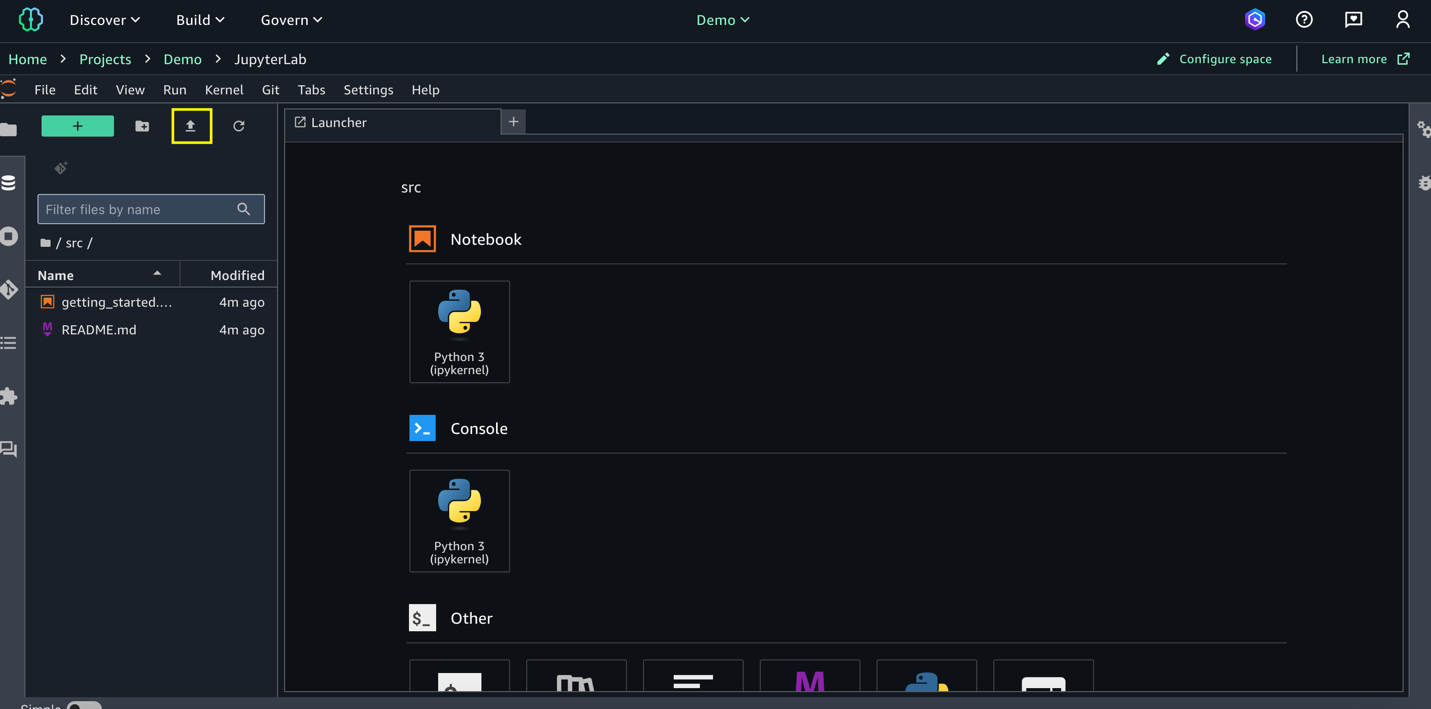

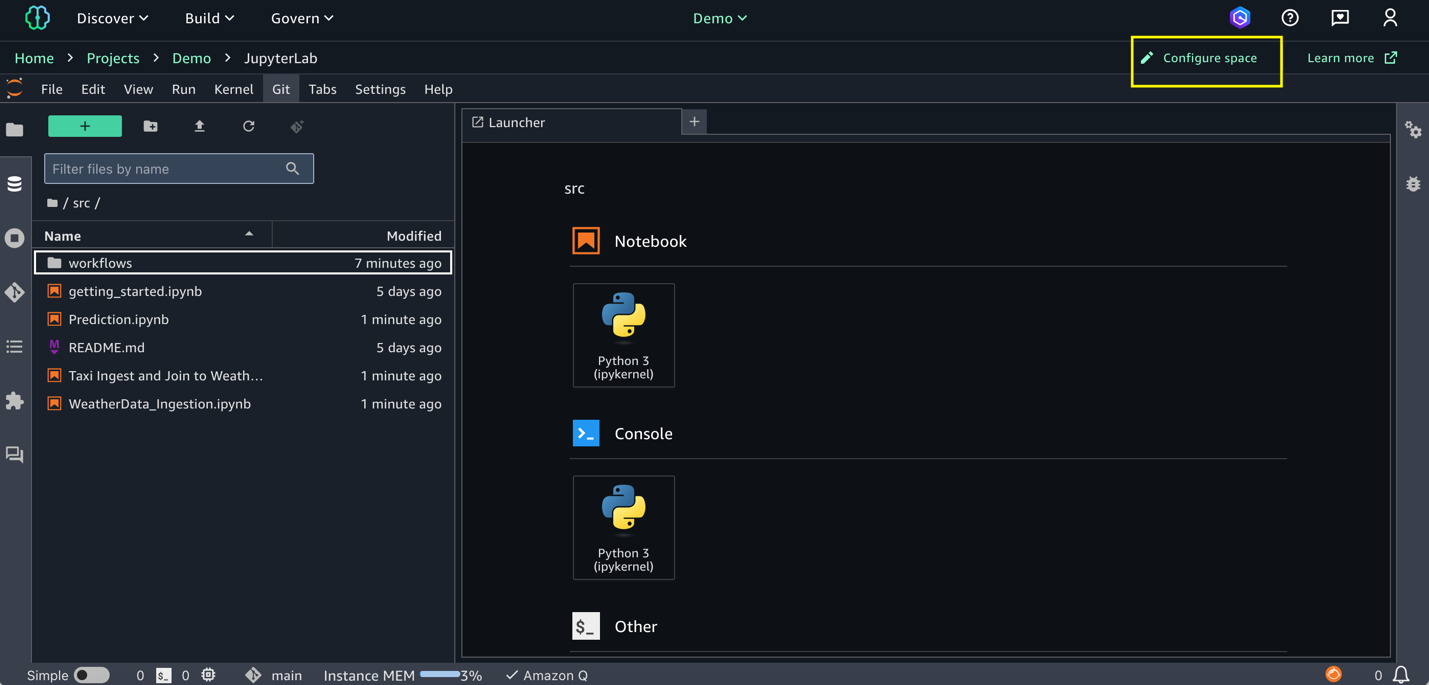

- Download the Weather Data Ingestion, Taxi Ingest and Join to Weather, and Prediction notebooks to your local environment.

- Go to Build and select JupyterLab; choose Upload files and import the three notebooks you downloaded in the previous step.

- Configure your SageMaker Unified Studio space: Spaces are used to manage the storage and resource needs of the relevant application. For this demo, configure the space with an ml.m5.8xlarge instance.

- Choose Configure Space in the right-hand corner and stop the space.

- Update the instance type to ml.m5.8xlarge and start the space. Any active processes will be paused during the restart, and any unsaved changes will be lost. Updating the workspace might take a few minutes.

- Go to Build and select Orchestration and then Workflows.

- Select the down arrow (▼) next to Create new workflow. From the dropdown menu that appears, select Create in code editor.

- In the editor, create a new Python file named multinotebook_dag.py under src/workflows/dags. Copy the following DAG code, which implements a sequential ML pipeline that orchestrates multiple notebooks in SageMaker Unified Studio. Replace NOTEBOOK_PATHS to match your actual notebook locations.

The code uses the NotebookOperator to execute three notebooks in order: data ingestion for weather data, data ingestion for taxi data, and model training on the combined weather and taxi data. Each notebook runs as a separate task, with dependencies to help ensure that they execute in sequence. You can customize the workflow with your own notebooks by modifying the NOTEBOOK_PATHS list to orchestrate any number of notebooks while maintaining sequential execution order.

The workflow schedule can be customized by updating WORKFLOW_SCHEDULE (for example: '@hourly', '@weekly', or cron expressions like '13 2 1 * *') to match your specific business needs.

- After a workflow environment has been created by a project owner, and once you've saved your workflow DAG files in JupyterLab, they're automatically synced to the project. After the files are synced, all project members can view the workflows you have added in the workflow environment. See Share a code workflow with other project members in an Amazon SageMaker Unified Studio workflow environment.
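To make the sequential ordering concrete, here is a minimal sketch in plain Python. It does not use Airflow or the NotebookOperator and is not the DAG file itself; it only shows how a NOTEBOOK_PATHS list maps to a linear chain of task dependencies, using the standard library's graphlib module (Python 3.9 or later). The notebook paths, the task_id_for helper, and the task naming scheme are hypothetical.

```python
from graphlib import TopologicalSorter
from pathlib import PurePosixPath

# Hypothetical notebook locations; replace with your own paths.
NOTEBOOK_PATHS = [
    "src/weather_data_ingestion.ipynb",
    "src/taxi_ingest_and_join_to_weather.ipynb",
    "src/prediction.ipynb",
]


def task_id_for(path: str) -> str:
    """Derive a task name from a notebook file name (illustrative helper)."""
    return PurePosixPath(path).stem


def build_linear_dependencies(paths):
    """Map each task to the set of tasks that must finish before it starts."""
    task_ids = [task_id_for(path) for path in paths]
    dependencies = {task_id: set() for task_id in task_ids}
    # Each task depends only on the one listed immediately before it.
    for upstream, downstream in zip(task_ids, task_ids[1:]):
        dependencies[downstream].add(upstream)
    return dependencies


dependencies = build_linear_dependencies(NOTEBOOK_PATHS)

# static_order() yields every task after all of its upstream tasks.
execution_order = list(TopologicalSorter(dependencies).static_order())
print(execution_order)
```

Adding a fourth path to the list extends the chain by one task without changing the rest of the code. In an Airflow DAG, the same chain is typically expressed by linking consecutive tasks with the >> operator.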

Test and monitor workflow execution

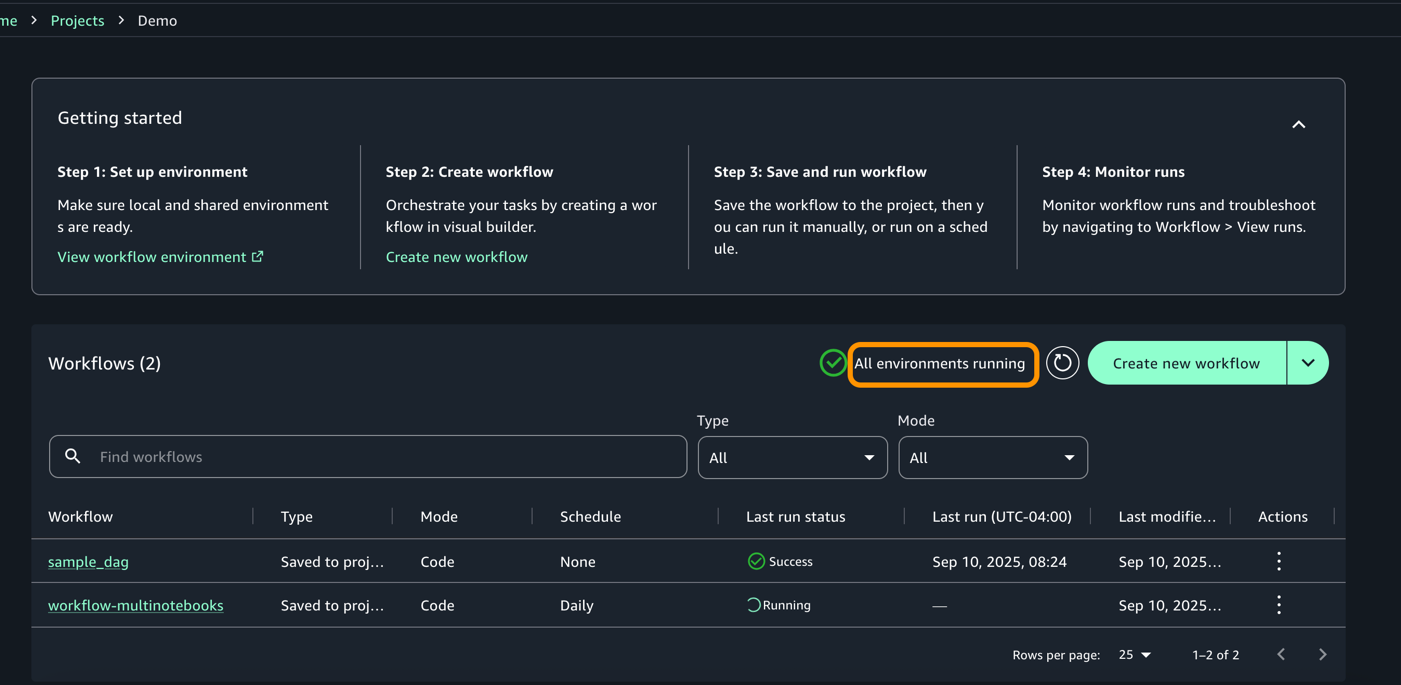

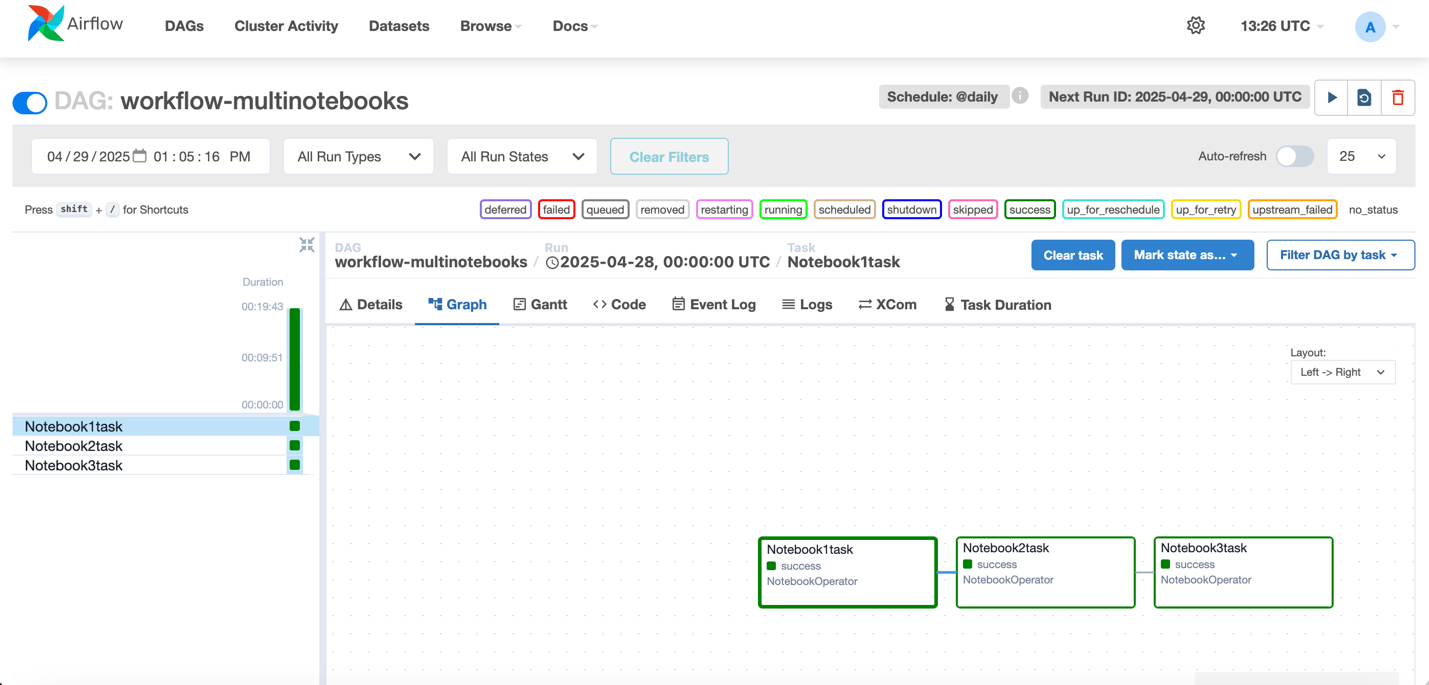

- To validate your DAG, go to Build > Orchestration > Workflows. You should now see the workflow running in Local Space based on the schedule.

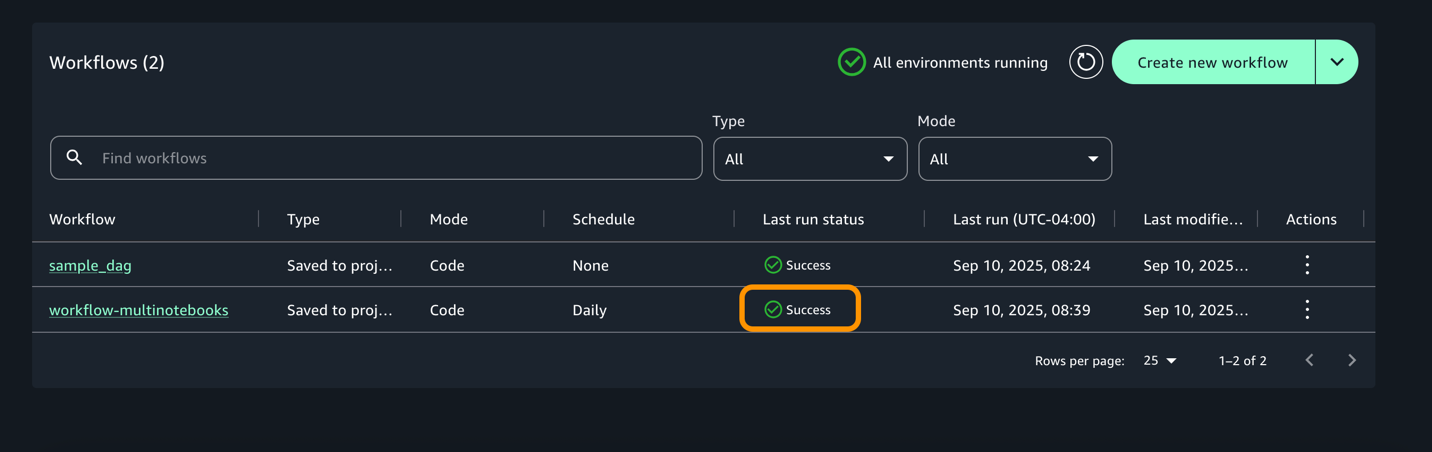

- Once the execution completes, the workflow changes to the success state, as shown below.

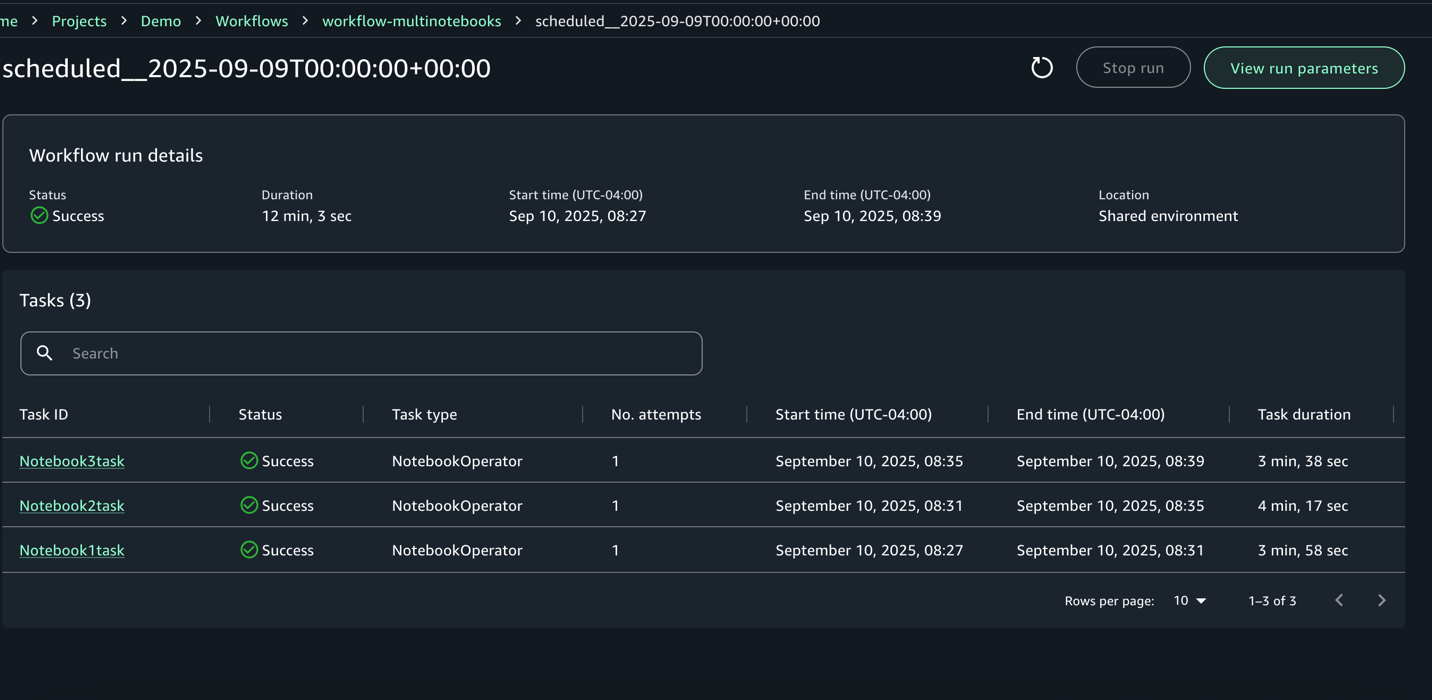

- For each execution, you can zoom in to see detailed workflow run information and task logs.

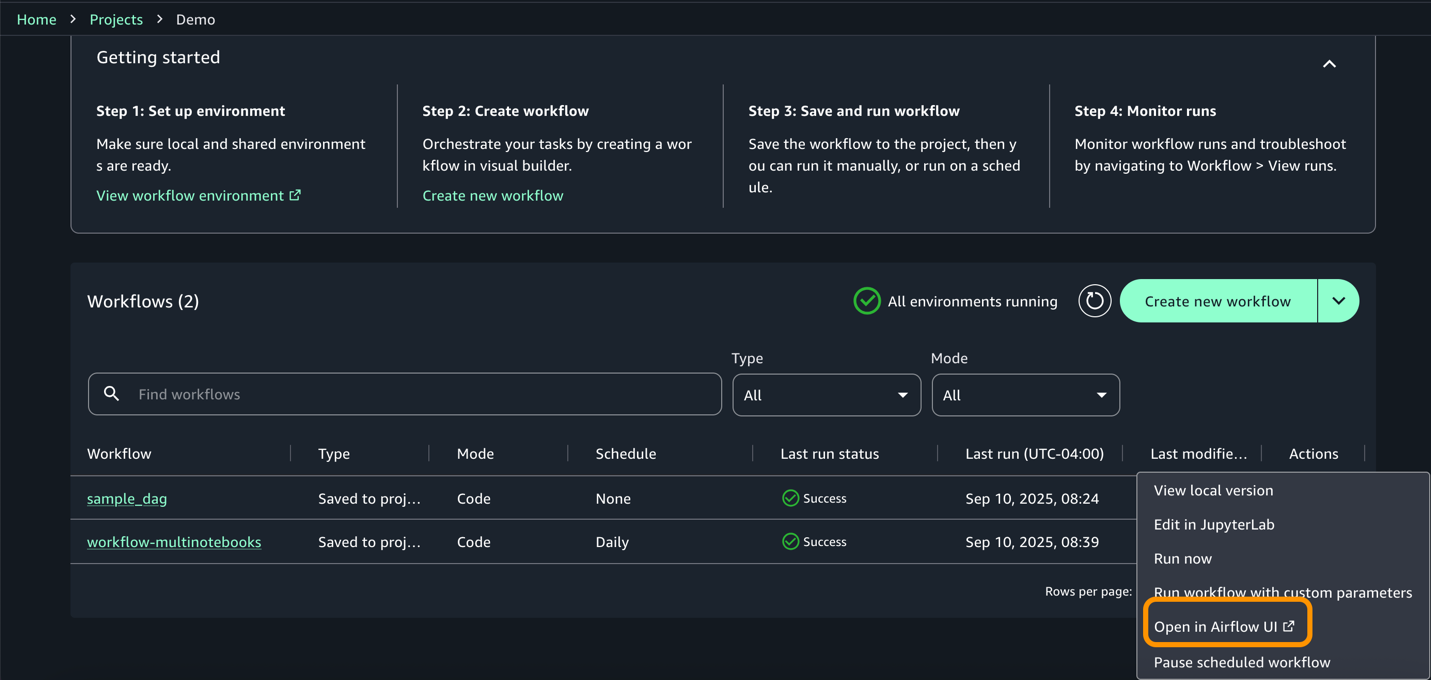

- Access the Airflow UI from Actions for more information on the DAG and its execution.

Results

The model's output is written to the Amazon Simple Storage Service (Amazon S3) output folder, as shown in the following figure. These results should be evaluated for goodness of fit, prediction accuracy, and the consistency of relationships between variables. If any results appear unexpected or unclear, it is important to review the data, engineering steps, and model assumptions to verify that they align with the intended use case.
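As one way to check goodness of fit and prediction accuracy, the following minimal sketch computes the mean absolute error (MAE) and the coefficient of determination (R²) for a set of fare predictions. It is an illustration only and not part of the post's notebooks; the fare values are made up, and the choice of these two metrics is an assumption.

```python
def mean_absolute_error(actual, predicted):
    """Average absolute difference between actual and predicted values."""
    return sum(abs(a - p) for a, p in zip(actual, predicted)) / len(actual)


def r_squared(actual, predicted):
    """Share of variance in the actual values explained by the predictions.

    Assumes the actual values are not all identical (non-zero variance).
    """
    mean_actual = sum(actual) / len(actual)
    residual_sum = sum((a - p) ** 2 for a, p in zip(actual, predicted))
    total_sum = sum((a - mean_actual) ** 2 for a in actual)
    return 1.0 - residual_sum / total_sum


# Made-up fares for illustration only.
actual_fares = [10.0, 20.0, 30.0, 40.0]
predicted_fares = [12.0, 18.0, 33.0, 37.0]

mae = mean_absolute_error(actual_fares, predicted_fares)
r2 = r_squared(actual_fares, predicted_fares)
print(f"MAE: {mae:.2f}, R^2: {r2:.3f}")
```

A low MAE relative to typical fares and an R² close to 1 suggest a reasonable fit, but neither metric replaces a review of the data and model assumptions.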

Clean up

To avoid incurring additional charges associated with resources created as part of this post, make sure you delete the items created in the AWS account for this post.

- The SageMaker domain

- The S3 bucket associated with the SageMaker domain

Conclusion

In this post, we demonstrated how you can use Amazon SageMaker to build powerful, integrated ML workflows that span the full data and AI/ML lifecycle. You learned how to create an Amazon SageMaker Unified Studio project, use a multi-compute notebook to process data, and use the built-in SQL editor to explore and visualize results. Finally, we showed you how to orchestrate the entire workflow within the SageMaker Unified Studio interface.

SageMaker offers a comprehensive set of capabilities for data practitioners to perform end-to-end tasks, including data preparation, model training, and generative AI application development. When accessed through SageMaker Unified Studio, these capabilities come together in a single, centralized workspace that helps eliminate the friction of siloed tools, services, and artifacts.

As organizations build increasingly complex, data-driven applications, teams can use SageMaker, together with SageMaker Unified Studio, to collaborate more effectively and operationalize their AI/ML assets with confidence. You can discover your data, build models, and orchestrate workflows in a single, governed environment.

To learn more, visit the Amazon SageMaker Unified Studio page.

About the authors