We interact with LLMs every day.

We write prompts, paste documents, continue long conversations, and expect the model to remember what we said earlier. When it does, we move on. When it doesn't, we repeat ourselves or assume something went wrong.

What most people rarely think about is that every response is constrained by something called the context window. It quietly decides how much of your prompt the model can see, how long a conversation stays coherent, and why older information suddenly drops out.

Every large language model has a context window, yet most users never learn what it is or why it matters. Still, it plays a critical role in whether a model can handle short chats, long documents, or complex multi-step tasks.

In this article, we will explore how the context window works, how it differs across modern LLMs, and why understanding it changes the way you prompt, choose, and use language models.

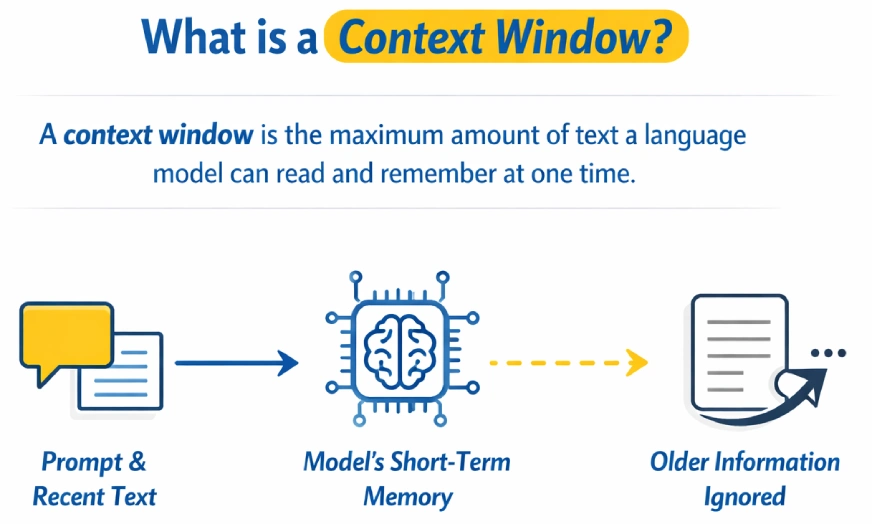

What is a Context Window?

A context window is the maximum amount of text, measured in tokens, that a language model can read and remember at one time while generating a response. It acts as the model's short-term memory, covering the prompt and the recent conversation. Once the limit is exceeded, older information is ignored or forgotten.
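
To make the limit concrete, here is a minimal Python sketch of checking whether a prompt fits in a window. The ratio of about 4 characters per token is only a rough rule of thumb for English text, and `estimate_tokens`, `fits_in_window`, and `reserved_for_output` are illustrative names, not part of any library. In most APIs the window also has to hold the generated reply, which is why the sketch reserves room for output.

```python
import math

# Assumption: roughly 4 characters per token for English text.
# A real tokenizer will give different counts.
CHARS_PER_TOKEN = 4


def estimate_tokens(text: str) -> int:
    """Return a rough token estimate based on character count."""
    return math.ceil(len(text) / CHARS_PER_TOKEN)


def fits_in_window(prompt: str, window_tokens: int, reserved_for_output: int = 0) -> bool:
    """Check whether the prompt plus the space reserved for the reply fits in the window."""
    return estimate_tokens(prompt) + reserved_for_output <= window_tokens


prompt = "Summarize the attached report. " * 100
print(estimate_tokens(prompt))
print(fits_in_window(prompt, window_tokens=4096, reserved_for_output=512))
```

For anything where the exact count matters, the estimate should be replaced with the tokenizer that belongs to the model being used.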

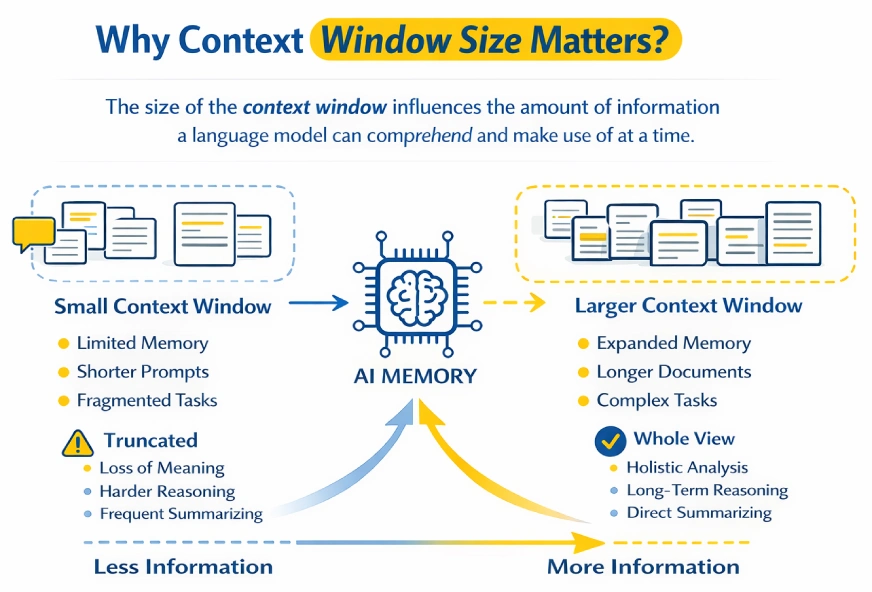

Why Does Context Window Size Matter?

The context window determines how much information a language model can process and reason over at a time.

Smaller context windows force prompts or documents to be truncated, often breaking continuity and losing important details. Larger context windows allow the model to hold more information simultaneously, making it easier to reason over long conversations, documents, or codebases.

Think of the context window as the model's working memory. Anything that falls outside the window is effectively forgotten. Larger windows help preserve multi-step reasoning and long dialogues, while smaller windows cause earlier information to fade quickly.

Tasks like analyzing long reports or summarizing large documents in a single pass become possible with larger context windows. However, they come with trade-offs: higher computational cost, slower responses, and potential noise if irrelevant information is included.

The key is balance. Provide enough context to ground the task, but keep inputs focused and relevant.


Context Window Sizes in Different LLMs

Context window sizes vary widely across large language models and have expanded rapidly with newer generations. Early models were limited to only a few thousand tokens, restricting them to short prompts and small documents.

Modern models support much larger context windows, enabling long-form reasoning, document-level analysis, and extended multi-turn conversations within a single interaction. This increase has significantly improved a model's ability to maintain coherence across complex tasks.

The table below summarizes the commonly reported context window sizes for popular model families from OpenAI, Anthropic, Google, Moonshot AI, and Meta.

Examples of Context Window Sizes

| Model | Organization | Context Window Size | Notes |
|---|---|---|---|
| GPT-3 | OpenAI | ~2,048 tokens | Early generation; very limited context |
| GPT-3.5 | OpenAI | ~4,096 tokens | Standard early ChatGPT limit |
| GPT-4 (baseline) | OpenAI | Up to ~32,768 tokens | Larger context than GPT-3.5 |
| GPT-4o | OpenAI | 128,000 tokens | Widely used long-context model |
| GPT-4.1 | OpenAI | ~1,000,000+ tokens | Massive extended-context support |
| GPT-5.1 | OpenAI | ~128K–196K tokens | Next-gen flagship with improved reasoning |
| Claude 3.5 / 3.7 Sonnet | Anthropic | 200,000 tokens | Strong balance of speed and long context |
| Claude 4.5 Sonnet | Anthropic | ~200,000 tokens | Improved reasoning with large window |
| Claude 4.5 Opus | Anthropic | ~200,000 tokens | Highest-end Claude model |
| Gemini 3 / Gemini 3 Pro | Google DeepMind | ~1,000,000 tokens | Industry-leading context length |
| Kimi K2 | Moonshot AI | ~256,000 tokens | Large context, strong long-form reasoning |
| LLaMA 3.1 | Meta | ~128,000 tokens | Extended context for open-source models |
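
A table like this is easy to turn into a lookup when choosing a model for a given input size. The Python sketch below copies a few of the approximate figures listed above into a dictionary and returns the models whose window is large enough, smallest first. The name `models_that_fit` is illustrative, and the numbers are the commonly reported values from the table, not guaranteed limits for any specific model version.

```python
# Approximate sizes copied from the table; actual limits vary by model version.
CONTEXT_WINDOWS = {
    "GPT-3": 2_048,
    "GPT-3.5": 4_096,
    "GPT-4 (baseline)": 32_768,
    "GPT-4o": 128_000,
    "LLaMA 3.1": 128_000,
    "Claude 3.5 / 3.7 Sonnet": 200_000,
    "Kimi K2": 256_000,
    "GPT-4.1": 1_000_000,
}


def models_that_fit(required_tokens: int) -> list[str]:
    """Return models whose listed window holds required_tokens, smallest window first."""
    fitting = [
        (size, name)
        for name, size in CONTEXT_WINDOWS.items()
        if size >= required_tokens
    ]
    # Sorting the (size, name) pairs orders by window size, then by name for ties.
    return [name for size, name in sorted(fitting)]


print(models_that_fit(150_000))
```

Picking the smallest window that fits, rather than the largest available, keeps the choice in line with the cost and latency trade-offs discussed in this article.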

Benefits and Trade-offs of Larger Context Windows

Benefits

- Supports far longer input, whether full documents, long conversations, or large codebases.

- Improves the coherence of multi-step reasoning and extended conversations.

- Makes it possible to perform complex tasks, such as analyzing long reports or whole books, in one pass.

- Reduces or eliminates the need for chunking, summarizing, or external retrieval systems.

- Helps ground responses in the provided reference text, which can reduce hallucinations.

Trade-offs

- Increases cost, latency, and API usage charges.

- Is inefficient when a large context is used where it is not required.

- Output quality drops when the prompt contains irrelevant or noisy information.

- Can cause confusion or inconsistency when long inputs contain conflicting details.

- May suffer from attention problems in very long prompts, e.g. information in the middle being overlooked.

In practice, larger context windows are most powerful and effective when combined with focused, high-quality input rather than a default of maximum length.
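
The cost side of this trade-off comes down to simple arithmetic, since hosted models are usually billed per token. The Python sketch below compares a focused prompt with one that pastes in far more material. The prices are hypothetical placeholders chosen only for illustration, and `estimate_cost` is an illustrative name; real per-token prices differ by provider and model.

```python
def estimate_cost(
    input_tokens: int,
    output_tokens: int,
    input_price_per_million: float,
    output_price_per_million: float,
) -> float:
    """Return the estimated cost of one request, in the currency of the prices."""
    input_cost = input_tokens / 1_000_000 * input_price_per_million
    output_cost = output_tokens / 1_000_000 * output_price_per_million
    return input_cost + output_cost


# Hypothetical prices per million tokens, for illustration only.
INPUT_PRICE = 2.0
OUTPUT_PRICE = 8.0

focused = estimate_cost(5_000, 500, INPUT_PRICE, OUTPUT_PRICE)
everything = estimate_cost(150_000, 500, INPUT_PRICE, OUTPUT_PRICE)
print(f"focused prompt:    {focused:.3f}")
print(f"everything pasted: {everything:.3f}")
```

Because the reply length is the same in both cases, the difference comes entirely from the input side, and it is paid again on every request that resends the same context.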


How Does the Context Window Affect Key Use Cases?

Because the context window limits the amount of information a model can see at a given time, it strongly influences what the model can accomplish without additional tools or workarounds.

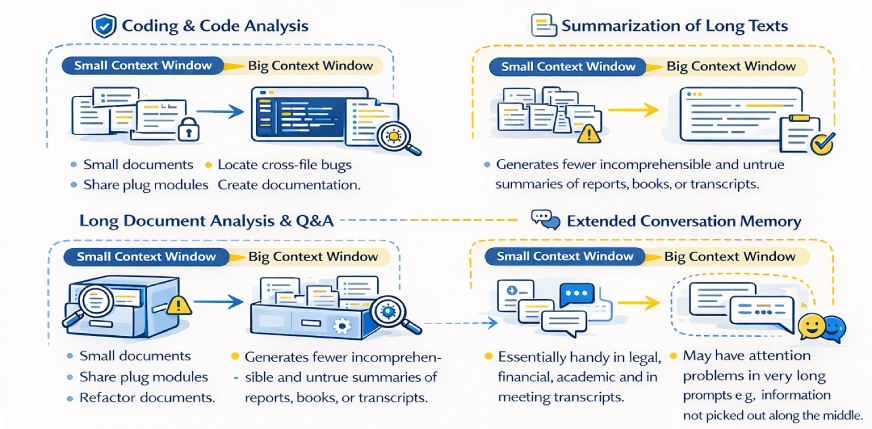

Coding and Code Analysis

- Small context windows confine the model to a single file or a handful of functions at a time.

- The model is unaware of the wider codebase unless it is explicitly provided.

- Large context windows allow multiple files or repositories to be seen at once.

- Enables whole-system tasks such as refactoring modules, finding cross-file bugs, and generating documentation.

- Eliminates manual chunking and the repeated swapping of code snippets.

- Still requires selectivity on very large projects to avoid noise and wasted context.
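
The selectivity mentioned in the last point can be sketched as a simple budget problem: given a token count per file and a priority order, include files until the budget is used up. The Python example below is one possible greedy approach under those assumptions. The file paths, the token counts, and the name `pack_files` are all hypothetical.

```python
def pack_files(
    token_counts: dict[str, int],
    priority: list[str],
    budget_tokens: int,
) -> list[str]:
    """Select files in priority order, skipping any file that would exceed the budget."""
    selected = []
    used = 0
    for path in priority:
        cost = token_counts[path]
        if used + cost <= budget_tokens:
            selected.append(path)
            used += cost
    return selected


# Hypothetical files and token counts, most relevant first.
token_counts = {
    "billing/invoice.py": 1_200,
    "billing/tax.py": 9_000,
    "billing/models.py": 800,
}
priority = ["billing/invoice.py", "billing/tax.py", "billing/models.py"]
print(pack_files(token_counts, priority, budget_tokens=3_000))
```

Skipping an oversized file instead of stopping lets smaller, lower-priority files still make it in. Whether that is desirable depends on how much the skipped file matters to the task.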

Summarization of Long Texts

- Small windows force documents to be split and summarized in fragments.

- Fragment-level summaries tend to lose global structure and important relationships.

- Large context windows enable a single-pass summary of entire documents.

- Produces more coherent and more faithful summaries of reports, books, or transcripts.

- Especially useful for legal, financial, and academic documents and for meeting transcripts.

- The trade-offs are higher cost and slower processing with large inputs.
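
The fragment-based approach that small windows force can be sketched in a few lines of Python: split the text into overlapping chunks, summarize each chunk, then summarize the combined partial summaries. In this sketch, chunk sizes are counted in characters for simplicity, `summarize` is a caller-supplied function standing in for a model call, and `first_sentence` is a toy stand-in used only so the example runs. None of these names come from a real library.

```python
from typing import Callable


def chunk_text(text: str, chunk_size: int, overlap: int = 0) -> list[str]:
    """Split text into chunks of chunk_size characters that share `overlap` characters."""
    if chunk_size <= 0 or not 0 <= overlap < chunk_size:
        raise ValueError("need chunk_size > 0 and 0 <= overlap < chunk_size")
    chunks = []
    start = 0
    while start < len(text):
        chunks.append(text[start:start + chunk_size])
        if start + chunk_size >= len(text):
            break  # this chunk already reaches the end of the text
        start += chunk_size - overlap
    return chunks


def summarize_in_fragments(
    text: str,
    summarize: Callable[[str], str],
    chunk_size: int,
    overlap: int = 0,
) -> str:
    """Summarize each chunk, then summarize the joined partial summaries."""
    partial = [summarize(chunk) for chunk in chunk_text(text, chunk_size, overlap)]
    return summarize("\n".join(partial))


def first_sentence(text: str) -> str:
    """Toy stand-in for a model call: keep only the first sentence."""
    return text.split(".")[0].strip() + "."


report = "Revenue rose in Q1. Costs fell in Q2. Hiring slowed in Q3. Margins held in Q4."
print(chunk_text("abcdefghij", chunk_size=4, overlap=2))
print(summarize_in_fragments(report, first_sentence, chunk_size=40))
```

Each chunk is summarized without seeing the others, which is exactly where the global structure and cross-section relationships get lost.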

Long Document Analysis and Q&A

- Small windows require retrieval systems or manual section selection.

- Large windows make it possible to query full documents or several documents at once.

- Also allows cross-referencing of information located in distant sections.

- Applicable to contracts, research papers, policies, and knowledge bases.

- Simplifies pipelines by avoiding search and chunking logic.

- Accuracy can improve, but it still suffers when instructions are ambiguous or the selected input is irrelevant.
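
To show what the retrieval step does when the window is too small for the whole document, here is a deliberately naive Python sketch that ranks sections by how many words they share with the question and keeps only the best matches. Production retrieval systems typically use embeddings or a search index instead. Word overlap is swapped in here only to keep the example self-contained, and `select_sections` and the sample contract text are hypothetical.

```python
import re


def words(text: str) -> set[str]:
    """Lowercase the text and return its set of alphanumeric words."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def select_sections(question: str, sections: list[str], top_k: int = 2) -> list[str]:
    """Return up to top_k sections that share the most words with the question."""
    question_words = words(question)
    scored = [
        (len(question_words & words(section)), index, section)
        for index, section in enumerate(sections)
    ]
    # Highest overlap first; ties keep the original document order.
    scored.sort(key=lambda item: (-item[0], item[1]))
    return [section for score, index, section in scored[:top_k] if score > 0]


sections = [
    "Payment is due within 30 days of invoice.",
    "Either party may terminate with 60 days notice.",
    "This agreement is governed by the laws of Ontario.",
]
print(select_sections("When is payment due?", sections, top_k=1))
```

Only the selected sections are sent to the model, so a poor selection step leads to a poor answer no matter how capable the model is.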

Extended Conversation Memory

- Chatbots with small context windows forget earlier parts of a long conversation.

- As context fades, the user has to repeat or restate information.

- With large context windows, more of the conversation history is retained for longer.

- Enables less robotic, more natural, and more personal interactions.

- Well suited to support chats, collaborative writing, and extended brainstorming.

- Better memory comes with higher token usage and higher cost in long chats.
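
The forgetting described above can be illustrated with a sliding window over the message history: walk backwards from the newest message and keep whatever fits in the token budget. This Python sketch shows one common way to trim history, not how any particular chatbot does it. The rough 4-characters-per-token estimate and the names `rough_token_count` and `trim_history` are assumptions made for the example.

```python
import math


def rough_token_count(text: str) -> int:
    """Assumption: about 4 characters per token; real tokenizers differ."""
    return math.ceil(len(text) / 4)


def trim_history(messages: list[dict], budget_tokens: int) -> list[dict]:
    """Keep the most recent messages that fit in the budget, in original order."""
    kept = []
    used = 0
    for message in reversed(messages):
        cost = rough_token_count(message["content"])
        if used + cost > budget_tokens:
            break  # everything older than this point is dropped
        kept.append(message)
        used += cost
    kept.reverse()
    return kept


history = [
    {"role": "user", "content": "My order number is 12345 and it arrived damaged."},
    {"role": "assistant", "content": "Sorry to hear that. Would you like a refund or a replacement?"},
    {"role": "user", "content": "A replacement, please."},
]
for message in trim_history(history, budget_tokens=25):
    print(message["role"], ":", message["content"])
```

With a tight budget the first message, which holds the order number, is the one that gets dropped, and that is why the user ends up having to repeat it.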


Conclusion

The context window defines how much information a language model can process at once and acts as its short-term memory. Models with larger context windows handle long conversations, large documents, and complex code more effectively, while smaller windows struggle as inputs grow.

However, bigger context windows also increase cost and latency, and they do not help if the input includes unnecessary information. The right context size depends on the task: small windows work well for quick or simple tasks, while larger ones are better for deep analysis and extended reasoning.

Next time you write a prompt, include only the context the model actually needs. More context is powerful, but focused context is what works best.

Frequently Asked Questions

Q. What happens when the context window is exceeded?

A. When the context window is exceeded, the model starts ignoring older parts of the input. This can cause it to forget earlier instructions, lose track of the conversation, or produce inconsistent responses.

Q. Does a larger context window always produce better results?

A. No. While larger context windows allow the model to process more information, they do not automatically improve output quality. If the input contains irrelevant or noisy information, performance can actually degrade.

Q. When do I need a larger context window?

A. If your task involves long documents, extended conversations, multi-file codebases, or complex multi-step reasoning, a larger context window is helpful. For short questions, simple prompts, or quick tasks, smaller windows are usually sufficient.

Q. Do larger context windows cost more?

A. Yes. Filling a larger context window increases token usage, which leads to higher costs and slower response times. This is why using the maximum available context by default is often inefficient.
