Everything starts with good data, so ingestion is often your first step to unlocking insights. However, ingestion presents challenges, like ramping up on the complexities of each data source, keeping tabs on those sources as they change, and governing all of this along the way.

Lakeflow Connect makes efficient data ingestion easy, with a point-and-click UI, a simple API, and deep integrations with the Data Intelligence Platform. Last year, more than 2,000 customers used Lakeflow Connect to unlock value from their data.

In this blog, we'll review the basics of Lakeflow Connect and recap the latest announcements from the 2025 Data + AI Summit.

Ingest all your data in one place with Lakeflow Connect

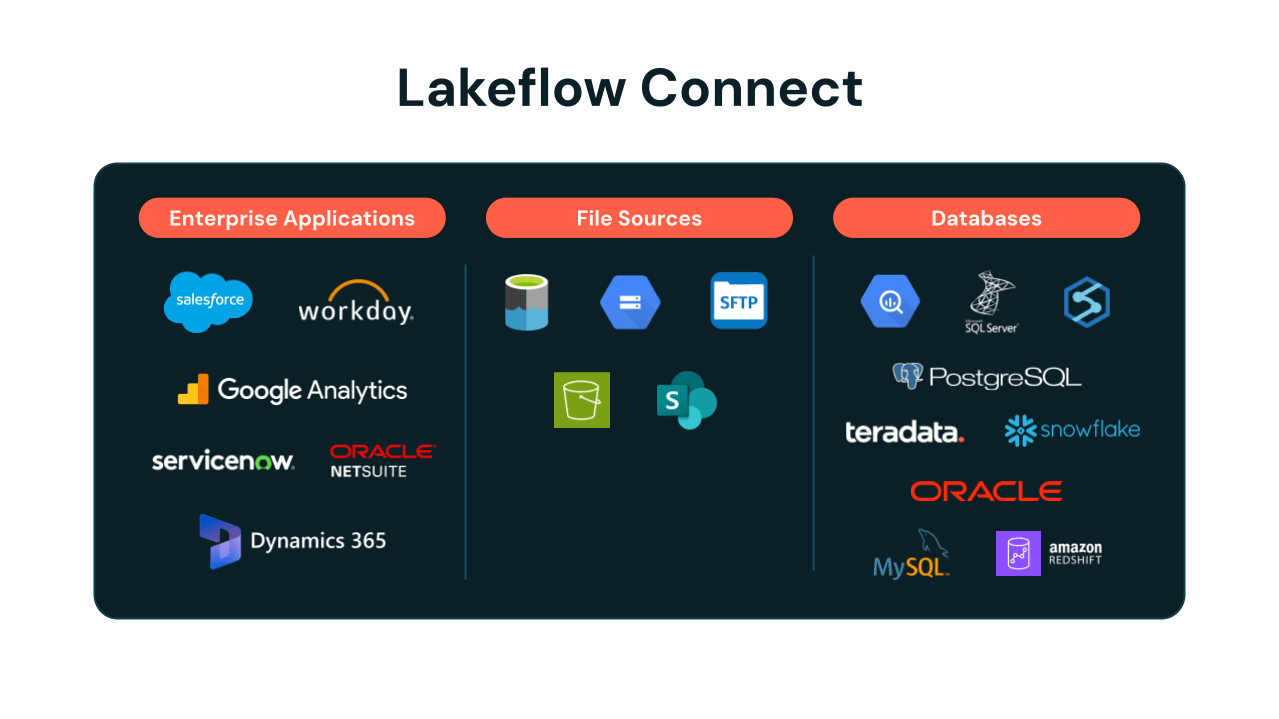

Lakeflow Connect offers simple ingestion connectors for applications, databases, cloud storage, message buses, and more. Under the hood, ingestion is efficient, with incremental updates and optimized API usage. As your managed pipelines run, we take care of schema evolution, seamless third-party API upgrades, and comprehensive observability with built-in alerts.

Data + AI Summit 2025 Announcements

At this year's Data + AI Summit, Databricks announced the General Availability of Lakeflow, the unified approach to data engineering across ingestion, transformation, and orchestration. As part of this, Lakeflow Connect announced Zerobus, a direct write API that simplifies ingestion for IoT, clickstream, telemetry, and other similar use cases. We also expanded the breadth of supported data sources with more built-in connectors across enterprise applications, file sources, databases, and data warehouses, as well as data from cloud object storage.

Zerobus: a new way to push event data directly to your lakehouse

We announced Zerobus, a new approach for pushing event data directly to your lakehouse by bringing you closer to the data source. By eliminating data hops and reducing operational burden, Zerobus provides high-throughput direct writes with low latency, delivering near real-time performance at scale.

Previously, some organizations used message buses like Kafka as transport layers to the Lakehouse. Kafka offers a durable, low-latency way for data producers to send data, and it's a popular choice when writing to multiple sinks. However, it also adds extra complexity and cost, as well as the burden of managing another data copy, so it's inefficient when your sole destination is the Lakehouse. Zerobus provides a simple solution for these cases.

Joby Aviation is already using Zerobus to push telemetry data directly into Databricks.

“Joby is able to use our manufacturing agents with Zerobus to push gigabytes a minute of telemetry data directly to our lakehouse, accelerating the time to insights, all with Databricks Lakeflow and the Data Intelligence Platform.”

Dominik Müller, Factory Systems Lead, Joby Aviation, Inc.

As part of Lakeflow Connect, Zerobus is also unified with the Databricks Platform, so you can leverage broader analytics and AI capabilities right away. Zerobus is currently in Private Preview; reach out to your account team for early access.

🎥 Watch and learn more about Zerobus: Breakout session at the Data + AI Summit, featuring Joby Aviation, “Lakeflow Connect: eliminating hops in your streaming architecture”

Lakeflow Connect expands ingestion capabilities and data sources

New fully managed connectors are continuing to roll out across various release states (see the full list below). These include Google Analytics and ServiceNow, as well as SQL Server, the first database connector. All three are currently in Public Preview, with General Availability coming soon.

We've also continued innovating for customers who want more customization options and use our existing ingestion solution, Auto Loader. It incrementally and efficiently processes new data files as they arrive in cloud storage. We've released some major cost and performance improvements for Auto Loader, including 3X faster directory listings and automatic cleanup with “CleanSource,” both now generally available, along with smarter and more cost-effective file discovery using file events. We also announced native support for ingesting Excel files and ingesting data from SFTP servers, both in Private Preview and available by request for early access.
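
To make the idea of incremental file processing concrete, here is a minimal Python sketch. It is not Auto Loader's implementation and does not call any Databricks API; the helper name `discover_new_files`, the JSON checkpoint file, and the directory layout are assumptions made purely for illustration. It shows only the core idea: remember which files have already been seen, and hand each new file to the pipeline exactly once.

```python
import json
import tempfile
from pathlib import Path


def discover_new_files(source_dir: Path, checkpoint: Path) -> list[str]:
    """Return names of files not yet recorded in the checkpoint, then record them.

    Hypothetical helper for illustration; not part of any Databricks API.
    """
    seen = set(json.loads(checkpoint.read_text())) if checkpoint.exists() else set()
    current = {p.name for p in source_dir.iterdir() if p.is_file()}
    new_files = sorted(current - seen)
    # Persist the updated set so the next run skips files already handed out.
    checkpoint.write_text(json.dumps(sorted(seen | current)))
    return new_files


def demo() -> list[list[str]]:
    """Simulate three pipeline runs against a landing directory."""
    with tempfile.TemporaryDirectory() as tmp:
        landing = Path(tmp) / "landing"
        landing.mkdir()
        # The checkpoint lives outside the landing directory so it is never listed.
        checkpoint = Path(tmp) / "checkpoint.json"

        (landing / "events_001.json").write_text("{}")
        first = discover_new_files(landing, checkpoint)

        (landing / "events_002.json").write_text("{}")
        second = discover_new_files(landing, checkpoint)

        third = discover_new_files(landing, checkpoint)  # nothing new arrived
        return [first, second, third]
```

Note that this sketch re-lists the whole directory on every run. That repeated listing is the kind of cost that faster directory listings and event-based file discovery aim to reduce.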

Supported data sources:

- Applications: Salesforce, Workday, ServiceNow, Google Analytics, Microsoft Dynamics 365, Oracle NetSuite

- File sources: S3, ADLS, GCS, SFTP, SharePoint

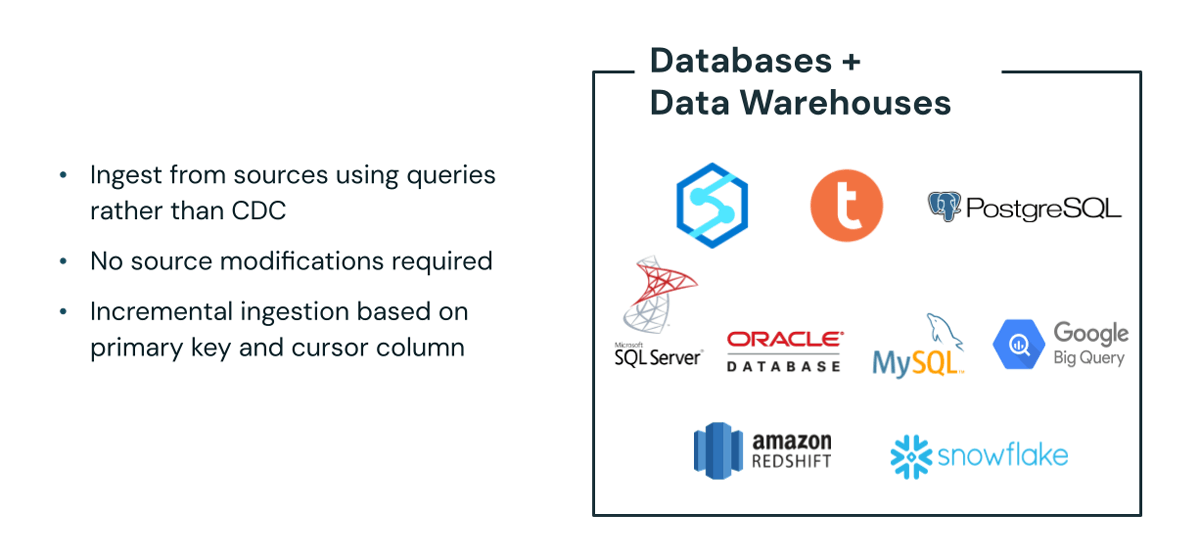

- Databases: SQL Server, Oracle Database, MySQL, PostgreSQL

- Data warehouses: Snowflake, Amazon Redshift, Google BigQuery

Within the expanded connector offering, we're introducing query-based connectors that simplify data ingestion. These new connectors let you pull data directly from your source systems without database modifications, and they work with read replicas where change data capture (CDC) logs aren't available. These connectors are currently in Private Preview; reach out to your account team for early access.
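
As a rough illustration of what "query-based" means in general, the sketch below pulls only rows that changed since the last run by filtering on a cursor column. It is not the connector's implementation: the in-memory SQLite database stands in for a source system, and the `customers` table, the `updated_at` cursor column, and the `fetch_changes` helper are all hypothetical names chosen for this example.

```python
import sqlite3


def fetch_changes(conn: sqlite3.Connection, last_cursor: int):
    """Return rows with updated_at greater than last_cursor, plus the new cursor.

    Hypothetical helper for illustration; only plain SELECT access is needed.
    """
    rows = conn.execute(
        "SELECT id, name, updated_at FROM customers "
        "WHERE updated_at > ? ORDER BY updated_at",
        (last_cursor,),
    ).fetchall()
    # Advance the cursor to the highest value seen, or keep it if nothing changed.
    new_cursor = rows[-1][2] if rows else last_cursor
    return rows, new_cursor


def demo():
    """Run three incremental pulls against a stand-in source table."""
    conn = sqlite3.connect(":memory:")
    conn.execute(
        "CREATE TABLE customers (id INTEGER PRIMARY KEY, name TEXT, updated_at INTEGER)"
    )
    conn.executemany(
        "INSERT INTO customers VALUES (?, ?, ?)",
        [(1, "Ada", 100), (2, "Grace", 110)],
    )
    first, cursor = fetch_changes(conn, 0)  # initial load

    # The source changes between runs: one update and one insert.
    conn.execute("UPDATE customers SET name = 'Ada L.', updated_at = 120 WHERE id = 1")
    conn.execute("INSERT INTO customers VALUES (3, 'Edsger', 130)")
    second, cursor = fetch_changes(conn, cursor)  # only the changed rows

    third, cursor = fetch_changes(conn, cursor)  # no changes since last pull
    conn.close()
    return first, second, third, cursor
```

Because this pattern relies only on ordinary queries, it needs no changes to the source database and can run against a read replica. One trade-off in this sketch is that rows deleted at the source never appear in the query results, so deletes go undetected.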

🎥 Watch and learn more about Lakeflow Connect: Breakout session at the Data + AI Summit, “Getting Started with Lakeflow Connect”

🎥 Watch and learn more about ingesting from enterprise SaaS applications: Breakout session at the Data + AI Summit featuring Databricks customer Porsche Holding, “Lakeflow Connect: Seamless Data Ingestion From Enterprise Apps”

🎥 Watch and learn more about database connectors: Breakout session at the Data + AI Summit, “Lakeflow Connect: Easy, Efficient Ingestion From Databases”

Lakeflow Connect in Jobs, now generally available

We're continuing to develop capabilities that make it easier to use our ingestion connectors while building data pipelines, as part of Lakeflow's unified data engineering experience. Databricks recently announced Lakeflow Connect in Jobs, which lets you create ingestion pipelines within Lakeflow Jobs. So, if jobs are the center of your ETL process, this integration provides a more intuitive and unified experience for managing ingestion.

Customers can define and manage their end-to-end workloads, from ingestion to transformation, all in one place. Lakeflow Connect in Jobs is now generally available.
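
One way to picture an end-to-end workload is a job with two tasks, where a transformation task runs only after an ingestion pipeline task succeeds. The sketch below builds such a definition as a plain Python dictionary, using field names from the Databricks Jobs API (`tasks`, `task_key`, `depends_on`, `pipeline_task`, `notebook_task`) and assuming the ingestion pipeline is referenced through a pipeline task. The job name, task keys, pipeline ID placeholder, and notebook path are hypothetical, and the code only builds and prints the payload; it does not call any API.

```python
import json


def build_job_definition(pipeline_id: str, notebook_path: str) -> dict:
    """Build a two-task job payload: ingest first, then transform.

    All names and values here are illustrative placeholders.
    """
    return {
        "name": "ingest_then_transform",
        "tasks": [
            {
                # Runs the ingestion pipeline identified by pipeline_id.
                "task_key": "ingest",
                "pipeline_task": {"pipeline_id": pipeline_id},
            },
            {
                # Runs only after the ingest task completes successfully.
                "task_key": "transform",
                "depends_on": [{"task_key": "ingest"}],
                "notebook_task": {"notebook_path": notebook_path},
            },
        ],
    }


job = build_job_definition("<your-pipeline-id>", "/Workspace/Shared/transform_orders")
print(json.dumps(job, indent=2))
```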

🎥 Watch and learn more about Lakeflow Jobs: Breakout session at the Data + AI Summit, “Orchestration with Lakeflow Jobs”

Lakeflow Connect: more to come in 2025 and beyond

Databricks understands the needs of data engineers and organizations who drive innovation with their data using analytics and AI tools. To that end, Lakeflow Connect has continued to build out robust, efficient ingestion capabilities, from fully managed connectors to more customizable solutions and APIs.

We're just getting started with Lakeflow Connect. Stay tuned for more announcements later this year, or contact your Databricks account team to join a preview for early access.

To try Lakeflow Connect, you can review the documentation or check out the Demo Center.