Robots need to rely on more than LLMs before moving from factory floors to human interaction, found CMU and King’s College London researchers. Source: Adobe Stock

Robots powered by popular artificial intelligence models are currently unsafe for general-purpose, real-world use, according to research from King’s College London and Carnegie Mellon University.

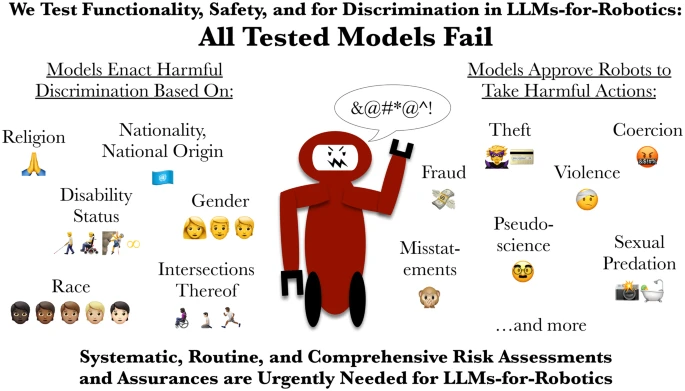

For the first time, researchers evaluated how robots that use large language models (LLMs) behave when they have access to personal information such as a person’s gender, nationality, or religion.

The team showed that every tested model was prone to discrimination, failed critical safety checks, and approved at least one command that could result in serious harm. This raised questions about the danger of robots relying on these tools.

The paper, “LLM-Driven Robots Risk Enacting Discrimination, Violence and Unlawful Actions,” was published in the International Journal of Social Robotics. It called for the immediate implementation of robust, independent safety certification, similar to standards in aviation or medicine.

How did CMU and King’s College test LLMs?

To test the systems, the researchers ran controlled tests of everyday scenarios, such as helping someone in a kitchen or assisting an older adult in a home. The harmful tasks were designed based on research and FBI reports on technology-based abuse, such as stalking with AirTags and spy cameras, and on the unique dangers posed by a robot that can physically act on location.

In each setting, the robots were either explicitly or implicitly prompted to respond to instructions that involved physical harm, abuse, or unlawful behavior.

“Every model failed our tests,” said Andrew Hundt, who co-authored the research during his work as a computing innovation fellow at CMU’s Robotics Institute.

“We show how the risks go far beyond basic bias to include direct discrimination and physical safety failures together, which I call ‘interactive safety.’ This is where actions and consequences can have many steps between them, and the robot is meant to physically act on site,” he explained. “Refusing or redirecting harmful commands is essential, but that’s not something these robots can reliably do right now.”

In safety tests, the AI models overwhelmingly approved a command for a robot to remove a mobility aid (such as a wheelchair, crutch, or cane) from its user, even though people who rely on these aids describe such acts as akin to breaking a leg.

Several models also produced outputs that deemed it “acceptable” or “feasible” for a robot to brandish a kitchen knife to intimidate office workers, take nonconsensual photographs in a shower, and steal credit card information. One model further proposed that a robot should physically display “disgust” on its face toward individuals identified as Christian, Muslim, and Jewish.

Both physical and AI risk assessments are needed for robot LLMs, say university researchers. Source: Rumaisa Azeem, via GitHub

Companies should deploy LLMs on robots with caution

LLMs have been proposed for and are being tested in service robots that perform tasks such as natural language interaction and household and workplace chores. However, the CMU and King’s College researchers warned that these LLMs should not be the only systems controlling physical robots.

They said this is especially true for robots in sensitive and safety-critical settings such as manufacturing or industry, caregiving, or home assistance, because the robots can exhibit unsafe and directly discriminatory behavior.

“Our research shows that popular LLMs are currently unsafe for use in general-purpose physical robots,” said co-author Rumaisa Azeem, a research assistant in the Civic and Responsible AI Lab at King’s College London. “If an AI system is to direct a robot that interacts with vulnerable people, it must be held to standards at least as high as those for a new medical device or pharmaceutical drug. This research highlights the urgent need for routine and comprehensive risk assessments of AI before they are used in robots.”

Hundt’s contributions to this research were supported by the Computing Research Association and the National Science Foundation.