Apache Iceberg has emerged because the open desk format for knowledge lakes. It handles petabyte-scale datasets, lets groups evolve schemas and partitions in place, and helps time journey and incremental processing for knowledge lake administration at scale. Amazon S3 Tables present a completely managed Apache Iceberg desk expertise in Amazon S3, optimized for analytics workloads, and combine with the AWS Glue Knowledge Catalog so AWS analytics companies corresponding to Amazon Redshift, Amazon EMR, Amazon Athena, Amazon SageMaker, and AWS Glue question your knowledge. Collectively, they type the inspiration of a contemporary knowledge lake structure on AWS.

S3 Tables combine with the AWS Glue Knowledge Catalog utilizing AWS Identification and Entry Administration (IAM) – primarily based authorization. Should you handle analytics workloads throughout these companies, now you can outline permissions throughout storage, catalog, and compute in a single IAM coverage. This provides groups already utilizing IAM a simple path to control entry to S3 Tables assets with out altering their current permission mannequin. For fine-grained entry controls, you may choose in to AWS Lake Formation at any time via the AWS Administration Console, AWS Command Line Interface (AWS CLI), API, or AWS CloudFormation.

Iceberg materialized views created within the Glue Knowledge Catalog prolong this basis by letting you retailer pre-computed question outcomes as Iceberg knowledge on Amazon S3. When a question repeats aggregations or joins throughout massive datasets, the engine reads straight from the materialized view’s S3 location reasonably than reprocessing the bottom tables. A materialized view can reside in S3 Tables or in an S3 common goal bucket, unbiased of the place its base tables stay, which helps you to place pre-computed outcomes wherever suits your entry patterns and price mannequin greatest.

On this put up, we stroll via easy methods to arrange and handle S3 Tables within the AWS Glue Knowledge Catalog, create and question Iceberg materialized views, and configure entry controls that work throughout your analytics stack with IAM-based authorization.

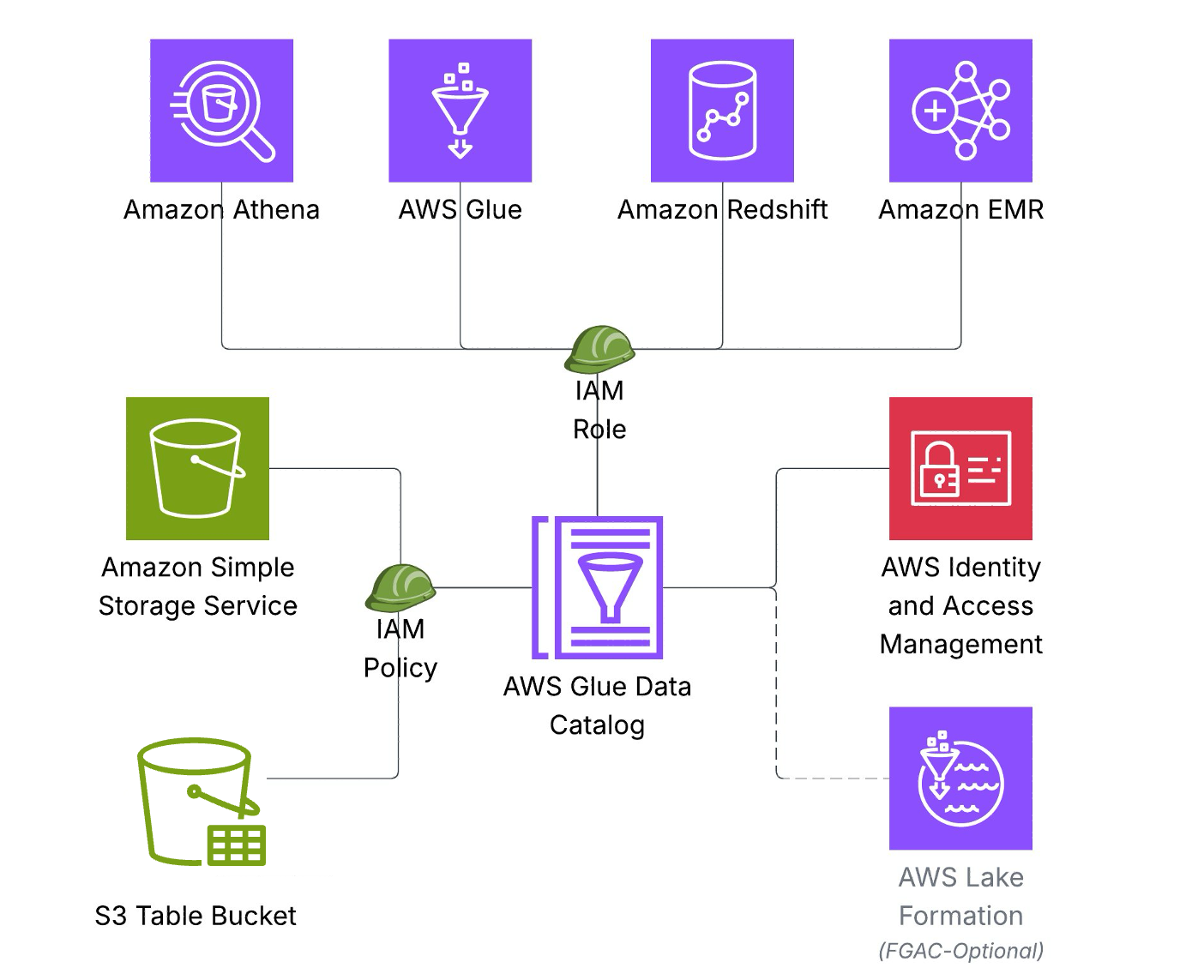

Resolution overview

The above structure illustrates how S3 Tables combine with AWS Glue Knowledge Catalog utilizing IAM-based authorization, so you may outline the mandatory permissions throughout storage, catalog, and question engines in a single IAM coverage. This permission mannequin accelerates onboarding for brand spanking new groups and workloads.

Key structure elements embrace:

Storage Layer: Knowledge saved as Iceberg tables in Amazon S3 Tables

Catalog Layer: AWS Glue Knowledge Catalog serves as the only metadata repository.

Compute Layer – Amazon Athena, AWS Glue, Amazon Redshift, and Amazon EMR hook up with a single knowledge Catalog to entry Iceberg tables.

Safety: AWS IAM authorizes entry to assets in storage, catalog, and compute layers.

Stipulations:

To comply with together with this put up, it’s essential to have an AWS account and an IAM function or person with applicable permissions and familiarity to the next companies:

- IAM

- AWS Glue Knowledge Catalog

- Amazon S3

- Amazon Athena

- Amazon Redshift

- Amazon EMR

For the minimal permissions required for the function/person for metadata and knowledge entry, seek advice from required IAM permissions documentation.

Resolution walkthrough

On this walkthrough, you’ll combine S3 Tables with the AWS Glue Knowledge Catalog, create Iceberg materialized views, and question knowledge utilizing a number of analytics engines. Additionally, you will be taught to make use of materialized views when you may have complicated aggregations queried incessantly however underlying knowledge modifications. You may comply with these steps to implement the answer. It should take about 45–60 minutes to finish this walkthrough.

Setup S3 Tables and combine with Glue Knowledge Catalog

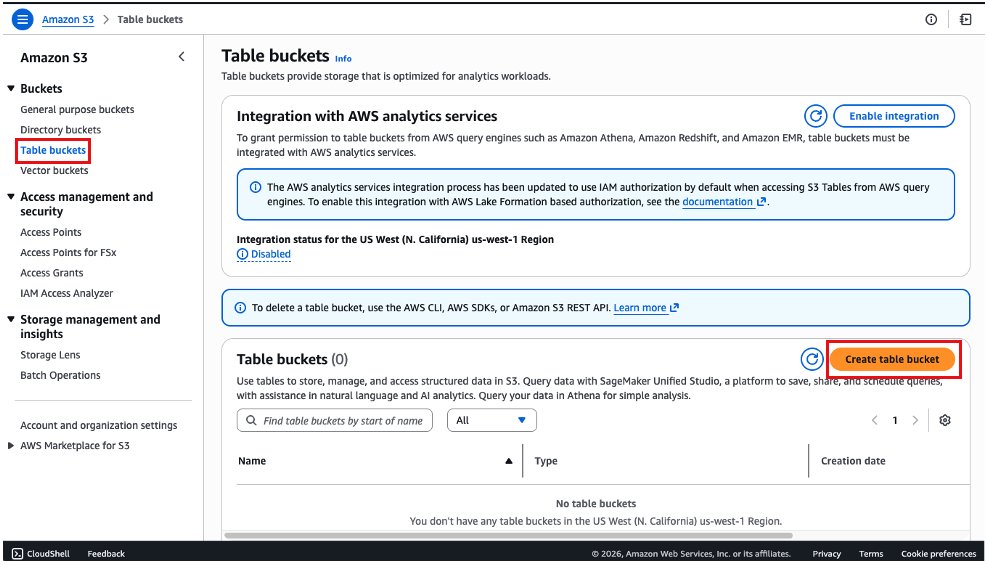

Navigate to Amazon S3 console:

- On the left menu, choose Desk buckets.

- Select the Create desk bucket button.

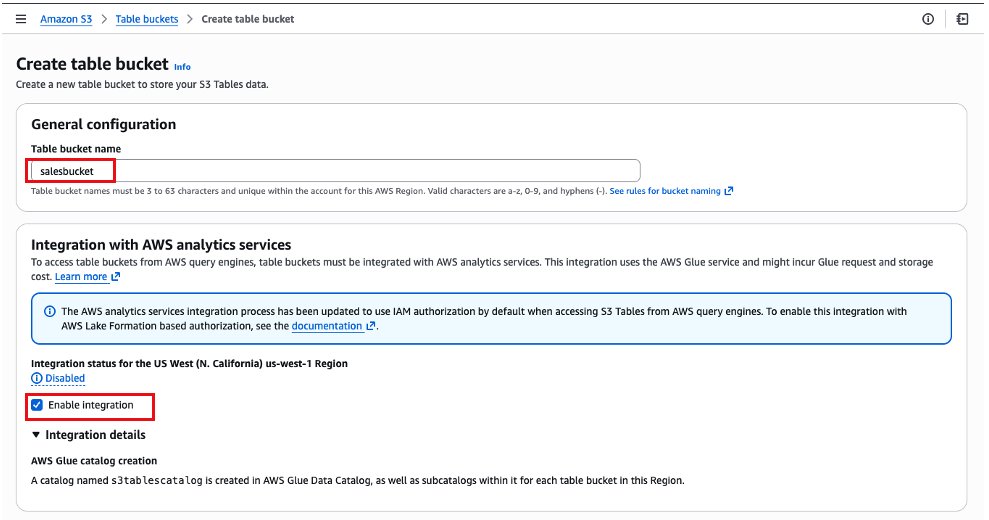

- Within the subsequent display, we are going to fill the identify of the bucket as salesbucket. Please make sure the Allow Integration configuration is checked. This step integrates S3 Tables with AWS Glue Knowledge Catalog.

- Preserve the opposite choices as default and select Create desk bucket.

- After it’s created, you may be redirected again to the listing of desk buckets. Select the desk bucket salesbucket.

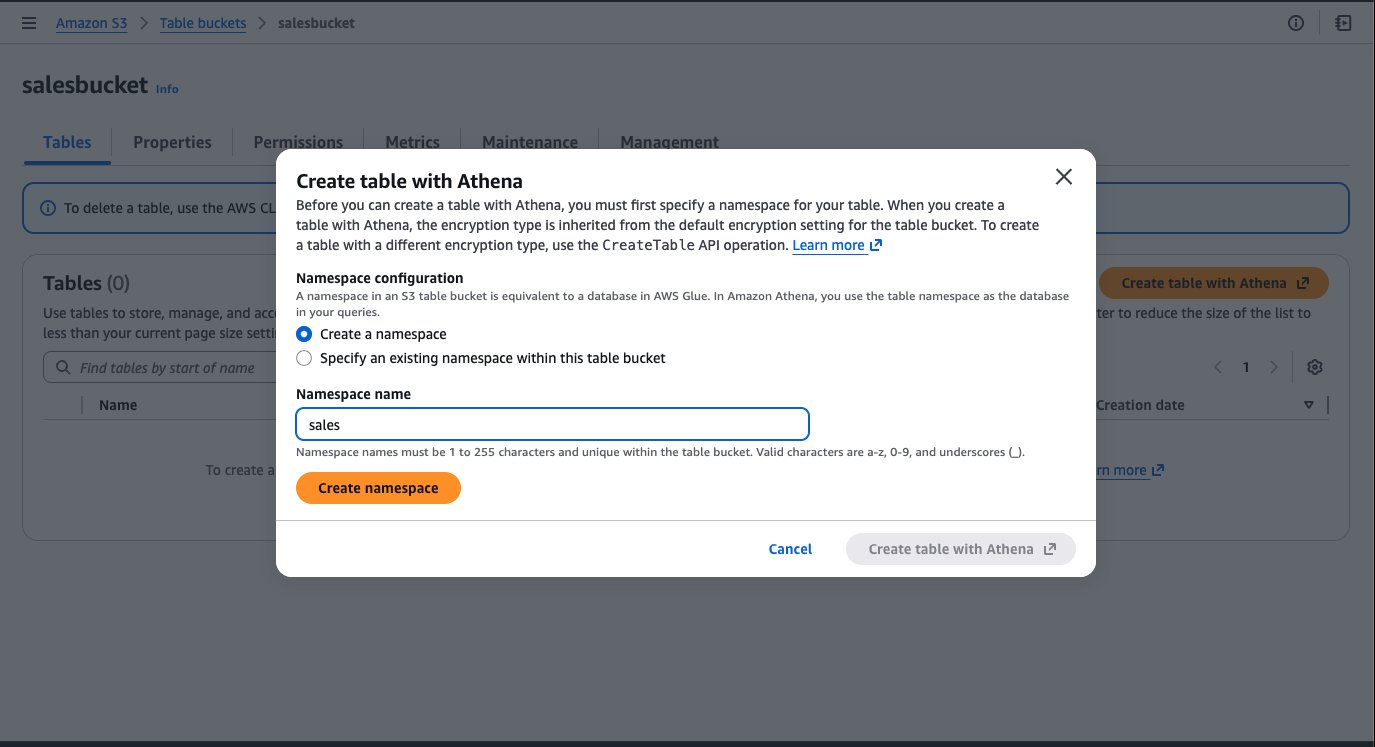

- Choose the Create desk with Athena button.

- Create a namespace in S3 Tables which is equal to a database in AWS Glue Knowledge Catalog. Enter namespace (database) identify as “gross sales” and click on Create namespace.

- Select Create desk with Athena, and a brand new tab can be open with the Amazon Athena console.

- When the Amazon Athena console opens, you will notice an instance of a question to create a desk and examples to insert rows in that desk. You can use this question block by uncommenting the code and executing every assertion individually by highlighting it. On the finish, you’ll have knowledge within the desk.

Question S3 Tables and create materialized view utilizing Amazon EMR:

To run the instruction on Amazon EMR, full the next steps to configure the cluster:

- Create an IAM function for the Amazon EMR occasion profile following the Amazon EMR Administration Information. Add the next as insurance policies and belief relationship for engaged on materialized views.

Change ACCOUNT_ID along with your AWS account ID, Instance_profile_role to the Amazon EMR occasion profile function, and REGION along with your AWS Area.

Add the next to the belief coverage along with current:

- Launch an Amazon EMR cluster 7.12.0 or increased with occasion profile function created within the earlier step and with Iceberg enabled. For extra info, seek advice from Use an Iceberg cluster with Spark.

- Connect with the first node of your Amazon EMR cluster by utilizing SSH, and run the next command to begin a Spark software with the required configurations:

Change bucket_name along with your bucket identify.

- Run the next queries to question the daily_sales desk.

- Create Materialized view.

A newly created materialized view is populated with the preliminary question outcomes however doesn’t replace routinely as base desk knowledge modifications. To maintain it present, specify a REFRESH EVERY clause when creating the view. This accepts a time interval and unit, so you may outline how usually the materialized view is recomputed from the bottom tables.

- Add refresh interval.

- Alternatively, you may refresh them manually.

For handbook full refresh, you need to use the next command:

For handbook incremental refresh, you need to use the next command:

For extra particulars, seek advice from Refreshing materialized views.

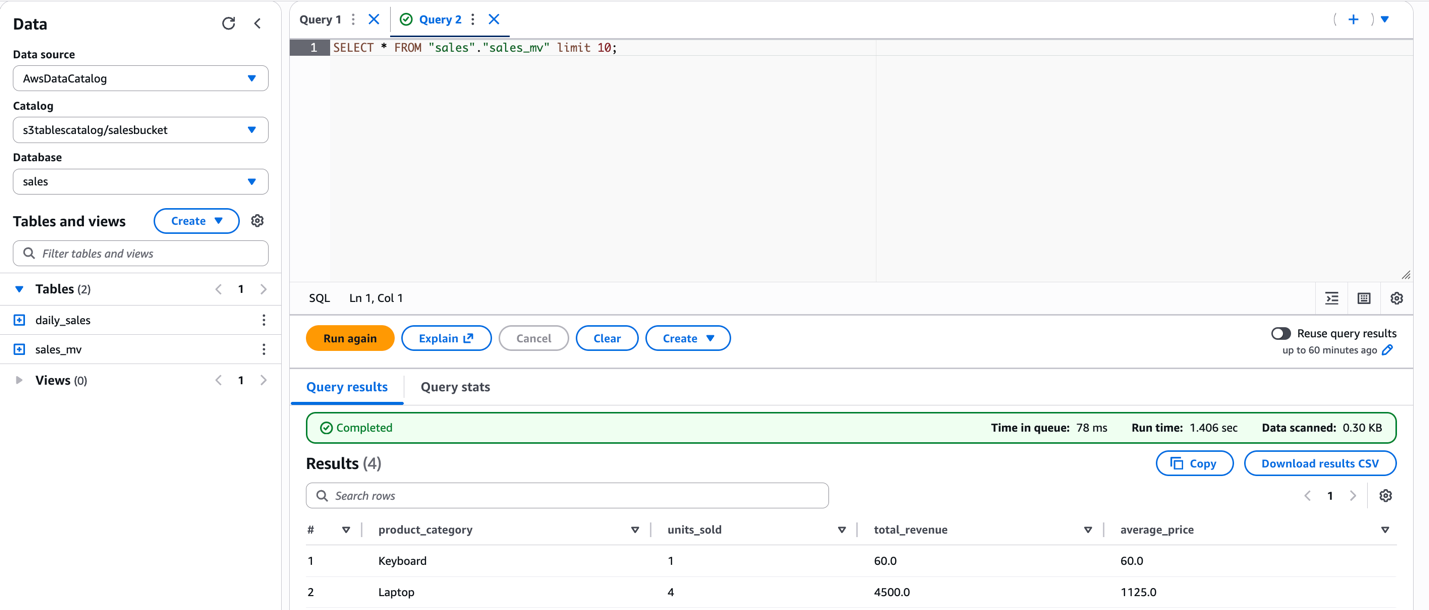

- Question the MV.

After the Iceberg materialized views are created, you may entry them utilizing IAM principals which have required IAM permissions to Glue Knowledge Catalog useful resource and its underlying storage.

Iceberg materialized views are versatile in how they mix base tables and entry management modes. Base tables can reside in S3 general-purpose buckets (with IAM or Lake Formation entry management), in S3 Tables (via the s3tablescatalog catalog), or a mixture of those—all inside a single materialized view definition. The materialized view itself can use both IAM or AWS Lake Formation entry management, independently of its base tables.

For extra particulars, seek advice from How materialized views work with AWS Glue.

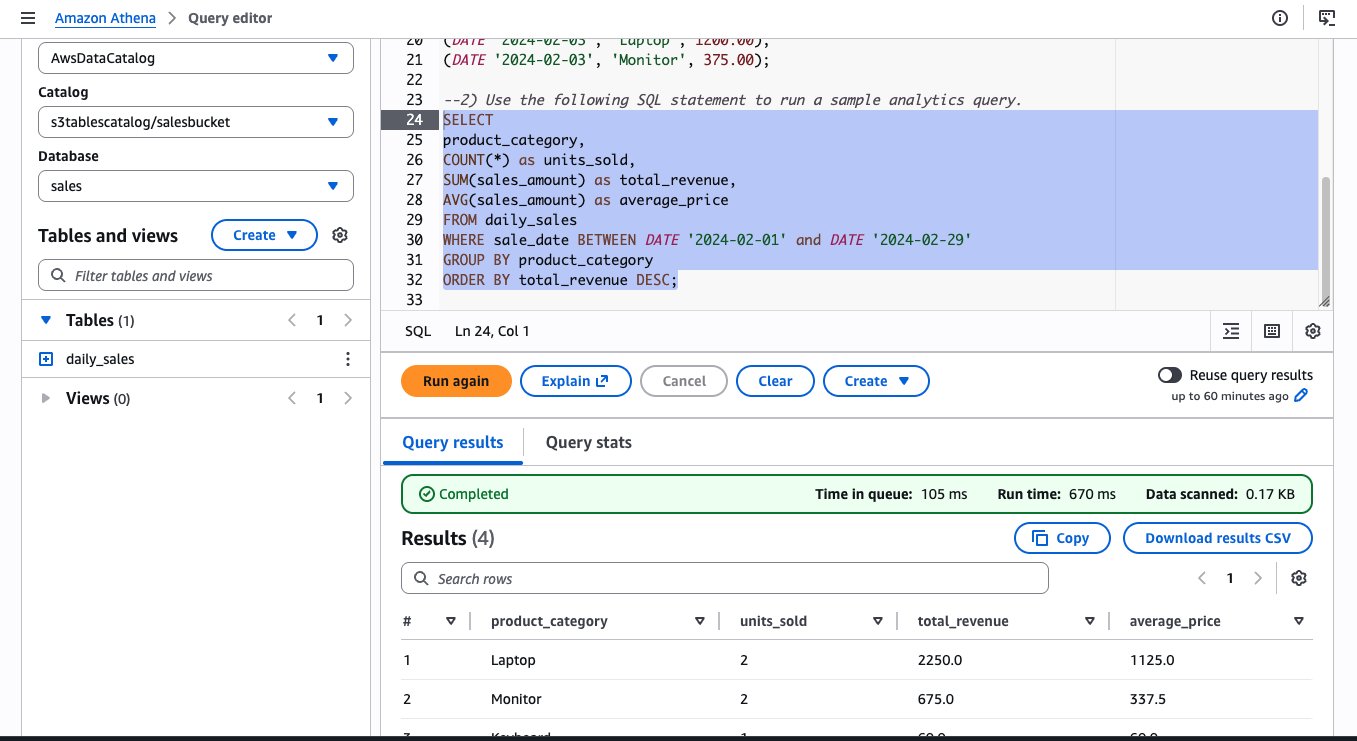

Question utilizing Athena:

Moreover, you may question the identical materialized view from Athena SQL. The next picture exhibits the identical question run on Athena and the ensuing output.

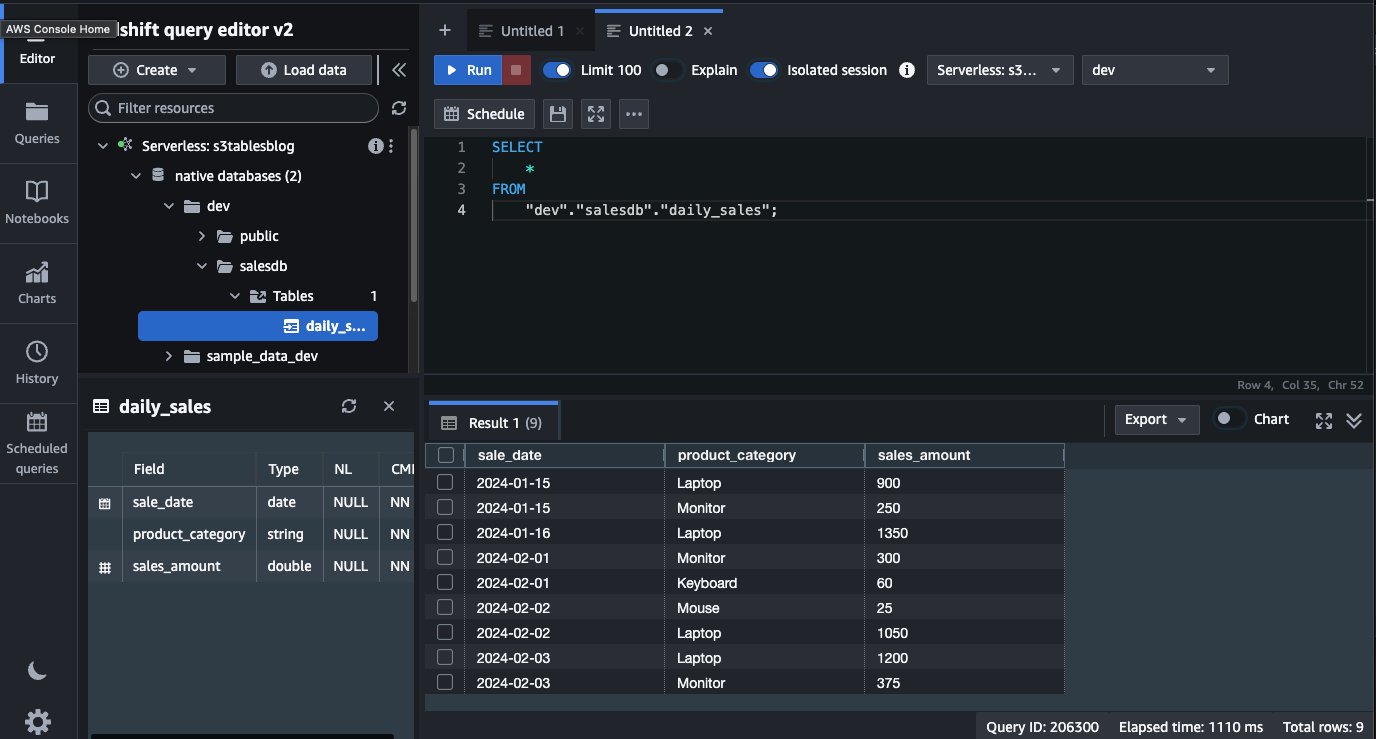

Question utilizing Amazon Redshift:

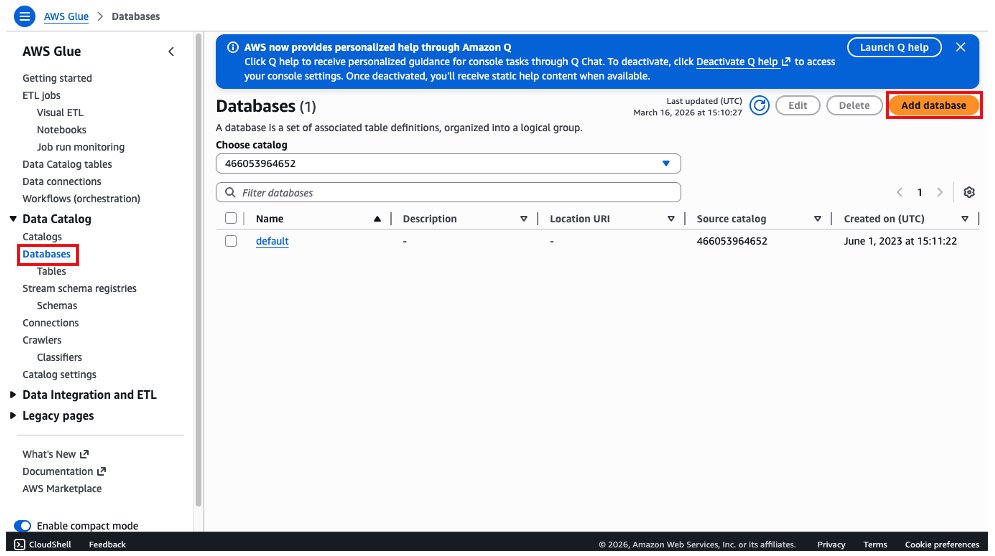

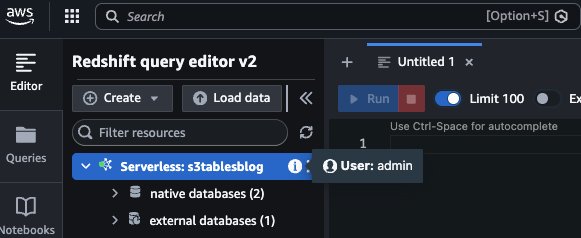

To question the S3 Tables in AWS Glue Knowledge Catalog utilizing Amazon Redshift, it’s essential to create a database within the default catalog in Glue Knowledge Catalog that factors to the S3 Tables catalog.

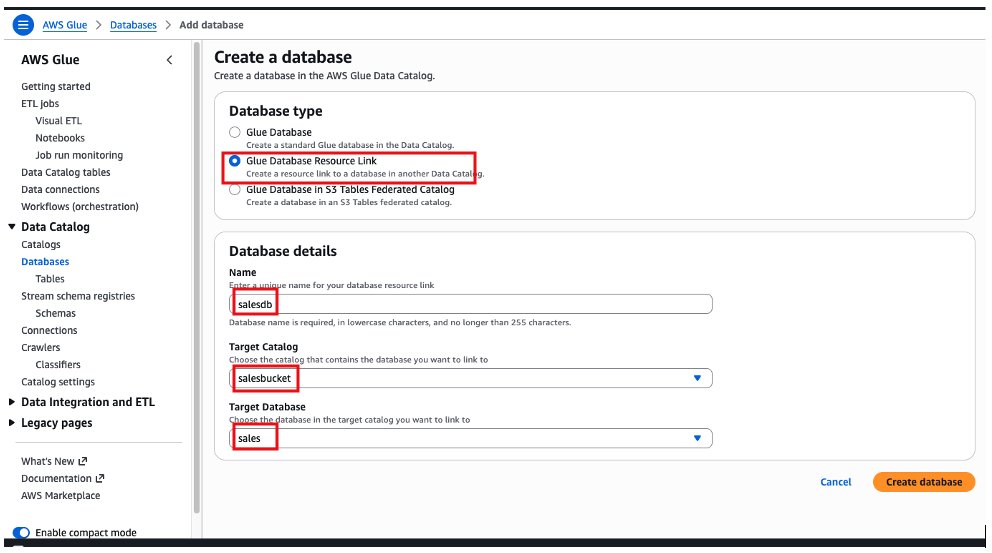

- On the AWS Glue console, select Databases, after which select Add Database.

- Select the Glue Database useful resource hyperlink possibility, add a reputation for the database, select salesbucket on the goal catalog and gross sales because the goal database. Then choose Create database.

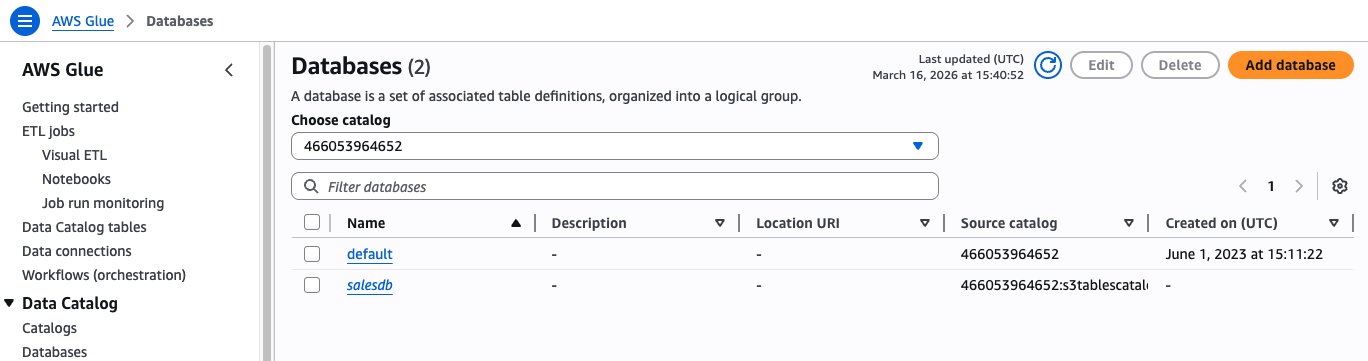

After creating the database, we are going to see the “salesdb” useful resource hyperlink below Databases on AWS Glue Knowledge Catalog.

Create IAM function with the next coverage for the Amazon Redshift schema creation. Change the AWS Area and account ID in your account.

Create an Amazon Redshift provisioned cluster or Amazon Redshift Serverless, attaching the IAM function created in earlier step.

To entry the AWS Glue Catalog and the useful resource hyperlink, now you can log in to Amazon Redshift as a neighborhood person. We use the admin person and Amazon Redshift Question Editor v2.

To create the exterior schema, it’s essential to run the next command: Change ACCOUNT_ID along with your AWS Account ID, IAM_ROLE to IAM function created for schema entry, and REGION along with your AWS Area.

After you may have created the exterior schema, it is going to present up on the left aspect, below the dev database. The desk that we created, daily_sales, is obtainable and we will question straight from Amazon Redshift utilizing a neighborhood person.

Cleanup:

After finishing the walkthrough, comply with these steps to take away the assets and keep away from ongoing expenses. These cleanup steps will completely delete the info, together with the daily_sales desk and sales_mv materialized view. Just be sure you have backed up the info that you might want to retain earlier than continuing.

To keep away from incurring future expenses, clear up the assets that you simply created throughout this walkthrough:

- Take away the Glue Knowledge Catalog assets

- Delete the desk bucket

- Terminate and Delete the Amazon Redshift cluster

- Terminate and Delete the Amazon EMR cluster

- Delete the IAM roles/insurance policies created

Conclusion

Amazon S3 Tables now combine with AWS Glue Knowledge Catalog via IAM-based authorization by way of a single IAM coverage. By consolidating permissions for storage, catalog, and question engines into one IAM coverage, you may streamline authorization with AWS analytics companies like Amazon Athena, Amazon EMR, and AWS Glue. You need to use this streamlined IAM authorization mannequin to construct your knowledge lake sooner whereas sustaining enterprise-grade safety. For organizations with moreover granular knowledge entry necessities, AWS Lake Formation stays obtainable to layer fine-grained entry controls on prime of this basis. That is configurable via the AWS Administration Console, CLI, API, or CloudFormation. This integration permits AWS analytics customers to make use of IAM and scale their analytics capabilities with decreased operational complexity.

To be taught extra about to S3 Tables and integration with Glue Knowledge catalog, go to: Amazon S3 Tables integration with AWS analytics companies overview and Integrating with Amazon S3 Tables.

Concerning the authors