GlobeScan’s 2026 corporate affairs research points to a widening gap between the rising prominence of AI-related risks and organizational readiness to manage them. Forty-four percent of practitioners worldwide now cite the impact of AI and technology as one of the biggest risks facing global business over the next two years, up sharply from 17 percent in 2025. Yet confidence in managing deepfakes (one of the most immediate AI-driven threats) and AI-generated misinformation remains low.

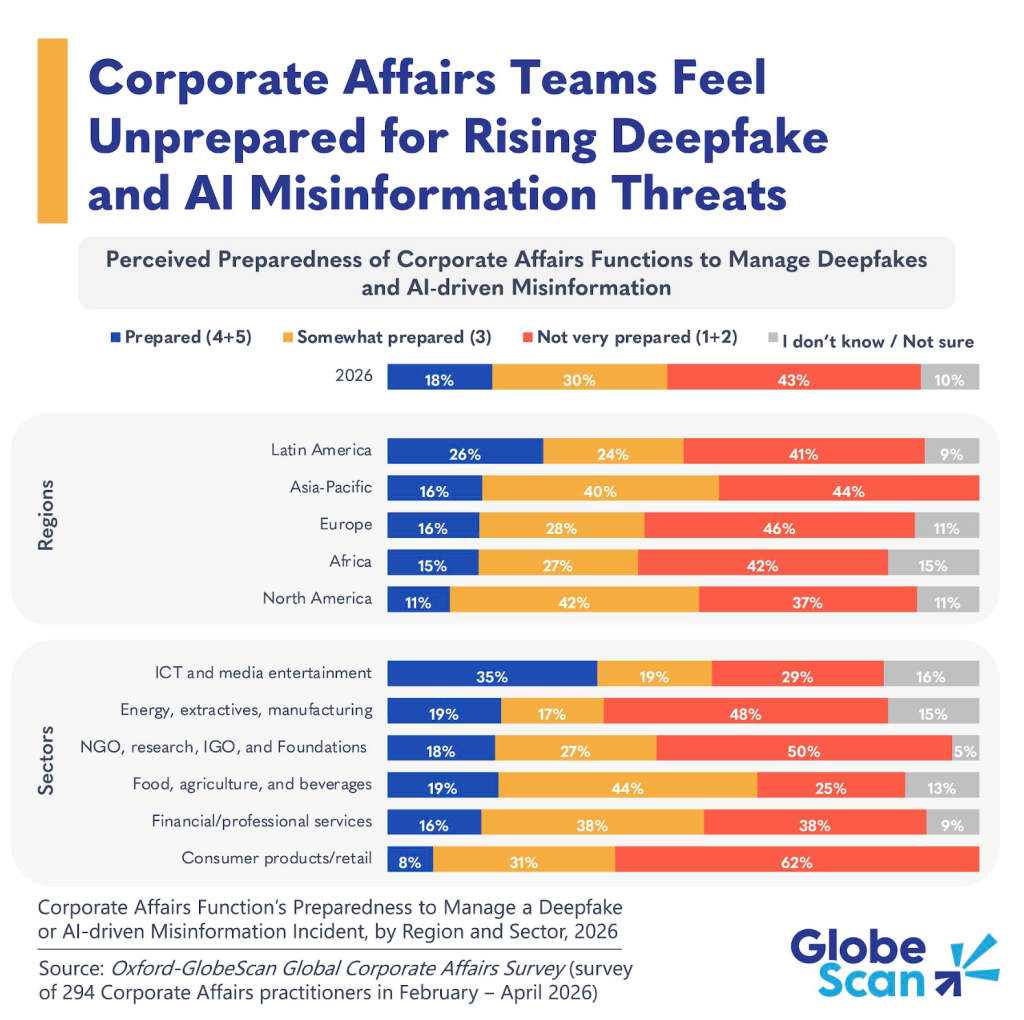

Globally, just 18 percent of practitioners say their corporate affairs function is prepared to manage a deepfake or AI-driven misinformation incident, while 43 percent say it is not very prepared. A further 30 percent describe themselves as somewhat prepared, suggesting that many organizations have partial plans or early-stage capabilities rather than fully embedded and tested response mechanisms. As AI increases the credibility, speed and reach of false content, this gap represents a growing reputational risk.

Regional patterns reveal important nuances. Europe stands out for particularly low confidence, with 46 percent of respondents saying their corporate affairs function is not prepared and another 11 percent unable to answer. In Africa, more than half of respondents also fall into these two categories (42 percent and 15 percent, respectively). In North America, just 11 percent of practitioners claim to be fully prepared, while 42 percent place themselves in the somewhat prepared category, reflecting a cautious assessment of the complexity involved in managing AI-driven misinformation. At the other end of the spectrum, respondents in Latin America report the strongest confidence in being prepared, which some might venture reflects an optimistic underestimation of the threat ahead.

Sector results reinforce that exposure and readiness do not always align. Corporate affairs practitioners in the ICT, media and entertainment sector are relatively more confident, with around one-third saying their function is prepared, consistent with their closer proximity to digital platforms and AI-related risks. By contrast, the consumer products and retail sector shows the weakest readiness profile, with 62 percent of respondents saying they are not very prepared and just 8 percent saying they are. This is a notable vulnerability for a sector that relies heavily on brand trust and rapid, high-visibility communications. Food, agriculture and beverage companies sit closer to the middle, with many reporting being somewhat prepared but relatively few expressing strong confidence.

What does this mean?

For corporate affairs leaders, the findings underline that AI-driven misinformation has become a core reputational risk that is advancing faster than organizational readiness. The gap between being somewhat prepared and truly prepared matters, because deepfake-driven incidents compress decision timelines and amplify exposure before the facts are fully established. Closing this gap requires moving from confidence to capability, with clear escalation protocols, defined decision rights and tested response playbooks that extend beyond communications teams to legal, cybersecurity and senior leadership. Taken together, this elevates the management of AI-driven misinformation to a foundational corporate affairs capability.

Based on a survey of nearly 300 senior corporate affairs practitioners across regions and sectors.